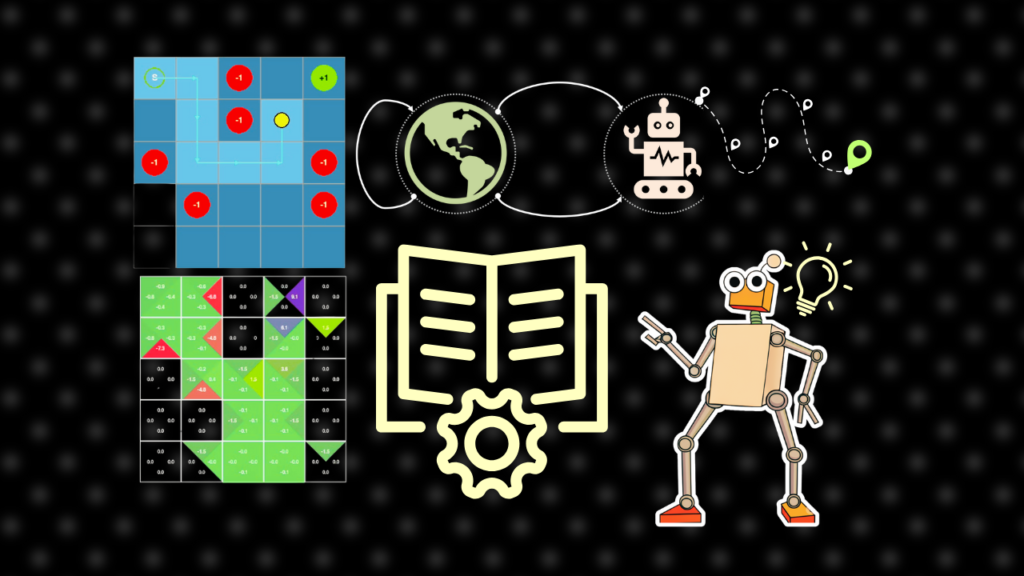

The fundamental concepts you need to know to understand Reinforcement Learning!

We will progress from the absolute basics of “what even is RL” to more advanced topics, including agent exploration, values and policies, and the differences between popular training approaches. Along the way, we will also learn about the various challenges in RL and how researchers have tackled them.

At the end of the article, I will also share a YouTube video I made that explains all the concepts in this article in a visually engaging way. If you are not much of a reader, you can check out that companion video instead!

Note: All images are produced by the author unless otherwise specified.

Reinforcement Learning Basics

Suppose you want to train an AI model to learn how to navigate an obstacle course. RL is a branch of Machine Learning where our models learn by collecting experiences: taking actions and observing what happens. More formally, RL consists of two components, the agent and the environment.

The Agent

The learning process involves two key activities that happen over and over: exploration and training. During exploration, the agent collects experiences in the environment by taking actions and finding out what happens. Then, during training, the agent uses these collected experiences to improve itself.

The Environment

Once the agent selects an action, the environment updates. It also returns a reward depending on how well the agent is doing. The environment designer programs how the reward is structured.

For example, suppose you are working on an environment that teaches an AI to avoid obstacles and reach the goal. You can program your environment to return a positive reward when the agent moves closer to the goal. But if the agent collides with an obstacle, you can program it to receive a large negative reward.

In other words, the environment provides positive reinforcement (a high positive reward, for example) when the agent does something good and a punishment (a negative reward, for example) when it does something bad.

Although the agent is oblivious to how the environment actually operates, it can still work out from the reward patterns how to pick optimal actions that lead to maximum rewards.
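To make the agent-environment loop concrete, here is a minimal sketch of the obstacle example. Everything in it is an assumption made for illustration: the `LineWorld` class, the one-dimensional layout, and the specific reward numbers are not from any library. The point is only to show an environment that rewards progress toward the goal, punishes collisions, and is driven by an agent that sees nothing but states and rewards.

```python
import random


class LineWorld:
    """Hypothetical 1-D course: start at cell 0, goal at cell 5, obstacle at cell -2."""

    GOAL, OBSTACLE = 5, -2

    def reset(self):
        self.pos = 0
        return self.pos

    def step(self, action):
        # action is -1 (move left) or +1 (move right)
        old_dist = abs(self.GOAL - self.pos)
        self.pos += action
        if self.pos == self.OBSTACLE:
            return self.pos, -10.0, True  # large negative reward for a collision
        if self.pos == self.GOAL:
            return self.pos, 10.0, True  # reaching the goal ends the episode
        new_dist = abs(self.GOAL - self.pos)
        reward = 1.0 if new_dist < old_dist else -1.0  # reward progress toward the goal
        return self.pos, reward, False


def run_episode(env, policy, max_steps=50):
    """Let the policy act until the episode ends; return the total reward collected."""
    state = env.reset()
    total = 0.0
    for _ in range(max_steps):
        action = policy(state)
        state, reward, done = env.step(action)
        total += reward
        if done:
            break
    return total


def random_policy(state):
    return random.choice([-1, 1])


def always_right(state):
    return 1
```

Calling `run_episode(LineWorld(), always_right)` walks straight to the goal, while `random_policy` wanders and usually collects a lower total. The policy functions never look inside `LineWorld`; they only receive what `step` returns.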

Policy

At each step, the agent observes the current state of the environment and selects an action. The goal of RL is to learn a mapping from observations to actions, i.e. “given the state I’m observing, what action should I choose?”

In RL terms, this mapping from state to action is called a policy.

This policy defines how the agent behaves in different states, and in deep reinforcement learning we learn this function by training some kind of deep neural network.

Reinforcement Learning

Understanding the distinctions and interplay between the agent, the policy, and the environment is integral to understanding Reinforcement Learning.

- The Agent is the learner that explores and takes actions within the environment.

- The Policy is the strategy (often a neural network) that the agent uses to determine which action to take given a state. In RL, our ultimate goal is to train this strategy.

- The Environment is the external system that the agent interacts with, which provides feedback in the form of rewards and new states.

Here is a quick one-liner definition you should remember:

In Reinforcement Learning, the agent follows a policy to select actions within the environment.

Observations and Actions

The agent explores the environment by taking a sequence of “steps”. Each step is one decision: the agent observes the environment’s state, decides on an action, receives a reward, and observes the next state. In this section, let’s understand what observations and actions are.

Observation

An observation is what the agent sees from the environment: the information it receives about the environment’s current state. In an obstacle navigation environment, the observation might be LiDAR projections to detect the obstacles. For Atari games, it might be a history of the last few pixel frames. For text generation, it might be the context of the tokens generated so far. In chess, it is the position of all the pieces, whose move it is, and so on.

The observation ideally contains all the information the agent needs to take an action.

The action space is the set of all options available to the agent. Actions can be discrete or continuous. A discrete action space is when the agent has to choose from a specific set of categorical options. For example, in Atari games, the actions might be the buttons of an Atari controller. For text generation, it is the choice among all the tokens present in the model’s vocabulary. In chess, it could be a list of available moves.

The environment designer can also choose a continuous action space, where the agent generates continuous values to take a “step” in the environment. For example, in our obstacle navigation example, the agent can choose the x and y velocities to get fine-grained control of the movement. In a human character control task, the action is often to output the torque or target angle for every joint in the character’s skeleton.
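As a rough illustration of the two kinds of action space, the sketch below samples one random action from each. The button list and the velocity bounds are made-up values chosen for the example, not the real Atari action set or any particular robot's limits.

```python
import random

# Discrete space: a finite set of categorical options (illustrative subset of buttons).
BUTTONS = ["NOOP", "FIRE", "UP", "DOWN", "LEFT", "RIGHT"]

# Continuous space: one (low, high) range per action dimension, here x and y velocity.
VELOCITY_BOUNDS = [(-1.0, 1.0), (-1.0, 1.0)]


def sample_discrete(options):
    """Pick one of the categorical options uniformly at random."""
    return random.choice(options)


def sample_continuous(bounds):
    """Draw one real value per dimension, uniformly within that dimension's range."""
    return [random.uniform(low, high) for low, high in bounds]
```

A discrete action is a single choice such as `"LEFT"`, whereas a continuous action is a vector of real numbers such as `[0.31, -0.87]`.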

An important lesson

But here is something important to understand: to the agent and the policy, the environment and its specifics can be a complete black box. The agent will receive state information as a vector observation, generate an action, receive a reward, and later learn from it.

So in your mind, you can consider the agent and the environment as two separate entities. The environment defines the state space, the action space, the reward strategies, and the rules.

These rules are decoupled from how the agent explores and how the policy is trained on the collected experiences.

When studying a research paper, it is important to clarify in your mind which aspect of RL you are reading about. Is it about a new environment? Is it about a new policy training method? Is it about an exploration strategy? Depending on the answer, you can treat the other parts as a black box.

Exploration

How does the agent explore and collect experiences?

Every RL algorithm must resolve one of the biggest dilemmas in training RL agents: exploration vs exploitation.

Exploration means trying out new actions to gather information about the environment. Imagine you are learning to fight a boss in a difficult video game. At first, you are going to try different approaches, different weapons, spells, random things just to see what sticks and what doesn’t.

However, once you start seeing some rewards, like consistently dealing damage to the boss, you will stop exploring and start exploiting the strategy you have already acquired. Exploitation means greedily picking the actions you think will get the best rewards.

A good RL exploration strategy must balance exploration and exploitation.

A popular exploration strategy is Epsilon-Greedy, where the agent explores with a random action a fraction of the time (defined by a parameter epsilon), and exploits its best-known action the rest of the time. This epsilon value is usually high at the beginning and is gradually decreased to favor exploitation as the agent learns.
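Here is a minimal sketch of epsilon-greedy action selection with a decaying epsilon. The linear schedule and its default numbers (`start`, `end`, `decay_steps`) are assumptions picked for illustration; other decay schedules, such as exponential decay, are also common.

```python
import random


def epsilon_greedy(q_values, epsilon):
    """With probability epsilon pick a random action, otherwise the best-known one."""
    if random.random() < epsilon:
        return random.randrange(len(q_values))  # explore
    return max(range(len(q_values)), key=lambda a: q_values[a])  # exploit


def decayed_epsilon(step, start=1.0, end=0.05, decay_steps=10_000):
    """Linearly anneal epsilon from `start` to `end` over `decay_steps` steps."""
    fraction = min(step / decay_steps, 1.0)
    return start + fraction * (end - start)
```

Early in training `decayed_epsilon(step)` is close to 1, so almost every action is random; after `decay_steps` steps it settles at the floor value, so the agent mostly exploits while still exploring occasionally.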

Epsilon-greedy is designed for discrete action spaces. In continuous spaces, exploration is often handled in two popular ways. One way is to add a bit of random noise to the action the agent decides to take. Another popular technique is to add an entropy bonus to the loss function, which encourages the policy to be less certain about its choices, naturally leading to more varied actions and exploration.
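Both ideas can be sketched in a few lines. The function names, the noise scale `sigma`, and the action bounds below are hypothetical choices for the example. The entropy function is shown for a discrete probability vector for simplicity; for a Gaussian policy the entropy has a different closed form, but it plays the same role in the loss.

```python
import math
import random


def noisy_action(mean_action, sigma=0.1, low=-1.0, high=1.0):
    """Add Gaussian noise to each action dimension, then clip to the valid range."""
    return [min(high, max(low, m + random.gauss(0.0, sigma))) for m in mean_action]


def entropy(probs):
    """Shannon entropy of an action distribution; higher means less certain."""
    return -sum(p * math.log(p) for p in probs if p > 0)
```

A uniform distribution over four actions has entropy `log(4)`, while a policy that always picks the same action has entropy 0. Adding a small multiple of the entropy to the objective therefore pushes the policy away from committing to one action too early.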

Some other ways to encourage exploration are:

- Design the environment to use random initialization of states at the beginning of the episodes.

- Intrinsic exploration methods, where the agent acts out of its own “curiosity.” Algorithms like Curiosity and RND reward the agent for visiting novel states or taking actions where the outcome is hard to predict.

I cover these fascinating methods in my Agentic Curiosity video, so be sure to check that out!

Training Algorithms

A majority of research papers and academic topics in Reinforcement Learning are about optimizing the agent’s strategy for choosing actions. The goal of optimization algorithms is to learn actions that maximize the long-term expected rewards.

Let’s take a look at the different algorithmic choices one by one.

Model-Based vs Model-Free

Alright, so our agent has explored the environment and collected a ton of experience. Now what?

Does the agent learn to act directly from these experiences? Or does it first try to model the environment’s dynamics and physics?

One approach is model-based learning. Here, the agent first uses its experience to build its own internal simulation, or world model. This model learns to predict the consequences of the agent’s actions, i.e., given a state and an action, what are the resulting next state and reward? Once it has this model, the agent can practice and plan entirely within its own imagination, running thousands of simulations to find the best strategy without ever taking a risky step in the real world.

This is particularly useful in environments where collecting real-world experience can be expensive, like robotics or self-driving cars. Examples of Model-Based RL include Dyna-Q, World Models, and Dreamer. I will write a separate article someday to cover these models in more detail.

The second approach is called model-free learning. This is what the rest of the article is going to cover. Here, the agent treats the environment as a black box and learns a policy directly from the collected experiences. Let’s talk more about Model-Free RL in the next section.

Value-Based Learning

There are two main approaches to model-free RL algorithms.

Value-based algorithms learn to evaluate how good each state is. Policy-based algorithms learn directly how to act in each state.

In value-based methods, the RL agent learns the “value” of being in a specific state. The value of a state literally means how good the state is. The intuition is that if the agent knows which states are good, it can pick actions that lead to those states more often.

And thankfully there is a mathematical way of doing this: the Bellman Equation.

V(s) = r + γ * max_s’ V(s’)

This recurrence equation basically says that the value V(s) of state s is equal to the immediate reward r of being in the state plus the discounted value of the best next state s’ the agent can reach from s. Gamma (γ) is a discount factor (between 0 and 1) that nerfs the goodness of the next state. It essentially decides how much the agent cares about rewards in the distant future versus immediate rewards. A γ close to 1 makes the agent “far-sighted,” while a γ close to 0 makes the agent “short-sighted,” greedily caring almost only about the very next reward.
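To see the recurrence in action, the sketch below applies it repeatedly to a tiny deterministic world until the values stop changing. The four states, their rewards, and the transitions are invented for the example; real environments are usually stochastic and far too large to enumerate like this.

```python
GAMMA = 0.9

# Hypothetical world: each state lists the next states reachable from it.
NEXT_STATES = {"A": ["B", "C"], "B": ["D"], "C": ["D"], "D": []}  # D is terminal
REWARD = {"A": 0.0, "B": 1.0, "C": 5.0, "D": 10.0}  # reward for being in each state


def value_iteration(sweeps=50):
    """Repeatedly apply V(s) = r + gamma * max V(s') until the values settle."""
    V = {s: 0.0 for s in NEXT_STATES}
    for _ in range(sweeps):
        for s, successors in NEXT_STATES.items():
            # A terminal state has no successors, so its future value is 0.
            best_next = max((V[n] for n in successors), default=0.0)
            V[s] = REWARD[s] + GAMMA * best_next
    return V
```

In this toy world the values settle at V(D) = 10, V(B) = 1 + 0.9 × 10 = 10, V(C) = 5 + 0.9 × 10 = 14, and V(A) = 0 + 0.9 × 14 = 12.6. State A inherits its value from C, the better of its two successors, which is exactly what the max in the equation expresses.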

Q-Learning

We learnt the intuition behind state values, but how do we use that information to learn actions? The Q-Learning equation answers this.

Q(s, a) = r + γ * max_a’ Q(s’, a’)

The Q-value Q(s, a) is the quality value of the action a in state s. The above equation basically states: the quality of an action a in state s is the immediate reward r you get for taking action a in state s, plus the discounted quality value of the best next action a’ in the next state s’.

So in summary:

- Q-values are the quality values of each action in each state.

- V-values are the values of specific states; the V-value of a state is equal to the maximum Q-value over all actions in that state.

- The policy π at a specific state is the action that has the highest Q-value in that state.
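The summary above can be sketched as a small tabular Q-learning implementation. One detail goes beyond the equation in the text: practical implementations usually move the old estimate only part of the way toward the target, controlled by a learning rate (`ALPHA` here), which is an assumption added for this example along with the two-action setup.

```python
from collections import defaultdict

ALPHA, GAMMA = 0.5, 0.9  # learning rate (added assumption) and discount factor
ACTIONS = [0, 1]
Q = defaultdict(float)  # Q[(state, action)], every entry starts at 0.0


def q_update(state, action, reward, next_state, done):
    """Move Q(s, a) toward the target r + gamma * max_a' Q(s', a')."""
    best_next = 0.0 if done else max(Q[(next_state, a)] for a in ACTIONS)
    target = reward + GAMMA * best_next
    Q[(state, action)] += ALPHA * (target - Q[(state, action)])


def state_value(state):
    """V(s) is the maximum Q-value over all actions in s."""
    return max(Q[(state, a)] for a in ACTIONS)


def greedy_policy(state):
    """The policy picks the action with the highest Q-value in s."""
    return max(ACTIONS, key=lambda a: Q[(state, a)])
```

After a single call such as `q_update("s0", 1, 1.0, "s1", True)`, action 1 becomes the greedy choice in `"s0"`, and `state_value("s0")` reflects the updated estimate.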

To learn more about Q-Learning, you can research Deep Q Networks and their descendants, like Double Deep Q Networks and Dueling Deep Q Networks.

Value-based learning trains RL agents by learning the value of being in specific states. However, is there a direct way to learn optimal actions without needing to learn state values? Yes.

Policy learning methods directly learn optimal action strategies without explicitly learning state values. Before we learn how, we must learn another important concept first: Temporal Difference Learning vs Monte Carlo Sampling.

TD Learning vs MC Sampling

How does the agent consolidate future experiences to learn?

In Temporal Difference (TD) Learning, the agent updates its value estimates after every single step using the Bellman equation. It does so by looking at its own estimate of the Q-value in the next state. This method is called 1-step TD Learning, or one-step Temporal Difference Learning. You take one step and update your learning based on your previous estimates.

The second option is called Monte Carlo sampling. Here, the agent waits for the entire episode to finish before updating anything. Then it uses the complete return from the episode:

Q(s,a) = r₁ + γr₂ + γ²r₃ + … + γⁿ⁻¹rₙ
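The Monte Carlo return can be computed for every step of a finished episode in a single backward pass, since the return at step t is the reward at t plus γ times the return at t+1. The function name below is just a label for this sketch.

```python
def discounted_returns(rewards, gamma=0.99):
    """Return G_t = r_t + gamma * r_{t+1} + gamma^2 * r_{t+2} + ... for every step t."""
    returns = []
    running = 0.0
    for r in reversed(rewards):  # walk the episode backwards
        running = r + gamma * running
        returns.append(running)
    returns.reverse()
    return returns
```

For example, `discounted_returns([1, 1, 1], gamma=0.5)` gives `[1.75, 1.5, 1.0]`: the last step only sees its own reward, while the first step sees 1 + 0.5 × 1 + 0.25 × 1.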

Trade-offs between TD Learning and MC Sampling

TD Learning is pretty cool because the agent can learn something from every single step, even before it completes an episode. That means you can save your collected experiences for a long time and keep training even on old experiences, but with new Q-values. However, TD learning is heavily biased by the agent’s current estimate of the state. So if the agent’s estimates are wrong, it will keep reinforcing those wrong estimates. This is called the “bootstrapping problem.”

On the other hand, Monte Carlo learning is unbiased because it uses the true returns from actual episodes. But in most RL environments, rewards and state transitions can be random. Also, as the agent explores the environment, its own actions can be random, so the states it visits during a rollout are also random. As a result, the pure Monte Carlo method suffers from high variance, because returns can vary dramatically between episodes.

Policy Gradients

Alright, now that we have understood the concept of TD Learning vs MC Sampling, it is time to get back to Policy-Based Learning methods.

Recall that value-based methods like DQN first have to explicitly calculate the value, or Q-value, for every single possible action, and then they pick the best one. But it is possible to skip this step, and Policy Gradient methods like REINFORCE do exactly that.

In REINFORCE, the policy network outputs probabilities for each action, and we train it to increase the probability of actions that lead to good outcomes. For discrete spaces, PG methods output the probability of each action as a categorical distribution. For continuous spaces, PG methods output Gaussian distributions, predicting the mean and standard deviation of each element in the action vector.

So the question is, how exactly do you train such a model that directly predicts action probabilities from states?

Here is where the Policy Gradient Theorem comes in. In this article, I will explain the core idea intuitively.

- Our policy gradient model is often denoted in the literature as pi_theta(a|s). Here, theta denotes the weights of the neural network. pi_theta(a|s) is the probability of action a in state s predicted by the neural network with weights theta.

- From a newly initialized policy network, we let the agent play out a full episode and collect all the rewards.

- For every action it took, figure out the total discounted return that came after it. This is done using the Monte Carlo approach.

- Finally, to actually train the model, the policy gradient theorem asks us to maximize the formula provided in the figure below.

- If the return was high, this update will make that action more likely in the future by increasing pi(a|s). If the return was negative, this update will make the action less likely by decreasing pi(a|s).
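The steps above can be sketched for a discrete softmax policy. REINFORCE is commonly written as gradient ascent on the return multiplied by log pi_theta(a|s); this sketch assumes that form. To stay self-contained, a plain list of logits for a single state stands in for the neural network's output, and the learning rate `lr` is an arbitrary illustrative value.

```python
import math


def softmax(logits):
    """Turn raw scores into action probabilities."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def reinforce_step(logits, action, ret, lr=0.1):
    """One gradient-ascent step on ret * log pi(action) for a softmax policy."""
    probs = softmax(logits)
    # For a softmax, d log pi(action) / d logit_i = 1[i == action] - pi(i)
    return [
        logit + lr * ret * ((1.0 if i == action else 0.0) - probs[i])
        for i, logit in enumerate(logits)
    ]
```

Starting from equal logits, a step with a positive return raises the probability of the sampled action above 1/3, and a step with a negative return lowers it below 1/3, matching the last bullet above.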

The distinction between Q-Learning and REINFORCE

One of the core differences between Q-Learning and REINFORCE is that Q-Learning uses 1-step TD Learning, and REINFORCE uses Monte Carlo Sampling.

By using 1-step TD, Q-learning must determine the quality value Q of each state-action possibility. Recall that in 1-step TD the agent takes just one step in the environment and then relies on its own quality estimate of the resulting state.

On the other hand, with Monte Carlo sampling, the agent does not need to rely on an estimator to learn. Instead, it uses actual returns observed during exploration. This makes REINFORCE “unbiased,” with the caveat that it requires many samples to correctly estimate the value of a trajectory. Additionally, the agent cannot train until it fully finishes a trajectory (that is, reaches a terminal state), and it cannot reuse trajectories after the policy network updates.

In practice, REINFORCE often leads to stability issues and sample inefficiency. Let’s talk about how Actor Critic addresses these limitations.

Advantage Actor Critic

If you try to use vanilla REINFORCE on most complex problems, it will struggle, and the reason is twofold.

First, it suffers from high variance because it is a Monte Carlo sampling method. Second, it has no sense of a baseline. Imagine an environment that always gives you a positive reward: the returns will never be negative, so REINFORCE will push up the probability of every action it samples, albeit by different amounts.

We don’t want to reward actions just for getting a positive score. We want to reward them for being better than average.

And that is where the concept of advantage becomes important. Instead of just using the raw return to update our policy, we subtract the expected return for that state. So our new update signal becomes:

Advantage = The Return you got – The Return you expected
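As a small worked example, suppose two actions both earned positive returns in an environment where every reward is positive. The numbers below are made up for illustration.

```python
def advantages(returns, expected_returns):
    """Advantage = the return you got minus the return you expected."""
    return [got - expected for got, expected in zip(returns, expected_returns)]


observed = [12.0, 8.0]   # both returns are positive
baseline = [10.0, 10.0]  # what was expected from each state
signal = advantages(observed, baseline)  # [2.0, -2.0]
```

With raw returns, both actions would be reinforced. With the advantage as the update signal, only the better-than-expected action (+2) is encouraged, and the worse-than-expected one (-2) is discouraged.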

While Advantage gives us a baseline for our observed returns, let’s also discuss the concept of Actor Critic methods.

Actor Critic combines the best of Value-Based Methods (like DQN) and the best of Policy-Based Methods (like REINFORCE). Actor Critic methods train a separate “critic” neural network that is only trained to evaluate states, much like the Q-Network from earlier.

The actor network, on the other hand, learns the policy.

Combining Advantage and Actor Critic, we can understand how the popular A2C algorithm works:

- Initialize 2 neural networks: the policy or actor network, and the value or critic network. The actor network takes a state as input and outputs action probabilities. The critic network takes a state as input and outputs a single float representing the state’s value.

- We generate some rollouts in the environment by querying the actor.

- We update the critic network using either TD Learning or Monte Carlo Learning. There are also more advanced approaches, like Generalized Advantage Estimation, that combine the two for more stable learning.

- We evaluate the advantage by subtracting the expected return predicted by the critic network from the observed return.

- Finally, we update the policy network using the advantage and the policy gradient equation.
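The steps above can be sketched with lookup tables standing in for the two neural networks. This is a simplified illustration under several assumptions: the actor is a table of logits, the critic is a table of state values, the critic is updated with 1-step TD, and the learning rates are arbitrary. A real A2C implementation would use neural networks and batches of rollouts.

```python
import math
from collections import defaultdict

GAMMA, ACTOR_LR, CRITIC_LR = 0.9, 0.1, 0.5  # illustrative hyperparameters
N_ACTIONS = 2
logits = defaultdict(lambda: [0.0] * N_ACTIONS)  # tabular stand-in for the actor
values = defaultdict(float)                      # tabular stand-in for the critic


def action_probs(state):
    """Actor: softmax over the logits stored for this state."""
    m = max(logits[state])
    exps = [math.exp(x - m) for x in logits[state]]
    total = sum(exps)
    return [e / total for e in exps]


def a2c_update(state, action, reward, next_state, done):
    """Update the actor and the critic from one observed transition."""
    # 1-step TD estimate of the return that was actually obtained.
    target = reward + (0.0 if done else GAMMA * values[next_state])
    advantage = target - values[state]  # return you got - return you expected
    probs = action_probs(state)
    for i in range(N_ACTIONS):
        grad_log_pi = (1.0 if i == action else 0.0) - probs[i]
        logits[state][i] += ACTOR_LR * advantage * grad_log_pi  # policy gradient step
    values[state] += CRITIC_LR * advantage  # move the critic toward the target
    return advantage
```

If the same rewarding transition is seen twice, the second advantage is smaller than the first, because the critic has already learned to expect part of that reward. This shrinking signal is what keeps the actor's updates from growing without bound in an always-positive environment.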

Actor-critic methods address the variance problem in policy gradients by using a value function as a baseline. PPO (Proximal Policy Optimization) extends A2C by adding the concept of “trust regions” to the learning algorithm, which prevents excessive changes to the network weights during learning. We won’t get into details about PPO in this article; maybe someday we will open that Pandora’s box.

Conclusion

This article is a companion piece to the YouTube video I made, linked below. Feel free to check it out if you enjoyed this read.

Every algorithm makes specific choices for each question, and these choices cascade through the entire system, affecting everything from sample efficiency to stability to real-world performance.

In the end, creating an RL algorithm is about answering these questions by making your choices. DQNs choose to learn values. Policy methods directly learn a **policy**. Monte Carlo methods update after a full episode using actual returns, which makes them unbiased, but they have high variance because of the stochastic nature of RL exploration. TD Learning instead chooses to learn at every step based on the agent’s own estimates. Actor Critic methods combine DQNs and Policy Gradients by learning an actor and a critic network separately.

Note that there is a lot we didn’t cover today. But this is a good base to get you started with Reinforcement Learning.

That’s the end of this article, see you in the next one! You can use the links below to discover more of my work.

My Patreon:

https://www.patreon.com/NeuralBreakdownwithAVB

My YouTube channel:

https://www.youtube.com/@avb_fj

Follow me on Twitter:

https://x.com/neural_avb

Read my articles:

https://towardsdatascience.com/author/neural-avb/