are sometimes introduced as black boxes.

Layers, activations, gradients, backpropagation… it can feel overwhelming, especially when everything is hidden behind model.fit().

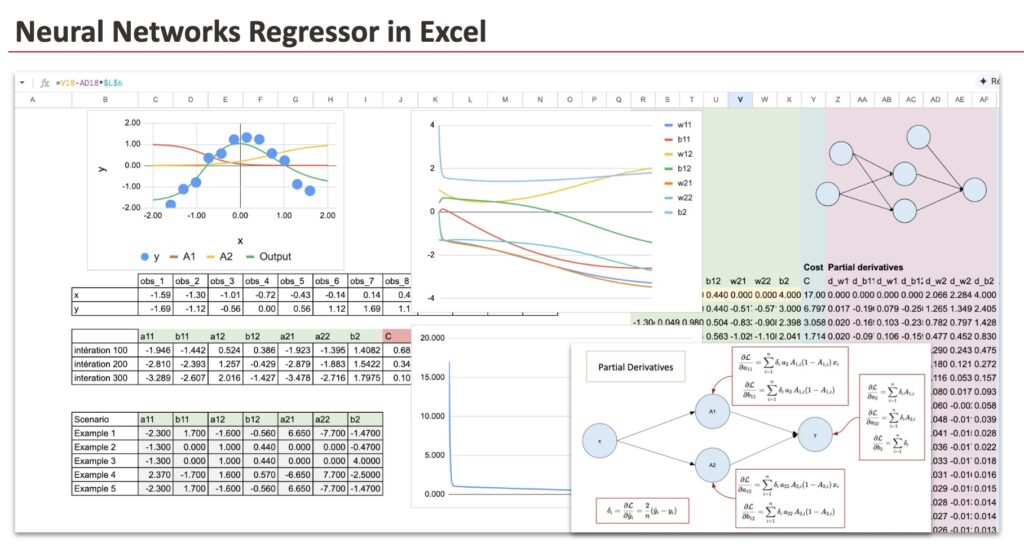

We will build a neural network regressor from scratch using Excel. Every computation will be explicit. Every intermediate value will be visible. Nothing will be hidden.

By the end of this article, you will understand how a neural network performs regression, how forward propagation works, and how the model can approximate non-linear functions using just a few parameters.

Before starting, if you have not already read my previous articles, you should first look at the implementation of linear regression and logistic regression.

You will see that a neural network is not a new object. It is a natural extension of these models.

As usual, we will follow these steps:

- First, we will look at how the model of a Neural Network Regressor works. In the case of neural networks, this step is called forward propagation.

- Then we will train this function using gradient descent. In neural networks, computing the gradients for this step is called backpropagation.

1. Forward propagation

In this part, we will define our model, then implement it in Excel to see how the prediction works.

1.1 A Simple Dataset

We will use a very simple dataset that I generated. It consists of just 12 observations and a single feature.

As you can see, the target variable has a nonlinear relationship with x.

And for this dataset, we will use two neurons in the hidden layer.

1.2 Neural Network Structure

Our example neural network has:

- One input layer with the feature x as input

- One hidden layer with two neurons, which allow us to create a nonlinear relationship

- One output layer, which is just a linear regression

Here is the diagram that represents this neural network, along with all the parameters that have to be estimated. There are a total of 7 parameters.

Hidden layer:

- a11: weight from x to hidden neuron 1

- b11: bias of hidden neuron 1

- a12: weight from x to hidden neuron 2

- b12: bias of hidden neuron 2

Output layer:

- a21: weight from hidden neuron 1 to output

- a22: weight from hidden neuron 2 to output

- b2: output bias

At its core, a neural network is just a function. A composed function.

If you write it explicitly, there is nothing mysterious about it.
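Written out with the 7 parameters listed above, the function could look like the following. This is a sketch that assumes the hidden neurons use a sigmoid activation (the activation discussed later in this article); the names A_1 and A_2 for the hidden outputs follow the labels used in the Excel sheet.

$$
A_1 = \sigma(a_{11}\,x + b_{11}), \qquad A_2 = \sigma(a_{12}\,x + b_{12}), \qquad \sigma(z) = \frac{1}{1 + e^{-z}}
$$

$$
\hat{y}(x) = a_{21}\,A_1 + a_{22}\,A_2 + b_2
$$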

We usually represent this function with a diagram made of “neurons”.

In my opinion, the best way to interpret this diagram is as a visual representation of a composed mathematical function, not as a claim that it literally reproduces how biological neurons work.

Why does this function work?

Each sigmoid behaves like a smooth step.

With two sigmoids, the model can increase, decrease, bend, and flatten the output curve.

By combining them linearly, the network can approximate smooth non-linear curves.

This is why, for this dataset, two neurons are already enough. But would you be able to find a dataset for which this structure is not suitable?
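To make the "two smooth steps" idea concrete, here is a minimal Python sketch of the same function. The parameter values are made up for illustration (they are not the ones from the Excel file): one sigmoid steps up around x = -1, the other steps up around x = 1, and subtracting them gives a bump.

```python
import math

def sigmoid(z):
    """Smooth step between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def predict(x, a11, b11, a12, b12, a21, a22, b2):
    """Forward pass: two sigmoid neurons, then a linear output."""
    h1 = sigmoid(a11 * x + b11)  # hidden neuron 1
    h2 = sigmoid(a12 * x + b12)  # hidden neuron 2
    return a21 * h1 + a22 * h2 + b2

# Hypothetical parameters, chosen by hand to draw a bump centered on 0
params = dict(a11=4.0, b11=4.0, a12=4.0, b12=-4.0, a21=1.0, a22=-1.0, b2=0.0)

for x in (-3, -1, 0, 1, 3):
    print(x, round(predict(x, **params), 3))
```

The output is close to 0 far from the origin and close to 1 around x = 0. Changing the signs and sizes of a21 and a22 flips, stretches, or flattens the curve.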

1.3 Implementation of the function in Excel

In this section, we will suppose that the 7 coefficients are already found. We can then implement the formula we saw just before.

To visualize the neural network, we can use new continuous values of x ranging from -2 to 2 with a step of 0.02.

Here is the screenshot, and we can see that the final function fits the shape of the input data quite well.
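For readers who want to reproduce this step outside Excel, here is a small Python sketch of the same idea: build the grid of x values and evaluate the function on it. The coefficients below are placeholders that I made up, not the fitted values from the sheet.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(x, a11, b11, a12, b12, a21, a22, b2):
    h1 = sigmoid(a11 * x + b11)
    h2 = sigmoid(a12 * x + b12)
    return a21 * h1 + a22 * h2 + b2

# Placeholder coefficients (assumption): replace with the fitted values
coeffs = (4.0, 4.0, 4.0, -4.0, 1.0, -1.0, 0.0)

# x from -2 to 2 with a step of 0.02, i.e. 201 points
xs = [round(-2 + 0.02 * i, 2) for i in range(201)]
ys = [predict(x, *coeffs) for x in xs]

print(xs[0], xs[-1], len(xs))
```

Plotting ys against xs on top of the 12 observations gives the same kind of picture as the Excel chart.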

2. Backpropagation (Gradient descent)

At this point, the model is fully defined.

Since it is a regression problem, we will use the MSE (mean squared error), just like for a linear regression.

Now, we have to find the 7 parameters that minimize the MSE.
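As a reminder, the MSE is simply the average of the squared differences between predictions and targets. A minimal Python sketch (the function name `mse` is mine):

```python
def mse(y_true, y_pred):
    """Mean squared error between targets and predictions."""
    n = len(y_true)
    return sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / n

# Two observations with errors of 1 and 3: (1 + 9) / 2
print(mse([0, 0], [1, 3]))
```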

2.1 Details of the backpropagation algorithm

The principle is simple. BUT, since there are many composed functions and many parameters, we have to be organized with the derivatives.

I will not derive all the 7 partial derivatives explicitly. I will just give the results.
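For reference, here is one way to write them. This sketch assumes sigmoid hidden neurons and the cost J = (1/n) Σ (ŷ_i − y_i)² with n = 12; a different scaling convention (for example dropping the factor 2/n) only rescales the gradients and can be absorbed in the learning rate. Here A_{1,i} and A_{2,i} are the hidden outputs for observation i.

$$
\frac{\partial J}{\partial a_{21}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,A_{1,i}, \qquad
\frac{\partial J}{\partial a_{22}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,A_{2,i}, \qquad
\frac{\partial J}{\partial b_{2}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)
$$

$$
\frac{\partial J}{\partial a_{11}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,a_{21}\,A_{1,i}(1 - A_{1,i})\,x_i, \qquad
\frac{\partial J}{\partial b_{11}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,a_{21}\,A_{1,i}(1 - A_{1,i})
$$

$$
\frac{\partial J}{\partial a_{12}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,a_{22}\,A_{2,i}(1 - A_{2,i})\,x_i, \qquad
\frac{\partial J}{\partial b_{12}} = \frac{2}{n}\sum_{i=1}^{n} (\hat{y}_i - y_i)\,a_{22}\,A_{2,i}(1 - A_{2,i})
$$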

As we can see, the error term appears in every derivative. So in order to implement the whole process, we have to follow this loop:

- initialize the weights,

- compute the output (ahead propagation),

- compute the error,

- compute gradients using partial derivatives,

- replace the weights,

- repeat till convergence.
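The loop above can be sketched in a few lines of Python. Everything specific here is an assumption for illustration: the 12 data points are synthetic (generated from hand-picked "true" parameters), the initial values and the learning rate are made up, sigmoid activations are assumed, and a fixed number of iterations stands in for "until convergence".

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, p):
    """Return hidden outputs and prediction for one observation."""
    a11, b11, a12, b12, a21, a22, b2 = p
    h1 = sigmoid(a11 * x + b11)
    h2 = sigmoid(a12 * x + b12)
    return h1, h2, a21 * h1 + a22 * h2 + b2

def cost(xs, ys, p):
    """Mean squared error over the dataset."""
    return sum((forward(x, p)[2] - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def gradients(xs, ys, p):
    """Partial derivatives of the MSE, summed over observations."""
    a11, b11, a12, b12, a21, a22, b2 = p
    n = len(xs)
    g = [0.0] * 7
    for x, y in zip(xs, ys):
        h1, h2, y_hat = forward(x, p)
        e = 2.0 * (y_hat - y) / n          # error term
        d1 = e * a21 * h1 * (1.0 - h1)     # signal reaching neuron 1
        d2 = e * a22 * h2 * (1.0 - h2)     # signal reaching neuron 2
        contrib = [d1 * x, d1, d2 * x, d2, e * h1, e * h2, e]
        g = [gi + ci for gi, ci in zip(g, contrib)]
    return g

# Synthetic dataset (assumption): 12 points from hand-picked parameters
true_p = [4.0, 4.0, 4.0, -4.0, 1.0, -1.0, 0.0]
xs = [-2 + 4 * i / 11 for i in range(12)]
ys = [forward(x, true_p)[2] for x in xs]

p = [3.0, 3.0, 3.0, -3.0, 0.5, -0.5, 0.1]  # initialize the weights
lr = 0.05                                   # learning rate (assumption)
history = []
for _ in range(2000):                       # fixed iteration count
    history.append(cost(xs, ys, p))         # forward pass and error
    g = gradients(xs, ys, p)                # partial derivatives
    p = [pi - lr * gi for pi, gi in zip(p, g)]  # update the weights

print(history[0], history[-1])
```

Each pass through the loop plays the role of one row of the Excel sheet: the parameters on that row produce the predictions, the errors, the gradients, and then the parameters of the next row.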

2.2 Initialization

Let’s get started by putting the input dataset in a column format, which will make it easier to implement the formulas in Excel.

In theory, we can begin with random values for the parameters. But in practice, a large number of iterations may be needed to reach full convergence. And since the cost function is not convex, we can get stuck in a local minimum.

So we have to choose the initial values “wisely”. I have prepared some for you. You can make small changes to see what happens.

2.3 Forward propagation

In the columns from AG to BP, we perform the forward propagation phase. We compute A1 and A2 first, followed by the output. These are the same formulas used in the earlier part on forward propagation.

To simplify the computations and make them more manageable, we perform the calculations for each observation separately. This means we have 12 columns for each hidden neuron (A1 and A2) and 12 for the output layer. Instead of using a summation formula, we calculate the values for each observation individually.

To facilitate the for-loop process during the gradient descent phase, we organize the training dataset in columns, and we can then extend the formulas in Excel by row.

2.4 Errors and the Cost function

In columns BQ to CN, we can now compute the errors and the values of the cost function.

2.5 Partial derivatives

We will be computing 7 partial derivatives, one for each parameter (weights and biases) of our neural network. For each of these partial derivatives, we need to compute the values for all 12 observations, resulting in a total of 84 columns. However, I have tried to simplify this process by organizing the sheet with color coding and formulas for ease of use.

So we will begin with the output layer, for the parameters a21, a22 and b2. We can find them in the columns from CO to DX.

Then, for the parameters a11 and a12, we can find them in columns DY to EV.

And finally, for the bias parameters b11 and b12, we use columns EW to FT.

And to wrap it up, we sum all the partial derivatives across the 12 observations. These aggregated gradients are neatly arranged in columns Z to AF. The parameter updates are then performed in columns R to X, using these values.
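The same layout can be mirrored in Python: one row of 7 partial derivatives per observation (12 × 7 = 84 values), summed into 7 gradients, then used for one update. The sketch below also compares the result with a finite-difference estimate, which is a handy way to check the formulas. The data, the parameter values, and the learning rate are made up, and the cost is assumed to be the MSE with sigmoid hidden neurons.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, p):
    a11, b11, a12, b12, a21, a22, b2 = p
    h1 = sigmoid(a11 * x + b11)
    h2 = sigmoid(a12 * x + b12)
    return h1, h2, a21 * h1 + a22 * h2 + b2

def cost(xs, ys, p):
    return sum((forward(x, p)[2] - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def per_observation_gradients(xs, ys, p):
    """One row per observation, ordered a11, b11, a12, b12, a21, a22, b2."""
    a11, b11, a12, b12, a21, a22, b2 = p
    n = len(xs)
    rows = []
    for x, y in zip(xs, ys):
        h1, h2, y_hat = forward(x, p)
        e = 2.0 * (y_hat - y) / n
        d1 = e * a21 * h1 * (1.0 - h1)
        d2 = e * a22 * h2 * (1.0 - h2)
        rows.append([d1 * x, d1, d2 * x, d2, e * h1, e * h2, e])
    return rows

# Made-up data and parameters (assumption), 12 observations
xs = [-2 + 4 * i / 11 for i in range(12)]
ys = [math.sin(2 * x) for x in xs]
p = [1.0, 0.5, -1.0, 0.5, 1.0, 1.0, 0.0]

rows = per_observation_gradients(xs, ys, p)
grad = [sum(col) for col in zip(*rows)]  # sum across the 12 observations

# Finite-difference check of each partial derivative
eps = 1e-6
numeric = []
for k in range(7):
    up, down = p[:], p[:]
    up[k] += eps
    down[k] -= eps
    numeric.append((cost(xs, ys, up) - cost(xs, ys, down)) / (2 * eps))
max_gap = max(abs(g - m) for g, m in zip(grad, numeric))

lr = 0.1  # learning rate (assumption)
new_p = [pk - lr * gk for pk, gk in zip(p, grad)]  # one update step
print(max_gap)
```

If `max_gap` is tiny, the analytical derivatives and the numerical ones agree, which is a good sign that the formulas in the sheet are implemented correctly.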

2.6 Visualization of the convergence

To better understand the training process, we visualize how the parameters evolve during gradient descent using a graph. At the same time, the decrease of the cost function is tracked in column Y, making the convergence of the model clearly visible.

Conclusion

A neural network regressor is not magic.

It is simply a composition of elementary functions, controlled by a certain number of parameters and trained by minimizing a well-defined mathematical objective.

By building the model explicitly in Excel, every step becomes visible. Forward propagation, error computation, partial derivatives, and parameter updates are no longer abstract concepts, but concrete calculations that you can inspect and modify.

The full implementation of our neural network, from forward propagation to backpropagation, is now complete. You are encouraged to experiment by changing the dataset, the initial parameter values, or the learning rate, and observe how the model behaves during training.

Through this hands-on exercise, we have seen how gradients drive learning, how parameters are updated iteratively, and how a neural network gradually shapes itself to fit the data. This is exactly what happens inside modern machine learning libraries, only hidden behind a few lines of code.

Once you understand it this way, neural networks stop being black boxes.