article about SVM, the next natural step is Kernel SVM.

At first sight, it looks like a completely different model. The training happens in the dual form, we stop talking about a slope and an intercept, and suddenly everything is about a “kernel”.

In today’s article, I’ll make the word kernel concrete by visualizing what it really does.

There are many good ways to introduce Kernel SVM. If you have read my previous articles, you know that I like to start from something simple that you already know.

A classic way to introduce Kernel SVM is this: SVM is a linear model. If the relationship between the features and the target is non-linear, a straight line won’t separate the classes well. So we create new features. Polynomial regression is still a linear model; we simply add polynomial features (x, x², x³, …). From this point of view, a polynomial kernel performs polynomial regression implicitly, and an RBF kernel can be seen as using an infinite series of polynomial features…
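To make the word “implicitly” a bit more tangible, here is a minimal sketch for a single feature. It assumes one particular degree-2 polynomial kernel, (1 + x·z)², and the matching explicit feature map (1, √2·x, x²). The function names are mine, chosen only for illustration. The kernel value and the dot product of the explicit features should coincide.

```python
import math

def poly_features(x):
    """Explicit degree-2 feature map for a scalar input x."""
    return (1.0, math.sqrt(2.0) * x, x * x)

def poly_kernel(x, z):
    """Degree-2 polynomial kernel: (1 + x*z)^2, computed without building features."""
    return (1.0 + x * z) ** 2

def dot(u, v):
    """Plain dot product of two equal-length tuples."""
    return sum(a * b for a, b in zip(u, v))

x, z = 1.5, -0.5  # two arbitrary input values
explicit = dot(poly_features(x), poly_features(z))
implicit = poly_kernel(x, z)
print(explicit, implicit)
```

The kernel never builds the polynomial features, yet it returns the same number as the explicit dot product.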

Maybe another day we will follow this path, but today we will take a different one: we start with KDE.

Yes, Kernel Density Estimation.

Let’s get started.

1. KDE as a sum of individual densities

I introduced KDE in the article about LDA and QDA, and at that time I said we would reuse it later. This is the moment.

We see the word kernel in KDE, and we also see it in Kernel SVM. This isn’t a coincidence; there is a real link.

The idea of KDE is simple:

around each data point, we place a small distribution (a kernel).

Then, we add all these individual densities together to obtain a global distribution.

Keep this idea in mind. It will be the key to understanding Kernel SVM.

We can also adjust one parameter to control how smooth the global density is, from very local to very smooth, as illustrated in the GIF below.
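Here is a minimal sketch of this idea in plain Python, assuming Gaussian kernels. The data values and the names `points` and `bandwidth` are hypothetical. The sum is divided by the number of points so that the result still integrates to 1.

```python
import math

def gaussian_density(x, center, bandwidth):
    """Normal density with mean `center` and standard deviation `bandwidth`."""
    z = (x - center) / bandwidth
    return math.exp(-0.5 * z * z) / (bandwidth * math.sqrt(2.0 * math.pi))

def kde(x, points, bandwidth):
    """Average of one Gaussian density per data point."""
    return sum(gaussian_density(x, p, bandwidth) for p in points) / len(points)

points = [1.0, 2.0, 2.5, 6.0, 7.0]  # hypothetical 1-D data

# Small bandwidth: very local bumps. Large bandwidth: one smooth global curve.
for h in (0.2, 1.0, 3.0):
    print(h, [round(kde(x, points, h), 3) for x in (2.0, 4.0, 6.5)])
```

With a small bandwidth, the density between the two groups of points is close to zero. With a large one, the gap is filled in.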

As you know, KDE is a distance or density-based model, so here, we’re going to create a link between two models from two different families.

2. Turning KDE into a model

Now we reuse exactly the same idea to build a function around each point, and then this function can be used for classification.

Do you remember that the classification task with the weight-based models is first a regression task, because the value y is always considered as continuous? We only do the classification part after we have obtained the decision function f(x).

2.1. (Still) using a simple dataset

Someone once asked me why I always use around 10 data points to explain machine learning, saying it’s meaningless.

I strongly disagree.

If someone cannot explain how a Machine Learning model works with 10 points (or fewer) and one single feature, then they don’t really understand how this model works.

So this won’t be a surprise for you. Yes, I’ll still use this very simple dataset, which I already used for logistic regression and SVM. I know this dataset is linearly separable, but it’s interesting to compare the results of the models.

And I also generated another dataset with data points that aren’t linearly separable and visualized how the kernelized model works.

2.2. RBF kernel centered on points

Let us now apply the KDE idea to our dataset.

For each data point, we place a bell-shaped curve centered on its x value. At this stage, we don’t care about classification yet. We’re only doing one simple thing: creating one local bell around each point.

This bell has a Gaussian shape, but here it has a specific name: RBF, for Radial Basis Function.

In this figure, we can see the RBF (Gaussian) kernel centered on the point x₇.

The name sounds technical, but the idea is actually very simple.

Once you see RBFs as “distance-based bells”, the name stops being mysterious.

How to read this intuitively

- x is any place on the x-axis

- x₇ is the center of the bell (the seventh point)

- γ (gamma) controls the width of the bell

So the bell reaches its maximum exactly at the point.

As x moves away from x₇, the value decreases smoothly toward 0.
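In code, one such bell can be sketched as follows. I am assuming the usual form of the RBF kernel, K(x, x₇) = exp(−γ (x − x₇)²). The value used for x₇ and the value of γ are hypothetical.

```python
import math

def rbf(x, center, gamma):
    """RBF (Gaussian) bell: equals 1 at the center and decays with squared distance."""
    return math.exp(-gamma * (x - center) ** 2)

x7 = 7.0     # hypothetical position of the seventh point
gamma = 0.5  # hypothetical width parameter

# The value is largest at x = x7 and shrinks as x moves away.
for x in (7.0, 8.0, 9.0, 12.0):
    print(x, round(rbf(x, x7, gamma), 4))
```

Only the distance to the center matters, so the bell is symmetric around x₇.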

Role of γ (gamma)

- Small γ means wide bell (smooth, global influence)

- Large γ means narrow bell (very local influence)

So γ plays the same role as the bandwidth in KDE, but in the opposite direction: a large γ corresponds to a small bandwidth.
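To make the link with the bandwidth explicit: if the bell is written as a Gaussian with standard deviation h, that is exp(−(x − c)² / (2h²)), then matching it with exp(−γ (x − c)²) gives γ = 1 / (2h²). The sketch below checks this numerically. The helper names are mine, not part of any library.

```python
import math

def rbf(x, center, gamma):
    """RBF bell parameterized by gamma."""
    return math.exp(-gamma * (x - center) ** 2)

def gaussian_bell(x, center, h):
    """Unnormalized Gaussian bell parameterized by a bandwidth h."""
    return math.exp(-((x - center) ** 2) / (2.0 * h * h))

def gamma_from_bandwidth(h):
    """Conversion that makes the two bells identical."""
    return 1.0 / (2.0 * h * h)

h = 0.8
print(rbf(3.0, 1.0, gamma_from_bandwidth(h)), gaussian_bell(3.0, 1.0, h))
print(gamma_from_bandwidth(0.5), gamma_from_bandwidth(2.0))  # narrow bell vs wide bell
```

The KDE bells also carry a normalizing constant so that each one integrates to 1. The RBF kernel drops it, because here the height will be handled by the weights.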

At this stage, nothing is combined yet. We’re just building the elementary blocks.

2.3. Combining bells with class labels

In the figures below, you first see the individual bells, each centered on a data point.

Once this is clear, we move to the next step: combining the bells.

This time, each bell is multiplied by its label yi.

As a result, some bells are added and others are subtracted, creating influences in two opposite directions.

This is the first step toward a classification function.
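As a rough sketch, the signed sum can be written as f(x) = Σ yi · K(x, xi), with labels coded as −1 and +1. The ten points below are a hypothetical stand-in for the small dataset, and γ is chosen arbitrarily.

```python
import math

def rbf(x, center, gamma):
    """RBF bell centered on `center`."""
    return math.exp(-gamma * (x - center) ** 2)

# Hypothetical 1-D dataset: five points of class -1, then five of class +1.
X = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [-1, -1, -1, -1, -1, 1, 1, 1, 1, 1]

def signed_bells(x, X, y, gamma):
    """One contribution per data point: its bell multiplied by its label."""
    return [yi * rbf(x, xi, gamma) for xi, yi in zip(X, y)]

def score(x, X, y, gamma):
    """Sum of all signed bells evaluated at x."""
    return sum(signed_bells(x, X, y, gamma))

gamma = 0.5
for x in (2.0, 5.5, 9.0):
    print(x, round(score(x, X, y, gamma), 4))
```

The score is negative in the region dominated by class −1, positive in the region dominated by class +1, and close to zero halfway between the two groups.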

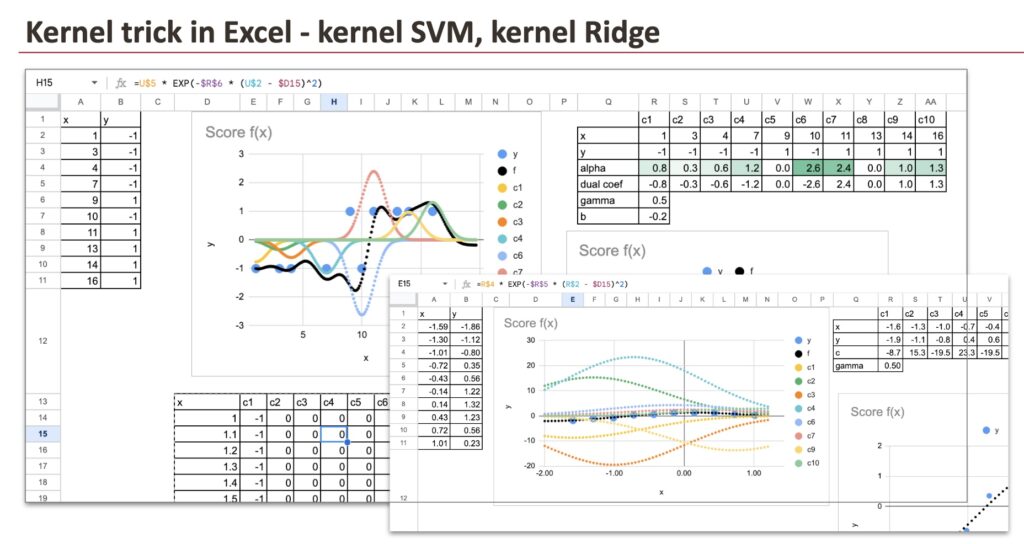

And we can see all the components from each data point being added together in Excel to get the final score.

This already looks extremely similar to KDE.

But we’re not done yet.

2.4. From equal bells to weighted bells

We said earlier that SVM belongs to the weight-based family of models. So the next natural step is to introduce weights.

In distance-based models, one major limitation is that all features are treated as equally important when computing distances. Of course, we can rescale features, but this is often a manual and imperfect fix.

Here, we take a different approach.

Instead of simply summing all the bells, we assign a weight to each data point and multiply each bell by this weight.

At this point, the model is still linear, but linear in the space of kernels, not in the original input space.

To make this concrete, we can assume that the coefficients αi are already known and directly plot the resulting function in Excel. Each data point contributes its own weighted bell, and the final score is just the sum of all these contributions.
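The same computation can be sketched in Python as f(x) = Σ αi · yi · K(x, xi) + b. Everything below is hypothetical: the dataset (class +1 in the middle, class −1 on both sides), the coefficients αi, which are picked by hand and not fitted, and the intercept b, which I simply set to 0.

```python
import math

def rbf(x, center, gamma):
    """RBF bell centered on `center`."""
    return math.exp(-gamma * (x - center) ** 2)

# Hypothetical dataset that no single threshold on x can separate.
X = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
y = [-1, -1, -1, 1, 1, 1, -1, -1, -1]

# Hand-picked weights (an assumption, not the result of training).
alpha = [0.0, 0.0, 1.0, 1.0, 0.0, 1.0, 1.0, 0.0, 0.0]
gamma = 1.0

def decision(x, X, y, alpha, gamma, b=0.0):
    """Weighted sum of signed bells, plus an intercept."""
    return sum(a * yi * rbf(x, xi, gamma) for xi, yi, a in zip(X, y, alpha)) + b

for x, yi in zip(X, y):
    print(x, yi, round(decision(x, X, y, alpha, gamma), 3))
```

With these weights, the sign of f(x) matches the label of every point, even though the classes cannot be split by one threshold on x.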

If we apply this to a dataset with a non-linearly separable boundary, we clearly see what Kernel SVM is doing: it fits the data by combining local influences, instead of trying to draw a single straight line.

3. Loss function: where SVM really starts

So far, we have only talked about the kernel part of the model. We have built bells, weighted them, and combined them.

But our model is called Kernel SVM, not just “kernel model”.

The SVM part comes from the loss function.

And as you may already know, SVM is defined by the hinge loss.

3.1. Hinge loss and support vectors

The hinge loss has a very important property.

If a point is:

- correctly classified, and

- far enough from the decision boundary,

then its loss is zero.
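For reference, the hinge loss for a label y in {−1, +1} and a score f(x) is max(0, 1 − y·f(x)), so “far enough” means y·f(x) ≥ 1. A minimal sketch, with example scores that are made up:

```python
def hinge(y, fx):
    """Hinge loss for a label y in {-1, +1} and a decision score fx."""
    return max(0.0, 1.0 - y * fx)

# (label, score) pairs chosen to cover the three typical situations.
examples = [
    (1, 2.0),   # correct and beyond the margin
    (1, 0.5),   # correct but inside the margin
    (-1, 0.5),  # on the wrong side of the boundary
]
for y, fx in examples:
    print(y, fx, hinge(y, fx))
```

Only the first case gives a loss of exactly zero. The other two are penalized in proportion to how far they are from the margin.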

As a direct consequence, its coefficient αi turns into zero.

Only a few data points remain active.

These points are called support vectors.

So even though we started with one bell per data point, in the final model, only a few bells survive.

In the example below, you can see that for some points (for instance points 5 and 8), the coefficient αi is zero. These points are not support vectors and do not contribute to the decision function.
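The following sketch shows why a zero coefficient means the point can be dropped. The dataset and the values of αi are hypothetical and set by hand, with points 5 and 8 at zero only to mirror the sentence above. No SVM is trained here.

```python
import math

def rbf(x, center, gamma):
    """RBF bell centered on `center`."""
    return math.exp(-gamma * (x - center) ** 2)

X = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0, 10.0]
y = [-1, -1, -1, -1, -1, 1, 1, 1, 1, 1]
# Hand-set coefficients (an assumption): points 5 and 8 have alpha = 0.
alpha = [0.4, 0.2, 0.6, 0.9, 0.0, 1.0, 0.7, 0.0, 0.3, 0.5]
gamma = 0.5

# Keep only the points whose coefficient is non-zero.
support = [(xi, yi, ai) for xi, yi, ai in zip(X, y, alpha) if ai > 0]

def f_all(x):
    """Decision score using every training point."""
    return sum(ai * yi * rbf(x, xi, gamma) for xi, yi, ai in zip(X, y, alpha))

def f_sparse(x):
    """Decision score using only the points with a non-zero coefficient."""
    return sum(ai * yi * rbf(x, xi, gamma) for xi, yi, ai in support)

print(len(X), len(support))
print(f_all(5.5), f_sparse(5.5))
```

Both functions return the same score, so the points with αi = 0 do not need to be stored at all.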

Depending on how strongly we penalize violations (through the parameter C), the number of support vectors can increase or decrease.

This is a crucial practical advantage of SVM.

When the dataset is large, storing one parameter per data point can be expensive. Thanks to hinge loss, SVM produces a sparse model, where only a small subset of points is kept.

3.2. Kernel ridge regression: same kernels, different loss

If we keep the same kernels but replace the hinge loss with a squared loss, we obtain kernel ridge regression:

Same kernels.

Same bells.

Different loss.

This leads to a very important conclusion:

Kernels define the representation.

The loss function defines the model.

With kernel ridge regression, the model must store all training data points.

Since squared loss doesn’t force any coefficient to zero, every data point generally keeps a non-zero weight and contributes to the prediction.
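As a sketch of what this looks like, kernel ridge regression has a closed-form solution: the coefficients solve (K + λI) α = y, where K is the matrix of kernel values between training points. The four-point dataset, γ and λ below are hypothetical. The small hand-written Gaussian elimination is only there to keep the example dependency-free. In practice you would use a linear algebra library.

```python
import math

def rbf(x, z, gamma):
    """RBF kernel between two scalar inputs."""
    return math.exp(-gamma * (x - z) ** 2)

def solve(A, b):
    """Solve A a = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    a = [0.0] * n
    for i in reversed(range(n)):
        a[i] = (M[i][n] - sum(M[i][j] * a[j] for j in range(i + 1, n))) / M[i][i]
    return a

X = [0.0, 1.0, 2.0, 3.0]     # hypothetical tiny dataset
y = [-1.0, -1.0, 1.0, 1.0]
gamma, lam = 0.5, 0.1        # hypothetical kernel width and ridge penalty

n = len(X)
A = [[rbf(X[i], X[j], gamma) + (lam if i == j else 0.0) for j in range(n)] for i in range(n)]
alpha = solve(A, y)

def predict(x):
    """Sum of weighted bells; here the sign of the label is absorbed into alpha."""
    return sum(a * rbf(x, xi, gamma) for a, xi in zip(alpha, X))

print([round(a, 4) for a in alpha])
```

On this toy example, none of the four coefficients is zero, so all four points are needed to make a prediction.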

In contrast, Kernel SVM produces a sparse solution: only support vectors are stored, all other points disappear from the model.

3.3. A quick link with LASSO

There is an interesting parallel with LASSO.

In linear regression, LASSO uses an L1 penalty on the primal coefficients. This penalty encourages sparsity, and some coefficients become exactly zero.

In SVM, hinge loss plays a similar role, but in a different space.

- LASSO creates sparsity in the primal coefficients

- SVM creates sparsity in the dual coefficients αi

Different mechanisms, same effect: only the important parameters survive.
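To see where the exact zeros come from on the LASSO side, here is a minimal sketch of the soft-thresholding operator. In the special case of orthonormal features, and with the objective written as ½‖y − Xβ‖² + λ‖β‖₁, the LASSO coefficients are the ordinary least squares coefficients passed through this operator. The input values below are made up.

```python
def soft_threshold(z, lam):
    """Shrink z toward 0 by lam, and return exactly 0 when |z| <= lam."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0

ols_coefficients = [2.0, -2.0, 0.3, -0.1]  # hypothetical unpenalized coefficients
lam = 0.5
print([soft_threshold(z, lam) for z in ols_coefficients])
```

Small coefficients are not just shrunk, they are set to exactly zero. This mirrors what the hinge loss does to the αi of points that are far from the margin.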

Conclusion

Kernel SVM isn’t just about kernels.

- Kernels build a rich, non-linear representation.

- Hinge loss selects only the essential data points.

The result is a model that is both flexible and sparse, which is why SVM remains a powerful and elegant tool.

Tomorrow, we will look at another model that deals with non-linearity. Stay tuned.