to this “Advent Calendar” of Machine learning and deep learning in Excel.

For Day 1, we start with the k-NN (k-Nearest Neighbors) regressor algorithm. As you will see, it is really the simplest model, which makes it a good way to start.

For those who already know this model, here are some questions for you. Hopefully, they will make you want to continue reading, because there are some subtle lessons that are not taught in traditional courses.

- Is scaling of continuous features important for this model?

- How can categorical features be handled?

- What should be done to the continuous features to improve model performance?

- What types of distance measures can be more suitable in certain situations? For example, when predicting house prices where geographical location matters?

Spoiler: with a naive k-NN, you cannot get the best scaling automatically.

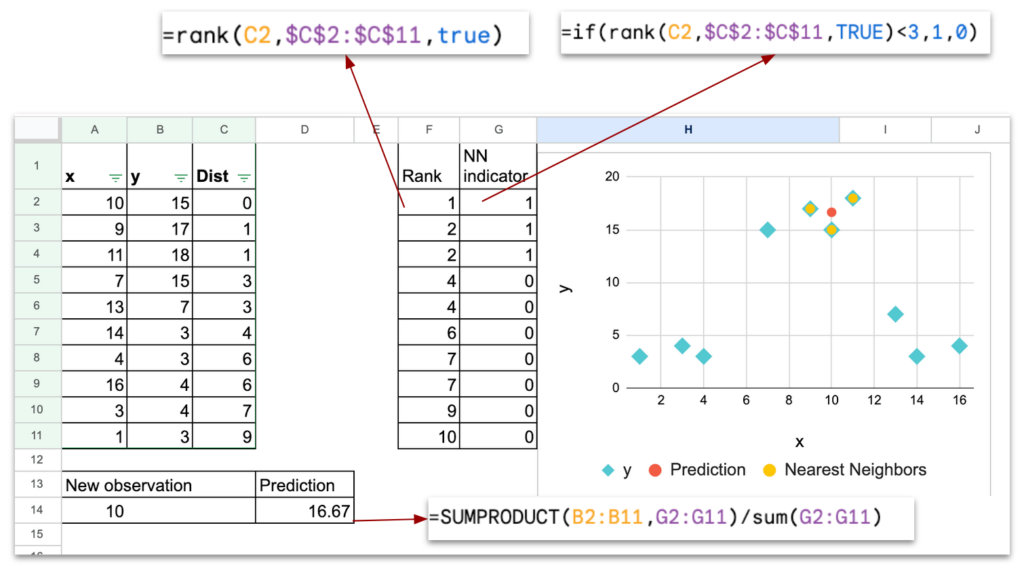

It is also an opportunity, if you are not familiar with Excel formulas, to practice functions such as RANK, IF, SUMPRODUCT, and other useful Excel functions.

You can use this link to get the Excel/Google Sheet file. My advice is to follow the article and do some testing with the file to understand it better.

The principle of k-NN

If you want to sell or buy an apartment, how would you estimate the price?

Please think about a very realistic approach, not some complex model that you have to spend hours building.

Something that you can do for real.

Well, you will probably ask your neighbors who have an apartment of the same or similar size. And you calculate the average price of these apartments.

Yes, that is exactly the idea of k-NN, for k-Nearest Neighbors: search for the most similar examples, and use their values to estimate the new one.

To illustrate this task with a concrete example of house price estimation, we will use the well-known California Housing dataset. This is census data from California block groups, used to predict the median house value.

Each observation is not an individual house, but it is still interesting to use this example.

Here is a quick description of the variables.

The target variable is MedHouseVal, the median house value, in units of 100,000 USD (example: 3.2 means 320,000 dollars).

The feature variables are as follows:

1. MedInc: median income (in units of 10,000 USD)

2. HouseAge: median age of houses

3. AveRooms: average number of rooms per household

4. AveBedrms: average number of bedrooms per household

5. Population: people living in the block group

6. AveOccup: average number of occupants per household

7. Latitude: geographic latitude

8. Longitude: geographic longitude

k-NN with One Continuous Feature

Before we use multiple features to find the neighbors, let’s first use only one feature and a few observations.

Although the process for one continuous feature is very simple, we will still follow every step. We first explore our dataset, then we train the model with a hyperparameter, and finally we use the model to predict.

Training dataset

Here is the plot of this simple dataset of 10 observations. The x-axis is the continuous feature, and the y-axis is the target variable.

Now, imagine that we have to predict the value for a new observation x=10. How can we do that?

Model training?

The first step for almost all machine learning models is training.

But for k-NN, your model is your entire dataset. In other words, you do not have to train the model; you use the original dataset directly.

So in scikit-learn, when you call model.fit on a k-NN estimator, nothing is really learned: the estimator essentially stores the training data (and may build an index to speed up the neighbor search).

Some may ask: what about k?

Well, k is the hyperparameter. So you have to choose a value for k, and it can be tuned.

Prediction for one new observation

For the hyperparameter k, we will use k=3, since the dataset is very small.

For one feature variable, the distance can simply be the absolute value of the difference between the new observation and each of the others.

In the sheet “algo1D”, you can change the value of the new observation and use the filter on the distance column C to sort the dataset in increasing order; the 3 nearest neighbors will be plotted.

To make the calculation more automatic, we can use the RANK function to find the observations with the smallest distances.

We can also create a column of indicators (column G), with indicator = 1 if the observation belongs to the k nearest neighbors.

Finally, for the prediction, we can use SUMPRODUCT to calculate the average of all y values with indicator = 1.

In the plot,

- the light blue dots represent the dataset

- the red dot represents the new observation with the predicted y value

- the yellow dots represent the 3 nearest neighbors of the new observation (in red)

Let’s recap. The prediction phase consists of the following steps:

- For one given new observation, calculate the distance between this new observation and all the observations in the training dataset.

- Identify the k observations that have the shortest distance. In Excel, we can use the filter to sort the training dataset manually, or we can use RANK (and an indicator column) to get the top k observations.

- Calculate the predicted value as the average of the target variable over these k observations, using SUMPRODUCT.
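The three steps above can be sketched in Python as a rough mirror of the spreadsheet columns (distance, rank, indicator, SUMPRODUCT). This is only an illustration: the ten training values and the function name `knn_predict_1d` are made up here and are not the ones in the Excel file.

```python
# Hypothetical 1-D training set of 10 observations (not the values from the sheet).
xs = [1, 2, 3, 5, 6, 8, 11, 12, 14, 16]
ys = [2.0, 2.5, 3.0, 4.0, 4.5, 5.0, 7.0, 7.5, 8.0, 9.0]


def knn_predict_1d(x_new, xs, ys, k=3):
    # Step 1: distance column = absolute difference to the new observation.
    distances = [abs(x - x_new) for x in xs]

    # Step 2: rank the distances (1 = closest). Ties are broken by row order
    # here, whereas Excel's RANK gives tied values the same rank.
    order = sorted(range(len(xs)), key=lambda i: distances[i])
    ranks = [0] * len(xs)
    for rank, i in enumerate(order, start=1):
        ranks[i] = rank

    # Indicator column: 1 for the k nearest neighbors, 0 otherwise.
    indicator = [1 if rank <= k else 0 for rank in ranks]

    # Step 3: SUMPRODUCT(indicator, y) / k, i.e. the mean of the neighbors' y.
    return sum(ind * y for ind, y in zip(indicator, ys)) / k


prediction = knn_predict_1d(10, xs, ys, k=3)
print(prediction)  # 6.5 for this made-up data
```

For x=10, the three closest x values in this made-up data are 11, 8 and 12, so the prediction is the mean of their y values.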

Prediction for an interval of new observations

In the sheet “algo1D f” (f for final), I plotted the prediction for a list of new observations, ranging from 1 to 17.

With a programming language, we could do it easily in a loop, and for a larger number of new observations, so the representation could be denser.

With Excel, I manually repeated the following steps:

- enter a value for x

- sort the distance column

- copy-paste the prediction
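For comparison, here is what that loop could look like in Python. It is a minimal sketch on made-up data (the ten training values are hypothetical, not those of the sheet), predicting for every integer x from 1 to 17 with k=3.

```python
# Hypothetical 1-D training set (not the values from the sheet).
xs = [1, 2, 3, 5, 6, 8, 11, 12, 14, 16]
ys = [2.0, 2.5, 3.0, 4.0, 4.5, 5.0, 7.0, 7.5, 8.0, 9.0]


def knn_predict_1d(x_new, xs, ys, k=3):
    # Sort row indices by distance to x_new and keep the k closest.
    nearest = sorted(range(len(xs)), key=lambda i: abs(xs[i] - x_new))[:k]
    return sum(ys[i] for i in nearest) / k


# One prediction per new observation, from x = 1 to x = 17.
predictions = {x_new: knn_predict_1d(x_new, xs, ys) for x_new in range(1, 18)}

for x_new, y_hat in predictions.items():
    print(x_new, round(y_hat, 2))
```

Replacing `range(1, 18)` with a finer grid would give the denser curve mentioned above.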

Effect of the hyperparameter k

The hyperparameter used in k-NN is the number of neighbors that we take into account when calculating the average value.

We usually use the following graph to explain how a model can be underfitted or overfitted.

In our case, if k is small, there is a risk of overfitting.

If k is large, there is a risk of underfitting.

The extreme case of a very large k is when k equals the total number of observations in the training dataset. The prediction is then the same for every new observation: it is the global average.

So, we can say that k-NN improves on the idea of predicting with a global average by averaging only a few observations that are close to the new observation.
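The two extremes can be checked numerically. The sketch below uses a hypothetical dataset (not the one in the sheet): with k=1 the prediction at a training point is that point's own y value, and with k equal to the dataset size every prediction collapses to the global average.

```python
# Hypothetical 1-D training set (not the values from the sheet).
xs = [1, 2, 3, 5, 6, 8, 11, 12, 14, 16]
ys = [2.0, 2.5, 3.0, 4.0, 4.5, 5.0, 7.0, 7.5, 8.0, 9.0]


def knn_predict_1d(x_new, xs, ys, k):
    nearest = sorted(range(len(xs)), key=lambda i: abs(xs[i] - x_new))[:k]
    return sum(ys[i] for i in nearest) / k


n = len(xs)
global_mean = sum(ys) / n  # 5.25 for this made-up data

for k in (1, 3, n):
    # Predictions at three new observations for each value of k.
    print(k, [round(knn_predict_1d(x, xs, ys, k), 2) for x in (2, 10, 15)])
```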

k-NN with Two Continuous Features

Now, we will study the case of two continuous feature variables x1 and x2. We will only talk about the differences from the previous situation with one feature variable.

Two continuous feature variables dataset

When we have two feature variables, I cannot plot in 3D with Excel, so the plot contains only x1 on the x-axis and x2 on the y-axis.

So do not confuse it with the previous plot, in which the y-axis represents the target value y.

Prediction with the Euclidean distance

Now that we have two features, we have to take both of them into account.

One common distance we can use is the Euclidean distance.
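For two observations a and b described by the two features x1 and x2, it can be written as follows (the names a and b are notation introduced here for illustration):

$$
d(a, b) = \sqrt{(a_{1} - b_{1})^{2} + (a_{2} - b_{2})^{2}}
$$

With a single feature, this reduces to the absolute difference used in the one-feature case.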

Then we can use the same process to find the top k observations that have the minimum distance to the new observation.

To get a visual plot, we can use the same colors:

- Blue for the training dataset

- Red for the new observation

- Yellow for the k nearest neighbors found

Impact of the scale of the variables

When you have two features, one question we can ask is how the scale of the features affects the prediction.

First, let’s look at this simple example, in which I multiplied the feature x2 by 10.

Will this scaling impact the predictions? The answer is of course yes.

And we can easily compare them, as in the following image.

It is easy to understand why: the Euclidean distance sums the squared differences of the features, regardless of their scales.

As a result, the feature that has a large scale will dominate the distance.
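A small numerical check makes this visible. The sketch below uses six made-up training points (hypothetical values, not those of the Excel sheet) and compares the 3 nearest neighbors before and after multiplying x2 by 10.

```python
import math

# Hypothetical training points (x1, x2); the values are made up for illustration.
points = [(2.5, 2.9), (1.0, 1.0), (3.0, 2.0), (6.0, 2.1), (7.0, 1.9), (2.0, 4.0)]
new_point = (2.0, 2.0)


def nearest_indices(new_point, points, k=3):
    # Row indices of the k training points closest to new_point (Euclidean).
    order = sorted(range(len(points)), key=lambda i: math.dist(points[i], new_point))
    return sorted(order[:k])


def rescale_x2(point, factor=10):
    # Same observation, with x2 expressed in a unit 10 times smaller.
    return (point[0], point[1] * factor)


before = nearest_indices(new_point, points)
after = nearest_indices(rescale_x2(new_point), [rescale_x2(p) for p in points])

print(before)  # [0, 1, 2]
print(after)   # [2, 3, 4]
```

After rescaling, differences in x2 are inflated, so points that are far away in x1 but close in x2 become the nearest neighbors.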

When it comes to feature scaling, common operations are standardization (also called centering and reduction) and min–max scaling. The idea is to put all features on a comparable scale.
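Both operations are short to write down. The sketch below is one possible plain-Python version (the function names and the sample column are assumptions for illustration); it also shows that a standardized column no longer depends on the unit in which it was expressed.

```python
from statistics import mean, pstdev


def standardize(values):
    # Center on the mean and divide by the (population) standard deviation.
    # Note: a constant column has sigma = 0 and would need special handling.
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]


def min_max_scale(values):
    # Map the smallest value to 0 and the largest to 1.
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


x2 = [1.0, 2.0, 4.0]                # hypothetical feature column
x2_times_10 = [10 * v for v in x2]  # same column in a different unit

print(standardize(x2))
print(standardize(x2_times_10))     # same values, up to rounding
print(min_max_scale([10, 20, 30]))  # [0.0, 0.5, 1.0]
```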

BUT, let’s think about this case: what if one feature is expressed in dollars, and the other in yen?

In the real world, the correct relation between the two scales is about 1 dollar = 156 yen (as of November 2025). We know this because we understand the meaning of the units.

How would the model know this? It DOES NOT.

The only hyperparameter is k, and the model does not adjust anything to correct for differences in units or scales. k-NN has no internal mechanism for understanding that two features have different units.

And this is only the beginning of the problems…

k-NN with the California Housing dataset

Now, let’s finally use the real-world California Housing dataset.

With the one-feature dataset, we got the basic idea of how k-NN works. With the two-feature dataset, we saw that the scale of the features is important.

Now, with this real-world dataset, we will see that the heterogeneous nature of the features makes the Euclidean distance meaningless.

We will also see some other important ideas that matter when we use k-NN in practice.

Naive application of k-NN regressor

Since all features in this dataset are continuous, we can easily calculate the Euclidean distance. We then choose a number k and calculate the average value of the target variable, here MedHouseVal, over the k nearest neighbors.

In Excel, you can easily do this yourself. Or you can support me here and get all the files.

Notion of distance based on different features

I said that the previous application is naive, because if you look closer, you will see these problems:

MedInc (median income) is expressed in units of 10,000 USD. If we decide to express it in units of 100,000 USD or 1,000 USD instead, the prediction will change, because k-NN is sensitive to the scale of the features. We saw this problem before.

Moreover, each feature has a different nature.

- MedInc is an amount of money (in dollars).

- HouseAge is an age in years.

- AveRooms is a count of rooms.

- Population is a number of people.

- Latitude and longitude are geographic coordinates.

Therefore, the Euclidean distance is doomed: adding up squared differences of dollars, years, rooms, people, and degrees has no clear meaning.

Different types of distances

The most common choice is the Euclidean distance, but it is not the only one.

We can also use the Manhattan distance when features represent grid-like movements, and the cosine distance when only the direction matters (as with text embeddings).

Each distance changes how “nearest” is defined, and can therefore change which neighbors k-NN selects.
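As a quick illustration, here are plain-Python versions of these two alternatives next to the Euclidean distance. The function names are chosen here for the example.

```python
import math


def manhattan_distance(a, b):
    # Sum of absolute differences, like moving along a street grid.
    return sum(abs(x - y) for x, y in zip(a, b))


def cosine_distance(a, b):
    # 1 - cosine similarity: depends only on the angle between the vectors.
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


print(math.dist((0, 0), (3, 4)))           # Euclidean: 5.0
print(manhattan_distance((0, 0), (3, 4)))  # Manhattan: 7
print(cosine_distance((1, 0), (2, 0)))     # same direction: 0.0
print(cosine_distance((1, 0), (0, 1)))     # orthogonal: 1.0
```

Note that the cosine distance ignores the length of the vectors: (1, 0) and (2, 0) are at distance 0 from each other.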

Depending on the data, other distances can be more appropriate.

For example, with latitude and longitude, we can use the real geographical distance (in meters) instead of a simple Euclidean distance on degrees.
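One common way to approximate the geographical distance from coordinates is the haversine (great-circle) formula. The sketch below is an assumption about how this could be done, not necessarily the computation used in the Excel file; it treats the Earth as a sphere with a mean radius of about 6,371 km.

```python
import math

EARTH_RADIUS_M = 6_371_000  # approximate mean Earth radius, in meters


def haversine_m(lat1, lon1, lat2, lon2):
    # Great-circle distance in meters between two points given in degrees.
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


# One degree of latitude vs. one degree of longitude around latitude 37.
one_deg_lat = haversine_m(36.0, -122.0, 37.0, -122.0)
one_deg_lon = haversine_m(37.0, -122.0, 37.0, -121.0)

print(round(one_deg_lat / 1000, 1), "km")
print(round(one_deg_lon / 1000, 1), "km")
```

At this latitude, one degree of longitude covers a shorter distance than one degree of latitude, which is exactly what a Euclidean distance on raw degrees ignores.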

In the California Housing dataset, this is especially useful because we have the exact latitude and longitude of each district.

However, once we try to combine these geographical distances with other variables (such as median income, number of rooms, or population), the problem becomes more complex, because the variables have very different natures and scales.

In the cartography renderings below, I used k-NN as a smoothing function to refine the values associated with different areas of Paris.

On the left, each area has only a single value, so from one quarter to its neighboring quarters, there can be a discontinuity in the variable.

On the right, k-NN allows me to estimate a value for every specific address by smoothing the information based on nearby areas.

Moreover, for indicators such as the proportion of certain professional categories, I also applied population-based weighting so that larger areas have a stronger influence in the smoothing process.

In conclusion, when the situation allows it, choosing a more specific distance can help us better capture the underlying reality.

By linking the distance to the nature of the data, we can make k-NN much more meaningful: geographical distance for coordinates, cosine distance for embeddings, and so on. The choice of distance is not just a technical detail; it changes how the model “sees” the world and which neighbors it considers relevant.

How Categorical Features Can Be Modeled

You may hear that categorical features cannot be handled in k-NN models.

But this is not completely true.

k-NN can work with categorical variables as long as we can define a distance between two observations.

Many people will say: “just use one-hot encoding.”

Others mention label encoding, or ordinal encoding.

But these methods behave very differently in a distance-based model.

To make this clear, we will use another dataset: the diamond price dataset (CC BY 4.0 license), which contains several features such as carat, cut, color, and clarity.

For simplicity, we will use only carat (numerical) and clarity (categorical) to demonstrate a few results.

Predicting Prices with Carat

First, we will start with carat, since you probably know that the price of a diamond depends mainly on the size (carat) of the stone.

The graphic below shows how k-NN can find diamonds with similar sizes to estimate the price.

One-Hot Encoding for Clarity Feature

Now let us look at clarity.

Below is the table of categories with their meanings. We apply one-hot encoding to transform each category into a binary vector.

| Clarity | Meaning |
| --- | --- |
| IF | Internally Flawless |
| VVS1 | Very Very Slightly Included 1 |
| VVS2 | Very Very Slightly Included 2 |
| VS1 | Very Slightly Included 1 |
| VS2 | Very Slightly Included 2 |
| SI1 | Slightly Included 1 |
| SI2 | Slightly Included 2 |
| I1 | Included 1 |

In the results table, we see that for the new diamond with clarity VVS2, the nearest neighbors are all diamonds from the same clarity category.

The numerical feature carat has very little influence on the distance, even though it is the more important feature, as you can see in the price column.

Key issue 1: all categories are equally distant

When using the Euclidean distance on one-hot vectors:

- IF vs VVS1 → distance = √2

- IF vs SI2 → distance = √2

- IF vs I1 → distance = √2

Every pair of different categories is at exactly the same distance.

This does not reflect the real diamond grading scale.

Key issue 2: scaling problem with continuous variables

Because we combine one-hot clarity with carat (a continuous feature), we face another problem:

- carat values in our example are below 1

- clarity vectors are √2 apart as soon as the categories differ → clarity dominates the distance calculation

So even a small change in clarity overpowers the effect of carat.

This is exactly the same scaling issue we face with multiple continuous features, but even stronger.
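Both issues can be reproduced in a few lines. In the sketch below, the three training diamonds and the new diamond are hypothetical values chosen for illustration, not rows from the dataset.

```python
import math

CLARITIES = ["IF", "VVS1", "VVS2", "VS1", "VS2", "SI1", "SI2", "I1"]


def one_hot(clarity):
    # Binary vector with a single 1 at the position of the category.
    return [1.0 if c == clarity else 0.0 for c in CLARITIES]


# Issue 1: any two different categories are exactly sqrt(2) apart.
d_if_vvs1 = math.dist(one_hot("IF"), one_hot("VVS1"))
d_if_i1 = math.dist(one_hot("IF"), one_hot("I1"))
print(d_if_vvs1, d_if_i1)


# Issue 2: combined with carat, the clarity part dominates the distance.
def encode(carat, clarity):
    return [carat] + one_hot(clarity)


# Hypothetical training diamonds: (carat, clarity).
diamonds = [(0.51, "VS1"), (0.90, "VVS2"), (0.30, "VVS2")]
new_diamond = encode(0.50, "VVS2")

distances = [math.dist(new_diamond, encode(carat, clarity)) for carat, clarity in diamonds]
nearest_two = sorted(range(len(diamonds)), key=lambda i: distances[i])[:2]

print([round(d, 3) for d in distances])
print(nearest_two)  # [2, 1]: both VVS2, although diamond 0 has almost the same carat
```

The diamond with nearly identical carat (0.51) is pushed away only because its clarity differs by one grade.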

Ordinal Encoding for Clarity

Now we can try encoding the clarity feature with numerical labels. But instead of using the classic labels 1, 2, 3… we use expert-based labels that reflect the real grading scale.

The idea is to translate the clarity levels into values that behave more like a continuous feature, similar to carat, even if clarity is not strictly continuous.

With this expert-based encoding, the distances become more meaningful.

Carat and clarity are now on comparable scales, so neither feature completely dominates the distance calculation.

So, we obtain a better balance between size and clarity when selecting neighbors, which gives more realistic predictions.
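To make the idea concrete, here is a minimal sketch of such an encoding. The scores in `CLARITY_SCORE` are invented for this example (they are not the expert-based labels used in the Excel file), and the diamonds are hypothetical.

```python
import math

# Hypothetical scores on a 0-1 scale; NOT the expert-based labels from the file.
CLARITY_SCORE = {
    "I1": 0.00, "SI2": 0.15, "SI1": 0.30, "VS2": 0.45,
    "VS1": 0.60, "VVS2": 0.75, "VVS1": 0.90, "IF": 1.00,
}


def encode(carat, clarity):
    # Two numerical features on comparable scales.
    return (carat, CLARITY_SCORE[clarity])


# Hypothetical training diamonds: (carat, clarity).
diamonds = [(0.51, "VS1"), (0.90, "VVS2"), (0.30, "VVS2")]
new_diamond = encode(0.50, "VVS2")

distances = [math.dist(new_diamond, encode(carat, clarity)) for carat, clarity in diamonds]
nearest_two = sorted(range(len(diamonds)), key=lambda i: distances[i])[:2]

print([round(d, 3) for d in distances])
print(nearest_two)  # [0, 2]
```

With these made-up scores, the diamond of almost the same size and a neighboring clarity grade (index 0) becomes the closest neighbor, whereas a one-hot encoding would push it far away.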

Conclusions

In conclusion, the k-NN regressor is a highly non-linear, local estimator. It is so local that only the k closest observations are actually used.

After implementing the k-NN regressor in Excel, I think that we can really ask this question: is the k-NN regressor really a machine learning model?

- There is no model training

- When predicting, the selection of the neighbor observations does not depend on the value of the target variable

On the other hand, it is so easy to understand that, with Excel, we can easily implement the whole algorithm. Moreover, we can modify the distance as we wish.

Business people can see the idea directly: to predict a value, we look at similar observations.

The real problems with k-NN, and with all models that are based on distance, are:

- the scale of the features

- the heterogeneous nature of the features, which makes the sum meaningless

- the specific distance that should be defined in concrete situations

- for categorical features, label/ordinal encoding could be optimized if we could find the optimal scaling.

So in short, the problem is the scaling of the features. We may think that the scales can be tuned as hyperparameters, but the tuning would then require a lot of time.
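A rough way to see the cost: treat the scales as one weight per feature in the Euclidean distance and count the combinations a grid search would have to try. The weight grid below is an arbitrary assumption for illustration; each combination would also need its own evaluation of the model.

```python
import math
from itertools import product


def weighted_euclidean(a, b, weights):
    # A weight w on a feature is equivalent to multiplying it by sqrt(w).
    return math.sqrt(sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights)))


candidate_weights = [0.1, 0.5, 1.0, 5.0, 10.0]  # arbitrary grid, for illustration
n_features = 8                                   # as in the California Housing dataset

# Number of weight combinations to evaluate in a full grid search.
n_combinations = len(candidate_weights) ** n_features
print(n_combinations)  # 390625

# With only two features the grid is still small enough to enumerate.
small_grid = list(product(candidate_weights, repeat=2))
print(len(small_grid))  # 25
```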

We will see later that this is exactly the motivation behind another family of models.

Here, the notion of scale is also equivalent to the notion of feature importance, because in k-NN the importance of each feature is defined before using the model.

So this is only the beginning of our journey. Starting from this simple model, we will discover together other models that can do better by improving in different directions: feature scaling, moving from distance to probability, splitting to better model each category…