forecasting roughly $50 billion in advertising revenue using econometrics, time-series models, and causal inference. When a senior VP asked how confident we should be in a number, I couldn’t hand them a point estimate and shrug. I had to quantify the uncertainty, trace the causal chain, and explain which assumptions would break the forecast if they turned out to be wrong.

None of that work involved a Large Language Model (LLM). None of it could have.

If you’re a data scientist who’s been feeling left behind by the AI wave, this article is the reframe. The skills the industry is abandoning are the exact ones becoming scarcer, more in demand, and better compensated. While everyone else chases the next foundation model, the market is quietly repricing the fundamentals.

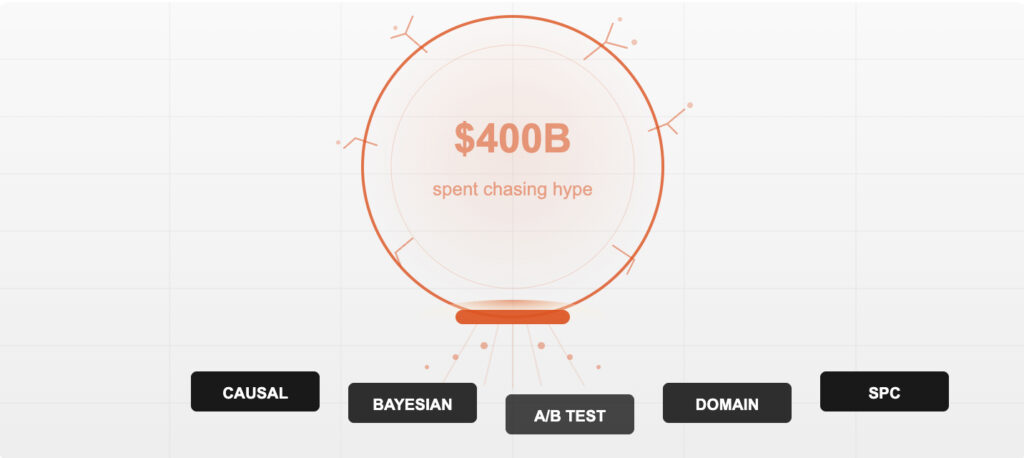

This piece lays out five specific skills (I call them the Anti-Hype Stack), explains why each one resists automation, and gives you a 90-day roadmap to build them. But first, a quick look at why the hype is cracking.

The $300 Billion Gap

In 2025, hyperscaler companies committed nearly $400 billion in capital expenditure on AI infrastructure. Actual enterprise AI revenue? Roughly $100 billion. That’s a 4:1 ratio of spending to earning.

A National Bureau of Economic Research study from February 2026 found that 90% of firms reported no measurable productivity impact from AI. Fewer than 30% of CEOs were satisfied with their GenAI returns. And Gartner placed Generative AI squarely in the Trough of Disillusionment.

This doesn’t mean AI is useless. It means the bubble is deflating on schedule, the way every technology bubble does. The dot-com bust didn’t kill the internet. It killed the companies that confused hype with product-market fit. The survivors (the ones that sold books and optimized logistics) were the ones obsessed with measurement, experimentation, and unglamorous operational rigor.

The same correction is happening in data science. And the skill set that survives it is the one built on causation, not correlation.

The boat everyone rushed to board is taking on water. The shore they abandoned is looking increasingly solid.

The Anti-Hype Skill Stack

Five skills. Each is counter-cyclical (becomes more valuable as hype recedes), resistant to LLM automation (requires human judgment that pattern-matching can’t replicate), and directly tied to the business outcomes executives actually pay for.

I didn’t pick these from a textbook. They’re the skills I’ve relied on across four industries (healthcare, retail, higher education, digital advertising) and nearly a decade of applied work. The technical stack barely changed between domains. What changed everything was knowing which of these tools to reach for and when.

Image by the author.

1. Causal Inference: The Skill That Answers “Why”

What it is

Determining whether X actually causes Y, not just whether they correlate. The toolkit: Randomized Controlled Trials (RCTs), Difference-in-Differences (DiD), interrupted time series, instrumental variables, regression discontinuity, and Directed Acyclic Graphs (DAGs).

Why I believe this is the #1 skill

I once used interrupted time series analysis to isolate the causal impact of a major promotional event on ad revenue forecasts. The predictive model said the event boosted revenue. The causal model told a different story: roughly 40% of that apparent “boost” was cannibalized from surrounding weeks. Customers weren’t spending more; they were shifting when they spent. That single analysis changed how the forecasting team modeled promotional events going forward, improving accuracy by 12% (worth about $2 million annually in a single product vertical).
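To make the cannibalization idea concrete, here is a minimal sketch of an interrupted time series check. The weekly numbers are synthetic and the names (`fit_trend`, `EVENT_WEEK`, `WINDOW`) are hypothetical; this is not the model from the project described above, only the simplest version of the same logic: fit a trend on pre-event weeks, project it forward as the counterfactual, and compare the event-week spike with the net lift over the surrounding window.

```python
from statistics import mean


def fit_trend(weeks, revenue):
    """Least-squares fit of revenue = intercept + slope * week."""
    w_bar, r_bar = mean(weeks), mean(revenue)
    sxy = sum((w - w_bar) * (r - r_bar) for w, r in zip(weeks, revenue))
    sxx = sum((w - w_bar) ** 2 for w in weeks)
    slope = sxy / sxx
    return r_bar - slope * w_bar, slope


# Synthetic weekly revenue (made-up numbers): a steady upward trend,
# a +50 spike in the event week, and -5 dips in the two weeks on each side.
EVENT_WEEK = 19
WINDOW = range(17, 22)  # event week plus two weeks before and after
revenue = [100 + 2 * week for week in range(24)]
revenue[EVENT_WEEK] += 50
for week in (17, 18, 20, 21):
    revenue[week] -= 5

# Fit the trend only on weeks before the window, then project it forward
# as the counterfactual: what revenue would have been without the event.
pre_weeks = list(range(WINDOW.start))
intercept, slope = fit_trend(pre_weeks, revenue[: WINDOW.start])


def counterfactual(week):
    return intercept + slope * week


gross_lift = revenue[EVENT_WEEK] - counterfactual(EVENT_WEEK)
net_lift = sum(revenue[week] - counterfactual(week) for week in WINDOW)
cannibalized_share = 1 - net_lift / gross_lift

print(f"gross lift in event week: {gross_lift:.1f}")
print(f"net lift over the window: {net_lift:.1f}")
print(f"share cannibalized:       {cannibalized_share:.0%}")
```

On this toy data the event-week spike overstates the net effect. A real analysis would also have to deal with noise, seasonality, and autocorrelation, all of which this sketch ignores.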

An LLM can describe instrumental variables. Ask ChatGPT and you’ll get a solid textbook answer. But it can’t do the reasoning, because causal reasoning requires understanding the data-generating process, intervening on variables, and reasoning about counterfactuals that never appear in any training corpus.

The market signal

A Causalens survey found Causal AI was the #1 technique AI leaders planned to adopt, with nearly 70% of AI-driven organizations implementing causal reasoning by 2026. Organizations applying causal methods to advertising reported 35% higher ROI than those using correlation-based targeting.

You can predict customer churn with 95% accuracy and still have no idea how to reduce it. Prediction without causation is an expensive way to watch things happen.

2. Experimental Design: Beyond the Basic A/B Test

What it is

Designing controlled experiments that isolate the effect of a specific intervention. This goes well beyond splitting traffic 50/50. It includes multi-armed bandits, factorial designs, sequential testing, and (critically) quasi-experimental methods for situations where you cannot randomize.

Where this gets real

I’ve watched teams deploy machine learning models across multiple retail locations that scored well on holdout sets but failed in production. The reason was always the same: nobody designed the rollout as a proper experiment. No staggered deployment. No matched controls. No pre-registered success metric. The model “worked” on historical data, but without an experimental framework, there was no way to distinguish genuine lift from seasonal noise, selection bias, or regression to the mean.
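One piece of a pre-registered rollout that is easy to show in code is sizing the experiment before it starts. The sketch below uses the standard normal-approximation formula for comparing two proportions. The function name `sample_size_per_arm` and the baseline and target rates are illustrative assumptions, not figures from the deployments above.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_arm(p_control, p_treatment, alpha=0.05, power=0.80):
    """Approximate units needed per arm for a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_treatment - p_control
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical success metric: conversion rate, 10% at baseline.
n_large_effect = sample_size_per_arm(0.10, 0.12)  # hoping for +2 points
n_small_effect = sample_size_per_arm(0.10, 0.11)  # hoping for +1 point
print(n_large_effect, n_small_effect)
```

Halving the effect you hope to detect roughly quadruples the sample you need, which is usually the moment a team discovers that a two-week rollout cannot answer the question it was meant to answer.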

Running a t-test on two groups is easy. Designing an experiment that accounts for network effects, carryover, and Simpson’s paradox? That takes training most data science programs skip entirely. And it’s the part no AI coding assistant can do for you, because the hard problem isn’t statistical computation. It’s convincing a product team to withhold a feature from a control group long enough to measure the effect.
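Simpson’s paradox is easier to believe once you see it in a table of counts. The numbers below are illustrative only (they do not come from any experiment mentioned here), and `segments` is a hypothetical structure. Variant A wins inside every segment yet loses in the pooled comparison, because the two variants were shown to very different mixes of traffic.

```python
# (conversions, visitors) per segment and variant; illustrative counts only.
segments = {
    "segment_1": {"A": (81, 87), "B": (234, 270)},
    "segment_2": {"A": (192, 263), "B": (55, 80)},
}


def rate(conversions, visitors):
    return conversions / visitors


# Within each segment, compare the two variants directly.
winners_by_segment = {}
for name, arms in segments.items():
    winners_by_segment[name] = max(arms, key=lambda arm: rate(*arms[arm]))

# Pool the segments and compare again.
pooled = {}
for arm in ("A", "B"):
    conversions = sum(arms[arm][0] for arms in segments.values())
    visitors = sum(arms[arm][1] for arms in segments.values())
    pooled[arm] = rate(conversions, visitors)
pooled_winner = max(pooled, key=pooled.get)

print("winner within each segment:", winners_by_segment)
print("winner after pooling:", pooled_winner)
```

Randomizing assignment within each segment is what prevents this: it keeps the traffic mix the same across variants, so the pooled and per-segment comparisons cannot disagree for this reason.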

The market signal

Zalora increased its checkout rate by 12.3% through a single well-designed experiment on product page copy. PayU gained 5.8% in conversions by testing the removal of one form field. These aren’t ML model improvements. They’re business outcomes from rigorous experimental thinking.

3. Bayesian Reasoning: Honest Uncertainty

What it is

A framework for updating beliefs as new evidence arrives, quantifying uncertainty, and incorporating prior knowledge into models. In practice: Bayesian A/B testing, hierarchical models, and probabilistic programming (PyMC, Stan).

Why I learned this out of necessity

When you’re responsible for revenue forecasts that roll up to the CFO, a point estimate is not an answer. “We expect $X” means nothing without “and here’s the range, and here’s what would make us revise.” I learned Bayesian methods because frequentist confidence intervals weren’t cutting it. A 95% CI that spans a range wider than the entire quarterly target isn’t useful to anyone making a decision. What decision-makers needed was a posterior distribution: “There’s a 75% probability revenue falls between A and B, and here are the three assumptions that, if violated, shift the distribution.”
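A statement like “there’s a 75% probability the value falls between A and B” comes straight out of posterior samples. Here is a minimal sketch using a Beta-Binomial model for a conversion-rate A/B test rather than a revenue forecast, since it needs nothing beyond the standard library. The data, the flat Beta(1, 1) prior, and the helper names are all assumptions made for illustration.

```python
import random

random.seed(7)  # fixed seed so the sketch is reproducible

# Hypothetical A/B data: (conversions, visitors) for each variant.
data = {"A": (120, 1000), "B": (150, 1000)}
DRAWS = 20_000


def posterior_draws(conversions, visitors, draws=DRAWS):
    """Sample the conversion rate from its Beta posterior (flat Beta(1, 1) prior)."""
    alpha = 1 + conversions
    beta = 1 + visitors - conversions
    return [random.betavariate(alpha, beta) for _ in range(draws)]


def credible_interval(samples, mass=0.75):
    """Central interval holding `mass` of the posterior samples."""
    ordered = sorted(samples)
    tail = (1 - mass) / 2
    low = ordered[int(tail * len(ordered))]
    high = ordered[int((1 - tail) * len(ordered)) - 1]
    return low, high


draws_a = posterior_draws(*data["A"])
draws_b = posterior_draws(*data["B"])

# Share of paired draws where B's rate exceeds A's.
prob_b_beats_a = sum(b > a for a, b in zip(draws_a, draws_b)) / DRAWS
low_b, high_b = credible_interval(draws_b)

print(f"P(B > A) = {prob_b_beats_a:.2f}")
print(f"75% credible interval for B: [{low_b:.3f}, {high_b:.3f}]")
```

In practice a tool like PyMC or Stan would replace the closed-form Beta posterior once the model has more structure, but the output a decision-maker sees has the same shape: a probability and an interval, not a single number.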

Bayesian thinking requires a fundamentally different mental model from the frequentist statistics that dominate most curricula. Probability represents degrees of belief, not long-run frequencies. The learning curve is real. But once you cross it, you stop reporting numbers without uncertainty bands, and you start giving people what they actually need to decide.

The market signal

Bayesian methods excel in small-data environments where classical approaches break down: clinical trials with limited participants, early-stage product experiments, and risk modeling with sparse history. They’re also essential for honest uncertainty quantification, the one thing that point-estimate ML models handle worst.

In a world drowning in AI-generated predictions, the scarcest resource isn’t another forecast. It’s a credible explanation of cause and effect, with an honest confidence interval attached.

4. Domain Modeling: The Skill You Can’t Bootcamp

What it is

Translating business context into mathematical structure. Understanding the data-generating process (how the data came to exist), identifying the right loss function (what you actually care about optimizing), and knowing which features are causes versus effects.
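The loss-function point is where business context turns into math most directly. As a rough sketch (the demand history and the 4:1 cost ratio are invented for illustration, and `average_cost` is a hypothetical helper), suppose running out of stock costs four times as much per unit as overstocking. The quantity that minimizes expected cost is then no longer the typical demand:

```python
# Made-up demand history (units per week).
demand_history = [80, 90, 100, 110, 120, 100, 95, 105, 130, 70]


def average_cost(order_qty, under_cost, over_cost):
    """Mean cost of stocking `order_qty` against the demand history."""
    total = 0
    for demand in demand_history:
        shortfall = max(demand - order_qty, 0)
        surplus = max(order_qty - demand, 0)
        total += under_cost * shortfall + over_cost * surplus
    return total / len(demand_history)


candidates = range(min(demand_history), max(demand_history) + 1)

# Symmetric costs: the best quantity sits at the median of past demand.
best_symmetric = min(candidates, key=lambda q: average_cost(q, 1, 1))

# Stockouts cost 4x as much as overstock: the best quantity moves up
# toward the 80th percentile of demand.
best_asymmetric = min(candidates, key=lambda q: average_cost(q, 4, 1))

print(best_symmetric, best_asymmetric)
```

A model trained on squared error would aim at the mean no matter which of these worlds you are in. Knowing the cost ratio is domain knowledge; encoding it in the objective is the modeling step.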

What four industries taught me

I’ve built models in healthcare (processing millions of patient records daily), retail (forecasting item sales across 15+ locations), higher education (student enrollment pipelines), and digital advertising (econometric models for multi-billion-dollar revenue streams). The Python didn’t change. The SQL didn’t change. What changed everything was understanding why a hospital’s readmission rate spiked in February (flu season, not a model failure), why a retailer’s demand forecast collapsed in week 47 (Black Friday cannibalization, not a distribution shift), and why an ad revenue forecast needed to treat a tentpole event as a structural break rather than an outlier.

AI tools can process data. They can’t understand the context that determines whether a pattern is signal or artifact. That understanding comes from sustained exposure to a specific industry and the ability to think in terms of systems rather than datasets.

The market signal

Domain expertise is why a data scientist in healthcare or finance earns 25-40% more than a generalist with the same technical skills. The model isn’t the bottleneck. Understanding what the model should optimize is.

5. Statistical Process Control: Knowing When Something Actually Changed

What it is

Monitoring systems and processes over time to distinguish signal from noise. Control charts, process capability analysis, and root cause investigation. Originally from manufacturing; now applied to ML model monitoring, data pipeline health, and business metric tracking.

A lesson from production ML

I once helped build an object detection pipeline for automated retail inventory tracking. The model hit 95% mAP on the test set. It went to production. Three weeks later, accuracy started drifting and nobody noticed for a month, because there was no process control layer. Once we added control charts tracking detection confidence distributions, inference latency, and feature drift metrics, we could distinguish seasonal shelf rearrangements (noise) from genuine model degradation (signal). The difference: catching a problem in week one versus week five. In inventory management, that gap translates directly to empty shelves and lost revenue.
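A control chart of the kind described here can be very small. The sketch below is an individuals chart with limits set from the average moving range, using the standard 2.66 constant for that chart type. The confidence scores are invented, and `control_limits` is a hypothetical helper, not the monitoring layer we actually built.

```python
from statistics import mean

# Invented daily mean detection-confidence scores.
baseline = [0.90, 0.91, 0.89, 0.90, 0.92, 0.90, 0.89, 0.91, 0.90, 0.91]
new_days = [0.90, 0.89, 0.88, 0.86, 0.84]


def control_limits(values):
    """Individuals-chart limits: center line +/- 2.66 * average moving range."""
    center = mean(values)
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    spread = 2.66 * mean(moving_ranges)
    return center - spread, center, center + spread


lower, center, upper = control_limits(baseline)

# Flag any new day that falls outside the limits learned from the baseline.
out_of_control = [
    day for day, score in enumerate(new_days)
    if score < lower or score > upper
]

print(f"limits: [{lower:.3f}, {upper:.3f}], center {center:.3f}")
print("out-of-control days (0-indexed):", out_of_control)
```

The first three new days sit inside the limits and are treated as ordinary variation; only the last two raise a signal. A fuller setup would add run rules (for example, several consecutive points on one side of the center line) to catch slow drift earlier.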

ML and Statistical Process Control (SPC) are complementary tools, not competing ones. Every production ML system needs SPC. Almost none have it, because the skill lives in industrial engineering departments, not data science programs.

The market signal

Manufacturing companies using SPC alongside ML achieve measurably lower defect rates by catching process anomalies before they cascade. In tech, SPC-based monitoring catches model degradation weeks before accuracy metrics flag a problem.

Why LLMs Can’t Replace This Stack

The obvious objection: won’t AI eventually learn to do causal reasoning too?

Not anytime soon. The reason is structural.

LLMs are correlation engines. They predict the next token based on statistical patterns in training data. They can describe causal inference techniques, but they can’t do causal reasoning, because it requires understanding a data-generating process, intervening on variables, and reasoning about counterfactuals that never appear in any training corpus.

Consider a concrete example. An e-commerce company notices that customers who use their mobile app spend 40% more than desktop users. A predictive model would happily forecast higher revenue if you push more people to download the app. A causal thinker would stop and ask: does the app cause higher spending, or do high-spending customers just prefer apps? The intervention (pushing downloads) only works if the first explanation is true. No language model can resolve this by pattern-matching over text. It requires designing an experiment, collecting new data, and applying a causal framework.
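The app example can be simulated in a few lines. In the sketch below, every number is an assumption chosen for illustration: half of customers are high spenders, high spenders are more likely to install the app, and the app itself has zero causal effect on spending. The observational comparison still shows app users spending far more, while random assignment recovers the true (null) effect.

```python
import random
from statistics import mean

random.seed(42)  # fixed seed so the sketch is reproducible
N = 20_000


def simulate(randomize_app):
    """Return mean spend for app users and for desktop users.

    In this simulated world the app has NO causal effect on spending:
    spend depends only on whether the customer is a high spender.
    """
    app_spend, desktop_spend = [], []
    for _ in range(N):
        high_spender = random.random() < 0.5
        if randomize_app:
            uses_app = random.random() < 0.5  # coin-flip assignment
        else:
            # Self-selection: high spenders are more likely to install the app.
            uses_app = random.random() < (0.8 if high_spender else 0.2)
        spend = (180 if high_spender else 100) + random.gauss(0, 10)
        (app_spend if uses_app else desktop_spend).append(spend)
    return mean(app_spend), mean(desktop_spend)


obs_app, obs_desktop = simulate(randomize_app=False)
exp_app, exp_desktop = simulate(randomize_app=True)

observational_ratio = obs_app / obs_desktop
experimental_ratio = exp_app / exp_desktop

print(f"observed:   app users spend {observational_ratio - 1:+.0%} vs desktop")
print(f"randomized: app users spend {experimental_ratio - 1:+.0%} vs desktop")
```

Both datasets come from the same customers and the same spending rule. The only thing that changes is who decides app usage, and that alone is enough to create or erase the apparent effect.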

This is irreducibly human work. And the five skills above are the toolkit for doing it.

The 90-Day Roadmap

Reading about these skills and building them are two different things. Here’s a concrete plan, organized by what you can start this week versus what takes longer to develop. Every recommendation comes from what I’ve personally used or seen produce results.

None of this requires a GPU cluster. None of it requires a subscription to the latest AI platform. A notebook, some data, and the willingness to slow down and think carefully about what you’re measuring and why.

Where This Is Heading

Three shifts are already visible in the market.

The “AI engineer” role will split. One track becomes infrastructure (MLOps, deployment, scaling), which is software engineering. The other becomes decision science (causal inference, experimentation, strategic analysis), which is what data science was supposed to be before it got distracted by Kaggle leaderboards.

The premium shifts from prediction to prescription. Prediction is commoditizing. AutoML and AI coding assistants can build a decent predictive model in hours. But translating a prediction into a recommendation (“raise prices by 3% for this segment, and here’s why we’re 85% confident it increases margin”) requires causal reasoning, domain expertise, and Bayesian uncertainty quantification. That combination is rare.

Trust becomes the differentiator. As AI-generated analysis floods every organization, the ability to explain why a recommendation is credible (here’s the experiment, here’s the confidence interval, here’s what would change our mind) separates analysis that gets acted on from analysis that gets ignored. Statistical rigor becomes the moat.

Prediction is becoming a commodity. The premium is shifting to prescription: “do X, here’s why, and here’s our confidence level.”

Four hundred billion dollars is chasing a technology whose paying customers can’t explain what they’re getting for their money. The correction will come. It always does.

When it arrives, the people still standing won’t be the ones who learned to prompt a language model. They’ll be the ones who can design an experiment, trace a causal chain, and tell a room full of skeptical executives exactly how confident they should be in a recommendation and exactly what evidence would change their mind.

The bubble is cracking. Beneath it, the ground is solid. Start building on it.

References

- IntuitionLabs. “AI Bubble vs. Dot-com Bubble: A Data-Driven Comparison.” 2025.

- Davenport, Thomas H. and Bean, Randy. “Five Trends in AI and Data Science for 2026.” MIT Sloan Management Review, 2026.

- Gartner. “Generative AI in Trough of Disillusionment.” Procurement Journal, 2025.

- Pragmatic Coders. “We Analyzed 4 Years of Gartner’s AI Hype So You Don’t Make a Bad Investment in 2026.” 2026.

- Acalytica. “Causal AI Disruption Across Industries (2025-2026).” 2025.

- PyMC Labs. “From Uncertainty to Insight: How Bayesian Data Science Can Transform Your Business.” 2024.

- Contentsquare. “6 Real Examples and Case Studies of A/B Testing.” 2025.

- Acerta Analytics. “The Difference Between Machine Learning and SPC, and Why It Matters.” 2024.

- DASCA. “Essential Skills for Data Science Professionals in 2026 and Beyond.” 2025.

- Wikipedia. “AI Bubble.” (Accessed February 2026).