Hany Farid, a professor at UC Berkeley who specializes in digital forensics but wasn’t involved in the Microsoft research, says that if the industry adopted the company’s blueprint, it would be meaningfully harder to deceive the public with manipulated content. Sophisticated individuals or governments can work to circumvent such tools, he says, but the new standard could eliminate a significant portion of misleading material.

“I don’t think it solves the problem, but I think it takes a nice big chunk out of it,” he says.

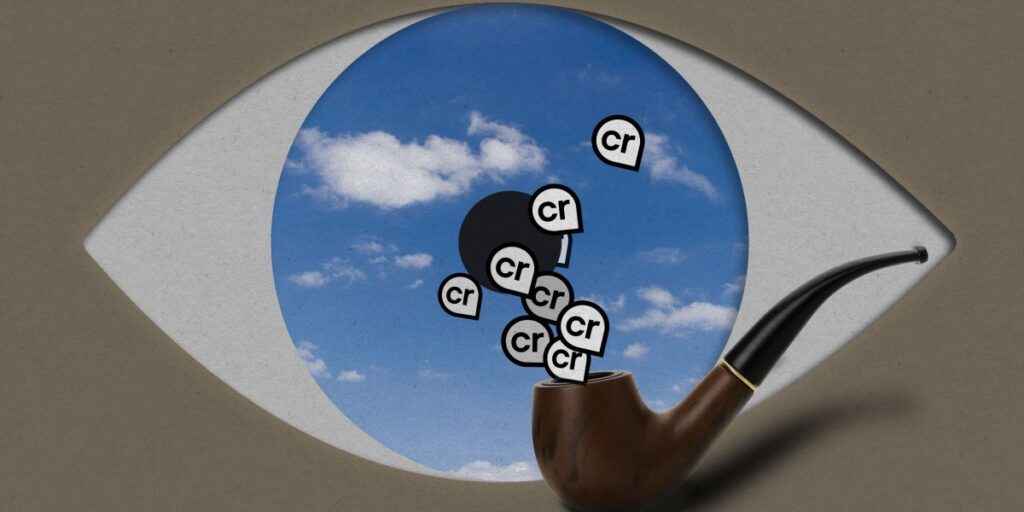

Still, there are reasons to see Microsoft’s approach as an example of somewhat naïve techno-optimism. There is growing evidence that people are swayed by AI-generated content even when they know that it is false. And in a recent study of pro-Russian AI-generated videos about the war in Ukraine, comments pointing out that the videos were made with AI received far less engagement than comments treating them as genuine.

“Are there people who, no matter what you tell them, are going to believe what they believe?” Farid asks. “Yes.” But, he adds, “there are a vast majority of Americans and citizens around the world who I do think want to know the truth.”

That desire has not exactly led to urgent action from tech companies. Google began adding a watermark to content generated by its AI tools in 2023, which Farid says has been helpful in his investigations. Some platforms use C2PA, a provenance standard Microsoft helped launch in 2021. But the full suite of changes that Microsoft suggests, powerful as they are, might remain only suggestions if they threaten the business models of AI companies or social media platforms.