Finding a better way

Every time an Amsterdam resident applies for benefits, a caseworker reviews the application for irregularities. If an application looks suspicious, it can be sent to the city’s investigations department, which could lead to a rejection, a request to correct paperwork errors, or a recommendation that the applicant receive less money. Investigations can also happen later, once benefits have been disbursed; the outcome may force recipients to pay back funds, and even push some into debt.

Officials have broad authority over both applicants and existing welfare recipients. They can request bank records, summon beneficiaries to city hall, and in some cases make unannounced visits to a person’s home. While investigations are carried out, or paperwork errors fixed, much-needed payments may be delayed. And often (in more than half of the investigations of applications, according to figures provided by Bodaar) the city finds no evidence of wrongdoing. In those cases, this can mean that the city has “wrongly harassed people,” Bodaar says.

The Smart Check system was designed to avoid these scenarios by eventually replacing the initial caseworker who flags which cases to send to the investigations department. The algorithm would screen applications to identify those most likely to involve major errors, based on certain personal characteristics, and redirect those cases for further scrutiny by the enforcement team.

If all went well, the city wrote in its internal documentation, the system would improve on the performance of its human caseworkers, flagging fewer welfare applicants for investigation while identifying a greater proportion of cases with errors. In one document, the city projected that the model would prevent up to 125 individual Amsterdammers from facing debt collection and save €2.4 million annually.
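The city’s goal depends on two numbers: how many applicants get flagged, and what share of those flagged cases actually contain errors (the hit rate, or precision). The minimal Python sketch that follows assumes each application has a risk score and a known outcome. The helper `flag_stats`, the thresholds, and the six toy applications are hypothetical. They illustrate only the arithmetic and do not reflect the city’s figures or its actual selection rule.

```python
from typing import NamedTuple, Sequence


class FlagStats(NamedTuple):
    flagged: int     # applications sent for investigation
    hits: int        # flagged applications that really contained errors
    hit_rate: float  # hits / flagged (precision); 0.0 if nothing was flagged


def flag_stats(scores: Sequence[float], has_error: Sequence[bool],
               threshold: float) -> FlagStats:
    """Flag every application whose risk score meets the threshold.

    Hypothetical helper illustrating the trade-off in the text, not the
    city's real procedure.
    """
    flagged = [err for s, err in zip(scores, has_error) if s >= threshold]
    hits = sum(flagged)  # True counts as 1
    rate = hits / len(flagged) if flagged else 0.0
    return FlagStats(len(flagged), hits, rate)


# Invented data: six applications, two of which contain errors.
scores = [0.9, 0.8, 0.6, 0.4, 0.3, 0.1]
has_error = [True, False, True, False, False, False]

loose = flag_stats(scores, has_error, threshold=0.3)   # flags 5, catches 2
strict = flag_stats(scores, has_error, threshold=0.6)  # flags 3, catches 2
print(loose)
print(strict)
```

In this toy case, the stricter threshold flags fewer people while a larger share of the flagged cases contain errors. That is the combination the city hoped for. Whether a real model achieves it depends on how well its scores separate the two groups.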

Smart Check was an exciting prospect for city officials like de Koning, who would manage the project once it was deployed. He was optimistic because the city was taking a scientific approach, he says; it would “see if it was going to work” instead of taking the attitude that “this must work, and no matter what, we will continue this.”

It was the kind of bold idea that attracted optimistic techies like Loek Berkers, a data scientist who worked on Smart Check in only his second job out of college. Speaking in a restaurant tucked behind Amsterdam’s city hall, Berkers remembers being impressed at his first contact with the system: “Especially for a project within the municipality,” he says, it “was very much a kind of innovative project that was trying something new.”

Smart Check made use of an algorithm called an “explainable boosting machine,” which lets people more easily understand how an AI model produces its predictions. Most other machine-learning models are often regarded as “black boxes” running abstract mathematical processes that are hard to understand, both for the employees tasked with using them and for the people affected by the results.
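Roughly speaking, an explainable boosting machine is an additive model. It learns a separate lookup-table “shape function” for each input, and the score is an intercept plus the sum of those per-feature contributions, passed through a logistic function to give a probability. Because the score is a plain sum, each feature’s share of it can be read off directly, and that is what makes the model explainable. The following minimal Python sketch illustrates only that scoring step. It is not the city’s code. The feature names, bin edges, and values are hypothetical. It also omits the pairwise interaction terms that real EBM implementations (such as InterpretML’s) can include.

```python
import bisect
import math
from dataclasses import dataclass


@dataclass
class ShapeFunction:
    """Piecewise-constant contribution of one feature, stored as a lookup table.

    `edges` are sorted bin boundaries; `values` has len(edges) + 1 entries,
    one per bin, each a contribution on the log-odds scale.
    """
    edges: list[float]
    values: list[float]

    def __call__(self, x: float) -> float:
        # bisect_right returns the index of the bin that x falls into.
        return self.values[bisect.bisect_right(self.edges, x)]


def explain(intercept: float, shapes: dict[str, ShapeFunction],
            applicant: dict[str, float]) -> tuple[float, dict[str, float]]:
    """Return the risk probability and each feature's additive contribution."""
    contributions = {name: f(applicant[name]) for name, f in shapes.items()}
    log_odds = intercept + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))  # logistic link
    return probability, contributions


# Hypothetical shape functions for three of the kinds of inputs the
# article mentions; every number here is invented for illustration.
shapes = {
    "prior_applications": ShapeFunction([0.5, 2.5], [-0.3, 0.1, 0.4]),
    "assets_eur": ShapeFunction([1000.0, 10000.0], [-0.1, 0.0, 0.5]),
    "num_addresses": ShapeFunction([1.5, 3.5], [-0.2, 0.2, 0.6]),
}

prob, parts = explain(-1.5, shapes, {"prior_applications": 1,
                                     "assets_eur": 500.0,
                                     "num_addresses": 2})
print(round(prob, 3), parts)
```

For this made-up applicant, the three contributions (0.1, -0.1, and 0.2 on the log-odds scale) can be shown next to the final score. A caseworker could then see which inputs pushed the score up or down.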

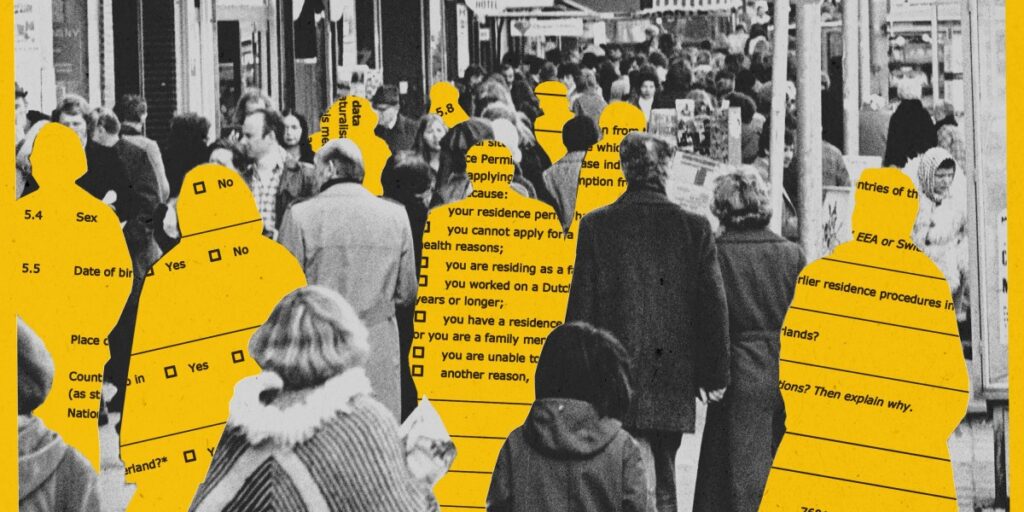

The Smart Check model would consider 15 characteristics, including whether applicants had previously applied for or received benefits, the sum of their assets, and the number of addresses they had on file, to assign a risk score to each person. It purposefully avoided demographic factors such as gender, nationality, or age, which were thought to lead to bias. It also tried to avoid “proxy” factors, like postal codes, that may not look sensitive on the surface but can become so if, for example, a postal code is statistically associated with a particular ethnic group.
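One simple way to screen a candidate input for proxy effects is to check how unevenly a protected group is spread across that input’s values. If the group’s share differs sharply from one postal code to the next, the postal code carries information about group membership, even though group membership itself is never an input. The minimal Python sketch that follows assumes audit records that already carry a group label. The helper names, the “gap” measure, the zones, and the data are all hypothetical. Real audits typically use more formal association statistics.

```python
from collections import Counter
from typing import Any, Mapping, Sequence


def group_share_by_value(records: Sequence[Mapping[str, Any]],
                         feature: str, group: str) -> dict[Any, float]:
    """For each value of `feature`, the fraction of records in `group`."""
    totals: Counter = Counter()
    in_group: Counter = Counter()
    for r in records:
        totals[r[feature]] += 1
        in_group[r[feature]] += bool(r[group])
    return {value: in_group[value] / totals[value] for value in totals}


def proxy_gap(records: Sequence[Mapping[str, Any]],
              feature: str, group: str) -> float:
    """Largest difference between any value's group share and the overall share.

    0.0 means the group is spread evenly across the feature's values;
    larger numbers mean the feature reveals more about group membership.
    """
    overall = sum(bool(r[group]) for r in records) / len(records)
    shares = group_share_by_value(records, feature, group)
    return max(abs(share - overall) for share in shares.values())


# Invented audit data: two hypothetical zones, ten residents.
records = (
    [{"zone": "A", "in_group": True}] * 4
    + [{"zone": "A", "in_group": False}] * 1
    + [{"zone": "B", "in_group": True}] * 1
    + [{"zone": "B", "in_group": False}] * 4
)

print(group_share_by_value(records, "zone", "in_group"))  # {'A': 0.8, 'B': 0.2}
print(proxy_gap(records, "zone", "in_group"))
```

A gap near zero suggests the feature says little about group membership. A large gap, roughly 0.3 in this toy data where one zone is 80% in-group and the other 20%, is the kind of signal that would mark a feature like postal code as a likely proxy.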

In an unusual step, the city has disclosed this information and shared multiple versions of the Smart Check model with us, effectively inviting outside scrutiny of the system’s design and function. With this data, we were able to build a hypothetical welfare recipient to get insight into how an individual applicant would be evaluated by Smart Check.

This model was trained on a data set encompassing 3,400 previous investigations of welfare recipients. The idea was that it would use the outcomes of these investigations, carried out by city employees, to figure out which factors in the initial applications were correlated with potential fraud.
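Training on past investigations means treating each one as a labeled example. The example consists of the inputs from the original application plus whether the investigation found a problem. The simplified Python sketch that follows shows how an additive, EBM-style model could learn its per-feature lookup tables from such outcomes. It cycles through the features and nudges each one’s table a little at a time. It is a toy built on stated assumptions: features are pre-binned integers, each update is a plain gradient step on the logistic loss, and there are no interaction terms or bagging. The function name and data are hypothetical and do not come from Amsterdam’s pipeline.

```python
import math
from typing import Sequence


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def fit_additive(X: Sequence[Sequence[int]], y: Sequence[int],
                 n_bins: Sequence[int], rounds: int = 200,
                 learning_rate: float = 0.1) -> tuple[float, list[list[float]]]:
    """Fit an intercept plus one lookup table per feature by cyclic boosting.

    X[i][j] is the bin index of feature j for past case i; y[i] is 1 if that
    investigation found an error, else 0 (both classes must be present).
    Each round visits the features one at a time and moves every bin's value
    by the mean residual of the cases in that bin, i.e. a one-leaf-per-bin
    step along the negative gradient of the logistic loss.
    """
    n, d = len(X), len(n_bins)
    base_rate = sum(y) / n
    intercept = math.log(base_rate / (1 - base_rate))  # log-odds of base rate
    tables = [[0.0] * n_bins[j] for j in range(d)]
    for _ in range(rounds):
        for j in range(d):  # cycle through features one at a time
            sums = [0.0] * n_bins[j]
            counts = [0] * n_bins[j]
            for xi, yi in zip(X, y):
                z = intercept + sum(tables[k][xi[k]] for k in range(d))
                sums[xi[j]] += yi - sigmoid(z)  # residual: outcome minus prediction
                counts[xi[j]] += 1
            for b in range(n_bins[j]):
                if counts[b]:
                    tables[j][b] += learning_rate * sums[b] / counts[b]
    return intercept, tables


# Invented data: eight past cases, two binned features each.
X = [[0, 0], [0, 1], [0, 0], [0, 1], [1, 0], [1, 1], [1, 0], [1, 1]]
y = [0, 0, 0, 1, 1, 1, 1, 0]
intercept, tables = fit_additive(X, y, n_bins=[2, 2])
print(intercept, tables)
```

On this toy data, the first feature’s table ends up negative for bin 0 and positive for bin 1, mirroring the 25% and 75% error rates in those bins. The second feature, whose bins both have a 50% error rate and so carry no signal on their own, stays at zero.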