MIT researchers have spent more than a decade studying techniques that enable robots to find and manipulate hidden objects by “seeing” through obstacles. Their methods use surface-penetrating wireless signals that reflect off concealed items.

Now, the researchers are leveraging generative artificial intelligence models to overcome a longstanding bottleneck that limited the precision of prior approaches. The result is a new method that produces more accurate shape reconstructions, which could improve a robot’s ability to reliably grasp and manipulate objects that are blocked from view.

This new technique builds a partial reconstruction of a hidden object from reflected wireless signals and fills in the missing parts of its shape using a specially trained generative AI model.

The researchers also introduced an expanded system that uses generative AI to accurately reconstruct an entire room, including all the furniture. The system uses wireless signals sent from one stationary radar, which reflect off humans moving in the space.

This overcomes one key challenge of many existing methods, which require a wireless sensor to be mounted on a mobile robot to scan the environment. And unlike some popular camera-based methods, their method preserves the privacy of people in the environment.

These innovations could enable warehouse robots to verify packed items before shipping, eliminating waste from product returns. They could also allow smart home robots to understand someone’s location in a room, improving the safety and efficiency of human-robot interaction.

“What we’ve done now is develop generative AI models that help us understand wireless reflections. This opens up a lot of interesting new applications, but technically it is also a qualitative leap in capabilities, from being able to fill in gaps we weren’t able to see before to being able to interpret reflections and reconstruct entire scenes,” says Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science, director of the Signal Kinetics group in the MIT Media Lab, and senior author of two papers on these techniques. “We’re using AI to finally unlock wireless vision.”

Adib is joined on the first paper by lead author and research assistant Laura Dodds, as well as research assistants Maisy Lam, Waleed Akbar, and Yibo Cheng; and on the second paper by lead author and former postdoc Kaichen Zhou, Dodds, and research assistant Sayed Saad Afzal. Both papers will be presented at the IEEE Conference on Computer Vision and Pattern Recognition.

Surmounting specularity

The Adib Group previously demonstrated the use of millimeter wave (mmWave) signals to create accurate reconstructions of 3D objects that are hidden from view, like a lost wallet buried under a pile.

These waves, which are the same type of signals used in Wi-Fi, can pass through common obstructions like drywall, plastic, and cardboard, and reflect off hidden objects.

But mmWaves usually reflect in a specular manner, which means a wave reflects in a single direction after striking a surface. So large portions of the surface will reflect signals away from the mmWave sensor, making those areas effectively invisible.
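
To make the geometry concrete, here is a minimal sketch of specular (mirror-like) reflection using the standard formula r = d - 2(d·n)n. It is an illustration only, not the researchers' code: the function names and the `max_angle_deg` tolerance (a stand-in for how wide a cone the sensor can receive from) are assumptions.

```python
import math


def reflect(direction, normal):
    """Mirror an incoming unit direction about a unit surface normal: r = d - 2(d.n)n."""
    dot = sum(d * n for d, n in zip(direction, normal))
    return tuple(d - 2.0 * dot * n for d, n in zip(direction, normal))


def returns_to_sensor(sensor, point, normal, max_angle_deg=10.0):
    """Return True if the specular bounce at `point` heads back toward `sensor`.

    `max_angle_deg` is a hypothetical tolerance, not a measured sensor property.
    """
    to_point = tuple(p - s for p, s in zip(point, sensor))
    length = math.sqrt(sum(c * c for c in to_point))
    incoming = tuple(c / length for c in to_point)
    outgoing = reflect(incoming, normal)
    # The direction from the surface point back to the sensor is -incoming.
    cos_angle = -sum(o * i for o, i in zip(outgoing, incoming))
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle)) <= max_angle_deg


sensor = (0.0, 0.0, 1.0)
surface_point = (0.0, 0.0, 0.0)
facing_up = (0.0, 0.0, 1.0)                             # surface faces the sensor
tilted = (math.sqrt(0.5), 0.0, math.sqrt(0.5))          # surface tilted by 45 degrees

top_visible = returns_to_sensor(sensor, surface_point, facing_up)
tilted_visible = returns_to_sensor(sensor, surface_point, tilted)
```

Under these assumptions, the patch facing the sensor sends its reflection straight back, while the tilted patch deflects the wave sideways and never registers.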

“When we want to reconstruct an object, we’re only able to see the top surface and we can’t see any of the bottom or sides,” Dodds explains.

The researchers previously used principles from physics to interpret reflected signals, but this limits the accuracy of the reconstructed 3D shape.

In the new papers, they overcame that limitation by using a generative AI model to fill in parts that are missing from a partial reconstruction.

“But the challenge then becomes: How do you train these models to fill in these gaps?” Adib says.

Usually, researchers use extremely large datasets to train a generative AI model, which is one reason models like Claude and Llama exhibit such impressive performance. But no mmWave datasets are large enough for training.

Instead, the researchers adapted the images in large computer vision datasets to mimic the properties of mmWave reflections.

“We were simulating the property of specularity and the noise we get from these reflections so we can apply existing datasets to our domain. It would have taken years for us to collect enough new data to do this,” Lam says.

The researchers embed the physics of mmWave reflections directly into these adapted data, creating a synthetic dataset they use to teach a generative AI model to perform plausible shape reconstructions.
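
One way to picture this kind of data adaptation is sketched below: take a complete 3D shape, keep only the surface points whose normals face a single sensor (a crude proxy for specular visibility), and jitter them with Gaussian noise. This is a hedged illustration, not the authors' pipeline. The function name `simulate_partial_view`, the angular threshold, and the noise level are all assumed values.

```python
import math
import random


def simulate_partial_view(points, normals, sensor, max_angle_deg=15.0,
                          noise_std=0.005, seed=0):
    """Return a noisy subset of `points` that a single co-located sensor could plausibly see.

    A point is kept only if its unit surface normal points back at the sensor
    within `max_angle_deg`. All parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    cos_limit = math.cos(math.radians(max_angle_deg))
    visible = []
    for point, normal in zip(points, normals):
        to_sensor = [s - p for s, p in zip(sensor, point)]
        dist = math.sqrt(sum(c * c for c in to_sensor))
        cos_angle = sum(n * c / dist for n, c in zip(normal, to_sensor))
        if cos_angle >= cos_limit:
            # Add measurement noise to each coordinate of the surviving point.
            visible.append(tuple(p + rng.gauss(0.0, noise_std) for p in point))
    return visible


# Three sample points on a small box: top, side, and bottom faces.
box_points = [(0.0, 0.0, 0.1), (0.05, 0.0, 0.05), (0.0, 0.0, 0.0)]
box_normals = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.0, 0.0, -1.0)]
partial = simulate_partial_view(box_points, box_normals, sensor=(0.0, 0.0, 1.0))
```

With the sensor directly overhead, only the top-face point survives, which mirrors the "top surface only" limitation described earlier. Pairs of full shapes and partial views like this could then serve as training examples for a shape-completion model.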

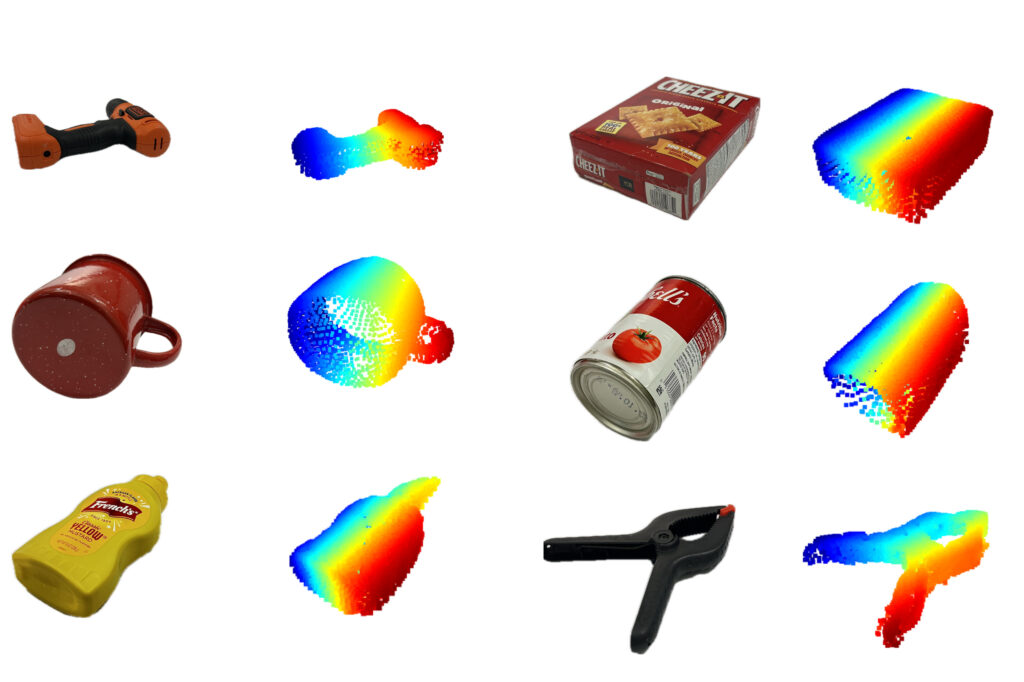

The complete system, called Wave-Former, proposes a set of potential object surfaces based on mmWave reflections, feeds them to the generative AI model to complete the shape, and then refines the surfaces until it achieves a full reconstruction.

Wave-Former was able to generate faithful reconstructions of about 70 everyday objects, such as cans, boxes, utensils, and fruit, boosting accuracy by nearly 20 percent over state-of-the-art baselines. The objects were hidden behind or under cardboard, wood, drywall, plastic, and fabric.

Seeing “ghosts”

The team used this same approach to build an expanded system that fully reconstructs entire indoor scenes by leveraging mmWave reflections off humans moving in a room.

Human motion generates multipath reflections. Some mmWaves reflect off the human, then reflect again off a wall or object, and then arrive back at the sensor, Dodds explains.

These secondary reflections create so-called “ghost signals,” which are reflected copies of the original signal that change location as a human moves. These ghost signals are usually discarded as noise, but they also hold information about the layout of the room.
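
A simplified way to see why ghosts carry layout information is sketched below. It assumes an idealized flat wall that acts as a perfect mirror and a ghost that appears exactly at the person's mirror image; real multipath is messier, and this is not the method used in the papers. Both helper names are hypothetical.

```python
import math


def mirror_across_wall(point, wall_point, wall_normal):
    """Reflect `point` across the plane through `wall_point` with unit `wall_normal`."""
    offset = sum((p - w) * n for p, w, n in zip(point, wall_point, wall_normal))
    return tuple(p - 2.0 * offset * n for p, n in zip(point, wall_normal))


def estimate_wall(person, ghost):
    """Recover the mirror plane from one person/ghost pair.

    The plane is the perpendicular bisector of the segment joining them:
    it passes through the midpoint, with its normal along the segment.
    """
    diff = [g - p for g, p in zip(ghost, person)]
    length = math.sqrt(sum(c * c for c in diff))
    normal = tuple(c / length for c in diff)
    midpoint = tuple((g + p) / 2.0 for g, p in zip(ghost, person))
    return midpoint, normal


# Assumed scene: a wall at x = 3 m, and a person standing at (1, 2, 0).
wall_point, wall_normal = (3.0, 0.0, 0.0), (1.0, 0.0, 0.0)
person = (1.0, 2.0, 0.0)
ghost = mirror_across_wall(person, wall_point, wall_normal)
wall_estimate = estimate_wall(person, ghost)
```

In this toy model, each person and ghost pair pins down the wall plane, and tracking the pair as the person walks gives repeated estimates that could be averaged. That is the intuition behind treating ghosts as information about the room instead of noise.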

“By analyzing how these reflections change over time, we can start to get a coarse understanding of the environment around us. But trying to directly interpret these signals is going to be limited in accuracy and resolution,” Dodds says.

They used a similar training method to teach a generative AI model to interpret these coarse scene reconstructions and understand the behavior of multipath mmWave reflections. This model fills in the gaps, refining the initial reconstruction until it completes the scene.

They tested their scene reconstruction system, called RISE, using more than 100 human trajectories captured by a single mmWave radar. On average, RISE generated reconstructions that were about twice as precise as those of existing methods.

In the future, the researchers want to improve the granularity and detail of their reconstructions. They also want to build large foundation models for wireless signals, like the foundation models GPT, Claude, and Gemini for language and vision, which could open new applications.

This work is supported, in part, by the National Science Foundation (NSF), the MIT Media Lab, and Amazon.