In this article, I discuss how you can fine-tune VLMs (visual large language models, often called vLLMs) like Qwen 2.5 VL 7B. I’ll introduce you to a dataset of handwritten digits, which the base version of Qwen 2.5 VL struggles with. We will then inspect the dataset, annotate it, and use it to create a fine-tuned Qwen 2.5 VL, specialized in extracting handwritten text.

Overview

The main goal of this article is to fine-tune a VLM on a dataset, an important machine-learning technique in today’s world, where language models are changing the way data scientists and ML engineers work. I will be discussing the following topics:

- Motivation and Goal: Why use VLMs for text extraction

- Advantages of VLMs

- The dataset

- Annotation and fine-tuning

- SFT technical details

- Results and plots

Note: This article is written as part of the work done at Findable. We do not profit financially from this work. It is done to highlight the technical capabilities of modern vision-language models and to digitize and share a valuable handwritten phenology dataset, which may have a significant impact on climate research. Furthermore, the topic of this article was covered in a presentation during the Data & Draft event by Netlight.

You can view all the code used for this article in our GitHub repository, and all data is available on HuggingFace. If you’re specifically interested in the extracted phenology data from Norway, including geographical coordinates corresponding to the data, the information is directly available in this Excel sheet.

Motivation and Goal

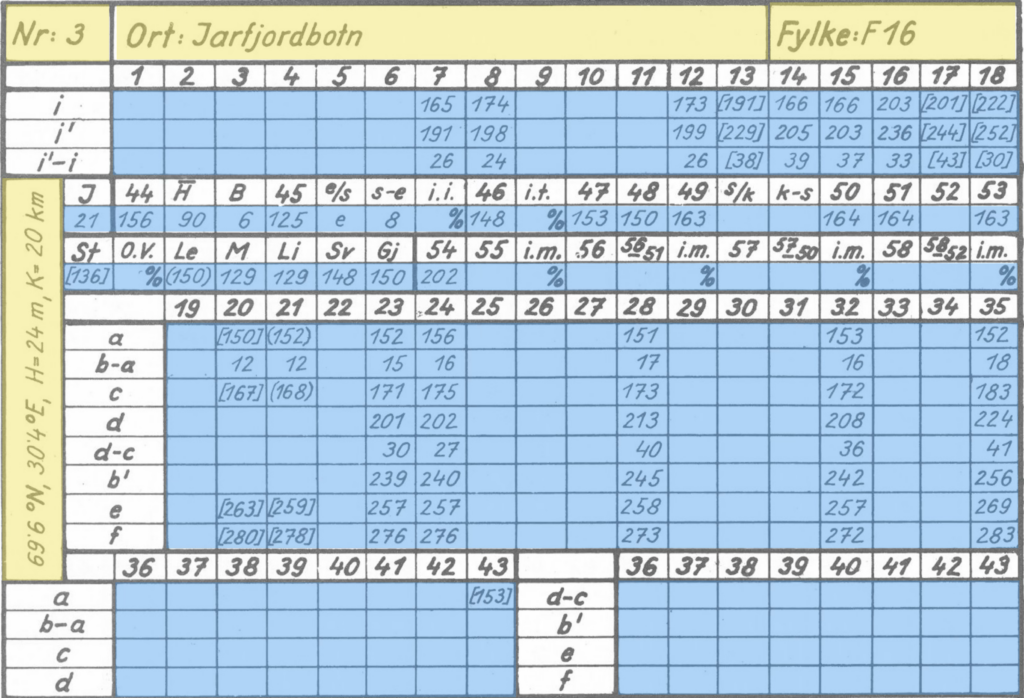

The aim of this text is to indicate you how one can fine-tune a VLM corresponding to Qwen for optimized efficiency on a selected activity. The duty we’re engaged on right here is extracting handwritten textual content from a collection of photographs. The work on this article relies on a Norwegian phenology dataset, which you’ll learn extra about in the README in this GitHub repository. The principle level is that the data contained in these photographs is very helpful and may, for instance, be used to conduct local weather analysis. There may be additionally definitive scientific curiosity on this subject, for instance, this article on analysing long-term changes in when plants flower, or the Eastern Pennsylvania Phenology Project.

Notice that the information extracted is introduced in good religion, and I don’t make any claims as to what the information implies. The principle aim of this text is to indicate you how one can extract this knowledge and current you with the extracted knowledge, for use for scientific analysis.

The consequence mannequin I make on this article can be utilized to extract the textual content from all photographs. This knowledge can then be transformed to tables, and you may plot the data as you see within the picture under:

If you’re solely interested by viewing the information extracted on this examine, you possibly can view it in this parquet file.

Why do we need to use VLMs

When looking at the images, you may think we should apply traditional OCR to this problem. OCR is the science of extracting text from images, and in previous years, it has been dominated by engines like Tesseract, DocTR, and EasyOCR.

However, these models are often outperformed by modern large language models, particularly the ones incorporating vision (typically referred to as VLMs or VLLMs). The image below highlights why you want to use a VLM instead of traditional OCR engines. The first column shows example images from our dataset, and the two other columns compare EasyOCR with the fine-tuned Qwen model we will train in this article.

This highlights the main reason to use a VLM over a traditional OCR engine to extract text from images: VLMs often outperform traditional OCR engines on this task.

Advantages of VLMs

There are several advantages to using VLMs when extracting text from images. In the last section, you saw how the output quality from the VLM exceeds the output quality of a traditional OCR engine. Another advantage is that you can provide instructions to VLMs on how you want them to behave, which traditional OCR engines cannot accept.

The two main advantages of VLMs are thus:

- VLMs excel at OCR (particularly handwriting)

- You can provide instructions

VLMs are good at OCR because it is part of the training process for these models. This is, for example, mentioned in the Qwen 2.5 VL Technical report, section 2.2.1 Pre-Training Data, where they list an OCR dataset as part of their pre-training data.

Handwriting

Extracting handwritten text has been notoriously difficult in the past and is still a challenge today. The reason for this is that handwriting is non-standardized.

With non-standardized, I refer to the fact that the characters will look vastly different from person to person. As an example of a standardized character, if you write a character on a computer, it will consistently look very similar across different computers and people writing it. For instance, the computer character “a” looks very similar no matter which computer it is written on. This makes it simpler for an OCR engine to pick up the character, since the characters it extracts from images most likely look quite similar to the characters it encountered in its training set.

Handwritten text, however, is the opposite. Handwriting varies widely from person to person, which is why you sometimes struggle with reading other people’s handwriting. OCR engines have this exact problem too. If characters vary widely, there is a lower chance that the engine has encountered a specific character variation in its training set, which makes extracting the correct character from an image more difficult.

You can, for example, look at the image below. Imagine only looking at the ones in the image (so mask over the 7). Looking at the image now, the “1” looks quite similar to a “7”. You are, of course, able to separate the two characters because you can see them in context, and think critically that if a seven looks like it does (with a horizontal line), the first two characters in the image must be ones.

Traditional OCR engines, however, do not have this ability. They do not look at the entire image, think critically about one character’s appearance, and use that to determine other characters. They must simply guess which character it is when looking at the isolated digit.

How to separate the digit “1” from “7” ties nicely into the next section, about providing instructions to the VLMs when extracting text.

I’d also like to add that some OCR engines, such as TrOCR, are made to extract handwritten text. From experience, however, such models are not comparable in performance to state-of-the-art VLMs such as Qwen 2.5 VL.

Providing instructions

Another significant advantage of using VLMs for extracting text is that you can provide instructions to the model. This is naturally impossible with traditional OCR engines, since they extract all the text in the image. They can only take an image as input, not separate text instructions for how to extract the text from the image. When we want to extract text using Qwen 2.5 VL, we provide a system prompt, such as the one below.

SYSTEM_PROMPT = """
Below is an instruction that describes a task, write a response that appropriately completes the request.

You are an expert at reading handwritten table entries. I will give you a snippet of a table and you will
read the text in the snippet and return the text as a string.

The texts can consist of the following:
1) A number only, the number can have from 1 to 3 digits.
2) A number surrounded by ordinary parenthesis.
3) A number surrounded by square brackets.
5) The letter 'e', 's' or 'k'
6) The percent sign '%'
7) No text at all (blank image).

Instructions:

**General Rules**:
- Return the text as a string.
- If the snippet contains no text, return: "unknown".
- In order to separate the digit 1 from the digit 7, know that the digit 7 always will have a horizontal stroke appearing in the middle of the digit.
If there is no such horizontal stroke, the digit is a 1 even if it might look like a 7.
- Beware that the text will often be surrounded by a black border, do not confuse this with the text. In particular
it is easy to confuse the digit 1 with parts of the border. Borders should be ignored.
- Ignore anything OUTSIDE the border.
- Do not use any code formatting, backticks, or markdown in your response. Just output the raw text.
- Respond **ONLY** with the string. Do not provide explanations or reasoning.
"""

The system prompt sets the outline for how Qwen should extract the text, which gives Qwen a major advantage over traditional OCR engines.

There are mainly two points that give it an advantage:

- We can tell Qwen which characters to expect in the image

- We can tell Qwen what characters look like (particularly important for handwritten text)

You can see point one addressed in the points 1) -> 7), where we inform it that it can only see 1–3 digits, which digits and letters it can see, and so on. This is a significant advantage, since Qwen is aware that if it detects characters outside this range, it is most likely misinterpreting the image or facing a particularly challenging sample. It can therefore better predict which character is in the image.
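The same constraint can also be checked after the fact. The sketch below is not part of the original pipeline; it is a minimal illustration, assuming the allowed outputs are exactly those listed in the system prompt (with `e`, `s`, `k` as the assumed letter set and `unknown` as the blank sentinel). The function name `is_plausible_prediction` is hypothetical.

```python
import re

# Allowed outputs, mirroring points 1) to 7) of the system prompt.
# Assumption: bracketed and parenthesized numbers also have 1 to 3 digits.
ALLOWED_OUTPUT = re.compile(
    r"""
      \d{1,3}        # 1) a number with 1 to 3 digits
    | \(\d{1,3}\)    # 2) a number in ordinary parentheses
    | \[\d{1,3}\]    # 3) a number in square brackets
    | [esk]          # 5) a single letter (assumed letter set)
    | %              # 6) the percent sign
    | unknown        # 7) sentinel returned for a blank image
    """,
    re.VERBOSE,
)


def is_plausible_prediction(text: str) -> bool:
    """Return True if a model output fits one of the expected patterns."""
    return ALLOWED_OUTPUT.fullmatch(text.strip()) is not None


if __name__ == "__main__":
    for candidate in ["12", "(7)", "[123]", "1234", "(12"]:
        print(candidate, is_plausible_prediction(candidate))
```

Predictions that fail such a check are good candidates for manual review, since they fall outside what the dataset should contain.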

The second point is particularly relevant for the problem I mentioned earlier of separating “1” from “7,” which look quite similar. Luckily for us, the author of this dataset was consistent in how he wrote 1s and 7s. The 1s were always written diagonally, and 7s always included the horizontal stroke, which clearly separates the “7” from a “1,” at least from a human perspective of looking at the image.

However, providing such detailed prompts and specifications to the model is only possible once you really understand the dataset you are working on and its challenges. This is why you always have to spend time manually inspecting the data when working on a machine-learning problem such as this. In the next section, I’ll discuss the dataset we are working on.
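To make the role of the system prompt concrete, here is a minimal sketch of how a system prompt and a single image could be packed into a chat-style message list. The message layout follows the common role/content convention used for Qwen-style vision chat templates, but treat it as an assumption and check it against the processor you use. The helper `build_messages`, the user instruction text, and the file name are hypothetical.

```python
from typing import Any


def build_messages(system_prompt: str, image_path: str) -> list[dict[str, Any]]:
    """Pack a system prompt and one image into a chat-style message list."""
    return [
        # The system message carries the extraction rules.
        {"role": "system", "content": [{"type": "text", "text": system_prompt}]},
        # The user message carries the image plus a short request.
        {
            "role": "user",
            "content": [
                {"type": "image", "image": image_path},
                {"type": "text", "text": "Read the text in this snippet."},
            ],
        },
    ]


# Placeholder prompt and path, for illustration only.
messages = build_messages(
    "You are an expert at reading handwritten table entries.",
    "cell_0001.png",
)
print(messages[1]["content"][0])
```

Keeping the rules in the system message means the same instructions apply to every cell image, while only the user message changes per sample.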

The dataset

I start this section with a quote from Greg Brockman (President of OpenAI as of writing this article), highlighting an important point. In his tweet, he refers to the fact that data annotation and inspection are not prestigious work, but nonetheless, it is one of the most important tasks you can spend time on when working on a machine-learning project.

At Findable, I started as a data annotator and proceeded to manage the labeling team before taking my current role as a data scientist. The work with annotation highlighted the importance of manually inspecting and understanding the data you are working on, and taught me how to do it effectively. Greg Brockman is referring to the fact that this work is not prestigious, which is often correct, since data inspection and annotation can be monotonous. However, you should always spend considerable time inspecting your dataset when working on a machine-learning problem. This time will provide you with insights that you can, for example, use to write the detailed system prompt I highlighted in the last section.

The dataset we are working on consists of around 82000 images, such as the ones you see below. The cells vary in width from 81 to 93 pixels and in height from 48 to 57 pixels, meaning we are working on very small images.

When starting this project, I first spent time looking at the different images to understand the variations in the dataset. I, for example, noticed:

- The “1”s look similar to the “7”s

- There is some faint text in some of the images (for example, the “8” in the bottom left image above, and the “6” in the bottom right image)

- From a human perspective, all the images are very readable, so we should be able to extract all the text correctly

I then continued by using the base version of Qwen 2.5 VL 7B to predict on some of the images and see which areas the model struggles with. I immediately noticed that the model (unsurprisingly) had problems separating “1”s from “7”s.

After this process of first manually inspecting the data, then predicting a bit with the model to see where it struggles, I noted down the following data challenges:

- “1” and “7” look similar

- Dots in the background on some images

- Cell borders can be misinterpreted as characters

- Parentheses and brackets can sometimes be confused

- The text is faint in some images

We have to solve these challenges when fine-tuning the model to extract the text from the images, which I discuss in the next section.

Annotation and fine-tuning

After properly inspecting your dataset, it is time to work on annotation and fine-tuning. Annotation is the process of assigning a label to each image, and fine-tuning is using these labels to improve the quality of your model.

The main goal when doing the annotation is to create a dataset efficiently. This means quickly producing a lot of labels and ensuring the quality of the labels is high. To achieve this goal of rapidly creating a high-quality dataset, I divided the process into three main steps:

- Predict

- Review & correct model errors

- Retrain

You should note that this process works well when you have a model that is already quite good at performing the task. In this problem, for example, Qwen is already quite good at extracting the text from the images, and only makes mistakes in 5–10% of the cases. If you have a completely new task for the model, this process will not work as well.

Step 1: Predict

The first step is to predict (extract the text) from a few hundred images using the base model. The specific number of images you predict on does not really matter, but you should try to strike a balance between gathering enough labels for a training run to improve the model noticeably (step 3) and the overhead required to train a model.

Step 2: Review & correct model errors

After you have predicted on a few hundred samples, it is time to review and correct the model errors. You should set up your environment so you can easily display the images and labels and fix the errors. In the image below, you can see my setup for reviewing and correcting errors. On the left side, I have a Jupyter notebook where I can run the cell to display the next five samples and the corresponding line to which each label belongs. On the right side, all my labels are listed on the corresponding lines. To review and correct errors, I run the Jupyter notebook cell, make sure the labels on the right match the images on the left, and then rerun the cell to get the next five images. I repeat this process until I have looked through all the samples.
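The five-at-a-time review loop can be sketched in a few lines. This is not the notebook code from the project, only a minimal illustration of the batching idea; the helper `batches`, the file names, and the sample labels are hypothetical.

```python
from itertools import islice
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")


def batches(items: Iterable[T], size: int = 5) -> Iterator[list[T]]:
    """Yield consecutive batches of at most `size` items."""
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return
        yield batch


# Hypothetical predictions: (image file, predicted label).
predictions = [(f"cell_{i:04d}.png", "unknown") for i in range(12)]

# Enumerate from 1 so the printed number matches the line in the label file.
numbered = list(enumerate(predictions, start=1))
for batch in batches(numbered, size=5):
    for line_number, (image_file, label) in batch:
        print(f"line {line_number}: {image_file} -> {label}")
    print("---")
```

In a notebook, each batch would typically be rendered as images in one cell run, with the printed line numbers pointing at the rows to fix in the label file.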

Step 3: Retrain

Now that you have a few hundred correct samples, it is time to train the model. In my case, I take Qwen 2.5 VL 7B and tune it on my current set of labels. I fine-tune using the Unsloth package, which provides this notebook on fine-tuning Qwen (the notebook is for Qwen 2 VL, but all the code is the same, except for the naming, as you see in the code below). You can check out the next section to learn more details about the fine-tuning process.

The training creates a fine-tuned version of the model, and I go back to step 1 to predict on a few hundred new samples. I repeat this cycle of predicting, correcting, and training until I notice that model performance converges.

from unsloth import FastVisionModel

# this is the original code in the notebook
model, tokenizer = FastVisionModel.from_pretrained(
    "unsloth/Qwen2-VL-7B-Instruct",
    load_in_4bit = False, # this is originally set to True, but if you have the processing power, I recommend setting it to False
    use_gradient_checkpointing = "unsloth",
)

# to train Qwen 2.5 VL (instead of Qwen 2 VL), make sure you use this instead:
model, tokenizer = FastVisionModel.from_pretrained(
    "unsloth/Qwen2.5-VL-7B-Instruct",
    load_in_4bit = False,
    use_gradient_checkpointing = "unsloth",
)

To determine how well my model is performing, I also create a test set on which I test each fine-tuned model. I never train on this test set, to ensure unbiased results. This test set is how I can determine whether the model’s performance is converging.
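One way to turn "performance is converging" into a concrete stopping rule is sketched below. This is an assumption on my part rather than the rule used in the project: the helper `has_converged`, its thresholds, and the accuracy values are all illustrative, not measured results.

```python
def has_converged(
    accuracies: list[float],
    min_gain: float = 0.005,
    patience: int = 2,
) -> bool:
    """Return True when the last `patience` rounds gained less than `min_gain`.

    `accuracies` holds the test-set accuracy after each predict/correct/retrain
    round, in order.
    """
    if len(accuracies) <= patience:
        return False  # not enough rounds to judge
    best_before = max(accuracies[:-patience])
    best_recent = max(accuracies[-patience:])
    return best_recent - best_before < min_gain


# Illustrative values only.
history = [0.93, 0.96, 0.975, 0.976, 0.977]
print(has_converged(history))
```

Because the test set is never trained on, the same set can be reused after every round to fill in `accuracies`.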

SFT technical details

SFT stands for supervised fine-tuning, which is the process of updating the model’s weights to perform better on the dataset we provide. The problem we are working on here is quite interesting, as the base Qwen 2.5 VL model is already quite good at OCR. This differs from many other tasks we apply VLMs to at Findable, where we typically teach the VLM a completely new task with which it essentially has no prior experience.

When fine-tuning a VLM such as Qwen on a new task, model performance rapidly increases once you start training it. However, the task we are working on here is quite different, since we only want to nudge Qwen to be a little bit better at reading the handwriting in our particular images. As I mentioned, the base model is already around 90–95% accurate on this dataset (depending on the specific images we test on).

This requirement of only nudging the model makes the model super sensitive to the tuning process parameters. To ensure we nudge the model properly, we do the following:

- Set a low learning rate, to only slightly update the weights

- Set a low LoRA rank to only update a small set of the model weights

- Ensure all labels are correct (the model is super sensitive to just a few annotation errors)

- Balance the dataset (there are a lot of blank images, we filter out some of them)

- Tune all layers of the VLM

- Carry out a hyperparameter search

I’ll add some additional notes on some of the points:

Label correctness

Label correctness is of utmost importance. Just a few labeling errors can have a detrimental effect on model performance. For example, when I was working on fine-tuning my model, I noticed the model started confusing parentheses “( )” with brackets “[ ]”. This is, of course, a significant error, so I started looking into why this occurred. My first intuition was that this was due to issues with some of my labels (i.e., some images that were in fact parentheses had received a label with brackets). I started looking into my labels and noticed this error in around 0.5% of them.

This helped me make an interesting observation, however. I had a set of around 1000 labels. 99.5% of the labels were correct, while 0.5% (5 labels!) were incorrect. However, after fine-tuning my model on this set, it actually performed worse on the test set. This highlights that just a few incorrect labels can hurt your model’s performance.

The reason so few errors can have such a large impact is that the model blindly trusts the labels you give it. The model does not look at the image and think “Hmmm, why is this a bracket when the image has a parenthesis?” (like you might do). The model blindly trusts the labels and accepts it as a fact that this image (which is a parenthesis) contains a bracket. This really degrades model performance, as you are giving it incorrect information, which it now uses when making future predictions.

Data balancing

Another detail of the fine-tuning is that I balance the dataset to limit the number of blank images. Around 70% of the cells are blank, and I want to avoid spending too much of the fine-tuning on these images (the model already manages to ignore these cells really well). Thus, I ensure that at most 30% of the data we fine-tune on consists of blank images.
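The 30% cap can be sketched as a small downsampling step. This is a minimal illustration, not the project's code: the helper `cap_blank_fraction`, the `(image, label)` tuple layout, and the use of `"unknown"` as the blank label are assumptions. With n non-blank samples and b blank ones, requiring b / (n + b) ≤ f gives b ≤ f · n / (1 − f).

```python
import random


def cap_blank_fraction(
    samples: list[tuple[str, str]],
    max_blank_fraction: float = 0.3,
    blank_label: str = "unknown",
    seed: int = 0,
) -> list[tuple[str, str]]:
    """Downsample blank samples so they make up at most `max_blank_fraction`."""
    non_blank = [s for s in samples if s[1] != blank_label]
    blank = [s for s in samples if s[1] == blank_label]

    # b / (n + b) <= f  is equivalent to  b <= f * n / (1 - f); round down.
    max_blank = int(max_blank_fraction * len(non_blank) / (1 - max_blank_fraction))

    rng = random.Random(seed)
    kept_blank = rng.sample(blank, min(max_blank, len(blank)))

    balanced = non_blank + kept_blank
    rng.shuffle(balanced)
    return balanced


# Illustrative data: 70 blank cells and 30 cells with text.
data = [(f"blank_{i}.png", "unknown") for i in range(70)]
data += [(f"text_{i}.png", str(i)) for i in range(30)]
balanced = cap_blank_fraction(data)
blank_share = sum(label == "unknown" for _, label in balanced) / len(balanced)
print(len(balanced), round(blank_share, 3))
```

All non-blank samples are kept, so the cap only removes examples the model already handles well.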

Selecting layers to tune

The image below shows the general architecture of a VLM:

A consideration to make when fine-tuning VLMs is which layers you fine-tune. Ideally, you want to tune all the layers (marked in green in the image below), which I also did when working on this problem. However, sometimes you will have compute constraints, which makes tuning all layers difficult, and you might not need to tune all layers. An example of this could be if you have a very image-dependent task. At Findable, for example, we classify drawings from architects, civil engineers, and so on. This is naturally a very vision-dependent task, and it is an example of a case where you can potentially get away with only tuning the vision layers of the model (the ViT, or Vision Transformer, and the Vision-Language adapter, sometimes referred to as a projector).
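As a rough sketch of how this choice might be expressed in code, the helper below builds a set of on/off flags for the two cases discussed: tuning everything, or tuning only the vision side. The flag names mirror the keyword arguments I believe Unsloth's `FastVisionModel.get_peft_model` accepts; treat them as an assumption and verify them against your installed version. The helper `layer_selection` is hypothetical, and whether the vision flag also covers the projector is not something this sketch settles.

```python
def layer_selection(vision_only: bool = False) -> dict[str, bool]:
    """Build layer-selection flags for LoRA fine-tuning of a VLM.

    vision_only=False tunes all parts of the model (the choice made in this
    article); vision_only=True leaves the language layers frozen.
    """
    return {
        "finetune_vision_layers": True,
        "finetune_language_layers": not vision_only,
        "finetune_attention_modules": True,
        "finetune_mlp_modules": True,
    }


print(layer_selection())                  # tune everything
print(layer_selection(vision_only=True))  # vision-heavy tasks, less compute

# Intended use, left as a comment because it needs a GPU and a loaded model.
# The rank value is illustrative only:
# model = FastVisionModel.get_peft_model(model, r=8, **layer_selection())
```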

Hyperparameter search

I also did a hyperparameter search to find the optimal set of parameters to fine-tune the model. It is worth noting, however, that a hyperparameter search will not always be possible. Some training processes for large language models can take several days, and in such scenarios, performing an extensive hyperparameter search is not feasible, so you will have to rely on your intuition to find a good set of parameters.

However, for this problem of extracting handwritten text, I had access to an A100 80 GB GPU. The images are quite small (less than 100px in each direction), and I am working with the 7B model. This made the training take 10–20 minutes, which makes an overnight hyperparameter search feasible.
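The feasibility argument is simple arithmetic, sketched below for a small grid. The candidate learning rates and LoRA ranks are illustrative assumptions, not the values searched in this project; only the 10–20 minute run time comes from the text above.

```python
from itertools import product

# Hypothetical search space: low learning rates and low LoRA ranks.
learning_rates = [1e-5, 2e-5, 5e-5]
lora_ranks = [4, 8, 16]

# Every combination of learning rate and rank is one training run.
grid = [
    {"learning_rate": lr, "lora_rank": rank}
    for lr, rank in product(learning_rates, lora_ranks)
]

# Use the upper end of the 10-20 minute range as a conservative estimate.
minutes_per_run = 20
total_hours = len(grid) * minutes_per_run / 60

print(f"{len(grid)} runs, about {total_hours:.1f} hours in the worst case")
```

Even a grid several times this size fits into a single night at these run times, which is why an exhaustive search is practical here and not for multi-day training jobs.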

Results and plots

After repeating the cycle of training the model, creating more labels, retraining, and so on, I have created a high-performing fine-tuned model. Now it is time to see the final results. I have made four test sets, each consisting of 278 samples. I run EasyOCR, the base Qwen 2.5 VL 7B model (Qwen base model), and the fine-tuned model on the data, and you can see the results in the table below:

Thus, the results clearly show that the fine-tuning has worked as expected, vastly improving model performance.
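For readers who want to run a similar comparison, a per-test-set score can be computed as below. The metric is an assumption: this sketch uses exact string match between prediction and label, which suits short cell contents, but the article does not state which metric was used. The helper name and the sample values are illustrative.

```python
def exact_match_accuracy(predictions: list[str], labels: list[str]) -> float:
    """Share of predictions that equal their label exactly (ignoring outer whitespace)."""
    if len(predictions) != len(labels):
        raise ValueError("predictions and labels must have the same length")
    if not labels:
        raise ValueError("cannot compute accuracy on an empty test set")
    correct = sum(p.strip() == l.strip() for p, l in zip(predictions, labels))
    return correct / len(labels)


# Illustrative values only, not results from the article.
labels = ["1", "(1)", "unknown", "e"]
predictions = ["1", "(7)", "unknown", "e"]
print(exact_match_accuracy(predictions, labels))
```

Running the same function on the outputs of each engine, for each test set, gives directly comparable numbers.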

To end off, I’d also like to share some plots you can make with the data.

If you want to investigate the data further, it is all contained in this parquet file on HuggingFace.

Conclusion

In this article, I have introduced you to a phenology dataset, consisting of small images with handwritten text. The problem I have addressed is how to extract the handwritten text from these images effectively. First, we inspected the dataset to understand what it looks like, the variance in the data, and the challenges the vision language model faces when extracting the text from the images. I then discussed the three-step pipeline you can use to create a labelled dataset and fine-tune a model to improve performance. Lastly, I highlighted some results, showing how the fine-tuned Qwen works better than the base Qwen model, and I also showed some plots representing the data we extracted.

The work in this article was performed by Eivind Kjosbakken and Lars Aurdal.