Imagine you have an x-ray report and need to understand what injuries you have. One option is to go to a doctor, which ideally you should, but if for some reason you can’t, you can use Multimodal Large Language Models (MLLMs), which will process your x-ray scan and tell you what injuries you have according to the scans.

In simple terms, MLLMs are a fusion of multiple models for text, image, voice, video, and so on. They can process not only a normal text query but also questions posed in other forms, such as images and sound.

So in this article, we’ll walk you through what MLLMs are, how they work, and which top MLLMs you can use.

What are Multimodal LLMs?

Unlike traditional LLMs, which can only work with one type of data (mostly text or image), multimodal LLMs can work with multiple forms of data, similar to how humans can process vision, voice, and text all at once.

At its core, multimodal AI takes in various forms of data, such as text, images, audio, video, and even sensor data, to provide a richer and more sophisticated understanding and interaction. Consider an AI system that not only views an image but can describe it, understand the context, answer questions about it, and even generate related content based on multiple input types.

Now, let’s take the same x-ray report example and look at how a multimodal LLM would understand it. Here’s a simple animation explaining how it first processes the image through the image encoder to convert the image into vectors, and then uses an LLM trained on medical data to answer the query.

Source: Google multimodal medical AI

How do Multimodal LLMs work?

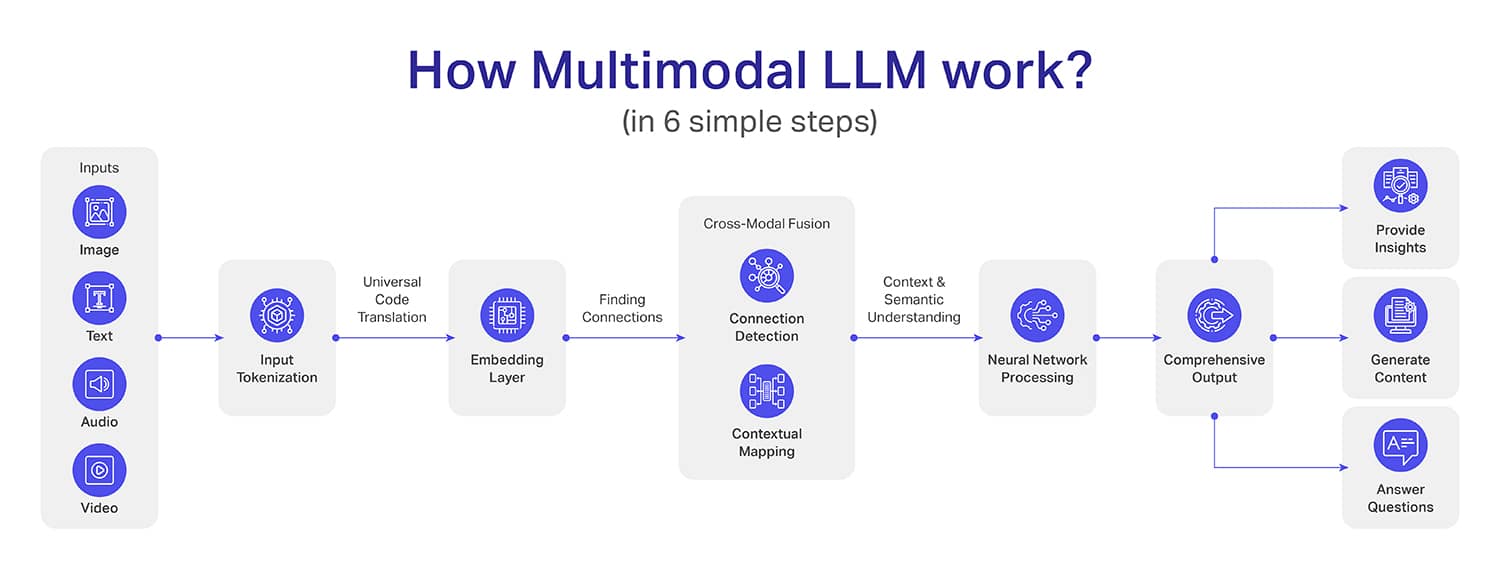

While the inner workings of multimodal LLMs are quite complex (more so than text-only LLMs), we have tried breaking them down into six simple steps:

Step 1: Input Collection – This is the first step, where the data is collected and undergoes initial processing. For example, images are read as pixel arrays and are typically processed using convolutional neural network (CNN) architectures.

Text inputs are converted into tokens using algorithms like Byte Pair Encoding (BPE) or tools like SentencePiece. Audio signals, on the other hand, are converted into spectrograms or mel-frequency cepstral coefficients (MFCCs). Video data, meanwhile, is broken down into individual frames in sequential order.
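To make the text side of this step concrete, here is a minimal sketch of how Byte Pair Encoding learns its merges: it repeatedly finds the most frequent pair of adjacent symbols and fuses it into one token. The function names (`most_frequent_pair`, `merge_pair`, `learn_bpe`) and the toy corpus are assumptions for illustration, not the tokenizer of any particular MLLM.

```python
from collections import Counter


def most_frequent_pair(words):
    """Return the most common adjacent symbol pair, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for left, right in zip(symbols, symbols[1:]):
            pairs[(left, right)] += freq
    return max(pairs, key=pairs.get) if pairs else None


def merge_pair(words, pair):
    """Replace every occurrence of `pair` with one merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged


def learn_bpe(corpus, num_merges):
    """Learn up to `num_merges` merges from a whitespace-separated toy corpus."""
    # Each word starts as a tuple of single characters.
    words = dict(Counter(tuple(word) for word in corpus.split()))
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        words = merge_pair(words, pair)
        merges.append(pair)
    return merges, words


merges, words = learn_bpe("ab ab ab ac", num_merges=1)
print(merges)  # the learned merge rules, in order
print(words)   # the corpus re-expressed with merged symbols
```

Production tokenizers add byte-level fallbacks, special tokens, and much larger corpora, but the merge loop above is the core idea.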

Step 2: Tokenization – The idea behind tokenization is to convert the data into a standard form so that the machine can understand its context. For example, natural language processing (NLP) techniques are used to convert text into tokens.

For image tokenization, the system uses pre-trained models such as convolutional neural networks (for example, ResNet) or Vision Transformer (ViT) architectures. Audio signals are converted into tokens using signal processing techniques, so that audio waveforms become compact and meaningful representations.
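As an illustration of image tokenization, the sketch below cuts an image into fixed-size patches and flattens each one, which is the first thing a ViT-style model does before embedding. It assumes a tiny grayscale image stored as a list of rows; `image_to_patches` is a hypothetical helper, and real pipelines work on multi-channel tensors.

```python
def image_to_patches(image, patch_size):
    """Split a 2D grayscale image (list of rows) into flattened square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    # Walk the image in row-major order, one patch at a time.
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# A 4x4 toy "image" whose pixel values are just 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = image_to_patches(image, patch_size=2)
print(len(patches))  # number of image tokens
print(patches[0])    # pixels of the top-left patch
```

Each flattened patch then plays the same role for the image that a text token plays for a sentence.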

Step 3: Embedding Layer – In this step, the tokens obtained in the previous step are converted into dense vectors in such a way that the vectors capture the context of the data. The thing to note here is that each modality produces its own vectors, which are cross-compatible with those of the other modalities.
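One way to picture "cross-compatible" vectors is that every modality is mapped into a space of the same width. The sketch below is an assumption-laden toy: text token ids are looked up in an embedding table, image patches go through a linear projection, and both come out with `SHARED_DIM` numbers. The weights are random rather than trained, and all names here are hypothetical.

```python
import random

SHARED_DIM = 8   # width of the shared embedding space (arbitrary toy value)
VOCAB_SIZE = 100  # size of the toy text vocabulary
PATCH_PIXELS = 4  # pixels per flattened image patch


def make_matrix(rows, cols, rng):
    """Build a rows x cols matrix of small random weights (untrained)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def project(vector, weights):
    """Multiply a length-n vector by an n x d weight matrix."""
    return [sum(v * w for v, w in zip(vector, column)) for column in zip(*weights)]


rng = random.Random(0)
text_table = make_matrix(VOCAB_SIZE, SHARED_DIM, rng)          # one row per token id
image_projection = make_matrix(PATCH_PIXELS, SHARED_DIM, rng)  # pixels -> shared space


def embed_text(token_ids):
    """Look up one dense vector per text token."""
    return [text_table[token_id] for token_id in token_ids]


def embed_patches(patches):
    """Project each flattened image patch into the shared space."""
    return [project(patch, image_projection) for patch in patches]


text_vectors = embed_text([3, 7, 42])
image_vectors = embed_patches([[0, 1, 4, 5], [2, 3, 6, 7]])
print(len(text_vectors[0]), len(image_vectors[0]))  # both equal SHARED_DIM
```

Because both lists contain vectors of the same width, later layers can treat text and image tokens as one sequence.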

Step 4: Cross-Modal Fusion – Up to this point, the models understood the data only at the individual model level, but that changes from the fourth step onward. In cross-modal fusion, the system learns to connect the dots between multiple modalities to build deeper contextual relationships.

A good example is one where an image of a beach, a textual description of a vacation at the beach, and audio clips of waves, wind, and a cheerful crowd interact. This way, the multimodal LLM not only understands the inputs but also puts everything together as one single experience.
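A common mechanism for this kind of fusion is cross-attention, where a vector from one modality weighs and combines vectors from the others. The sketch below is a minimal, single-query version of scaled dot-product attention; the article does not say which fusion method any given MLLM uses, so treat this as one plausible instance. The toy vectors standing in for the beach text, image, and audio are made up.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(score - peak) for score in scores]
    total = sum(exps)
    return [value / total for value in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross_attend(query, keys, values):
    """Scaled dot-product attention of one query over key/value vectors."""
    scale = math.sqrt(len(query))
    weights = softmax([dot(query, key) / scale for key in keys])
    fused = [
        sum(weight * value[i] for weight, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return fused, weights


# Hypothetical embeddings: the text query "beach" attends over other modalities.
text_query = [1.0, 0.0]
other_modalities = [
    [0.9, 0.1],  # image patch showing sand and sea
    [0.8, 0.2],  # audio clip of waves
    [0.0, 1.0],  # unrelated audio clip
]
fused, weights = cross_attend(text_query, other_modalities, other_modalities)
print(weights)  # higher weights for the beach-related vectors
print(fused)    # one vector blending information across modalities
```

In a real model the queries, keys, and values come from learned projections, and many attention heads run in parallel across many layers.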

Step 5: Neural Network Processing – This is the step where the information gathered from cross-modal fusion (the previous step) gets converted into meaningful insights. The model now uses deep learning to analyze the intricate connections that were found during cross-modal fusion.

Picture a case where you combine x-ray reports, patient notes, and symptom descriptions. With neural network processing, the model will not only list facts but will also build a holistic understanding that can identify potential health risks and suggest possible diagnoses.

Step 6: Output Generation – This is the final step, where the MLLM crafts a precise output for you. Unlike traditional models, which are often context-limited, an MLLM’s output will have depth and contextual understanding.

Also, the output can take more than one format, such as a dataset, a visual representation of a scenario, or even an audio or video rendering of a specific event.


What are the Applications of Multimodal Large Language Models?

Even though MLLM is a recently coined term, there are hundreds of applications where you will find remarkable improvements over traditional methods, all thanks to MLLMs. Here are some important applications of MLLMs: