DataRobot and Nebius have partnered to introduce AI Factory for Enterprises, a joint solution designed to accelerate the development, operation, and governance of AI agents. This platform allows agents to reach production in days, rather than months.

AI Factory for Enterprises provides a scalable, cost-effective, governed, and managed enterprise-grade platform for agents. It achieves this by combining DataRobot’s Agent Workforce Platform, the most comprehensive, flexible, secure, and enterprise-ready agent lifecycle management platform, with Nebius’ purpose-built cloud infrastructure for AI.

Our partnership

Nebius: The purpose-built cloud for AI

The challenge today is that general-purpose cloud platforms often introduce unpredictable performance, latency, and a “virtualization tax” that cripples continuous, production-scale AI. To solve this, DataRobot is leveraging Nebius AI Cloud, a GPU cloud platform engineered from the hardware layer up specifically to deliver the bare-metal performance, low latency, and predictable throughput essential for sustained AI training and inference. This eliminates the “noisy-neighbor” problem and ensures your most demanding agent workloads run reliably, delivering predictable results and transparent costs. Nebius’ Token Factory augments the offering by providing a pay-per-token model access layer for key open-source models. Customers can use these models during agent building and experimentation, and then deploy the same models with DataRobot when running the agents in production.

DataRobot: Seamlessly build, operate, and govern agents at scale

DataRobot’s Agent Workforce Platform is the most comprehensive Agent Lifecycle Management platform, enabling customers to build, operate, and govern their agents seamlessly.

The platform offers two primary components:

- An enterprise-grade, scalable, reliable, and cost-effective runtime for models and agents, featuring out-of-the-box governance and monitoring.

- An easy-to-use agent builder environment that enables customers to seamlessly build production-ready agents in hours, rather than days or months.

Comprehensive enterprise-grade runtime capabilities

- Scalable, cost-effective runtime: Features single-click deployment of 50+ NIMs and Hugging Face models with autoscaling, or deployment of any containerized artifact via the Workload API (both with built-in monitoring and governance), optimized utilization through endpoint-level multi-tenancy (token quota), and high-availability inferencing. You can deploy containerized agents, applications, or other composite systems built from a combination of, say, LLMs, domain-specific libraries such as PhysicsNeMo and cuOpt, or your own proprietary models, with a single command using the Workload API.

- Governance and monitoring: Offers the industry’s most comprehensive out-of-the-box metrics (behavioral and operational), tracing capabilities for agent execution paths, full lineage/versioning with audit logging, and industry-leading governance against Security, Operational, and Compliance Risks with real-time intervention and automated reporting.

- Security and identity: Includes Unified Identity and Access Management with OAuth 2.0, granular RBAC for least-privilege access across resources, and secure secret management with an encrypted vault.

Comprehensive enterprise-grade agent building capabilities

- Builder tools: Support for popular frameworks (LangChain, CrewAI, LlamaIndex, NVIDIA NeMo Agent Toolkit) and out-of-the-box support for MCP, authentication, managed RAG, and data connectors. Nebius Token Factory integration enables on-demand model use during the build.

- Evaluation & tracing: Industry-leading evaluation with LLM as a Judge, Human-in-the-Loop, Playground/API, and agent tracing. Offers comprehensive behavioral (e.g., task adherence) and operational (latency, cost) metrics, plus custom metric support.

- Out-of-the-box production readiness: Enterprise hooks abstract away infrastructure, security, authentication, and data complexity. Agents deploy with a single command; DataRobot handles component deployment with embedded monitoring and governance at both the full agent and individual component/tool levels.

Want to take agents you have built elsewhere, or even open source industry-specific models, LLMs, and so on, and deploy them in a scalable, secure, and governed manner using the AI Factory? Or would you like to build agents without worrying about the heavy lifting of making them production ready? This section will show you how to do both.

Table of contents

1. DataRobot STS on Nebius

2. Governed, Monitored Model Inference Deployment

a. Out-of-the-box observability and governance for deployed models

3. Agent deployment using the Workload API

a. Out-of-the-box observability and governance for deployed agentic workloads

4. Enterprise-Ready Agent Building Capabilities

a. A comprehensive toolkit for every builder

b. Evaluation capabilities: the “how-to”

c. Enterprise hooks: Deployment-ready from day one

1. DataRobot STS on Nebius

DataRobot Single-Tenant SaaS (STS) is deployed on Nebius Managed Kubernetes and can be backed by GPU-enabled node groups, high-performance networking, and storage options appropriate for AI workloads. For DataRobot deployments, Nebius is a high-performance, low-cost environment for agent workloads. Dedicated NVIDIA clusters (H100, H200, B200, B300, GB200 NVL72, GB300 NVL72) enable efficient tensor parallelism and KV-cache-heavy serving patterns, while InfiniBand RDMA supports high-throughput cross-node scaling. The DataRobot/Nebius partnership provides a robust AI infrastructure:

- Managed Kubernetes with GPU-aware scheduling simplifies STS installation and upgrades, pre-configured with NVIDIA operators.

- Dedicated GPU worker pools (H100, B200, etc.) isolate demanding STS services (LLM inference, vector databases) from generic CPU-only workloads.

- High-throughput networking and storage support large model artifacts, embeddings, and telemetry for continuous evaluation and logging.

- Security and tenancy are maintained: STS uses dedicated tenant boundaries, while Nebius IAM and network policies meet enterprise requirements.

- Built-in node health monitoring proactively identifies and addresses GPU/network issues for stable clusters and smarter maintenance.

2. Governed, monitored model inference deployment

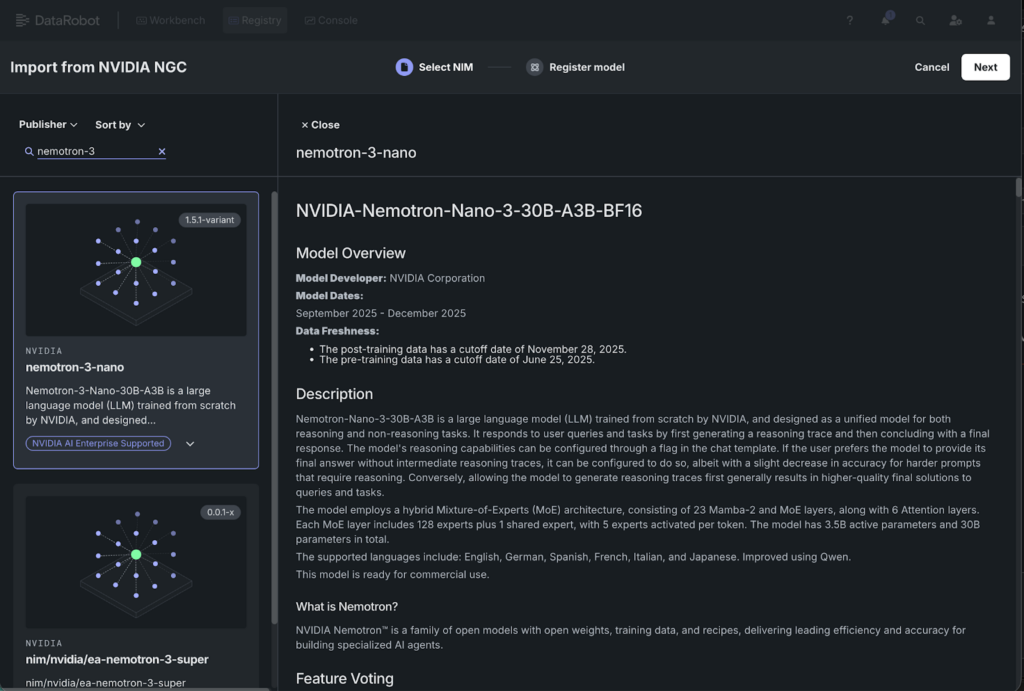

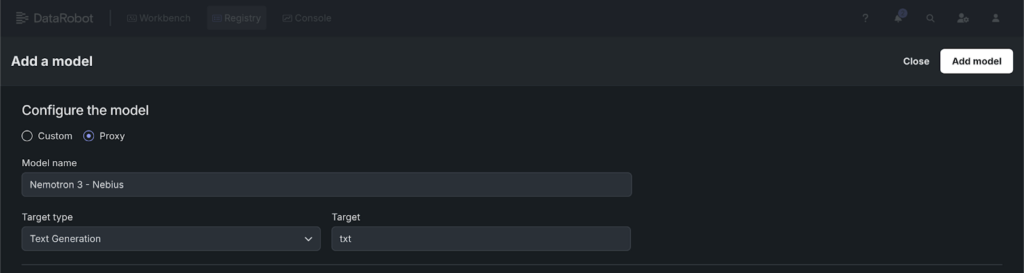

The challenge with GenAI isn’t getting a model running; it’s getting it running with the same monitoring, governance, and security your organization expects. DataRobot’s NVIDIA NIM integration deploys NIM containers from NGC onto Nebius GPUs in four clicks:

- In Registry > Models, click Import from NVIDIA NGC and browse the NIM gallery.

- Select the model, review the NGC model card, and choose a performance profile.

- Review the GPU resource bundle automatically recommended based on the NIM’s requirements.

- Click Deploy, select the Serverless environment, and deploy the model.

Out-of-the-box observability and governance for deployed models

- Automated monitoring & risk assessment: Leverage the NeMo Evaluator integration for model faithfulness, groundedness, and relevance scoring. Automatically scan for Bias, PII, and Prompt Injection risks.

- Real-time moderation & deep observability: DataRobot offers a platform for NIM moderation and monitoring. Deploy out-of-the-box guards for risks like PII, Prompt Injection, Toxicity, and Content Safety. OTel-compliant monitoring provides visibility into NIM operational health, quality, safety, and resource use.

- Enterprise governance & compliance: DataRobot provides the administrative layer for safe, organization-wide scaling. It automatically compiles monitoring and evaluation data into compliance documentation, mapping performance to regulatory standards for audits and reporting.

3. Agent deployment using the Workload API

An MCP tool server, a LangGraph agent, a FastAPI backend, or a composite system built from a combination of, say, LLMs and domain-specific libraries like cuOpt and PhysicsNeMo: these are containers, not models, and they need their own path to production. The Workload API gives you a governed endpoint with autoscaling, monitoring, and RBAC in a single API call.

    curl -X POST "${DATAROBOT_API_ENDPOINT}/workloads/" \
      -H "Authorization: Bearer ${DATAROBOT_API_TOKEN}" \
      -H "Content-Type: application/json" \
      -d '{
        "name": "agent-service",
        "importance": "HIGH",
        "artifact": {
          "name": "agent-service-v1",
          "status": "locked",
          "spec": {
            "containerGroups": [{
              "containers": [{
                "imageUri": "your-registry/agent-service:latest",
                "port": 8080,
                "primary": true,
                "entrypoint": ["python", "server.py"],
                "resourceRequest": {"cpu": 1, "memory": 536870912},
                "environmentVars": [],
                "readinessProbe": {"path": "/readyz", "port": 8080}
              }]
            }]
          }
        },
        "runtime": {
          "replicaCount": 2,
          "autoscaling": {
            "enabled": true,
            "policies": [{
              "scalingMetric": "inferenceQueueDepth",
              "target": 70,
              "minCount": 1,
              "maxCount": 5
            }]
          }
        }
      }'

The agent is immediately available at /endpoints/workloads/{id}/ with monitoring, RBAC, audit trails, and autoscaling.
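The same request body can also be assembled programmatically. The sketch below is a minimal illustration using only the Python standard library: it rebuilds the JSON body of the request above, expresses the memory request in MiB, and checks that the replica count sits inside the autoscaling bounds before anything is sent. The helper name `build_workload_payload`, the `MIB` constant, and the consistency check are assumptions made for this example and are not part of the DataRobot API; only the field names and values follow the request above.

```python
import json

MIB = 1024 ** 2  # bytes per mebibyte


def build_workload_payload(name, image_uri, memory_mib=512, replicas=2,
                           min_count=1, max_count=5):
    """Assemble the Workload API request body (hypothetical helper)."""
    # Assumed sanity check: the starting replica count should be within the scaling range.
    if not min_count <= replicas <= max_count:
        raise ValueError("replicas must lie between min_count and max_count")
    container = {
        "imageUri": image_uri,
        "port": 8080,
        "primary": True,
        "entrypoint": ["python", "server.py"],
        "resourceRequest": {"cpu": 1, "memory": memory_mib * MIB},
        "environmentVars": [],
        "readinessProbe": {"path": "/readyz", "port": 8080},
    }
    return {
        "name": name,
        "importance": "HIGH",
        "artifact": {
            "name": f"{name}-v1",
            "status": "locked",
            "spec": {"containerGroups": [{"containers": [container]}]},
        },
        "runtime": {
            "replicaCount": replicas,
            "autoscaling": {
                "enabled": True,
                "policies": [{
                    "scalingMetric": "inferenceQueueDepth",
                    "target": 70,
                    "minCount": min_count,
                    "maxCount": max_count,
                }],
            },
        },
    }


payload = build_workload_payload("agent-service", "your-registry/agent-service:latest")
body = json.dumps(payload, indent=2)  # string suitable for curl -d or an HTTP client
```

With the defaults, the memory request works out to 512 MiB, which is the 536870912 bytes used in the curl example.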

Out-of-the-box observability and governance for deployed agentic workloads

DataRobot drives the AI Factory by providing robust governance and observability for agentic workloads:

- Observability (OTel Standard): DataRobot standardizes on OpenTelemetry (OTel) logs, metrics, and traces to ensure consistent, high-fidelity telemetry for all deployed entities. This telemetry integrates with existing enterprise observability stacks, allowing users to monitor critical dimensions, including:

  - Agent-specific metrics, such as Agent Task Adherence and Agent Task Accuracy.

  - Operational health and resource utilization.

- Tracing and Logging: OTel-compliant tracing interweaves container-level logs with execution spans to simplify root cause analysis within complex logic loops.

- Governance and Access Control: DataRobot enforces enterprise-wide authentication and authorization protocols across deployed agents using OAuth-based access control combined with Role-Based Access Control (RBAC).

4. Enterprise-ready agent building capabilities

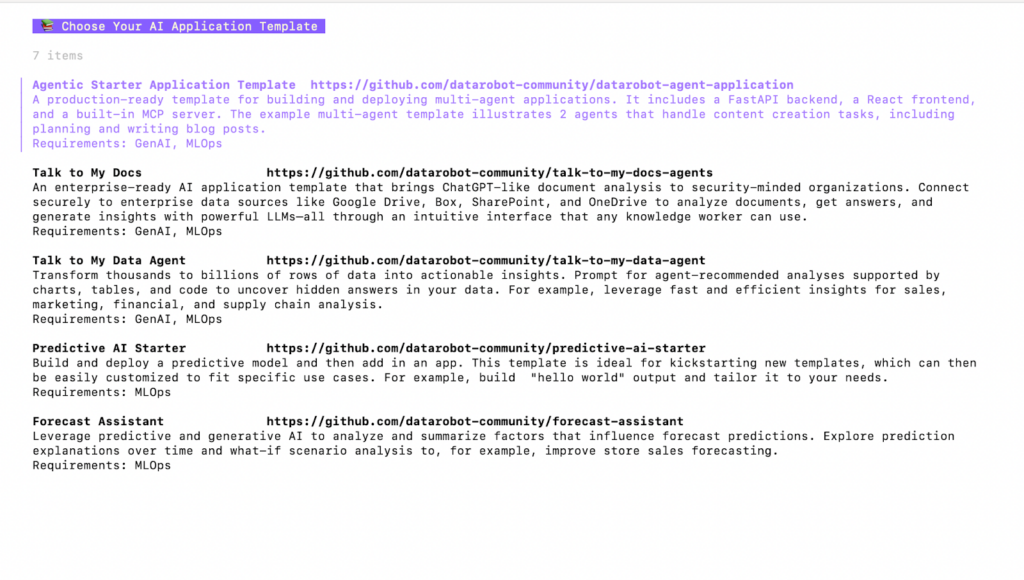

A comprehensive toolkit for every builder with the DataRobot Agent Workforce Platform on Nebius

The DataRobot Agent Workforce Platform helps developers build agents faster by extending existing flows. Our builder kits support complex multi-agent workflows and single-purpose bots, accommodating diverse tools and environments.

Our kit includes native support for:

- Open source frameworks: Native integration with LangChain, CrewAI, and LlamaIndex.

- NAT (Node Architecture Tooling): DataRobot’s framework for modular, node-based agent design.

- Advanced standards: Skills, MCP (Model Context Protocol) for data/tool interaction, and robust Prompt Management for versioning/optimization.

The Nebius advantage: DataRobot’s Agent Workforce Platform integrates with the Nebius Token Factory, allowing developers to consume models like Nemotron 3 (and any open source model) on a pay-per-token basis during the experimental phase. This enables rapid, low-cost iteration without heavy infrastructure provisioning. Once perfected, agents can seamlessly transition from the Token Factory to a dedicated deployment (e.g., NVIDIA NIM) for enterprise scale and low latency.

Getting Started: Building is easy using our Node Architecture Tooling (NAT). You define agent nodes as structured, testable steps in YAML.

First, connect your deployed LLM in the Nebius Token Factory to DataRobot.

Then add the DataRobot deployment to your agentic starter application in the DataRobot CLI:

    functions:
      planner:
        _type: chat_completion
        llm_name: datarobot_llm
        system_prompt: |
          You are a content planner. You create brief, structured outlines for blog articles.
          You identify the most important points and cite relevant sources. Keep it simple and to the point -
          this is just an outline for the writer.

          Create a simple outline with:
          1. 10-15 key points or facts (bullet points only, no paragraphs)
          2. 2-3 relevant sources or references
          3. A brief suggested structure (intro, 2-3 sections, conclusion)

          Do NOT write paragraphs or detailed explanations. Just provide a focused list.
      writer:
        _type: chat_completion
        llm_name: datarobot_llm
        system_prompt: |
          You are a content writer working with a planner colleague.
          You write opinion pieces based on the planner's outline and context. You provide objective and
          impartial insights backed by the planner's information. You acknowledge when your statements are
          opinions versus objective facts.

          1. Use the content plan to craft a compelling blog post.
          2. Structure with an engaging introduction, insightful body, and summarizing conclusion.
          3. Sections/Subtitles are properly named in an engaging manner.
          4. CRITICAL: Keep the total output under 500 words. Each section should have 1-2 brief paragraphs.

          Write in markdown format, ready for publication.
      content_writer_pipeline:
        _type: sequential_executor
        tool_list: [planner, writer]
        description: A tool that plans and writes content on the requested topic.
    function_groups:
      mcp_tools:
        _type: datarobot_mcp_client
    authentication:
      datarobot_mcp_auth:
        _type: datarobot_mcp_auth
    llms:
      datarobot_llm:
        _type: datarobot-llm-component
    workflow:
      _type: tool_calling_agent
      llm_name: datarobot_llm
      tool_names:
        - content_writer_pipeline
        - mcp_tools
      return_direct:
        - content_writer_pipeline
      system_prompt:
        Choose and call a tool to answer the query.
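Because the configuration wires nodes together by name, a quick consistency check can catch typos before deployment. The sketch below is only an illustration: it mirrors the relevant parts of the configuration as a plain Python dict (to avoid a YAML dependency) and checks that every `llm_name`, `tool_list`, `tool_names`, and `return_direct` entry points at something that is defined. The function `find_dangling_references` and its rules are assumptions for this example, not the toolkit's own validation.

```python
# Hand-copied mirror of the YAML above, with prompts omitted for brevity.
config = {
    "functions": {
        "planner": {"_type": "chat_completion", "llm_name": "datarobot_llm"},
        "writer": {"_type": "chat_completion", "llm_name": "datarobot_llm"},
        "content_writer_pipeline": {
            "_type": "sequential_executor",
            "tool_list": ["planner", "writer"],
        },
    },
    "function_groups": {"mcp_tools": {"_type": "datarobot_mcp_client"}},
    "llms": {"datarobot_llm": {"_type": "datarobot-llm-component"}},
    "workflow": {
        "_type": "tool_calling_agent",
        "llm_name": "datarobot_llm",
        "tool_names": ["content_writer_pipeline", "mcp_tools"],
        "return_direct": ["content_writer_pipeline"],
    },
}


def find_dangling_references(cfg):
    """Return a list of problems; an empty list means every referenced name resolves."""
    llms = set(cfg.get("llms", {}))
    # Assumption: both functions and function groups can be referenced as tools.
    tools = set(cfg.get("functions", {})) | set(cfg.get("function_groups", {}))
    nodes = dict(cfg.get("functions", {}))
    nodes["workflow"] = cfg.get("workflow", {})
    problems = []
    for node_name, node in nodes.items():
        llm = node.get("llm_name")
        if llm is not None and llm not in llms:
            problems.append(f"{node_name}: unknown llm '{llm}'")
        for key in ("tool_list", "tool_names", "return_direct"):
            for ref in node.get(key, []):
                if ref not in tools:
                    problems.append(f"{node_name}: unknown tool '{ref}' in {key}")
    return problems


print(find_dangling_references(config))  # prints [] for the configuration above
```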

Evaluation capabilities: The “how-to”

Building is only half the battle; knowing if it works is the other. Our evaluation framework moves beyond simple “thumbs up/down” and into data-driven validation.

To evaluate your agent, you can:

- Define a test suite: Upload a “golden dataset” of expected queries and ground-truth answers.

- Automated metrics: Run your agent against built-in evaluators for faithfulness, relevance, and toxicity.

- LLM-as-a-Judge: Use a “critic” model to score agent responses based on custom rubrics (e.g., “Did the agent follow the brand’s tone of voice?”).

- Side-by-side comparison: Run two versions of your agent (e.g., one using NAT and one using LangChain) against the same dataset to compare cost, latency, and accuracy in a single dashboard.
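To make the side-by-side idea concrete, here is a minimal, self-contained sketch of running two agent versions against the same golden dataset. Everything in it is hypothetical: the dataset rows are made up, `agent_a` and `agent_b` are stand-in functions rather than real agents, and exact-match scoring is a deliberately simple substitute for the platform's built-in evaluators. It does not call any DataRobot API.

```python
import time

# Made-up rows standing in for a real golden dataset.
golden_dataset = [
    {"query": "capital of France", "expected": "Paris"},
    {"query": "2 + 2", "expected": "4"},
    {"query": "largest planet", "expected": "Jupiter"},
]


def agent_a(query):
    """Stand-in agent that answers every query correctly."""
    return {"capital of France": "Paris", "2 + 2": "4", "largest planet": "Jupiter"}[query]


def agent_b(query):
    """Stand-in agent that gets one answer wrong."""
    return {"capital of France": "Paris", "2 + 2": "5", "largest planet": "Jupiter"}[query]


def evaluate(agent, dataset):
    """Run the agent over the dataset; report exact-match accuracy and mean latency."""
    correct = 0
    elapsed = 0.0
    for row in dataset:
        start = time.perf_counter()
        answer = agent(row["query"])
        elapsed += time.perf_counter() - start
        # Case-insensitive exact match; a real evaluator would be more forgiving.
        correct += answer.strip().lower() == row["expected"].strip().lower()
    return {"accuracy": correct / len(dataset), "mean_latency_s": elapsed / len(dataset)}


report = {name: evaluate(fn, golden_dataset)
          for name, fn in {"agent_a": agent_a, "agent_b": agent_b}.items()}
for name, metrics in report.items():
    print(f"{name}: accuracy={metrics['accuracy']:.2f} "
          f"mean_latency={metrics['mean_latency_s'] * 1000:.3f} ms")
```

Keeping the dataset fixed and varying only the agent is what makes the resulting numbers comparable; the same loop could be extended with a cost column or an LLM-as-a-Judge score.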

Enterprise hooks: Deployment-ready from day one

We automate the “enterprise tax” (security, logging, auth) that separates notebooks from production services by embedding build “hooks”:

- Observability: Automatic OTel-compliant tracing captures every step without boilerplate.

- Identity & auth: Built-in OAuth 2.0 and Service Accounts ensure agents use the user’s actual permissions when calling internal APIs (CRM, ERP), maintaining strict security.

- Production hand-off: Deployment packages the environment, components, and auth hooks into a secure, governed container, ensuring a consistent agent from dev to production. Complex agents are autoparsed into orchestrated containers for granular monitoring while deployed as a single pipeline entity.

Connect with us

The DataRobot and Nebius partnership delivers a validated, enterprise-ready deployment stack for agentic AI built on NVIDIA accelerated computing. For teams moving beyond experimentation, it provides a governed and scalable path to sustained production inference.

Nebius and DataRobot will be showcasing this solution at NVIDIA GTC 2026, taking place March 16-19 in San Jose, California.

Read the executive summary blog

Connect with DataRobot (booth #104) and Nebius (booth #713) at GTC 2026