, I’ve kept returning to the same question: if cutting-edge foundation models are widely accessible, where could durable competitive advantage with AI actually come from?

Today, I want to zoom in on context engineering, the discipline of dynamically filling the context window of an AI model with information that maximizes its chances of success. Context engineering allows you to encode and pass on your existing expertise and domain knowledge to an AI system, and I believe it is a critical component of strategic differentiation. If you have unique domain expertise and know how to make it usable to your AI systems, you’ll be hard to beat.

In this article, I’ll summarize the components of context engineering as well as the best practices that have established themselves over the past year. One of the most critical factors for success is a tight handshake between domain experts and engineers. Domain experts are needed to encode domain knowledge and workflows, while engineers are responsible for knowledge representation, orchestration, and dynamic context construction. In the following, I attempt to explain context engineering in a way that is helpful to both domain experts and engineers. Thus, we will not dive into technical topics like context compaction and compression.

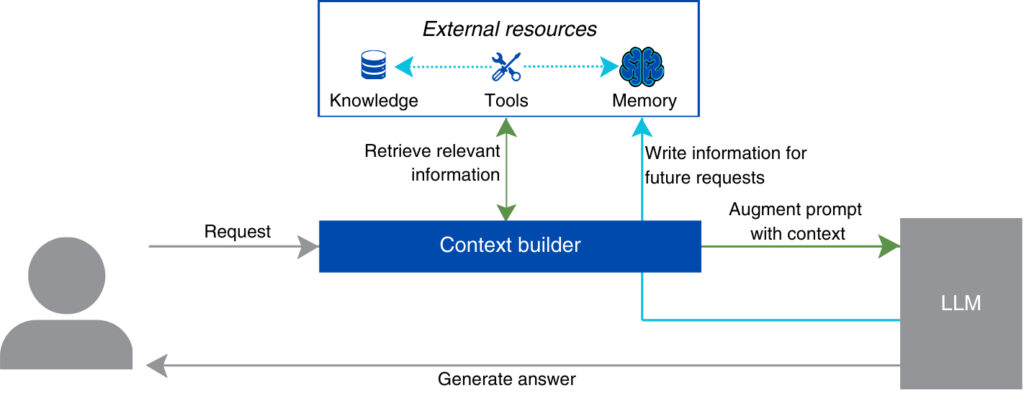

For now, let’s assume our AI system has an abstract component, the context builder, which assembles the most efficient context for every user interaction. The context builder sits between the user request and the language model executing the request. You can think of it as an intelligent function that takes the current user query, retrieves the most relevant information from external sources, and assembles the optimal context for it. After the model produces an output, the context builder may also store new information, like user edits and feedback. In this way, the system accumulates continuity and experience over time.
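To make the idea more tangible, here is a minimal sketch of a context builder in Python. Everything in it is an assumption made for illustration: the `ContextBuilder` class, its keyword-based relevance check, and the sample snippets are hypothetical stand-ins, not a reference implementation.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBuilder:
    """Hypothetical context builder: assembles a prompt and stores feedback."""
    knowledge: dict[str, str]  # topic keyword -> snippet (stand-in for real knowledge sources)
    feedback_log: list[str] = field(default_factory=list)

    def build(self, query: str) -> str:
        # Naive relevance: keep snippets whose topic keyword appears in the query.
        lowered = query.lower()
        relevant = [text for topic, text in self.knowledge.items() if topic in lowered]
        parts = ["# Knowledge", *relevant, "# Prior feedback", *self.feedback_log, "# Request", query]
        return "\n".join(parts)

    def record_feedback(self, note: str) -> None:
        # Stored feedback is included in every context built afterwards.
        self.feedback_log.append(note)


builder = ContextBuilder(knowledge={
    "forecast": "Commit = deals in stage 'Commit' with a close date in the quarter.",
    "discount": "Discounts above 20% need VP approval.",
})
builder.record_feedback("Show amounts in kEUR.")
prompt = builder.build("Prepare the weekly forecast for enterprise")
print(prompt)
```

A production system would replace the keyword check with proper retrieval, but the shape stays the same: query in, assembled context out, feedback written back.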

Conceptually, the context builder must manage three distinct resources:

- Knowledge about the domain and specific tasks turns a generic AI system into a domain expert.

- Tools allow the agent to act in the real world.

- Memory allows the agent to personalize its actions and learn from user feedback.

As the system matures, you will also find more and more interesting interdependencies between these three components, which can be addressed with proper orchestration.

Let’s dive in and examine these components one by one. We will illustrate them using the example of an AI system that supports RevOps tasks such as weekly forecasts.

Knowledge

As you start designing your system, you speak with the Head of RevOps to understand how forecasting is currently done. She explains: “When I prepare a forecast, I don’t just look at the pipeline. I also need to understand how similar deals performed in the past, which segments are trending up or down, whether discounting is increasing, and where we historically overestimated conversion. Sometimes, that information is already top-of-mind, but often, I need to search through our systems and talk to salespeople. In any case, the CRM snapshot alone is only a baseline.”

LLMs come with extensive general knowledge from pre-training. They understand what a sales pipeline is and know common forecasting methods. However, they are not aware of your company’s specifics, such as:

- Historical close rates by stage and segment

- Average time-in-stage benchmarks

- Seasonality patterns from comparable quarters

- Pricing and discount policies

- Current revenue targets

- Definitions of pipeline stages and probability logic

Without this information, users must manually adjust the system’s outputs. They will explain that enterprise deals slip more often in Q4, correct expansion assumptions, and remind the model that discount approvals are currently delayed. Soon, they might conclude that the AI system is interesting in itself, but not viable for their day-to-day work.

Let’s look at patterns that allow you to integrate an AI model with company-specific knowledge. We will start with RAG (Retrieval-Augmented Generation) as the baseline and progress towards more structured representations of knowledge.

RAG

In Retrieval-Augmented Generation (RAG), company- and domain-specific knowledge is broken into manageable chunks (refer to this article for an overview of chunking strategies). Each chunk is converted into a text embedding and stored in a database. Text embeddings represent the meaning of a text as a numerical vector. Semantically similar texts are neighbours in the embedding space, so the system can retrieve “relevant” information through similarity search.

Now, when a forecasting request arrives, the system retrieves the most relevant text chunks and includes them in the prompt.
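The retrieval step can be sketched in a few lines of Python. Note the assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, the chunks are invented, and the in-memory list replaces a vector database. Only the mechanics (embed, rank by cosine similarity, paste into the prompt) carry over to a real setup.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Toy stand-in for an embedding model: a bag-of-words count vector.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two sparse count vectors.
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    # Rank all chunks by similarity to the query and keep the top k.
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


chunks = [
    "enterprise deals slip more often in q4",
    "discount approvals above 20 percent need vp sign-off",
    "the office is closed on public holidays",
]
query = "how often do enterprise deals slip"
top = retrieve(query, chunks, k=1)
prompt = "Context:\n" + "\n".join(top) + "\n\nQuestion: " + query
print(prompt)
```

The sketch also makes the limitation discussed next visible: the ranking knows nothing about whether a chunk is current, authoritative, or consistent with the others.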

Conceptually, this is elegant, and every freshly baked B2B AI team that respects itself has a RAG initiative underway. However, most prototypes and MVPs struggle with adoption. The naive version of RAG makes several oversimplifying assumptions about the nature of enterprise knowledge. It uses isolated text fragments as a source of truth. It assumes that documents are internally consistent. It also strips the complex empirical concept of relevance down to similarity, which is much handier from a computational standpoint.

In reality, text data in its raw form provides a confusing context to AI models. Documents get outdated, policies evolve, metrics are tweaked, and business logic may be documented differently across teams. If you want forecasting outputs that leadership can trust, you need a more intentional knowledge representation.

Articulating knowledge through graphs

Many teams dump their available data into an embedding database without knowing what’s inside. This is a sure recipe for failure. You need to know the semantics of your data. Your knowledge representation should reflect the core objects, processes, and KPIs of the business in a way that is interpretable both by humans and by machines. For humans, this ensures maintainability and governance. For AI systems, it ensures retrievability and correct usage. The model must not only access information, but also understand which source is appropriate for which task.

Graphs are a promising approach because they allow you to structure knowledge while preserving flexibility. Instead of treating knowledge as an archive of loosely connected documents, you model the core objects of your business and the relationships between them.

Depending on what you need to encode, here are some graph types to consider:

- Taxonomies or ontologies that define core business objects (deals, segments, accounts, reps) along with their properties and relationships

- Canonical knowledge graphs that capture more complex, non-hierarchical dependencies

- Context graphs that record past decision traces and allow retrieval of precedents
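As a rough illustration of the first graph type, the sketch below stores a tiny RevOps ontology as subject-relation-object triples and collects the facts around one deal. The node names, relations, and the `neighborhood` helper are all hypothetical; a real system would more likely use a graph database or a GraphRAG-style pipeline.

```python
from collections import defaultdict

# Hypothetical triples (subject, relation, object) for a tiny RevOps ontology.
TRIPLES = [
    ("Deal:ACME-42", "belongs_to", "Account:ACME"),
    ("Deal:ACME-42", "in_segment", "Segment:Enterprise"),
    ("Deal:ACME-42", "owned_by", "Rep:Dana"),
    ("Segment:Enterprise", "has_benchmark", "Benchmark:Q4-slippage"),
]

graph = defaultdict(list)
for subj, rel, obj in TRIPLES:
    graph[subj].append((rel, obj))


def neighborhood(node: str, depth: int = 2) -> list[str]:
    """Collect facts reachable from `node` within `depth` hops, as readable lines."""
    facts, frontier = [], [node]
    for _ in range(depth):
        next_frontier = []
        for current in frontier:
            for rel, obj in graph.get(current, []):
                facts.append(f"{current} {rel} {obj}")
                next_frontier.append(obj)
        frontier = next_frontier
    return facts


facts = neighborhood("Deal:ACME-42")
print("\n".join(facts))
```

Unlike similarity search, the traversal follows explicit relationships, so the segment benchmark reaches the context because the deal belongs to that segment, not because the wording happens to match.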

Graphs are powerful as a representation layer, and RAG variants such as GraphRAG provide a blueprint for their integration. However, graphs don’t grow on trees. They require an intentional design effort: you need to decide what the graph encodes, how it is maintained, and which parts are exposed to the model in a given reasoning cycle. Ideally, you view this not as a one-off investment, but turn it into a continuous effort where human users collaborate with the AI system in parallel to their daily work. This will allow you to build its knowledge while engaging users and supporting adoption.

Tools

Forecasting is not only analytical, but also operational and interactive. Your Head of RevOps explains: “I’m constantly jumping between systems and conversations: checking the CRM, reconciling with finance, recalculating rollups, and following up with reps when something looks off. The whole process is interactive.”

To support this workflow, the AI system needs to move beyond reading and generating text. It must be able to interact with the digital systems where the business actually runs. Tools provide this capability.

Tools make your system agentic, i.e., able to act in the real world. In the RevOps setting, tools might include:

- CRM pipeline retrieval (pull open opportunities with stage, amount, close date, owner, and forecast category)

- Forecast rollup calculation (apply company-specific probability and override logic to compute commit, best case, and total pipeline)

- Variance and risk analysis (compare current forecast to prior periods and identify slippage, concentration risk, or deal dependencies)

- Executive summary generation (translate structured outputs into a leadership-ready forecast narrative)

- Operational follow-up trigger (create tasks or notifications for high-risk or stale deals)

By hard-coding these actions into tools, you encapsulate business logic that should not be left to probabilistic guessing. For example, the model no longer needs to approximate how “commit” is calculated or how variance is decomposed; it simply calls the function that already reflects your internal rules. This makes the system’s outputs more reliable and increases users’ confidence in them.
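A forecast rollup tool could look roughly like the following sketch. The stage probabilities, the `Deal` fields, and the rollup rules are assumptions chosen for illustration; your own definitions of commit and best case will differ.

```python
from dataclasses import dataclass

# Assumed stage probabilities; real values would come from your own close-rate history.
STAGE_PROBABILITY = {"discovery": 0.1, "proposal": 0.4, "negotiation": 0.7}


@dataclass
class Deal:
    name: str
    amount: float
    stage: str
    category: str  # "commit", "best_case", or "pipeline"


def forecast_rollup(deals: list[Deal]) -> dict[str, float]:
    """Deterministic rollup: the model calls this instead of estimating totals itself."""
    commit = sum(d.amount for d in deals if d.category == "commit")
    best_case = commit + sum(d.amount for d in deals if d.category == "best_case")
    weighted = sum(d.amount * STAGE_PROBABILITY[d.stage] for d in deals)
    return {
        "commit": commit,
        "best_case": best_case,
        "total_pipeline": sum(d.amount for d in deals),
        "weighted_pipeline": weighted,
    }


deals = [
    Deal("ACME renewal", 100_000, "negotiation", "commit"),
    Deal("Globex expansion", 50_000, "proposal", "best_case"),
    Deal("Initech new logo", 20_000, "discovery", "pipeline"),
]
rollup = forecast_rollup(deals)
print(rollup)
```

Because the rules live in code, a change to the commit definition is made once, reviewed like any other change, and applies to every forecast the agent produces afterwards.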

How tools are called

The following figure shows the basic loop when you integrate tools into your system:

Let’s walk through the process:

- A user sends a request to the LLM, for example: “Why did our enterprise forecast drop week over week?” The context builder injects relevant knowledge (recent pipeline snapshot, forecast definitions, prior totals) and a subset of available tools.

- The LLM decides whether a tool is needed. If the question requires structured computation, such as variance decomposition, it selects the appropriate function.

- The selected tool is executed externally. For example, the variance analysis function queries the CRM, calculates deltas (new deals, slipped deals, closed-won, amount changes), and returns structured output.

- The tool output is added back into the context.

- The LLM generates the final answer. Grounded in an established computation, it produces a structured explanation of the forecast change.

Thus, the responsibility for creating the business logic is offloaded to the experts who write the tools. The AI agent orchestrates predefined logic and reasons over the results.
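The five steps above can be sketched as a small loop. To keep the example runnable without any API access, `fake_llm` is a rule-based stand-in for the model call, and `variance_analysis` returns invented numbers instead of querying a CRM. Both are assumptions for illustration only.

```python
import json


def variance_analysis(segment: str) -> dict:
    # Stand-in tool: a real version would query the CRM and compute the deltas.
    return {"segment": segment, "slipped": -120_000, "new": 40_000, "closed_won": -30_000}


TOOLS = {"variance_analysis": variance_analysis}


def fake_llm(messages: list[dict]) -> dict:
    """Rule-based stand-in for a model call; a real LLM would make this decision."""
    if messages[-1]["role"] == "tool":
        result = json.loads(messages[-1]["content"])
        net = result["slipped"] + result["new"] + result["closed_won"]
        return {"answer": f"{result['segment']} forecast changed by {net}."}
    return {"tool": "variance_analysis", "arguments": {"segment": "enterprise"}}


def run(request: str, max_steps: int = 3) -> str:
    messages = [{"role": "user", "content": request}]  # step 1: request plus context
    for _ in range(max_steps):
        reply = fake_llm(messages)  # step 2: the model decides whether a tool is needed
        if "answer" in reply:
            return reply["answer"]  # step 5: final answer
        output = TOOLS[reply["tool"]](**reply["arguments"])  # step 3: tool runs outside the model
        messages.append({"role": "tool", "content": json.dumps(output)})  # step 4: result back into context
    raise RuntimeError("no final answer within max_steps")


answer = run("Why did our enterprise forecast drop week over week?")
print(answer)
```

The `max_steps` guard is a design choice worth keeping in a real system: it bounds the loop if the model keeps requesting tools without converging on an answer.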

Selecting the right tools

Over time, your inventory of tools will grow. Beyond CRM retrieval and forecast rollups, you may introduce renewal risk scoring, expansion modelling, territory mapping, quota tracking, and more. Injecting all of these into every prompt increases complexity and reduces the likelihood that the right tool is selected.

The context builder is responsible for managing this complexity. Instead of exposing the entire tool ecosystem, it selects a subset based on the task at hand. A request such as “What’s our likely end-of-quarter revenue?” may require CRM retrieval and rollup logic, while “Why did enterprise forecast drop week over week?” may require variance decomposition and stage movement analysis.
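One simple way to picture this selection step is a keyword match between the request and a tool registry, as in the sketch below. The registry contents and the scoring rule are hypothetical; in practice, you might rank tools by embedding similarity or use a lightweight classifier instead.

```python
# Hypothetical tool registry: name -> keywords describing when the tool applies.
TOOL_KEYWORDS = {
    "crm_pipeline_retrieval": {"revenue", "pipeline", "deals"},
    "forecast_rollup": {"revenue", "forecast", "commit"},
    "variance_decomposition": {"drop", "change", "week"},
    "territory_mapping": {"territory", "region"},
}


def select_tools(request: str, limit: int = 2) -> list[str]:
    """Return up to `limit` tool names, ranked by keyword overlap with the request."""
    words = set(request.lower().replace("?", "").split())
    scored = [(len(words & keywords), name) for name, keywords in TOOL_KEYWORDS.items()]
    # Drop tools without any overlap; break ties alphabetically for stable output.
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [name for _, name in ranked[:limit]]


print(select_tools("What is our likely end-of-quarter revenue?"))
print(select_tools("Why did enterprise forecast drop week over week?"))
```

Only the selected names, together with their documentation, would then be injected into the prompt.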

Thus, tools become part of the dynamic context. To make this work reliably, each tool needs clear, AI-friendly documentation:

- What it does

- When it should be used

- What its inputs represent

- How its outputs should be interpreted

This documentation forms the contract between the model and your operational logic.
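Such a contract can be written down as a structured descriptor. The sketch below covers the four points with a plain Python dictionary whose input section follows JSON Schema conventions. The field names `when_to_use` and `output_notes`, the tool itself, and both helpers are assumptions for illustration, not part of any particular standard.

```python
import json

# Hypothetical descriptor covering the four documentation points.
variance_tool = {
    "name": "variance_decomposition",
    "description": "Breaks a week-over-week forecast change into new, slipped, and closed-won deals.",
    "when_to_use": "The user asks why a forecast changed between two periods.",
    "input_schema": {
        "type": "object",
        "properties": {
            "segment": {"type": "string", "description": "Sales segment, e.g. 'enterprise'."},
            "weeks_back": {"type": "integer", "description": "Number of weeks to compare against."},
        },
        "required": ["segment"],
    },
    "output_notes": "Amounts are in EUR; negative values reduce the forecast.",
}


def missing_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return required inputs that the model failed to supply."""
    return [key for key in tool["input_schema"]["required"] if key not in arguments]


def render_for_prompt(tool: dict) -> str:
    # Serialize the descriptor so it can be placed into the model's context.
    return json.dumps(tool, indent=2)


print(missing_arguments(variance_tool, {"weeks_back": 1}))
```

Checking arguments against the descriptor before execution gives you a cheap guard on the boundary between the model and your business logic.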

Standardizing the interface between LLMs and tools

When you connect an AI model to predefined tools, you are bringing together two very different worlds: a probabilistic language model and deterministic business logic. One operates on likelihoods and patterns; the other executes precise, rule-based operations. If the interface between them is not clearly specified, the interaction becomes fragile.

Standards such as the Model Context Protocol (MCP) aim to formalize the interface. MCP provides a structured way to describe and invoke external capabilities, making tool integration more consistent across systems. WebMCP extends this idea by proposing ways for web applications to become callable tools within AI-driven workflows.

These standards matter not only for interoperability, but also for governance. They define which parts of your operational logic the model is allowed to execute and under which conditions.

Memory: the key to personalized, self-improving AI

Your Head of RevOps takes an individual approach to every forecasting cycle: “Before I finalize a forecast, I make sure I understand how leadership wants the numbers presented. I also keep track of the adjustments we’ve already discussed this week so we don’t revisit the same assumptions or repeat the same mistakes.”

So far, our prompts were stateless. However, many generative AI applications need state and memory. There are many different approaches to formalizing agent memory. In the end, how you build up and reuse memories is a very individual design decision.

First, decide what type of knowledge from user interactions can be useful:

As shown in this table, the type of knowledge also informs your choice of a storage format. To further specify it, consider the following two questions:

- Persistence: For how long should the knowledge be stored? Think of the current session as the short-term memory, and of information that persists from one session to another as the long-term memory.

- Scope: Who should have access to the memory? In most cases, we think of memories on the user level. However, especially in B2B settings, it can make sense to store certain interactions, inputs, and sequences in the system’s knowledge base, allowing other users to benefit from them as well.
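The two questions translate directly into two fields on each memory record. The following sketch is one possible way to model them; the `Memory` and `MemoryStore` classes, the field values, and the sample entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    persistence: str  # "session" (short-term) or "long_term"
    scope: str        # "user" or "team"
    owner: str
    session_id: str


class MemoryStore:
    def __init__(self) -> None:
        self.items: list[Memory] = []

    def write(self, memory: Memory) -> None:
        self.items.append(memory)

    def recall(self, user: str, session_id: str) -> list[str]:
        """Return the memories visible to `user` in the given session."""
        visible = []
        for m in self.items:
            in_scope = m.scope == "team" or m.owner == user
            still_valid = m.persistence == "long_term" or m.session_id == session_id
            if in_scope and still_valid:
                visible.append(m.text)
        return visible


store = MemoryStore()
store.write(Memory("Leadership wants totals in kEUR.", "long_term", "team", "ana", "s1"))
store.write(Memory("Already adjusted the ACME close date this week.", "session", "user", "ana", "s1"))
store.write(Memory("Prefers bullet summaries.", "long_term", "user", "ana", "s1"))

print(store.recall("ana", "s2"))  # long-term memories survive into a new session
print(store.recall("ben", "s2"))  # other users only see team-scoped memories
```

Keeping persistence and scope as explicit fields also makes it easier to audit what the system remembers and who can see it.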

As your memory store grows, you can increasingly align outputs with how the team actually operates. If you also store procedural memories about execution and outputs (including those that required adjustments), your context builder can gradually improve how it uses memory over time.

Interactions between the three context components

To reduce complexity, so far, we made a clear split between the three components of an efficient context: knowledge, tools, and memory. In practice, they will interact with each other, especially as your system matures:

- Tools can be defined to retrieve knowledge from different sources and write different types of memories.

- Long-term memories can be written back to knowledge sources and be made persistent for future retrieval.

- If a user frequently repeats a certain task or workflow, the agent can help them package it as a tool.

The task of designing and managing these interactions is called orchestration. Agent frameworks like LangChain and DSPy support this task, but they don’t replace architectural thinking. For more complex agent systems, you might decide to go for your own implementation. Finally, as already said at the beginning, interaction with humans, especially domain experts, is crucial for making the agent smarter. This requires educated, engaged users, proper evaluation, and a UX that encourages feedback.

Summing up

If you’re starting a RevOps forecasting agent tomorrow, begin by mapping:

- What information sources exist and are used for this task (knowledge)

- Which operations and computations are repetitive and authoritative (tools)

- Which workflow decisions require continuity (memory)

In the end, context engineering determines whether your AI system reflects how your business actually works or merely produces guesses that “sound good” to non-experts. The model is interchangeable, but your unique context is not. If you learn to represent and orchestrate it deliberately, you can turn generic AI capabilities into a durable competitive edge.