A health-tech company shipped a readmission-prediction model in early 2024. This is a composite case drawn from patterns documented by Hernán & Robins in Nature Machine Intelligence, but every detail maps to real deployment failures.

Accuracy on the held-out test set: 94%. The operations team used it to decide which patients to prioritize for follow-up calls. They expected readmission rates to drop.

Rates went up.

The model had captured every correlation in the data: older patients, certain zip codes, specific discharge diagnoses. It performed exactly as designed. The test metrics were clean. The confusion matrix looked textbook.

But when the team acted on those predictions (calling patients flagged as high-risk, rearranging discharge protocols) the relationships in the data shifted beneath them. Patients who received extra follow-up calls didn’t improve. The ones who kept getting readmitted shared a different profile entirely: they couldn’t afford their medications, lacked reliable transportation to follow-up appointments, or lived alone without support for post-discharge care. The variables that predicted readmission weren’t the same variables that caused it.

The model never learned that distinction, because it was never designed to. It saw correlations and assumed they were handles you could pull. They weren’t. They were shadows cast by deeper causes the model couldn’t see.

A model that predicts readmission with 94% accuracy told the team exactly who would come back. It told them nothing about why, or what to do about it.

If you’ve built a model that predicts well but fails when turned into a decision, you’ve already felt this problem. You just didn’t have a name for it.

The name is confounding. The solution is causal inference. And in 2026, the tools to do it properly are finally mature enough for any data scientist to use.

The Question Your Model Can’t Answer

Machine Learning (ML) is built for one job: find patterns in data and predict outcomes. This is associational reasoning. It works brilliantly for spam filters, image classifiers, and recommendation engines. Pattern in, pattern out.

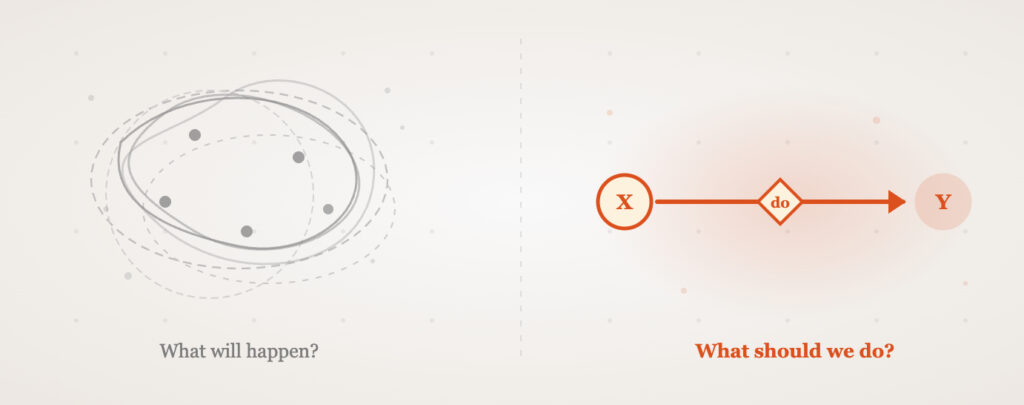

But business stakeholders rarely ask “what will happen next?” They ask “what should we do?” Should we raise the price? Should we change the treatment protocol? Should we offer this customer a discount?

These are causal questions. And answering them with associational models is like using a thermometer to set the thermostat. The thermometer tells you the temperature. It doesn’t tell you what would happen if you changed the dial.

Judea Pearl, the computer scientist who won the 2011 Turing Award for his work on probabilistic and causal reasoning, organized this gap into what he calls the Ladder of Causation. The ladder has three rungs, and the distance between them explains why so many ML projects fail when they move from prediction to action.

Level 1: Association (“Seeing”). “Patients who take Drug X have better outcomes.” This is pure correlation. Every standard ML model operates here. It answers: what patterns exist in the data?

Level 2: Intervention (“Doing”). “If we give Drug X to this patient, will their outcome improve?” This requires knowing what happens when you change something. Pearl formalizes this with the do-operator: P(Y | do(X)). No amount of observational data, on its own, can answer this.

Level 3: Counterfactual (“Imagining”). “This patient took Drug X and recovered. Would they have recovered without it?” This requires reasoning about realities that never happened. It’s the highest form of causal thinking.

Here’s what each level looks like in practice. A Level 1 model at an e-commerce company says: “Users who viewed product pages for trainers also bought protein bars.” Useful for recommendations. A Level 2 question from the same company: “If we send a 20% discount on protein bars to users who viewed trainers, will purchases increase?” That requires knowing whether the discount causes purchases or whether the same users would have bought anyway. A Level 3 question: “This user bought protein bars after receiving the discount. Would they have bought them without it?” That requires reasoning about a world that didn’t happen.

Most ML operates on Level 1. Most business decisions require Level 2 or 3. That gap is where wrong decisions are made at scale.

When Accuracy Lies

The gap between prediction and causation is not theoretical. It has a body count.

Consider the kidney stone study from 1986. Researchers compared two treatments for renal calculi. Treatment A outperformed Treatment B for small stones. Treatment A also outperformed Treatment B for large stones. But when the data was pooled across both groups, Treatment B appeared superior.

This is Simpson’s paradox. The lurking variable was stone severity. Doctors had prescribed Treatment A for harder cases. Pooling the data erased that context, flipping the apparent conclusion. A prediction model trained on the pooled data would confidently recommend Treatment B. It would be wrong.
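
The reversal is easy to reproduce with a few lines of arithmetic. The sketch below uses the success counts as they are commonly cited from the 1986 study; those figures are not quoted in this article, so treat them as an assumption and check them against the paper. The helper names are made up for illustration. `adjusted_rate` standardizes each treatment over the same mix of small and large stones, which is one simple way to remove the severity imbalance.

```python
# (successes, patients) per treatment and stone size, as commonly cited
# from Charig et al. (1986); check the paper before reusing these figures.
COUNTS = {
    "A": {"small": (81, 87), "large": (192, 263)},
    "B": {"small": (234, 270), "large": (55, 80)},
}


def stratum_rate(treatment, size):
    """Success rate within one stone-size stratum."""
    successes, patients = COUNTS[treatment][size]
    return successes / patients


def pooled_rate(treatment):
    """Success rate after lumping both stone sizes together."""
    successes = sum(s for s, _ in COUNTS[treatment].values())
    patients = sum(n for _, n in COUNTS[treatment].values())
    return successes / patients


def adjusted_rate(treatment):
    """Weight each stratum's success rate by that stratum's share of ALL patients."""
    total = sum(n for arm in COUNTS.values() for _, n in arm.values())
    rate = 0.0
    for size in ("small", "large"):
        stratum_patients = sum(COUNTS[arm][size][1] for arm in COUNTS)
        rate += stratum_rate(treatment, size) * stratum_patients / total
    return rate


for arm in ("A", "B"):
    print(
        f"Treatment {arm}: small={stratum_rate(arm, 'small'):.3f} "
        f"large={stratum_rate(arm, 'large'):.3f} "
        f"pooled={pooled_rate(arm):.3f} adjusted={adjusted_rate(arm):.3f}"
    )
```

With these counts, the pooled comparison favors B, while both strata and the severity-adjusted rate favor A.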

That’s a statistics textbook example. The hormone therapy case drew blood.

For decades, observational studies suggested that postmenopausal Hormone Replacement Therapy (HRT) lowered the risk of coronary heart disease. The evidence looked solid. Millions of women were prescribed HRT based on these findings. Then the Women’s Health Initiative, a large-scale randomized controlled trial published in 2002, revealed the opposite: HRT actually increased cardiovascular risk.

For decades, observational studies suggested hormone therapy protected hearts. A proper trial revealed it damaged them. Millions of prescriptions, one confound.

The confound was wealth. Healthier, wealthier women were more likely to both choose HRT and have lower heart disease rates. The observational models captured this correlation and mistook it for a treatment effect. A 2019 paper by Miguel Hernán in CHANCE used this exact case to argue that data science needs “a second chance to get causal inference right.”

How common is this error? A 2021 scoping review in the European Journal of Epidemiology examined observational studies and found that 26% of them conflated prediction with causal claims. Roughly one in four published papers, in medical journals, where people make life-and-death decisions based on the results.

The core structure behind both cases is the confounding fork: a hidden common cause (Z) that influences both the treatment (X) and the outcome (Y), creating a spurious association between them. Stone severity drove both treatment choice and outcomes. Wealth drove both HRT adoption and heart health. In each case, the correlation between X and Y was real in the data. But acting on it as if X caused Y produced the wrong intervention.
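
A small simulation makes the fork concrete. This is a minimal sketch on synthetic data, assuming linear relationships and coefficients picked only for illustration: Z drives both X and Y, and X has no effect on Y at all. The helper `ols_coefs` is a hypothetical name, not part of any library.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 50_000

# Fork: Z -> X and Z -> Y. There is NO arrow from X to Y.
z = rng.normal(size=n)             # hidden common cause
x = 2.0 * z + rng.normal(size=n)   # "treatment", driven by Z
y = 3.0 * z + rng.normal(size=n)   # outcome, driven by Z only


def ols_coefs(columns, target):
    """Least-squares slopes for the given regressors (intercept fitted, then dropped)."""
    design = np.column_stack([np.ones(len(target)), *columns])
    coefs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coefs[1:]


# Regress Y on X alone: the confounded association shows up as a clear slope.
naive_slope = ols_coefs([x], y)[0]

# Regress Y on X and Z: once Z is held fixed, X contributes nothing.
adjusted_slope = ols_coefs([x, z], y)[0]

print(f"naive slope of Y on X:    {naive_slope:.3f}")
print(f"slope after adjusting Z:  {adjusted_slope:.3f}")
```

The naive slope is large even though intervening on X would do nothing to Y. The catch in real data is that Z is often unmeasured, which is why the association looks like a usable lever.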

The lesson is uncomfortable: a model can have high accuracy, pass every validation check, and still give recommendations that make outcomes worse. Accuracy measures how well a model captures existing patterns. It says nothing about whether those patterns survive when you intervene.

The Toolkit Caught Up

For years, causal inference lived behind a wall of econometrics textbooks, custom R scripts, and a small circle of specialists. That wall has come down.

Microsoft Research built DoWhy, a Python library that reduces causal analysis to four explicit steps: model your assumptions, identify the causal estimand, estimate the effect, and refute your own result. That fourth step is what separates causal inference from “I ran a regression and it was significant.” DoWhy forces you to try to break your conclusion before you trust it.

Alongside DoWhy sits EconML, another Microsoft Research library that provides the estimation algorithms: Double Machine Learning (DML), causal forests, instrumental variable methods, and doubly robust estimators. Together, they form the PyWhy project, which is quickly becoming the standard causal analysis stack in Python.

DoWhy reduces causal analysis to four steps: model, identify, estimate, refute. That last step separates causal inference from “I ran a regression.”

The market signals align. Fortune Business Insights valued the global Causal Artificial Intelligence (AI) market at $81.4 billion in 2025, projecting $116 billion for 2026 (a 42.5% Compound Annual Growth Rate, or CAGR). An additional 25% of organizations plan to adopt causal AI by 2026, which would bring total adoption among AI-driven organizations to nearly 70%.

Uber built CausalML for uplift modeling and treatment effect estimation. Netflix has published research on causal bandits for content recommendations. Amazon’s AWS team uses DoWhy for root cause analysis in microservice architectures, diagnosing why latency spikes happen rather than just predicting when they will. These aren’t academic experiments. They’re production systems running at scale.

The practical barrier was expertise. You needed to understand structural causal models, the backdoor criterion, and how to derive estimands by hand. DoWhy automates the identification step. You draw the DAG (encoding your domain knowledge), and the library determines which statistical estimand answers your causal question. That’s the part that used to take a PhD-level methods course to do manually.
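
For the simplest case, the hand derivation ends in the backdoor adjustment formula. The block below is a sketch of that standard result, assuming a set of observed variables Z blocks every backdoor path from treatment X to outcome Y (the symbols follow the X, Y, Z used elsewhere in this article). It is not a description of everything an identification routine can return.

```latex
% Intervening: average the conditional outcome over the population distribution of Z
P(Y = y \mid do(X = x)) = \sum_{z} P(Y = y \mid X = x, Z = z)\, P(Z = z)

% Conditioning: the weights are P(Z = z \mid X = x), so Z's influence leaks into the comparison
P(Y = y \mid X = x) = \sum_{z} P(Y = y \mid X = x, Z = z)\, P(Z = z \mid X = x)

% Average treatment effect for a binary treatment
\mathrm{ATE} = E[Y \mid do(X = 1)] - E[Y \mid do(X = 0)]
```

The two sums differ only in the weights on Z. When Z has no influence on who gets treated, P(Z = z | X = x) equals P(Z = z) and the two quantities coincide.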

Where Causal Methods Break Down

A fair objection: most ML applications work fine without causal reasoning. Recommendation systems, image classification, fraud detection, search ranking. Pattern in, pattern out. These problems genuinely don’t need causal structure, and adding it would be over-engineering.

Causal inference also carries a cost that prediction doesn’t. It requires assumptions. You must specify a Directed Acyclic Graph (DAG), a diagram encoding which variables cause which. If your DAG is wrong (a missing confounder, a reversed arrow) your causal estimate can be worse than a naive correlation. The garbage-in-garbage-out problem doesn’t disappear; it moves from the data to the assumptions.
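
One way a reversed arrow hurts is collider bias. The sketch below is a synthetic illustration, assuming linear relationships chosen for convenience: X and Y are independent, and a third variable C is caused by both. An analyst who draws C as a common cause and adjusts for it manufactures an effect that does not exist. All names here are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 50_000

# True structure: X -> C <- Y. X has no effect on Y.
x = rng.normal(size=n)
y = rng.normal(size=n)
c = x + y + rng.normal(size=n)     # collider, caused by both X and Y


def ols_coefs(columns, target):
    """Least-squares slopes for the given regressors (intercept fitted, then dropped)."""
    design = np.column_stack([np.ones(len(target)), *columns])
    coefs, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coefs[1:]


# No adjustment: correctly finds (almost) nothing.
naive_slope = ols_coefs([x], y)[0]

# Wrong DAG: C is treated as a confounder and adjusted for,
# which opens a spurious path between X and Y.
wrong_dag_slope = ols_coefs([x, c], y)[0]

print(f"naive slope of Y on X:         {naive_slope:.3f}")
print(f"slope after adjusting for C:   {wrong_dag_slope:.3f}")
```

Here the unadjusted estimate is the right one, and the "causal" estimate built on the wrong graph is clearly nonzero. More adjustment is not automatically safer; the graph decides what to adjust for.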

The argument here is not that causal inference should replace prediction. It’s that causal inference must complement prediction whenever you move from pattern recognition to decision-making. The failure mode is not “ML doesn’t work.” The failure mode is “ML works for prediction, then gets misapplied to a causal question.” Knowing which question you’re answering is the skill that separates a model builder from a decision scientist.

Does Your Problem Need Causal Inference?

The 5-Question Diagnostic

Before you pick a method, run your problem through these five questions. If you answer “yes” to two or more, you need causal inference. If you answer “yes” to question 1 alone, you need causal inference.

1. Are you making a decision rather than a prediction?
   Predicting who will churn = standard ML. Deciding which intervention prevents churn = causal inference.

2. Would acting on your model change the underlying relationships?
   If your intervention alters the very patterns the model learned, your correlations will shift post-deployment. This is a causal problem.

3. Could a confounding variable explain your result?
   If two variables (treatment and outcome) share a common cause, your observed association may vanish, reverse, or amplify once the confounder is controlled for. Think: the HRT case.

4. Do you need to answer “what if?” or “why?”
   “What if we doubled the price?” is a Level 2 (intervention) question. “Why did this customer leave?” is a Level 3 (counterfactual) question. Both require causal reasoning.

5. Is there selection bias in how treatments were assigned?
   If doctors prescribe Drug A to sicker patients, or if users self-select into a feature, comparing raw outcomes without adjustment is meaningless.
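
The scoring rule for the diagnostic fits in a few lines, which can be handy in a project intake checklist. This is a minimal sketch; the class and field names are invented for illustration, and the rule encoded is only the one stated above: a "yes" to question 1 on its own, or any two "yes" answers.

```python
from dataclasses import astuple, dataclass


@dataclass
class DiagnosticAnswers:
    """One boolean per diagnostic question, in order (hypothetical field names)."""
    making_a_decision: bool              # Q1
    acting_changes_relationships: bool   # Q2
    plausible_confounder: bool           # Q3
    needs_what_if_or_why: bool           # Q4
    selection_bias_in_treatment: bool    # Q5


def needs_causal_inference(answers: DiagnosticAnswers) -> bool:
    """Apply the rule: yes to Q1 alone, or yes to at least two questions."""
    yes_count = sum(astuple(answers))
    return answers.making_a_decision or yes_count >= 2


# A pure churn-prediction task: no "yes" answers.
churn_scoring = DiagnosticAnswers(False, False, False, False, False)

# Choosing which retention offer to send: a decision, with self-selected users.
retention_offer = DiagnosticAnswers(True, True, False, True, True)

print(needs_causal_inference(churn_scoring))
print(needs_causal_inference(retention_offer))
```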

Which Causal Method Fits Your Problem?

Once you know you need causal inference, the next question is which method. This matrix maps common situations to the right tool.

If you’re unsure where to start: begin with a DAG. Draw the causal relationships you believe exist between your treatment, outcome, and potential confounders. Even a rough DAG makes your assumptions explicit, which is the single most important step. You can refine the estimation method afterward.

A DoWhy Workflow in Practice

Here’s a concrete example: measuring whether a customer loyalty program actually increases annual spending (versus loyal customers who would spend more anyway self-selecting into the program).

```python
# Install: pip install dowhy

from dowhy import CausalModel

# Assumption: `df` is a pandas DataFrame you have already loaded, with a binary
# `loyalty_program` column, a numeric `annual_spending` column, and the
# confounder columns listed in `common_causes` below.

# Step 1: MODEL your causal assumptions as a DAG
# Income affects both loyalty signup AND spending (confounder)
model = CausalModel(
    data=df,
    treatment="loyalty_program",
    outcome="annual_spending",
    common_causes=["income", "prior_purchases", "age"],
)

# Step 2: IDENTIFY the causal estimand
# DoWhy determines what statistical quantity answers your question
identified = model.identify_effect()
# Target: E[annual_spending | do(loyalty_program=1)]
#       - E[annual_spending | do(loyalty_program=0)]

# Step 3: ESTIMATE the causal effect
estimate = model.estimate_effect(
    identified,
    method_name="backdoor.propensity_score_matching",
)
print(f"Causal effect: ${estimate.value:.2f}/yr")

# Step 4: REFUTE your own result
# Add a random variable that shouldn't affect the estimate
refutation = model.refute_estimate(
    identified, estimate,
    method_name="random_common_cause",
)
print(refutation)
# If the estimate barely moves when a random common cause is added,
# it has passed this particular robustness check
```

Four steps. Model your assumptions, identify the estimand, estimate the effect, then try to break your own result. The DoWhy documentation provides full tutorials on integrating EconML estimators for more advanced use cases (DML, causal forests, instrumental variables).

The refutation step deserves emphasis. In standard ML, you validate with held-out test sets. In causal inference, you validate by trying to destroy your own estimate: adding random confounders, using placebo treatments, running the analysis on data subsets. If the effect survives, you have something real. If it collapses, you’ve saved yourself from a costly wrong decision.
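
To show what a placebo check does, here is a hand-rolled sketch on synthetic data. It is not DoWhy's implementation (DoWhy offers comparable checks through `refute_estimate`, such as its placebo treatment and data subset refuters). The data-generating numbers, the variable names, and the plain regression-adjustment estimator are all assumptions made for illustration. The idea: shuffle the treatment column so it cannot have caused anything, re-run the same estimator, and confirm the "effect" drops to roughly zero.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 20_000

# Synthetic loyalty-program data with a known true effect of 100.
income = rng.normal(size=n)                                   # confounder
enrolled = (income + rng.normal(size=n) > 0).astype(float)    # signup depends on income
spending = 100.0 * enrolled + 50.0 * income + rng.normal(scale=20.0, size=n)


def adjusted_effect(treatment, confounder, outcome):
    """Regression adjustment: coefficient on treatment with the confounder held fixed."""
    design = np.column_stack([np.ones(len(outcome)), treatment, confounder])
    coefs, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coefs[1]


# Estimate with the real treatment column.
estimate = adjusted_effect(enrolled, income, spending)

# Placebo check: a randomly permuted treatment should show no effect.
placebo_effects = [
    adjusted_effect(rng.permutation(enrolled), income, spending)
    for _ in range(200)
]
placebo_mean = float(np.mean(placebo_effects))

print(f"estimated effect: {estimate:.1f}")
print(f"mean placebo effect over 200 shuffles: {placebo_mean:.2f}")
```

If the placebo runs had produced effects as large as the real estimate, the estimator would be picking up structure unrelated to the treatment, and the original number should not be trusted.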

If your model’s recommendations would change the relationships it learned from, you’ve left prediction territory. Welcome to causation.

What Changes Now

The convergence is already visible. Tech companies are hiring for causal reasoning: Microsoft built the entire PyWhy stack, Uber released CausalML, Netflix published research on causal inference in production. The skillset is no longer confined to economics PhD programs and epidemiology departments. It’s entering production ML teams.

Universities are adapting. Hernán’s classification of data science tasks into Description, Prediction, and Causal Inference (published through the Harvard School of Public Health) is becoming a standard pedagogical framework. The question is no longer “should data scientists learn causal inference?” It’s “how quickly can they?”

For the individual practitioner, the return on learning causal methods is asymmetric. The data scientist who can answer “what will happen?” is valuable. The one who can answer “what should we do?” (and show why the answer is robust) commands a different kind of trust in the room. That trust translates directly into influence over decisions, resource allocation, and strategy.

The learning curve is real but shorter than it looks. If you understand conditional probability and have built regression models, you already have 60% of the foundation. The remaining 40% is learning to think in graphs (DAGs), understanding the difference between conditioning and intervening, and knowing when to reach for which estimator. The PyWhy documentation, Brady Neal’s free online course on causal inference, and Pearl’s accessible The Book of Why cover that gap in weeks, not years.

Remember the health-tech company from the opening? After the readmission spike, they rebuilt their analysis using DoWhy. They drew a DAG, identified that socioeconomic factors were confounders (not causes) of readmission, and isolated the actual causal drivers: medication adherence and follow-up appointment access. They redesigned their intervention around those two levers.

Readmission rates dropped 18%.

The model’s accuracy didn’t change. What changed was the question it answered.

The next time a stakeholder asks “what should we do?”, you have two options: hand them a correlation and hope it survives contact with reality, or hand them a causal estimate with a refutation report showing exactly how hard you tried to break it. The tools exist. The math is settled. The workflow is four steps.

The only question left is whether you’ll keep predicting, or start causing.

References

- Pearl, J. & Mackenzie, D. (2018). The Book of Why: The New Science of Cause and Effect. Basic Books.

- Pearl, J. & Bareinboim, E. (2022). “On Pearl’s Hierarchy and the Foundations of Causal Inference.” Technical Report R-60, UCLA Cognitive Systems Laboratory.

- Hernán, M.A. (2019). “A Second Chance to Get Causal Inference Right: A Classification of Data Science Tasks.” CHANCE, 32(1), 42-49.

- Luijken, K. et al. (2021). “Prediction or causality? A scoping review of their conflation within current observational research.” European Journal of Epidemiology, 37, 35-46.

- Hernán, M.A. & Robins, J.M. (2020). “Causal inference and counterfactual prediction in machine learning for actionable healthcare.” Nature Machine Intelligence, 2, 369-375.

- Sharma, A. & Kiciman, E. (2020). DoWhy: An End-to-End Library for Causal Inference. Microsoft Research / PyWhy.

- Battocchi, K. et al. (2019). EconML: A Python Package for ML-Based Heterogeneous Treatment Effect Estimation. Microsoft Research / ALICE.

- Fortune Business Insights. (2025). “Causal AI Market Size, Industry Share | Forecast, 2026-2034.”

- Charig, C.R. et al. (1986). “Comparison of treatment of renal calculi by open surgery, percutaneous nephrolithotomy, and extracorporeal shockwave lithotripsy.” BMJ, 292(6524), 879-882.

- PyWhy Contributors. (2024). “Tutorial on Causal Inference and its Connections to Machine Learning (Using DoWhy+EconML).” PyWhy Documentation.