Introduction

Logistics is an industry that often operates with surprising inefficiency: manual processes, piles of paperwork, legal complexities. Many companies still run on paper or Excel and don’t even collect data on their shipments.

But what if a company is large enough to save millions, or even hundreds of millions, of dollars through optimization (to say nothing of the environmental impact)? Or what if a company is small but poised for rapid growth?

Optimization is often non-existent or rudimentary, designed for operational convenience rather than maximizing savings. The industry is clearly lagging behind, yet there’s a TON of money on the table. Shipment networks span the globe, from Alaska to Sydney. I won’t bore you with market size statistics here. Insiders already know the scale, and outsiders can make an educated (or not so educated) guess.

And that’s where I came in. As a Data Science and Machine Learning specialist, I found myself in a large, fast-growing logistics company. Crucially, the team there wasn’t just going through the motions; they genuinely wanted to optimize. This led to the creation of a line-haul optimization project that I led for two years, and that’s the story I’m here to tell.

This project will always hold a warm spot in my heart, even though it never fully made it to production. I believe it holds huge potential, especially in the combination of logistics and RL’s unique ability to generalize decision-making.

While traditional optimization projects usually focus on maximizing the objective function or execution speed, the most interesting metric here is how many unseen cases we can solve with the same model (zero-shot or few-shot).

In other words, we’re aiming for a generalizable zero-shot policy.

Ideally, we train an agent, drop it into new conditions (ones it has never seen), and it just works, without any retraining or with only minimal fine-tuning. We don’t need perfection; we just need it to perform ‘good enough’ to not breach the SLA.

Then we can say: ‘Cool, the agent generalized this case, too.’

I’m confident that this approach can yield models capable of ever-increasing generalization over time. I believe this is the future of the industry.

And as one of my favorite stand-up comedians once said:

Eventually, somebody will do it anyway. Let it be us.

Business Context

The company had scaled rapidly, growing into a network of over 100 line-haul terminals. At this magnitude, manual scheduling reached its operational limit. Once established, a schedule, along with its underlying business contracts and arrangements, would often remain static for months without a single change.

We observed a consistent inefficiency: trucks were frequently dispatched with suboptimal loads, either underutilized (driving up unit costs) or bottlenecked by last-minute overflows.

The financial impact of this inefficiency was significant. In a network of this size, even a 1% increase in vehicle utilization translates to millions of dollars in annual savings. Therefore, maximizing vehicle utilization became the primary lever for cost reduction.

Big Picture Problem

We had access to historical shipment data. While the storage format was far from convenient, the volume was sufficient for modeling. Thanks to the efforts of my data engineering and data science colleagues, this raw data was transformed into a clean, usable state (I’ll cover the specific data engineering challenges in a separate article).

My initial goal was to generate a ‘good’ schedule. A schedule is defined here as a tabular dataset where every row represents a physical movement (shipment):

- Timestamp: Hourly precision.

- Origin & Destination: The specific edge in the graph.

- Vehicle Type: The discrete asset class (e.g., 20-ton semi, 5-ton van, etc.).

- Load Manifest: The specific set of aggregated ‘pallets’ packed inside.
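As a rough illustration of the four columns above, one schedule row could be modeled as a small record type. This is only a sketch: the class name `ScheduleRow`, the field names, and the hub and pallet identifiers are hypothetical and not taken from the project.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Tuple


@dataclass(frozen=True)
class ScheduleRow:
    """One physical movement (shipment). All names here are illustrative."""
    timestamp: datetime              # departure time, hourly precision
    origin: str                      # source terminal of the graph edge
    destination: str                 # target terminal of the graph edge
    vehicle_type: str                # discrete asset class, e.g. "semi_20t"
    load_manifest: Tuple[str, ...]   # IDs of the aggregated pallets on board

    def __post_init__(self) -> None:
        # Enforce the hourly precision described in the text.
        t = self.timestamp
        if t.minute or t.second or t.microsecond:
            raise ValueError("timestamp must fall exactly on the hour")
        # A shipment must travel along an edge, not stay in place.
        if self.origin == self.destination:
            raise ValueError("origin and destination must differ")


row = ScheduleRow(
    timestamp=datetime(2024, 1, 1, 8),
    origin="HUB_A",
    destination="HUB_B",
    vehicle_type="semi_20t",
    load_manifest=("pallet-001", "pallet-002"),
)
```

A full schedule is then simply a list (or table) of such rows, ordered by timestamp.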

Therefore, building a schedule requires four distinct decisions:

- Choose what packages to ship. What can go wrong: if low-priority packages are sent first, valuable or urgent cargo might get stranded at the warehouse. We don’t want that, because the penalty is higher for the more valuable packages.

- Choose the next warehouse (where to ship). Essentially, this is a routing problem: selecting the optimal ‘next edge’ on the graph for every single package.

- Choose vehicle types and their quantity. This is a balancing act. What can go wrong: sending several small vehicles instead of one large one creates fleet inefficiency, while dispatching large trucks that drive mostly empty means paying for air. Conversely, under-provisioning the fleet leads to delays, costing us in both SLA penalties and reputation.

- Finally, inaction is also an action. For any given time step, the optimal move might be to send no trucks at all. To create an optimized schedule, the system must perfectly balance active shipments with ‘doing nothing’.

However, reality introduces additional complexities and constraints into the problem space:

- Pace of Change: Business rules are numerous, complex, and evolve rapidly. The real world can be far more complex and messier than a basic simulation. And changes in the real world lead to expensive and time-consuming code updates.

- Stochastic Demand: Demand is non-deterministic, unknown in advance, and dynamic (e.g., multiple visits to a customer within a window).

- Multi-Objective Optimization: We aren’t just minimizing cost; we’re balancing cost against SLA penalties (lateness) and fleet expenses.

So now we understand that we not only need to create a schedule, but also a system that respects dynamic demand, truck capacity, and numerous custom business rules, which can also change often. This crystallized into the following.

Wish List

- Low-Cost Reusability. We need the ability to reuse the mechanism for new tasks and contexts cheaply. Since real-world problems shift quickly, the solution must be flexible: adaptable to new settings without requiring us to retrain the model from scratch every time.

- Fast Inference. While slow training is acceptable if it yields stronger generalization, the inference (decision-making) must be fast.

- ‘Good Enough’ Effectiveness. The system doesn’t have to be perfect, but it must strictly adhere to the baseline SLA levels.

- Global Optimization. We need to optimize the system as a whole, rather than optimizing its individual components in isolation.

System Specs

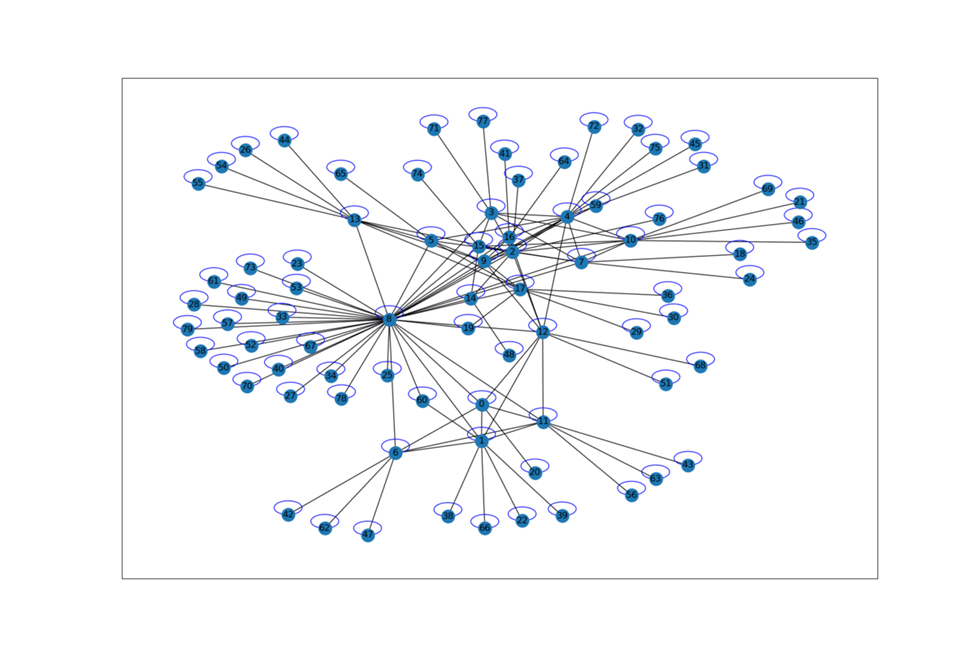

- Topology: Custom graph containing 2 to 100 nodes

- Decision frequency: 1-hour intervals, 480 steps/episode (representing 20 days)

- Agents: Decentralized hubs acting as independent decision-makers

- Constraints: Hard physical limits on vehicle volume (m³) and weight (kg). Hard limit on the number of vehicles dispatched from a terminal per hour.

- Objective: Minimize global cost while adhering to dynamic SLA windows.

- Primary metrics: Shipment cost, percentage of late packages (SLA violations), count of dispatched vehicles by type

- Secondary “Long-term” Metrics: Average transit time and vehicle capacity utilization.
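The specification above could be captured in a small, validated configuration object. This is a minimal sketch, not the project's actual config: `EnvSpec` and its field names are hypothetical, and the default of 10 dispatches per hour is a placeholder, since the real limit is not stated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvSpec:
    """Illustrative environment settings mirroring the list above."""
    num_nodes: int                     # terminals in the custom graph
    step_hours: int = 1                # one decision per hour
    steps_per_episode: int = 480       # episode length in decision steps
    max_dispatches_per_hour: int = 10  # placeholder value, not from the article

    def __post_init__(self) -> None:
        # The topology is described as 2 to 100 nodes.
        if not 2 <= self.num_nodes <= 100:
            raise ValueError("num_nodes must be between 2 and 100")

    @property
    def episode_days(self) -> float:
        # 480 steps * 1 hour / 24 hours per day = 20 days
        return self.steps_per_episode * self.step_hours / 24


spec = EnvSpec(num_nodes=12)
```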

Why Not Standard Solvers?

Spoiler: They can’t cut it, and they aren’t good enough.

Naturally, we started by exploring standard solvers and off-the-shelf tools like Google OR-Tools. However, the consensus was discouraging: these tools would either solve our actual problem poorly, or they would perfectly solve a different, imaginary version of the problem. Ultimately, I concluded that this approach was a dead end.

Linear Optimization

This is the simplest and cheapest approach, but it has a fatal flaw: a linear formulation fails to account for temporal dynamics (every step depends on the previous one).

Essentially, LP assumes the entire optimization problem fits into a single, static snapshot. This is fundamentally incorrect and divorced from reality, where every action in the network creates ripple effects elsewhere.

Furthermore, the sheer volume of business rules makes it practically impossible to cram them all into a “flat” solver. In short, while Linear Programming is a great tool, it is too rigid for a problem of this magnitude.

Genetic Algorithms

Genetic Algorithms (GA) were closer in philosophy to what we needed. While they do work, they come with significant drawbacks of their own.

First, slow inference. To get a result, you essentially have to run the optimization from scratch every time (evolving the population). You cannot simply “train” a model and freeze the weights, because there are no weights to freeze. Consequently, the system’s response time is measured in seconds or even minutes, not the milliseconds typical of a neural network or a heuristic. In a production environment dealing with hundreds of hubs in real time, this becomes a major bottleneck.

Second, lack of determinism. If you run the scheduler twice on the same dataset, a GA can yield two completely different schedules. Business customers usually don’t like that very much, which can lead to trust issues.

Why not Pure RL?

Theoretically, one could try to solve the entire problem end-to-end using pure Reinforcement Learning. But that’s definitely the hard way.

A potential pure RL solution would take one of two forms: either a single “God Mode” Agent that sees everything and allocates every package to every truck on every route at every step, or a team of Sequential Agents acting one after another.

God-Mode Agent

In the first case, the action space becomes unmanageable. You aren’t just picking a route: you have to choose each truck (from N types) K times for every direction. With packages, it gets even worse: you don’t just need to select a subset of cargo, you have to assign specific packages to specific trucks. Plus, you retain the option to leave a package at the warehouse.

Even with a small fleet, the number of ways to assign specific packages to specific trucks is astronomical. Asking a neural network to explore this entire space from scratch is inefficient. It would spend eons just trying to figure out which package fits into which bin.
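To put a number on "astronomical", here is a back-of-the-envelope count under a simplifying assumption: each package independently goes onto one of the available trucks or stays at the warehouse, and capacity limits are ignored. The function name `assignment_count` is illustrative only.

```python
def assignment_count(num_packages: int, num_trucks: int) -> int:
    """Number of package-to-truck assignments when every package can go
    on any one truck or stay behind (capacity constraints ignored)."""
    options_per_package = num_trucks + 1  # +1 for "leave it at the warehouse"
    return options_per_package ** num_packages


# A single warehouse, a single time step, only three trucks:
for packages in (10, 50, 100):
    count = assignment_count(packages, num_trucks=3)
    print(f"{packages:>3} packages -> about 10^{len(str(count)) - 1} assignments")
```

With just 3 trucks and 100 packages, the count already has 61 digits, and that is before multiplying across warehouses, vehicle types, and 480 time steps.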

Sequential Agents

A chain of agents passing packages down the line would create a non-stationarity nightmare.

While Agent 1 is learning, its behavior is essentially random. Agent 2 tries to adapt to Agent 1, but since Agent 1 keeps changing its strategy, Agent 2 can never stabilize. Instead of solving logistics, each agent is forced to endlessly adapt to its neighbor’s instability. It becomes a case of the blind leading the blind, unlikely to converge in any reasonable time.

Furthermore, pure RL struggles to learn hard constraints (like maximum weight limits) without incurring massive penalties. It tends to “hallucinate” solutions: outputs that look efficient but are physically impossible.

On the other hand, we have Linear Programming (LP): a fast, simple solver that handles hard constraints natively. The temptation to carve out a sub-problem and offload it to LP was too great to resist.

And that is why I chose a hybrid approach.

Implemented Solution

MARL + LP Hybrid Architecture

Let’s build an RL agent that observes the state of the logistics network and orchestrates the flow of packages, deciding exactly what volume of cargo moves between warehouses at any given moment. Ideally, this agent makes decisions strategically, factoring in the global state of the system rather than just optimizing individual warehouses in isolation.

Each agent represents a specific warehouse responsible for shipping packages to its neighbors. We then connect these agents into a multi-agent network. Since every action taken by an agent corresponds to a shipment to one or more destinations, the aggregate sequence of these actions constitutes the final schedule.

Technically, we implemented a Multi-Agent Reinforcement Learning (MARL) framework. The RL environment trains the algorithms to generate viable transportation schedules for real-world shipments. Crucially, this project includes both the environment creation and the agent training pipelines, ensuring that the solution can adapt (via continual learning) to increasingly complex scenarios with minimal human intervention.

What agents see

Below are the key observations (model inputs) fed into the agent (I’ll cover more of the implementation details in Part 2).

- Local Inventory: The volume of packages at each warehouse.

- In-Transit Volume: The volume of packages currently traveling on the edges between warehouses.

- Cargo Value: The total monetary value of the inventory (crucial for risk management) at each warehouse.

- SLA Heatmap: The nearest deadlines for the current stock (identifying urgent cargo).

- Inbound Forecast: The volume of packages expected to arrive within the next 24 hours.

- Heuristic Hints: Used exclusively during the imitation learning stage to bootstrap training.
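One plausible way to hand the inputs listed above to a policy network is to collect them per warehouse and flatten them into a fixed-order vector. The sketch below is an assumption about the layout, not the project's actual encoding: `WarehouseObservation` and all field names are hypothetical, and heuristic hints are left out because they apply only during imitation learning.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WarehouseObservation:
    """Illustrative per-warehouse observation; values assumed pre-scaled."""
    local_inventory: float          # volume of packages in stock
    in_transit_volume: List[float]  # one entry per adjacent edge
    cargo_value: float              # total monetary value of the stock
    sla_heatmap: List[float]        # share of stock per deadline bucket
    inbound_forecast_24h: float     # volume expected in the next 24 hours

    def to_vector(self) -> List[float]:
        # Fixed ordering so the same index always means the same feature.
        return [
            self.local_inventory,
            *self.in_transit_volume,
            self.cargo_value,
            *self.sla_heatmap,
            self.inbound_forecast_24h,
        ]


obs = WarehouseObservation(
    local_inventory=0.4,
    in_transit_volume=[0.1, 0.0],
    cargo_value=0.7,
    sla_heatmap=[0.2, 0.5, 0.3],
    inbound_forecast_24h=0.25,
)
vector = obs.to_vector()
```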

Version 1. Agents Slicing a PriorityQueue

In this version, packages are lined up in a priority queue, sorted in descending order based on a simple formula: Priority = Value × Urgency (proximity to deadline). The RL agent “slices” a portion of this queue by selecting a fraction of the top packages and deciding which warehouse to send them to.

We use heuristics to pre-filter the options, discarding packages we definitely don’t want to ship yet, or ruling out nonsensical destinations (e.g., shipping a package in the opposite direction of its destination).

Once the RL agent selects the what and the where, the Linear Programming solver steps in to pick the quantity and type of vehicles. The LP enforces hard constraints on weight, volume, and fleet availability to ensure the simulation doesn’t violate the laws of physics.

In Version 1, a single action consists of sending packages to one neighbor only. The volume is determined by the “fraction” (0.0 to 1.0) chosen by the agent. “Doing nothing” is simply choosing a fraction of 0.
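The slicing step of Version 1 can be sketched as follows. The exact urgency formula is not given in the text, so this example assumes urgency is the inverse of the hours left until the deadline; `Package`, `priority`, and `slice_queue` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Package:
    package_id: str
    value: float
    hours_to_deadline: float


def priority(pkg: Package) -> float:
    # Assumption: urgency grows as the deadline approaches (1 / hours left),
    # clamped so overdue or imminent packages do not divide by zero.
    urgency = 1.0 / max(pkg.hours_to_deadline, 1.0)
    return pkg.value * urgency


def slice_queue(packages: List[Package], fraction: float) -> List[Package]:
    """Return the top `fraction` of packages by priority (0.0 = do nothing)."""
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be within [0.0, 1.0]")
    ranked = sorted(packages, key=priority, reverse=True)
    return ranked[: int(fraction * len(ranked))]


stock = [
    Package("a", value=100.0, hours_to_deadline=48.0),
    Package("b", value=50.0, hours_to_deadline=2.0),
    Package("c", value=10.0, hours_to_deadline=1.0),
    Package("d", value=500.0, hours_to_deadline=100.0),
]
selected = slice_queue(stock, fraction=0.5)
print([p.package_id for p in selected])
```

Note how the cheap but nearly overdue packages outrank the expensive ones with distant deadlines under this particular urgency assumption.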

But then, it hit me!

Version 2. Agents Sending Trucks

TL;DR: Instead of selecting packages, we built an agent that selects how many trucks to dispatch to each destination. The Linear Programming (LP) solver then decides exactly which packages to pack into these trucks.

What if the agent controlled the fleet capacity directly? This allows the LP solver to handle the low-level “bin packing” work, while the RL agent focuses purely on high-level flow management. This is exactly what we needed!

Here is the new division of labor:

RL Agent: Fleet Manager. Decides the quantity of vehicles and their destinations.

- Intuition: It looks at the map, checks the calendar, and shouts: “Send 5 trucks to the North Hub!” It handles the flow management.

- Skill: Strategy, foresight, and balancing.

LP Solver: Dock Worker. Selects the specific vehicle types (optimizing the fleet mix) and picks the specific packages to pack.

- Intuition: It takes the “5 trucks” order and the pile of boxes, then packs them perfectly to maximize value density.

- Skill: Tetris, algebra, and physical validity.

Previously, the agent controlled a “fraction of the queue,” which determined the package count, which determined the truck count, which finally determined the reward. Now, the agent controls the truck count directly. The link between action and reward became much shorter and more predictable, making training faster and more stable. In technical terms, we significantly reduced the stochastic noise in the reward signal. The LP now optimizes only the packing and fleet mix after the strategic capacity decision has already been made.

But the engineering benefits didn’t stop there. Since the LP now selects the packages, we no longer need to maintain a sorted priority queue. This simplified the architecture in three significant ways:

- Concurrency: We eliminated the multiprocessing headaches associated with sharing complex PriorityQueue objects between processes.

- Vectorization: We no longer need to iterate through a queue item by item (a slow Python loop). We can now rewrite everything using matrix operations, which unlocked huge potential for speed optimization. Plus, the code became significantly shorter and cleaner.

- Multi-destination actions: The agent can now dispatch X trucks to N different warehouses in a single step (unlike V1, which was limited to one destination per step).

It became immediately clear that this was the winning architecture.
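The Version 2 division of labor can be illustrated with a toy example. To keep it dependency-free, the packing step below is a greedy value-density heuristic standing in for the LP solver, which is a deliberate simplification: it assumes a single vehicle type, pools the capacity of all trucks on a route, and ignores weight limits. All names (`Package`, `pack_trucks`, the hub labels) are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Package:
    package_id: str
    destination: str
    value: float
    volume_m3: float


def pack_trucks(
    packages: List[Package],
    trucks_per_destination: Dict[str, int],  # the RL agent's action
    truck_volume_m3: float,
) -> Dict[str, List[str]]:
    """Greedy stand-in for the LP: fill the granted capacity per destination,
    taking packages in order of value per cubic metre."""
    manifest: Dict[str, List[str]] = {}
    for destination, truck_count in trucks_per_destination.items():
        capacity = truck_count * truck_volume_m3  # pooled across trucks
        candidates = sorted(
            (p for p in packages if p.destination == destination),
            key=lambda p: p.value / p.volume_m3,
            reverse=True,
        )
        loaded = []
        for pkg in candidates:
            if pkg.volume_m3 <= capacity:
                loaded.append(pkg.package_id)
                capacity -= pkg.volume_m3
        manifest[destination] = loaded
    return manifest


stock = [
    Package("p1", "north", value=100.0, volume_m3=10.0),
    Package("p2", "north", value=30.0, volume_m3=10.0),
    Package("p3", "north", value=90.0, volume_m3=15.0),
    Package("p4", "south", value=5.0, volume_m3=1.0),
]
# One multi-destination action: one truck north, nothing south (idle).
action = {"north": 1, "south": 0}
manifest = pack_trucks(stock, action, truck_volume_m3=20.0)
print(manifest)
```

The agent's action is just a vector of truck counts, so "doing nothing" on a route is a zero in that vector, and the packing logic never needs a sorted queue shared between processes.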

Scale-Invariant Observation Space and Generalization

TL;DR: I use histogram state representations normalized to 0–1 instead of absolute values to make the agents transferable to new conditions.

A core pillar of this project’s philosophy is universality: the ability to reuse the solution across different tasks and new conditions without retraining. However, standard RL requires a rigidly fixed action and observation space.

To reconcile this, we normalized the observation space to make it scale-invariant. Instead of tracking raw counts (e.g., “how many packages were sent”), we track ratios (e.g., “what percentage of the total backlog was sent”). This allows the agent to operate on a higher level of abstraction where absolute numbers are irrelevant.

The result is a model capable of generalizing across different scenarios, enabling zero-shot transfer across nodes with vastly different capacities.
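A small example shows why ratios and normalized histograms make the observation independent of warehouse size. The bucket edges (24 and 72 hours) and the function names are assumptions chosen for illustration; the project's actual binning is not described here.

```python
from typing import List, Sequence


def backlog_ratio(sent: int, backlog: int) -> float:
    """Share of the backlog that was shipped, in [0, 1]."""
    return sent / backlog if backlog else 0.0


def deadline_histogram(
    hours_to_deadline: Sequence[float],
    bin_edges: Sequence[float] = (24.0, 72.0),  # assumed bucket boundaries
) -> List[float]:
    """Fraction of packages per deadline bucket; sums to 1 for non-empty stock."""
    counts = [0] * (len(bin_edges) + 1)
    for hours in hours_to_deadline:
        # Index of the bucket = number of edges the value has passed.
        bucket = sum(hours >= edge for edge in bin_edges)
        counts[bucket] += 1
    total = len(hours_to_deadline)
    return [c / total for c in counts] if total else [0.0] * len(counts)


small_hub = [5.0, 30.0, 30.0, 100.0]   # 4 packages
large_hub = small_hub * 100            # 400 packages, same deadline mix

print(deadline_histogram(small_hub))
print(deadline_histogram(large_hub))
```

Both hubs produce the same histogram even though one holds a hundred times more packages, which is the property that lets one policy serve nodes of very different capacity.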

A Glimpse of the Performance

Agents Learned “LTL Consolidation” Behavior

TL;DR: Increased shipment cost led to more idle actions and fewer vehicles.

One of the most impressive emergent behaviors was the agents’ ability to perform LTL (Less-Than-Truckload) Consolidation. At the beginning of training, the agents were trigger-happy, dispatching many partially filled trucks at every step. Over time, their behavior shifted.

The shipment cost is calculated as the product of the vehicle cost and the shipment cost multiplier. When the shipment cost multiplier increases, a shipment costs more in relation to the value of the packages. That gives us a simple way to adjust the shipment cost part of the reward manually.
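The effect of the multiplier can be seen in a toy calculation. Only the product formula (vehicle cost times multiplier) comes from the text; the reward shape used here, delivered value minus shipment cost, is a simplified assumption that omits SLA penalties, and the function names and numbers are made up for illustration.

```python
def shipment_cost(vehicle_cost: float, multiplier: float) -> float:
    """Cost of one dispatched vehicle, as described in the text."""
    return vehicle_cost * multiplier


def step_reward(
    delivered_value: float,
    vehicle_cost: float,
    vehicles_sent: int,
    multiplier: float,
) -> float:
    # Assumed simplified reward: value moved minus what the trucks cost.
    return delivered_value - vehicles_sent * shipment_cost(vehicle_cost, multiplier)


# Same cargo, shipped either in two half-full trucks or one full truck.
for multiplier in (1.0, 3.0):
    two_half_full = step_reward(1000.0, 100.0, vehicles_sent=2, multiplier=multiplier)
    one_full = step_reward(1000.0, 100.0, vehicles_sent=1, multiplier=multiplier)
    print(multiplier, two_half_full, one_full, one_full - two_half_full)
```

In this toy setup the advantage of the single full truck grows in direct proportion to the multiplier, so raising it puts steadily more pressure on the agent to wait and consolidate.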

As we increased the shipment cost multiplier (making logistics more expensive relative to the package value), the agents learned to be patient. They began choosing more “idle” actions, effectively accumulating inventory to send fewer, fuller trucks.

Because it’s costly to send a truck half-empty (or half-full, depending on your worldview), agents started waiting to fill the trucks closer to 100% capacity. In other words, the agents learned to optimize vehicle utilization indirectly, purely as a byproduct of the cost/reward function.

On the other hand, sending fewer vehicles led to a higher number of overdue packages. I believe this kind of trade-off (cost vs. speed) should be decided by each business independently, based on their specific strategy and SLAs. In our specific case, we had a hard cap on the percentage of allowed delays, hence we could optimize by staying below that cap.

More results and experiments will be shown in the upcoming Part 3.

Constraints and Benefits

As I mentioned earlier, high-quality data is crucial for this engine. If you don’t have data, you have no simulation, no schedules, and no package flow forecasts: the very foundation of the entire system.

You also need the willingness to adapt your business processes. In practice, this is often met with resistance. And, of course, you need the raw compute power (substantial RAM + CPU) to run the simulations.

But if you can overcome these hurdles, you might find that your logistics network has transformed into something much more powerful, a network that:

- Can withstand overloads, peak seasons, and unexpected events. This is because you have a fast, reliable way to generate a new schedule immediately by simply applying your pre-trained agents to the new data.

- Is more efficient than the competition. MARL has the potential to achieve not just local optimization, but global optimization of the entire network over a continuous time horizon.

- Can rapidly expand or contract as needed. This flexibility is achieved precisely through the model’s generalization capabilities.

All the best to everyone, and may your shipments always be fast and reliable!

See the upcoming Half 2 for the implementation specifics and methods I used to make this work!