Suppose you’re analyzing a small dataset:

\[X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]\]

You want to calculate some summary statistics to get an idea of the distribution of this data, so you use numpy to calculate the mean and variance.

import numpy as np

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

mean = np.mean(X)

var = np.var(X)

print(f"Mean={mean:.2f}, Variance={var:.2f}")

Your output looks like this:

Mean=10.00, Variance=10.60

Nice! Now you have an idea of the distribution of your data. However, your colleague comes along and tells you that they also calculated some summary statistics on this same dataset using the following code:

import pandas as pd

X = pd.Series([15, 8, 13, 7, 7, 12, 15, 6, 8, 9])

mean = X.mean()

var = X.var()

print(f"Mean={mean:.2f}, Variance={var:.2f}")

Their output looks like this:

Mean=10.00, Variance=11.78

The means are the same, but the variances are different! What gives?

This discrepancy arises because numpy and pandas use different default equations for calculating the variance of an array. This article will mathematically define the two variances, explain why they differ, and show how to use either equation in different numerical libraries.

Two Definitions

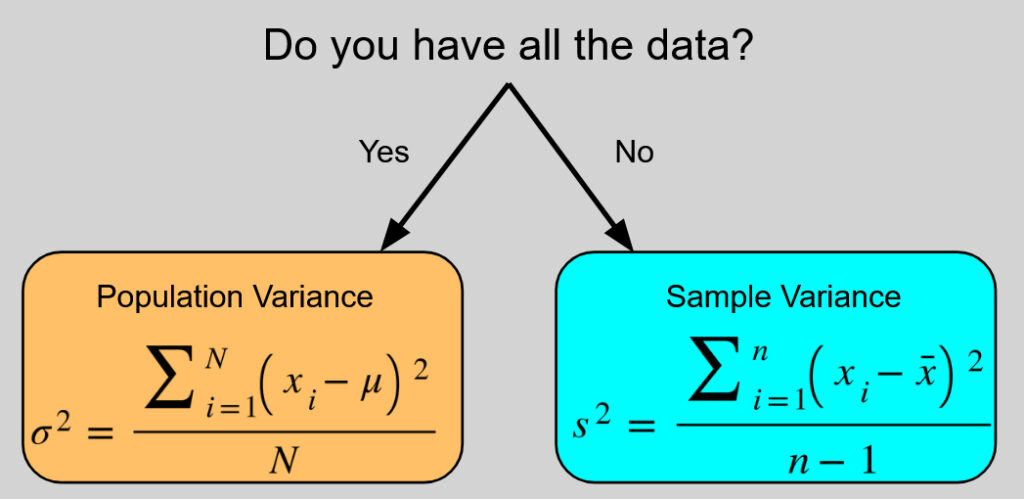

There are two standard ways to calculate the variance, each meant for a different purpose. It comes down to whether you’re calculating the variance of the entire population (the whole group you’re studying) or just a sample (a smaller subset of that population you actually have data for).

The population variance, \(\sigma^2\), is defined as:

\[\sigma^2 = \frac{\sum_{i=1}^N (x_i - \mu)^2}{N}\]

Whereas the sample variance, \(s^2\), is defined as:

\[s^2 = \frac{\sum_{i=1}^n (x_i - \bar{x})^2}{n-1}\]

(Note: \(x_i\) represents each individual data point in your dataset. \(N\) represents the total number of data points in a population, \(n\) represents the total number of data points in a sample, \(\mu\) is the population mean, and \(\bar{x}\) is the sample mean.)

Notice the two key differences between these equations:

- In the numerator’s sum, \(\sigma^2\) is calculated using the population mean, \(\mu\), whereas \(s^2\) is calculated using the sample mean, \(\bar{x}\).

- In the denominator, \(\sigma^2\) divides by the total population size \(N\), whereas \(s^2\) divides by the sample size minus one, \(n-1\).

It should be noted that the distinction between these two definitions matters the most for small sample sizes. As \(n\) grows, the difference between dividing by \(n\) and dividing by \(n-1\) becomes less and less significant.
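As a quick worked check, both definitions can be applied by hand to the dataset from the introduction, treating its ten values first as a full population and then as a sample. The mean is 10 in either case, so only the denominator changes:

```latex
\begin{align*}
\sum_{i=1}^{10}(x_i - 10)^2 &= 25 + 4 + 9 + 9 + 9 + 4 + 25 + 16 + 4 + 1 = 106 \\
\sigma^2 &= \frac{106}{10} = 10.6 \\
s^2 &= \frac{106}{9} \approx 11.78
\end{align*}
```

These match the two variances printed in the introduction (10.60 and 11.78).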

Why Are They Different?

When calculating the population variance, it’s assumed that you have all the data. You know the exact center (the population mean \(\mu\)) and exactly how far every point is from that center. Dividing by the total number of data points \(N\) gives the true, exact average of those squared differences.

However, when calculating the sample variance, it is not assumed that you have all the data, so you do not have the true population mean \(\mu\). Instead, you only have an estimate of \(\mu\), which is the sample mean \(\bar{x}\). It turns out that using the sample mean instead of the true population mean tends to underestimate the true population variance on average.

This happens because the sample mean is calculated directly from the sample data, meaning it sits at the exact mathematical center of that specific sample. As a result, the sum of squared differences from the sample mean can never be larger than the sum of squared differences from the true population mean, which leads to an artificially small sum of squared differences.

To correct for this underestimation, we apply what is called Bessel’s correction (named for German mathematician Friedrich Wilhelm Bessel): we divide not by \(n\) but by the slightly smaller \(n-1\), since dividing by a smaller number makes the final variance slightly larger.
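The bias can be seen in a small simulation. The sketch below is illustrative only: the normal population, its variance of 4, the sample size of 5, and the seed are all assumptions chosen for the demonstration, not values taken from the dataset above. It draws many samples, applies both denominators to each one, and averages the results.

```python
import numpy as np

# Illustrative assumptions: a normal population with known variance 4,
# many small samples of size 5, and a fixed seed for reproducibility.
rng = np.random.default_rng(0)
true_variance = 4.0
n = 5
num_samples = 100_000

# Each row is one sample of size n drawn from the population.
samples = rng.normal(loc=0.0, scale=np.sqrt(true_variance), size=(num_samples, n))

# Sum of squared differences from each sample's own mean.
sample_means = samples.mean(axis=1, keepdims=True)
sum_of_squares = ((samples - sample_means) ** 2).sum(axis=1)

# Apply both denominators to the same sums.
divide_by_n = sum_of_squares / n
divide_by_n_minus_1 = sum_of_squares / (n - 1)

print(f"True variance:           {true_variance:.2f}")
print(f"Average dividing by n:   {divide_by_n.mean():.2f}")
print(f"Average dividing by n-1: {divide_by_n_minus_1.mean():.2f}")
```

Under these assumptions, the divide-by-n estimates should average close to 4 × (n - 1)/n = 3.2, while the divide-by-(n - 1) estimates should average close to the true value of 4.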

Degrees of Freedom

So why divide by \(n-1\) and not \(n-2\) or \(n-3\) or any other correction that also increases the final variance? That comes down to a concept called the degrees of freedom.

The degrees of freedom refers to the number of independent values in a calculation that are free to vary. For example, imagine you have a set of three numbers, \(x_1, x_2, x_3\). You do not know what the values of these are, but you do know that their sample mean is \(\bar{x} = 10\).

- The first number, \(x_1\), could be anything (let’s say 8)

- The second number, \(x_2\), could also be anything (let’s say 15)

- Because the mean must be 10, \(x_3\) is not free to vary and must be the one number such that \(\frac{8 + 15 + x_3}{3} = 10\), which in this case is 7.

So in this example, even though there are 3 numbers, there are only two degrees of freedom, as imposing the sample mean removes the ability of one of them to vary freely.

In the context of variance, before making any calculations, we start with \(n\) degrees of freedom (corresponding to our \(n\) data points). The calculation of the sample mean essentially uses up one degree of freedom, so by the time the sample variance is calculated, there are \(n-1\) degrees of freedom left to work with, which is why \(n-1\) is what appears in the denominator.
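The same constraint can be checked numerically. In this small sketch (the variable names are illustrative), the third value is recovered from the other two and the mean, and the deviations of the introductory dataset from its own mean are shown to sum to zero, which is why only n - 1 of them can vary freely.

```python
# Three numbers with a known mean of 10: once two are chosen, the third is fixed.
target_mean = 10
x1, x2 = 8, 15
x3 = 3 * target_mean - x1 - x2
print(x3)  # 7

# Deviations from the sample mean sum to zero, so knowing any
# n - 1 of them determines the last one.
X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]
sample_mean = sum(X) / len(X)
deviations = [x - sample_mean for x in X]
print(sum(deviations))  # 0.0

# Recover the final deviation from the first n - 1 deviations alone.
last_deviation = -sum(deviations[:-1])
print(last_deviation == deviations[-1])  # True
```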

Library Defaults and How to Align Them

Now that we understand the math, we can finally solve the mystery from the beginning of the article! numpy and pandas gave different results because they default to different variance formulas.

Many numerical libraries control this using a parameter called ddof, which stands for Delta Degrees of Freedom. This represents the value subtracted from the total number of observations in the denominator.

- Setting ddof=0 divides the sum of squared differences by \(n\), calculating the population variance.

- Setting ddof=1 divides the sum of squared differences by \(n-1\), calculating the sample variance.

The same settings can also be used when calculating the standard deviation, which is just the square root of the variance.
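To make the role of ddof concrete, here is a minimal pure-Python sketch. The function name variance_with_ddof is hypothetical and written only for illustration; it is not how numpy or pandas implement variance internally, but it applies the same denominator, n - ddof.

```python
import math


def variance_with_ddof(data, ddof=0):
    """Illustrative helper: sum of squared deviations divided by (n - ddof)."""
    n = len(data)
    if n - ddof <= 0:
        raise ValueError("need more data points than ddof")
    mean = sum(data) / n
    sum_of_squares = sum((x - mean) ** 2 for x in data)
    return sum_of_squares / (n - ddof)


X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

population_variance = variance_with_ddof(X, ddof=0)
sample_variance = variance_with_ddof(X, ddof=1)

print(f"{population_variance:.2f}")  # 10.60
print(f"{sample_variance:.2f}")      # 11.78

# The standard deviation is the square root of the matching variance.
print(f"{math.sqrt(population_variance):.2f}")  # 3.26
print(f"{math.sqrt(sample_variance):.2f}")      # 3.43
```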

Here is a breakdown of how different popular libraries handle these defaults and how you can override them:

numpy

By default, numpy assumes you’re calculating the population variance (ddof=0). If you are working with a sample and want to use Bessel’s correction, you must explicitly pass ddof=1.

import numpy as np

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

# Sample variance and standard deviation

np.var(X, ddof=1)

np.std(X, ddof=1)

# Population variance and standard deviation (default)

np.var(X)

np.std(X)

pandas

By default, pandas takes the opposite approach. It assumes your data is a sample and calculates the sample variance (ddof=1). To calculate the population variance instead, you must pass ddof=0.

import pandas as pd

X = pd.Series([15, 8, 13, 7, 7, 12, 15, 6, 8, 9])

# Sample variance and standard deviation (default)

X.var()

X.std()

# Population variance and standard deviation

X.var(ddof=0)

X.std(ddof=0)

Python’s Built-in statistics Module

Python’s standard library does not use a ddof parameter. Instead, it provides explicitly named functions so there is no ambiguity about which formula is being used.

import statistics

X = [15, 8, 13, 7, 7, 12, 15, 6, 8, 9]

# Sample variance and standard deviation

statistics.variance(X)

statistics.stdev(X)

# Population variance and standard deviation

statistics.pvariance(X)

statistics.pstdev(X)

R

In R, the standard var() and sd() functions calculate the sample variance and sample standard deviation by default. Unlike the Python libraries, R does not have a built-in argument to easily switch to the population formula. To calculate the population variance, you must manually multiply the sample variance by \(\frac{n-1}{n}\); for the population standard deviation, multiply the sample standard deviation by the square root of that factor.

X <- c(15, 8, 13, 7, 7, 12, 15, 6, 8, 9)

n <- length(X)

# Sample variance and standard deviation (default)

var(X)

sd(X)

# Population variance and standard deviation

var(X) * ((n - 1) / n)

sd(X) * sqrt((n - 1) / n)

Conclusion

This article explored a frustrating yet often unnoticed trait of different statistical programming languages and libraries: they elect to use different default definitions of variance and standard deviation. An example was given where, for the same input array, numpy and pandas return different values for the variance by default.

This came down to the difference between how variance should be calculated for the entire statistical population being studied and how it should be calculated based on just a sample from that population, with different libraries making different choices about the default. Finally, it was shown that although each library has its default, all of them can be used to calculate both types of variance by using a ddof argument, a slightly different function, or a simple mathematical transformation.

Thanks for reading!