When I first started using Pandas, I wrote loops like this all the time:

for i in range(len(df)):
    if df.loc[i, "sales"] > 1000:
        df.loc[i, "tier"] = "high"
    else:
        df.loc[i, "tier"] = "low"

It worked. And I thought, “Hey, that’s fine, right?”

Turns out… not so much.

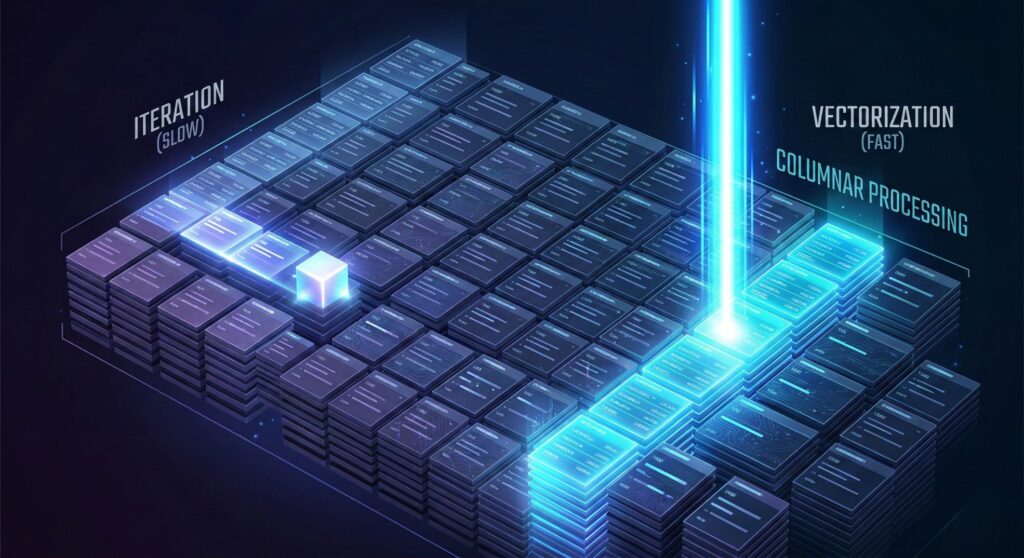

I didn’t realize it at the time, but loops like this are a classic beginner trap. They make Pandas do far more work than it needs to, and they sneak in a mental model that keeps you thinking row by row instead of column by column.

Once I started thinking in columns, things changed. Code got shorter. Execution got faster. And suddenly, Pandas felt like it was actually built to help me, not slow me down.

To show this, let’s use a tiny dataset we’ll reference throughout:

import pandas as pd

df = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"],
    "sales": [500, 1200, 800, 2000, 300]
})

print(df)

Output:

  product  sales
0       A    500
1       B   1200
2       C    800
3       D   2000
4       E    300

Our goal is simple: label each row as high if sales are greater than 1000, otherwise low.

Let me show you how I did it at first, and why there’s a better way.

The Loop Approach I Started With

Here’s the loop I used when I was learning:

for i in range(len(df)):
    if df.loc[i, "sales"] > 1000:
        df.loc[i, "tier"] = "high"
    else:
        df.loc[i, "tier"] = "low"

print(df)

It produces this result:

  product  sales  tier
0       A    500   low
1       B   1200  high
2       C    800   low
3       D   2000  high
4       E    300   low

And yes, it works. But here’s what I learned the hard way:

Pandas is doing a tiny operation for each row, instead of efficiently handling the whole column at once.

This approach doesn’t scale: what feels fine with 5 rows slows down with 50,000 rows.

More importantly, it keeps you thinking like a beginner, row by row, instead of like a professional Pandas user.

Timing the Loop (The Moment I Realized It Was Slow)

When I first ran my loop on this tiny dataset, I thought, “No problem, it’s fast enough.” But then I wondered… what if I had a bigger dataset?

So I tried it:

import pandas as pd
import time

# Make a bigger dataset (500,000 rows)
df_big = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"] * 100_000,
    "sales": [500, 1200, 800, 2000, 300] * 100_000
})

# Time the loop
start = time.time()

for i in range(len(df_big)):
    if df_big.loc[i, "sales"] > 1000:
        df_big.loc[i, "tier"] = "high"
    else:
        df_big.loc[i, "tier"] = "low"

end = time.time()
print("Loop time:", end - start)

Here’s what I got:

Loop time: 129.27328729629517

That’s 129 seconds.

Over two minutes just to label rows as "high" or "low".

That’s the moment it clicked for me. The code wasn’t just “a bit inefficient.” It was fundamentally using Pandas the wrong way.

And imagine this running inside a data pipeline, in a dashboard refresh, on millions of rows every single day.

Why It’s That Slow

The loop forces Pandas to:

- Access each row individually

- Execute Python-level logic for every iteration

- Update the DataFrame one cell at a time

In other words, it turns a highly optimized columnar engine into a glorified Python list processor.

And that’s not what Pandas is built for.
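To make that per-cell overhead concrete, here is a minimal sketch that runs the same labeling rule both ways on a smaller 5,000-row frame. The frame size, the helper names `label_with_loop` and `label_vectorized`, and the use of `time.perf_counter` are my own choices for illustration; exact timings will vary with your machine and Pandas version.

```python
import time

import numpy as np
import pandas as pd

# Hypothetical smaller dataset so the loop finishes quickly
df_small = pd.DataFrame({
    "sales": [500, 1200, 800, 2000, 300] * 1_000
})


def label_with_loop(frame):
    """Row-by-row version: one .loc read and one .loc write per row."""
    out = frame.copy()
    out["tier"] = None  # create the column up front
    for i in range(len(out)):
        if out.loc[i, "sales"] > 1000:
            out.loc[i, "tier"] = "high"
        else:
            out.loc[i, "tier"] = "low"
    return out["tier"]


def label_vectorized(frame):
    """Column-level version: one comparison over the whole column."""
    labels = np.where(frame["sales"] > 1000, "high", "low")
    return pd.Series(labels, index=frame.index, name="tier")


start = time.perf_counter()
loop_result = label_with_loop(df_small)
loop_seconds = time.perf_counter() - start

start = time.perf_counter()
vector_result = label_vectorized(df_small)
vector_seconds = time.perf_counter() - start

print(f"Loop:       {loop_seconds:.4f} s")
print(f"Vectorized: {vector_seconds:.4f} s")
```

Both functions produce the same labels; only the amount of Python-level work per row differs.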

The One-Line Fix (And the Moment It Clicked)

After seeing 129 seconds, I knew there had to be a better way.

So instead of looping through rows, I tried expressing the rule at the column level:

“If sales > 1000, label high. Otherwise, label low.”

That’s it. That’s the rule.

Here’s the vectorized version:

import numpy as np
import time

start = time.time()
df_big["tier"] = np.where(df_big["sales"] > 1000, "high", "low")
end = time.time()

print("Vectorized time:", end - start)

And the result?

Vectorized time: 0.08

Let that sink in.

Loop version: 129 seconds

Vectorized version: 0.08 seconds

That’s over 1,600× faster.

What Just Happened?

The key difference is this:

The loop processed the DataFrame row by row. The vectorized version processed the entire sales column in one optimized operation.

When you write:

df_big["sales"] > 1000

Pandas doesn’t check values one at a time in Python. It performs the comparison at a lower level (via NumPy), in compiled code, across the entire array.

Then np.where() applies the labels in one efficient pass.
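Here is a minimal sketch of those two steps pulled apart, using the small five-row dataset from earlier. The variable names `mask` and `labels` are mine, purely for illustration.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"],
    "sales": [500, 1200, 800, 2000, 300]
})

# Step 1: one comparison over the whole column yields a Boolean array
mask = df["sales"].to_numpy() > 1000
print(mask)

# Step 2: np.where picks "high" where the mask is True, "low" elsewhere
labels = np.where(mask, "high", "low")
print(labels)

# Assign the whole array of labels as a new column in one step
df["tier"] = labels
print(df)
```

Neither step contains a Python-level loop; each one operates on the full array.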

Here’s the subtle but powerful change:

Instead of asking:

“What should I do with this row?”

You ask:

“What rule applies to this column?”

That’s the line between beginner Pandas and professional Pandas.

At this point, I thought I’d “leveled up.” Then I discovered I could make it even simpler.

And Then I Discovered Boolean Indexing

After timing the vectorized version, I felt pretty proud. But then I had another realization.

I don’t even need np.where() for this.

Let’s go back to our small dataset:

df = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"],
    "sales": [500, 1200, 800, 2000, 300]
})

Our goal is still the same:

Label each row high if sales > 1000, otherwise low.

With np.where() we wrote:

df["tier"] = np.where(df["sales"] > 1000, "high", "low")

It’s cleaner and faster. Much better than a loop.

But here’s the part that really changed how I think about Pandas:

This line right here…

df["sales"] > 1000

…already returns something incredibly useful.

Let’s look at it:

Output:

0    False
1     True
2    False
3     True
4    False
Name: sales, dtype: bool

That’s a Boolean Series.

Pandas just evaluated the condition for the entire column at once.

No loop. No if. No row-by-row logic.

It produced a full mask of True/False values in one shot.

Boolean Indexing Feels Like a Superpower

Now here’s where it gets interesting.

You can use that Boolean mask directly to filter rows:

df[df["sales"] > 1000]

And Pandas instantly gives you only the rows where sales exceed 1000 (here, products B and D).
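As a quick sketch with the five-row dataset, the mask can also be stored in a variable (named `is_high` here purely for illustration) and reused to filter and count:

```python
import pandas as pd

df = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"],
    "sales": [500, 1200, 800, 2000, 300]
})

# Boolean Series with one True/False value per row
is_high = df["sales"] > 1000

# Keep only the rows where the mask is True
high_rows = df[is_high]
print(high_rows)

# True counts as 1, so sum() counts the matching rows
n_high = int(is_high.sum())
print("Rows above 1000:", n_high)
```

The filtered frame keeps the original index labels, so rows B and D still carry indexes 1 and 3.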

We can even build the tier column using Boolean indexing directly:

df["tier"] = "low"
df.loc[df["sales"] > 1000, "tier"] = "high"

I’m basically saying:

- Assume everything is "low".

- Override only the rows where sales > 1000.

That’s it.

And suddenly, I’m not thinking:

“For each row, check the value…”

I’m thinking:

“Start with a default. Then apply a rule to a subset.”

That shift is subtle, but it changes everything.

Once I got comfortable with Boolean masks, I started wondering:

What happens when the logic isn’t as clean as “greater than 1000”? What if I need custom rules?

That’s where I discovered apply(). And at first, it felt like the best of both worlds.

Isn’t apply() Good Enough?

I’ll be honest. After I stopped writing loops, I thought I had everything figured out. Because there was this magical function that seemed to solve everything: apply().

It felt like the perfect middle ground between messy loops and scary vectorization.

So naturally, I started writing things like this:

df["tier"] = df["sales"].apply(
    lambda x: "high" if x > 1000 else "low"
)

And at first glance?

This looks great.

- No for loop

- No manual indexing

- Easy to read

It feels like a professional solution.

But here’s what I didn’t understand at the time:

apply() is still running Python code for every single row.

It just hides the loop.

When you use:

df["sales"].apply(lambda x: ...)

Pandas is still:

- Taking each value

- Passing it into a Python function

- Returning the result

- Repeating that for every row

It’s cleaner than a for loop, yes. But performance-wise? It’s much closer to a loop than to true vectorization.

That was a bit of a wake-up call for me. I realized I was replacing visible loops with invisible ones.
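If you want to check this on your own machine, here is a rough sketch that times both versions with the standard-library `timeit` module. The 100,000-row frame and the helper names `with_apply` and `with_where` are assumptions for illustration. I’d expect apply() to come out noticeably slower, but the exact ratio depends on your hardware and library versions.

```python
import timeit

import numpy as np
import pandas as pd

# Hypothetical mid-sized dataset; adjust the multiplier to taste
df_mid = pd.DataFrame({
    "sales": [500, 1200, 800, 2000, 300] * 20_000
})


def with_apply():
    # Calls the lambda once per value, in Python
    return df_mid["sales"].apply(lambda x: "high" if x > 1000 else "low")


def with_where():
    # One comparison and one selection over the whole column
    return np.where(df_mid["sales"] > 1000, "high", "low")


runs = 5
apply_seconds = timeit.timeit(with_apply, number=runs) / runs
where_seconds = timeit.timeit(with_where, number=runs) / runs

print(f"apply():    {apply_seconds:.4f} s per run")
print(f"np.where(): {where_seconds:.4f} s per run")
```

Averaging over a few runs smooths out one-off noise, which a single `time.time()` measurement can’t do.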

So When Should You Use apply()?

- If the logic can be expressed with vectorized operations → do that.

- If it can be expressed with Boolean masks → do that.

- If it absolutely requires custom Python logic → then use apply().

In other words:

Vectorize first. Reach for apply() only when you have to.

Not because apply() is bad. But because Pandas is fastest and cleanest when you think in columns, not in row-wise functions.
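One case worth sketching: rules with more than two outcomes often look like they need apply(), but can still be written at the column level. The example below assumes a hypothetical third tier, "mid", for sales above 600. That threshold is invented for illustration and is not part of the rule used so far. It relies on NumPy’s `np.select`, which takes a list of conditions and a matching list of choices.

```python
import numpy as np
import pandas as pd

df = pd.DataFrame({
    "product": ["A", "B", "C", "D", "E"],
    "sales": [500, 1200, 800, 2000, 300]
})

# Conditions are checked in order; the first one that matches wins
conditions = [
    df["sales"] > 1000,
    df["sales"] > 600,  # hypothetical "mid" threshold, for illustration only
]
choices = ["high", "mid"]

# Rows that match no condition fall back to the default label
df["tier"] = np.select(conditions, choices, default="low")
print(df)
```

Because the conditions are ordered, a value like 1200 is labeled "high" even though it also satisfies the second condition.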

Conclusion

Looking back, the biggest mistake I made wasn’t writing loops. It was assuming that if the code worked, it was good enough.

Pandas doesn’t punish you immediately for thinking in rows. But as your datasets grow, as your pipelines scale, as your code ends up in dashboards and production workflows, the difference becomes obvious.

- Row-by-row thinking doesn’t scale.

- Hidden Python loops don’t scale.

- Column-level guidelines do.

That’s the real line between beginner and professional Pandas usage.

So, in summary:

Stop asking what to do with each row. Start asking what rule applies to the entire column.

Once you make that shift, your code gets faster, cleaner, easier to review and easier to maintain. And you start spotting inefficient patterns instantly, including your own.