Why this comparison matters

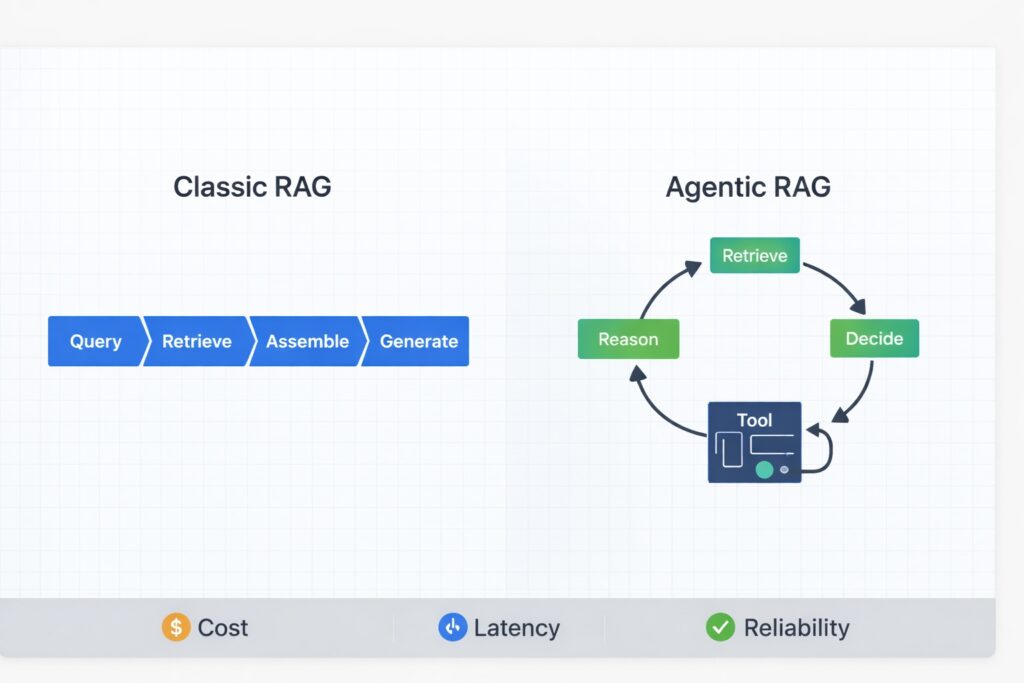

RAG started with a simple aim: ground model outputs in external evidence rather than relying solely on model weights. Most teams implemented this as a pipeline: retrieve once, then generate an answer with citations.

Over the last year, more teams have started moving from that “one-pass” pipeline toward agent-like loops that can retry retrieval and call tools when the first pass is weak. Gartner even forecasts that 33% of enterprise software applications will include agentic AI by 2028, a sign that “agentic” patterns are becoming mainstream rather than niche.

Agentic RAG changes the system structure. Retrieval becomes a control loop: retrieve, reason, decide, then retrieve again or stop. This mirrors the core pattern of “reason and act” approaches, such as ReAct, in which the system alternates between reasoning and action to gather new evidence.

However, agents don’t improve RAG without tradeoffs. Introducing loops and tool calls increases adaptability but reduces predictability. Correctness, latency, observability, and failure modes all change when you are debugging a process instead of a single retrieval step.

Classic RAG: the pipeline mental model

Classic RAG is easy to understand because it follows a linear process. A user query is received, the system retrieves a fixed set of passages, and the model generates an answer based on that single retrieval. If issues arise, debugging usually focuses on retrieval relevance or context assembly.

At a high level, the pipeline looks like this:

- Query: take the user question (and any system instructions) as input

- Retrieve: fetch the top-k relevant chunks (usually via vector search, sometimes hybrid)

- Assemble context: select and arrange the best passages into a prompt context (often with reranking)

- Generate: produce an answer, ideally with citations back to the retrieved passages
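The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Chunk` type, the toy `CORPUS`, and the keyword-overlap `score` function are all assumptions standing in for a real vector index, and `generate` only builds the prompt that would be sent to a model.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    doc_id: str
    text: str


# Toy corpus standing in for a real document index (illustrative data only).
CORPUS = [
    Chunk("config-ref", "MAX_UPLOAD_SIZE sets the maximum upload size allowed per request."),
    Chunk("api-ref", "The export endpoint returns results as JSON."),
    Chunk("plans", "Plan limits are listed on the billing page."),
]


def score(query: str, chunk: Chunk) -> int:
    """Count shared terms (a stand-in for vector similarity)."""
    terms = set(query.lower().replace("?", "").split())
    words = set(chunk.text.lower().replace(".", "").split())
    return len(terms & words)


def retrieve(query: str, k: int = 2) -> list[Chunk]:
    """Retrieve: return the top-k chunks by score, dropping zero-score chunks."""
    ranked = sorted(CORPUS, key=lambda c: score(query, c), reverse=True)
    return [c for c in ranked[:k] if score(query, c) > 0]


def assemble_context(chunks: list[Chunk]) -> str:
    """Assemble context: label each passage so the answer can cite it."""
    return "\n".join(f"[{c.doc_id}] {c.text}" for c in chunks)


def generate(question: str, context: str) -> str:
    """Generate: placeholder for the model call; here it only builds the prompt."""
    return (
        "Answer using only the context below and cite sources.\n"
        f"{context}\n\nQuestion: {question}"
    )


def classic_rag(question: str) -> str:
    chunks = retrieve(question)  # one retrieval pass, no retries
    context = assemble_context(chunks)
    return generate(question, context)
```

Note that nothing in `classic_rag` inspects the quality of what `retrieve` returned; whatever comes back is what the model sees.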

What classic RAG is good at

Classic RAG is most effective when predictable cost and latency are priorities. For simple “doc lookup” questions such as “What does this configuration flag do?”, “Where is the API endpoint for X?”, or “What are the limits of plan Y?”, a single retrieval pass is usually sufficient. Answers are delivered quickly, and debugging is direct: if outputs are incorrect, first check retrieval relevance and chunking, then review prompt behavior.

Example (classic RAG in practice):

A user asks: “What does the MAX_UPLOAD_SIZE config flag do?”

The retriever pulls the configuration reference page where the flag is defined.

The model answers in a single pass, “It sets the maximum upload size allowed per request”, and cites the exact section.

There are no loops or tool calls, so cost and latency remain stable.

Where classic RAG hits the wall

Classic RAG is a “one-shot” approach. If retrieval fails, the model lacks a built-in recovery mechanism.

That shows up in a few common ways:

- Multi-hop questions: the answer needs evidence spread across multiple sources

- Underspecified queries: the user’s wording is not the best retrieval query

- Brittle chunking: relevant context is split across chunks or obscured by jargon

- Ambiguity: the system may need to ask clarifying questions, reformulate, or explore further before providing an accurate answer

Why this matters:

When classic RAG fails, it often does so quietly. The system still provides an answer, but it may be a confident synthesis based on weak evidence.

Agentic RAG: from retrieval to a control loop

Agentic RAG keeps the retriever and generator components but changes the control structure. Instead of relying on a single retrieval pass, retrieval is wrapped in a loop, allowing the system to review its evidence, identify gaps, and attempt retrieval again if needed. This loop gives the system an “agentic” quality: it not only generates answers from evidence but also actively chooses how to gather stronger evidence until a stop condition is met.

A helpful analogy is incident debugging: classic RAG is like running one log query and writing the conclusion from whatever comes back, while agentic RAG is a debug loop. You query, inspect the evidence, notice what’s missing, refine the query or check a second system, and repeat until you’re confident or you hit a time/cost budget and escalate.

A minimal loop is:

- Retrieve: pull candidate evidence (docs, search results, or tool outputs)

- Reason: synthesize what you have and identify what’s missing or uncertain

- Decide: stop and answer, refine the query, switch sources/tools, or escalate

For a research reference, ReAct provides a useful mental model: reasoning steps and actions are interleaved, enabling the system to gather more substantial evidence before finalizing an answer.

What agents add

Planning (decomposition)

The agent can decompose a query into smaller evidence-based goals.

Example: “Why is SSO setup failing for a subset of users?”

- What error codes are we seeing?

- Which IdP configuration is used?

- Is this a docs question, a log question, or a configuration question?

Classic RAG treats the entire question as a single query. An agent can explicitly decide what information is needed first.

Tool use (beyond retrieval)

In agentic RAG, retrieval is one of several available tools. The agent may use:

- A second index

- A database question

- A search API

- A config checker

- A lightweight verifier

This is important because relevant answers often exist outside the documentation index. The loop allows the system to retrieve evidence from its actual source.
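One common way to expose several tools to a loop is a name-to-function registry, so the agent’s decision step only has to emit a tool name and an argument. The sketch below assumes three hypothetical tools (`search_docs`, `query_database`, `check_config`) that return placeholder strings; real tools would call an index, a database, or a config service.

```python
from typing import Callable


# Hypothetical tools: each takes a string argument and returns text evidence.
def search_docs(arg: str) -> str:
    return f"docs result for {arg!r}"


def query_database(arg: str) -> str:
    return f"db rows for {arg!r}"


def check_config(arg: str) -> str:
    return f"config check for {arg!r}"


TOOLS: dict[str, Callable[[str], str]] = {
    "search_docs": search_docs,
    "query_database": query_database,
    "check_config": check_config,
}


def call_tool(name: str, arg: str) -> tuple[str, str]:
    """Dispatch by name; unknown tools yield an error record instead of raising."""
    tool = TOOLS.get(name)
    if tool is None:
        return ("error", f"unknown tool: {name}")
    return (name, tool(arg))
```

Returning an error record for an unknown tool, rather than raising, is a design choice made here so that a bad decision by the agent becomes evidence the loop can react to instead of a crash.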

Iterative refinement (deliberate retries)

This represents the most significant advancement. Instead of attempting to generate a better answer from weak retrieval, the agent can deliberately requery.

Self-RAG is a good example of this research direction: it is designed to retrieve on demand and to critique both the retrieved passages and its own generations, rather than always using a fixed top-k retrieval step.

This is the core capability shift: the system can adapt its retrieval strategy based on information learned during execution.

Tradeoffs: Benefits and Drawbacks of Loops

Agentic RAG is valuable because it can repair retrieval rather than relying on guesses. When the initial retrieval is weak, the system can rewrite the query, switch sources, or gather more evidence before answering. This approach is better suited to ambiguous questions, multi-hop reasoning, and situations where relevant information is dispersed.

However, introducing a loop changes production expectations. What do we mean by a “loop”? In this article, a loop is one full iteration of the agent’s control cycle: Retrieve → Reason → Decide, repeated until a stop condition is met (high confidence + citations, max steps, budget cap, or escalation). That definition matters because once retrieval is iterative, cost and latency become distributions: some runs stop quickly, while others take extra iterations, retries, or tool calls. In practice, you stop optimizing for the “typical” run and start managing tail behavior (p95 latency, cost spikes, and worst-case tool cascades).
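To make the “distribution” point concrete, here is a small worked example with made-up numbers: 20 hypothetical requests, an assumed 1.5 seconds per loop iteration, and a nearest-rank percentile helper. None of these figures come from a real system; they only show how a few long runs pull the tail away from the median.

```python
import math

# Assumed, illustrative numbers: seconds per loop iteration and the number of
# iterations taken by 20 hypothetical requests.
SECONDS_PER_ITERATION = 1.5
iterations = [1] * 14 + [2] * 3 + [3] * 2 + [5]


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


latencies = [n * SECONDS_PER_ITERATION for n in iterations]
p50 = percentile(latencies, 50)   # 1.5 seconds
p95 = percentile(latencies, 95)   # 4.5 seconds
mean = sum(latencies) / len(latencies)
```

In this toy data most requests finish in one iteration, so the median is 1.5 seconds, but the p95 is 4.5 seconds, three times the median. A capacity plan built on the median alone would miss that tail.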

Here’s a tiny example of what that Retrieve → Reason → Decide loop can look like in code:

```python
# Retrieve → Reason → Decide loop (agentic RAG)
# retriever, tools, MAX_STEPS, and the helper functions are assumed to be
# defined elsewhere in the application.
def answer_with_loop(user_question):
    evidence = []
    for step in range(MAX_STEPS):
        # Retrieve: rebuild the query from the question plus evidence so far
        docs = retriever.search(query=build_query(user_question, evidence))
        # Reason: check what is still missing or uncertain
        gaps = find_gaps(user_question, docs, evidence)
        if gaps.satisfied and has_citations(docs):
            return generate_answer(user_question, docs, evidence)
        # Decide: refine the query, call a tool, or stop
        action = decide_next_action(gaps, step)
        if action.type == "refine_query":
            evidence.append(("hint", action.hint))
        elif action.type == "call_tool":
            evidence.append(("tool", tools[action.name](action.args)))
        else:
            break  # safe stop if looping isn't helping
    return safe_stop_response(user_question, evidence)
```

Where loops help

Agentic RAG is most valuable when “retrieve once → answer” isn’t enough. In practice, loops help in three typical cases:

- The question is underspecified and needs query refinement

- The evidence is spread across multiple documents or sources

- The first retrieval returns partial or conflicting information, and the system needs to verify before committing

Where loops hurt

The tradeoff is operational complexity. With loops, you introduce more moving parts (planner, retriever, optional tools, stop conditions), which increases variance and makes runs harder to reproduce. You also expand the surface area for failures: a run might look “fine” at the end, but still burn tokens through repeated retrieval, retries, or tool cascades.

This is also why “enterprise RAG” tends to get tricky in practice: security constraints, messy internal data, and integration overhead make naive retrieval brittle.

Failure modes you’ll see early in production

Once you move from a pipeline to a control loop, a few problems show up repeatedly:

- Retrieval thrash: the agent keeps retrieving without improving evidence quality.

- Tool-call cascades: one tool call triggers another, compounding latency and cost.

- Context bloat: the prompt grows until quality drops or the model starts missing key details.

- Stop-condition bugs: the loop doesn’t stop when it should (or stops too early).

- Confident-wrong answers: the system converges on noisy evidence and answers with high confidence.

A key perspective is that classic RAG primarily fails due to retrieval quality or prompting. Agentic RAG can encounter these issues as well, but also introduces control-related failures, such as poor decision-making, inadequate stop rules, and uncontrolled loops. As autonomy increases, observability becomes even more critical.
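Several of these control failures can be caught with a small guard object that the loop consults after every iteration. The sketch below is one possible shape, not a standard API: `LoopGuard`, its default limits, and the stop-reason strings are all assumptions. It treats “no new document IDs since the last iteration” as a simple proxy for retrieval thrash.

```python
from dataclasses import dataclass, field


@dataclass
class LoopGuard:
    """Tracks budget and evidence novelty across loop iterations."""
    max_steps: int = 4
    max_tokens: int = 8000  # assumed budget; tune per workload
    steps: int = 0
    tokens_used: int = 0
    seen_doc_ids: set[str] = field(default_factory=set)

    def record(self, doc_ids: list[str], tokens: int) -> str:
        """Record one iteration and return 'continue' or a stop reason."""
        self.steps += 1
        self.tokens_used += tokens
        new_docs = set(doc_ids) - self.seen_doc_ids
        self.seen_doc_ids |= new_docs
        if self.tokens_used > self.max_tokens:
            return "stop:budget"
        if not new_docs:
            return "stop:thrash"  # retrieval returned nothing new
        if self.steps >= self.max_steps:
            return "stop:max_steps"
        return "continue"
```

Logging the returned stop reason for every run also gives a cheap observability signal: a rising share of `stop:thrash` or `stop:budget` outcomes suggests the loop is spending effort without improving evidence.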

Quick comparison: Classic vs Agentic RAG

| Dimension | Classic RAG | Agentic RAG |
|---|---|---|
| Cost predictability | High | Lower (depends on loop depth) |
| Latency predictability | High | Lower (p95 grows with iterations) |
| Multi-hop queries | Often weak | Stronger |
| Debugging surface | Smaller | Larger |
| Failure modes | Mostly retrieval + prompt | Adds loop control failures |

Decision Framework: When to stay classic vs go agentic

A practical approach to choosing between classic and agentic RAG is to evaluate your use case along two axes: query complexity (the extent of multi-step reasoning or evidence gathering required) and error tolerance (the risk associated with incorrect answers for users or the business). Classic RAG is a strong default due to its predictability. Agentic RAG is preferable when tasks frequently require retries, decomposition, or cross-source verification.

Decision matrix: complexity × error tolerance

Here, high error tolerance means a wrong answer is acceptable (low stakes), while low error tolerance means a wrong answer is costly (high stakes).

| | High error tolerance | Low error tolerance |
|---|---|---|
| Low complexity | Classic RAG for fast answers and predictable latency/cost. | Classic RAG with citations, careful retrieval, escalation |
| High complexity | Classic RAG + second pass on failure signals (only loop when needed). | Agentic RAG with strict stop conditions, budgets, and debugging |
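If requests can be labelled with the two axes up front, the matrix translates directly into a lookup table. This is only a sketch: the `Level` enum and the route names are invented labels that paraphrase the four cells, and how a request gets classified as low or high is left out entirely.

```python
from enum import Enum


class Level(Enum):
    LOW = "low"
    HIGH = "high"


# Keys are (query complexity, error tolerance); values paraphrase the matrix cells.
ROUTES = {
    (Level.LOW, Level.HIGH): "classic",
    (Level.LOW, Level.LOW): "classic_with_citations_and_escalation",
    (Level.HIGH, Level.HIGH): "classic_with_second_pass",
    (Level.HIGH, Level.LOW): "agentic_with_budgets",
}


def route(complexity: Level, error_tolerance: Level) -> str:
    """Return the route name for one cell of the decision matrix."""
    return ROUTES[(complexity, error_tolerance)]
```

Only one of the four cells routes to a full agent loop, which matches the article’s position that classic RAG is the default.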

Practical gating rules (what to route where)

Classic RAG is usually the better fit when the task is mostly lookup or extraction:

- FAQs and documentation Q&A

- Single-document answers (policies, specs, limits)

- Fast support where latency predictability matters more than perfect coverage

Agentic RAG is usually worth the added complexity when the task needs multi-step evidence gathering:

- Decomposing a query into sub-questions

- Iterative retrieval (rewrite, broaden/narrow, switch sources)

- Verification and cross-checking across sources/tools

- Workflows where “try again” is required to reach a confident, cited answer

A simple rule: don’t pay for loops unless your task routinely fails in a single pass.

Rollout guidance: add a second pass before going “full agent.”

You don’t need to choose between a permanent pipeline and a full agentic implementation. A practical compromise is to use classic RAG by default and trigger a second-pass loop only when failure signals are detected, such as missing citations, low retrieval confidence, contradictory evidence, or user follow-ups indicating the initial answer was insufficient. This approach keeps most queries efficient while providing a recovery path for more complex cases.
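A second-pass gate can be as simple as checking the first-pass result for the failure signals listed above. In this sketch the `FirstPassResult` fields and the `MIN_CONFIDENCE` threshold are assumptions; in particular, how retrieval confidence is scored and how contradictions are detected depends on your own stack.

```python
from dataclasses import dataclass


@dataclass
class FirstPassResult:
    answer: str
    citations: list[str]
    retrieval_confidence: float  # assumed to be a score in [0, 1]
    has_contradiction: bool = False
    user_followed_up: bool = False


MIN_CONFIDENCE = 0.6  # assumed threshold; calibrate on your own traffic


def failure_signals(result: FirstPassResult) -> list[str]:
    """List the failure signals present in a first-pass result."""
    signals = []
    if not result.citations:
        signals.append("missing_citations")
    if result.retrieval_confidence < MIN_CONFIDENCE:
        signals.append("low_retrieval_confidence")
    if result.has_contradiction:
        signals.append("contradictory_evidence")
    if result.user_followed_up:
        signals.append("user_follow_up")
    return signals


def needs_second_pass(result: FirstPassResult) -> bool:
    """Trigger the second pass if any failure signal fired."""
    return bool(failure_signals(result))
```

Returning the list of signals, not just a boolean, makes it possible to track how often each one fires and therefore how often the second pass is actually needed.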

Core Takeaway

Agentic RAG is not merely an improved version of RAG; it is RAG with an added control loop. This loop can improve correctness for complex, ambiguous, or multi-hop queries by allowing the system to refine retrieval and verify evidence before answering. The tradeoff is operational: increased complexity, higher tail latency and cost spikes, and more failure modes to debug. Clear budgets, stop rules, and traceability are essential if you adopt this approach.

Conclusion

If your product primarily involves document lookup, extraction, or quick support, classic RAG is usually the best default. It is simpler, cheaper, and easier to manage. Consider agentic RAG only when there is clear evidence that single-pass retrieval fails for a significant portion of queries, or when the cost of incorrect answers justifies the additional verification and iterative evidence gathering.

A practical compromise is to begin with classic RAG and introduce a controlled second pass only when failure signals arise, such as missing citations, low retrieval confidence, contradictory evidence, or repeated user follow-ups. If second-pass usage becomes frequent, implementing an agent loop with defined budgets and stop conditions may be worthwhile.

For further exploration of improved retrieval, evaluation, and tool-calling patterns, the following references are recommended.