(AWS) are the world’s two largest cloud computing platforms, offering database, networking, and compute resources at global scale. Together, they hold about 50% of the global enterprise cloud infrastructure services market (AWS at 30% and Azure at 20%). Azure ML and AWS SageMaker are machine learning services that enable data scientists and ML engineers to develop and manage the entire ML lifecycle, from data preprocessing and feature engineering to model training, deployment, and monitoring. You can create and manage these ML services in AWS and Azure through console interfaces, the cloud CLI, or software development kits (SDKs) in your preferred programming language, which is the approach discussed in this article.

Azure ML & AWS SageMaker Training Jobs

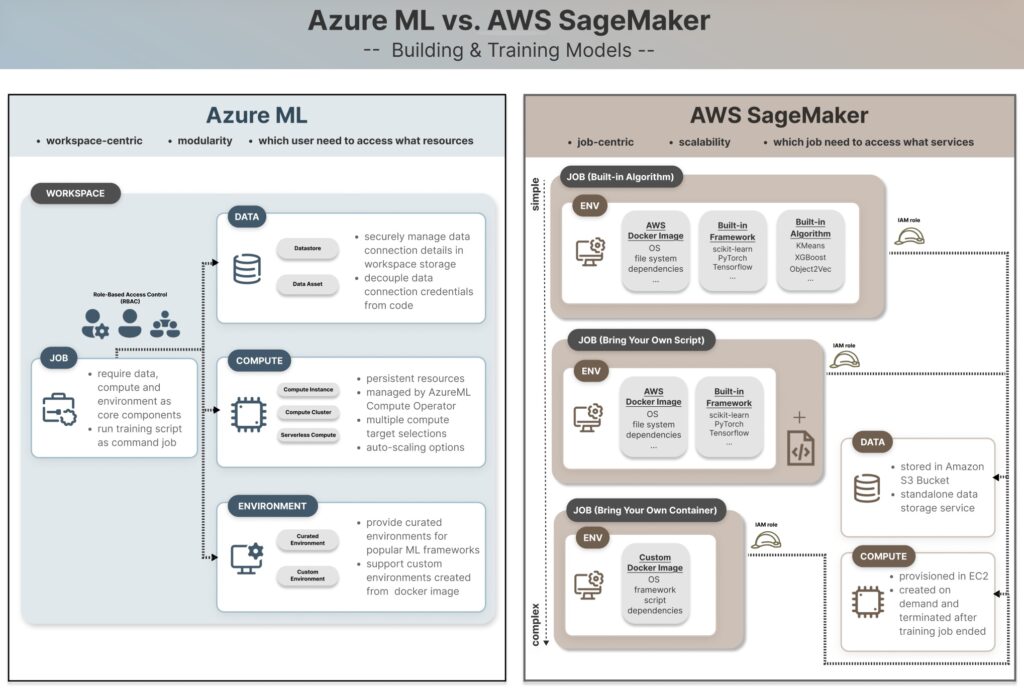

While they offer similar high-level functionality, Azure ML and AWS SageMaker have fundamental differences that determine which platform best suits you, your team, or your company. First, consider the ecosystem of your existing data storage, compute resources, and monitoring services. For instance, if your company’s data primarily sits in an AWS S3 bucket, then SageMaker may be the more natural choice for developing your ML services, since it reduces the overhead of connecting to and transferring data across different cloud providers. However, this doesn’t mean that other factors are not worth considering, and we’ll dive into the details of how Azure ML differs from AWS SageMaker in a common ML scenario: training and building models at scale using jobs.

Although Jupyter notebooks are useful for experimentation and exploration in an interactive development workflow on a single machine, they are not designed for productionization or distribution. Training jobs (and other ML jobs) become essential at this stage of the ML workflow: they deploy the task to multiple cloud instances so that it can run for longer and process more data. This requires setting up the data, code, compute instances, and runtime environments to ensure consistent outputs when the task is not executed on one local machine. Think of it as the difference between developing a dinner recipe (Jupyter notebook) and hiring a catering team to cook it for 500 customers (ML job). Everyone in the catering team needs access to the same ingredients, recipe, and tools, and must follow the same cooking procedure.

Now that we understand the importance of training jobs, let’s look at how they are defined in Azure ML vs. SageMaker in a nutshell.

Define Azure ML training job

    from azure.ai.ml import command

    job = command(
        code=...,
        command=...,
        environment=...,
        compute=...,
    )

    ml_client.jobs.create_or_update(job)

Create SageMaker training job estimator

    from sagemaker.estimator import Estimator

    estimator = Estimator(
        image_uri=...,
        role=...,
        instance_type=...,
    )

    estimator.fit(training_data_s3_location)

We’ll break down the comparison into the following dimensions:

- Project and Permission Management

- Data Storage

- Compute

- Environment

In Part 1, we’ll start by comparing the high-level project setup and permission management, then talk about storing and accessing the data required for model training. Part 2 will discuss the various compute options on both cloud platforms, and how to create and manage runtime environments for training jobs.

Project and Permission Management

Let’s start by understanding a typical ML workflow in a medium-to-large team of data scientists, data engineers, and ML engineers. Each member may specialize in a specific role and responsibility, and may be assigned to one or more projects. For example, a data engineer is tasked with extracting data from the source and storing it in a centralized location for data scientists to process. They don’t need to spin up compute instances for running training jobs. In this case, they may have read and write access to the data storage location but don’t necessarily need permission to create GPU instances for heavy workloads. Depending on data sensitivity and their role in an ML project, team members need different levels of access to the data and the underlying cloud infrastructure. We’re going to explore how the two cloud platforms structure their resources and services to balance the requirements of team collaboration and separation of responsibilities.
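To make the data engineer example concrete, here is a minimal, platform-neutral sketch of least-privilege access. The role names and permission strings are hypothetical and do not correspond to any built-in Azure or AWS role; they only illustrate that each team member is granted what their responsibility requires and nothing more.

```python
# Hypothetical roles and permission names, used only to illustrate least-privilege access.
ROLE_PERMISSIONS = {
    "data_engineer": {"storage:read", "storage:write"},
    "data_scientist": {"storage:read", "compute:use", "job:submit"},
    "ml_engineer": {"storage:read", "compute:use", "compute:create", "job:submit"},
}


def can(role: str, permission: str) -> bool:
    """Return True if the given role has been granted the permission."""
    return permission in ROLE_PERMISSIONS.get(role, set())


# The data engineer can write to storage but cannot create compute instances.
print(can("data_engineer", "storage:write"))
print(can("data_engineer", "compute:create"))
```

Both platforms express this idea differently, as the following sections show: Azure attaches permissions to users through roles, while AWS attaches them to the role that a job assumes.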

Azure ML

Project management in Azure ML is Workspace-centric: you start by creating a Workspace (under your Azure subscription ID and resource group) that stores the related resources and assets and is shared across the project team for collaboration.

Permissions to access and manage resources are granted at the user level based on each user’s role, i.e. role-based access control (RBAC). Generic roles in Azure include Owner, Contributor, and Reader. ML-specialized roles include AzureML Data Scientist and AzureML Compute Operator; the latter is responsible for creating and managing compute instances, as they are often the largest cost element in an ML project. The goal of setting up an Azure ML Workspace is to create a contained environment for storing data, compute, models, and other resources, so that only users within the Workspace are given the relevant access to read or edit the data assets and to use existing or create new compute instances, based on their responsibilities.

In the code snippet below, we connect to the Azure ML workspace through MLClient by passing the subscription ID, the resource group, the workspace name, and the default credential. Azure follows the hierarchical structure Subscription > Resource Group > Workspace.

Upon workspace creation, associated services such as an Azure Storage Account (which stores metadata and artifacts and can store training data) and an Azure Key Vault (which stores secrets such as usernames, passwords, and credentials) are also instantiated automatically.

    from azure.ai.ml import MLClient
    from azure.identity import DefaultAzureCredential

    subscription_id = '<YOUR_SUBSCRIPTION_ID>'
    resource_group = '<YOUR_RESOURCE_GROUP>'
    workspace = '<YOUR_AZUREML_WORKSPACE>'

    # Connect to the workspace
    credential = DefaultAzureCredential()
    ml_client = MLClient(credential, subscription_id, resource_group, workspace)

When developers run the code during an interactive development session, the workspace connection is authenticated with the developer’s personal credentials. They can then create a training job using the command ml_client.jobs.create_or_update(job), as demonstrated in the training job definition further below. To detach personal account credentials from the production environment, it is recommended to use a service principal account to authenticate automated pipelines or scheduled jobs. More information can be found in the article “Authenticate in your workspace using a service principal”.
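As a rough sketch of the service principal option, the snippet below reads the principal’s tenant ID, client ID, and client secret from environment variables and passes them to ClientSecretCredential from azure.identity. The helper names (ServicePrincipalSettings, load_service_principal_settings, build_ml_client) are hypothetical, and the environment variable names are an assumption (they follow the names that azure.identity conventionally reads); in practice the secret would normally come from a secret store rather than plain configuration.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class ServicePrincipalSettings:
    tenant_id: str
    client_id: str
    client_secret: str


def load_service_principal_settings(env: Mapping[str, str] = os.environ) -> ServicePrincipalSettings:
    """Read service principal settings from the environment, failing early if any is missing."""
    names = ("AZURE_TENANT_ID", "AZURE_CLIENT_ID", "AZURE_CLIENT_SECRET")
    missing = [name for name in names if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return ServicePrincipalSettings(*(env[name] for name in names))


def build_ml_client(settings, subscription_id, resource_group, workspace):
    """Create an MLClient authenticated as the service principal instead of a personal account."""
    # Imported lazily so the settings helper above works without the Azure SDK installed.
    from azure.ai.ml import MLClient
    from azure.identity import ClientSecretCredential

    credential = ClientSecretCredential(
        tenant_id=settings.tenant_id,
        client_id=settings.client_id,
        client_secret=settings.client_secret,
    )
    return MLClient(credential, subscription_id, resource_group, workspace)
```

In an automated pipeline, one might call build_ml_client(load_service_principal_settings(), subscription_id, resource_group, workspace) and then submit jobs exactly as in the interactive case. The service principal still needs an appropriate role assignment on the Workspace.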

    # Define Azure ML training job
    from azure.ai.ml import command

    job = command(
        code=...,
        command=...,
        environment=...,
        compute=...,
    )

    ml_client.jobs.create_or_update(job)

AWS SageMaker

Roles and permissions in SageMaker are designed around a completely different principle, primarily using “Roles” in the AWS Identity and Access Management (IAM) service. Although IAM allows creating user-level (or account-level) access similar to Azure, AWS recommends granting permissions at the job level throughout the ML lifecycle. In this way, your personal AWS permissions are irrelevant at runtime: SageMaker assumes a role (i.e. the SageMaker execution role) to access the relevant AWS services, such as S3 buckets, SageMaker training pipelines, and the compute instances that execute the job.

For example, here is a quick peek at setting up an Estimator with the SageMaker execution role for running the training job.

    import sagemaker
    from sagemaker.estimator import Estimator

    # Get the SageMaker execution role
    role = sagemaker.get_execution_role()

    # Define the estimator
    estimator = Estimator(
        image_uri=image_uri,  # URI of the training container image, defined elsewhere
        role=role,  # assume the SageMaker execution role during runtime
        instance_type="ml.m5.xlarge",
        instance_count=1,
    )

    # Start training
    estimator.fit("s3://my-training-bucket/train/")

This means we can set up permissions with enough granularity that a role can run training jobs only in the development environment, without touching the production environment. For example, if the role is given access to an S3 bucket that holds test data and is blocked from the one that holds production data, then a training job that assumes this role has no chance of overwriting the production data by accident.
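The test-versus-production example could be expressed as an IAM policy document attached to the execution role, sketched below as a Python dictionary. The production bucket name is hypothetical, and the is_allowed helper is a deliberately simplified stand-in for IAM’s evaluation logic (an explicit Deny wins, otherwise an Allow must match); it is not how AWS evaluates policies in full.

```python
import json
from fnmatch import fnmatchcase

# Bucket names are placeholders; the production bucket name is hypothetical.
TEST_BUCKET = "my-training-bucket"
PROD_BUCKET = "my-production-bucket"

dev_training_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "AllowTestData",
            "Effect": "Allow",
            "Action": ["s3:GetObject", "s3:PutObject"],
            "Resource": f"arn:aws:s3:::{TEST_BUCKET}/*",
        },
        {
            "Sid": "DenyProductionData",
            "Effect": "Deny",
            "Action": "s3:*",
            "Resource": [f"arn:aws:s3:::{PROD_BUCKET}", f"arn:aws:s3:::{PROD_BUCKET}/*"],
        },
    ],
}


def _as_list(value):
    return value if isinstance(value, list) else [value]


def is_allowed(policy: dict, action: str, resource: str) -> bool:
    """Simplified check: an explicit Deny wins, otherwise some Allow must match."""
    allowed = False
    for statement in policy["Statement"]:
        action_match = any(fnmatchcase(action, p) for p in _as_list(statement["Action"]))
        resource_match = any(fnmatchcase(resource, p) for p in _as_list(statement["Resource"]))
        if action_match and resource_match:
            if statement["Effect"] == "Deny":
                return False
            allowed = True
    return allowed


# The JSON form is what would be attached to the execution role.
print(json.dumps(dev_training_policy, indent=2))
```

With this policy, a job that assumes the role can read and write objects in the test bucket, while any S3 action against the production bucket is rejected regardless of who launched the job.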

Permission management in AWS is a sophisticated domain in its own right, and I won’t pretend I can fully explain this topic. For more best practices, I recommend reading the article “Permissions management” in the official AWS documentation.

What does this mean in practice?

- Azure ML: Azure’s Role-Based Access Control (RBAC) suits companies or teams that manage which user needs to access what resources. It is more intuitive to understand and useful for centralized user access control.

- AWS SageMaker AI: AWS suits systems that care about which job needs to access what services. It decouples individual user permissions from job execution, which supports better automation and MLOps practices. AWS is a good fit for large data science teams with granular job and pipeline definitions and isolated environments.


Data Storage

You may have the question: can I store the data in the working directory? At least that’s been my question for a long time, and I believe the answer is still yes if you are experimenting or prototyping with a simple script or notebook in an interactive development environment. But the data storage location becomes important in the context of creating ML jobs.

Since code runs in a cloud-managed environment or a Docker container that is separate from your local directory, any locally stored data cannot be accessed when executing pipelines and jobs in SageMaker or Azure ML. This requires centralized, managed data storage services. In Azure, this is handled by a storage account within the Workspace that supports datastores and data assets.

Datastores contain connection information, while data assets are versioned snapshots of data used for training or inference. AWS, on the other hand, relies heavily on S3 buckets as centralized storage locations that enable secure, durable, cross-region access across different accounts, and users can access data through its unique URI path.
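Both platforms therefore address data by URI rather than by local path. As a small illustration, the sketch below splits the two URI styles used in this article into a storage container (datastore or bucket) and a relative path. The function name describe_data_uri is hypothetical, and it only handles the short azureml://datastores/<name>/paths/<path> form and plain s3:// URIs.

```python
import re
from urllib.parse import urlparse

# Short-form Azure ML datastore URI: azureml://datastores/<datastore>/paths/<path>
_DATASTORE_URI = re.compile(r"^azureml://datastores/(?P<datastore>[^/]+)/paths/(?P<path>.+)$")


def describe_data_uri(uri: str) -> dict:
    """Split an Azure ML datastore URI or an S3 URI into its container and relative path."""
    match = _DATASTORE_URI.match(uri)
    if match:
        return {"platform": "azureml", "container": match["datastore"], "path": match["path"]}
    parsed = urlparse(uri)
    if parsed.scheme == "s3" and parsed.netloc:
        return {"platform": "s3", "container": parsed.netloc, "path": parsed.path.lstrip("/")}
    raise ValueError(f"Unsupported data URI: {uri}")


print(describe_data_uri("azureml://datastores/workspaceblobstore/paths/demo-datasets/train/data.csv"))
print(describe_data_uri("s3://demo-bucket/train/data.csv"))
```

The structural difference is visible in the result: the Azure URI names a datastore registered in the Workspace, which in turn holds the connection details, whereas the S3 URI names the bucket directly.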

Azure ML

Azure ML treats data as attached resources and assets in the Workspace. One storage account and four built-in datastores are created automatically when each Workspace is instantiated, in order to store files (in Azure File Share) and datasets (in Azure Blob Storage).

Since datastores securely keep the data connection information and automatically handle the credential/identity behind the scenes, they decouple data location and access permissions from the code, so that the code can remain unchanged even when the underlying data connection changes. Datastores can be accessed through their unique URI. Here’s an example of creating an Input object of type uri_file by passing the datastore path.

    from azure.ai.ml import Input

    # Create training data using a datastore
    training_data = Input(
        type="uri_file",
        path="azureml://datastores/workspaceblobstore/paths/demo-datasets/train/data.csv",
    )

Then this data can be used as the training data for an AutoML classification job.

    from azure.ai.ml import automl

    classification_job = automl.classification(
        compute='aml-cluster',
        training_data=training_data,
        target_column_name='Survived',
        primary_metric='accuracy',
    )

A data asset is another option for accessing data in an ML job, especially when it’s useful to keep track of multiple data versions, so that data scientists can identify the exact data snapshot used for model building or experimentation. Here is example code for creating an Input object of type AssetTypes.URI_FILE by passing the data asset path “azureml:my_train_data:1” (which includes the data asset name and version number) and using the mode InputOutputModes.RO_MOUNT for read-only access. You can find more information in the documentation “Access data in a job”.
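To show how the name and version are encoded in such a reference, here is a small sketch that splits an asset path like “azureml:my_train_data:1” into its parts. The helper parse_data_asset_ref is hypothetical and not part of the Azure SDK; it only handles the name:version form used in this article.

```python
from typing import NamedTuple


class DataAssetRef(NamedTuple):
    name: str
    version: str


def parse_data_asset_ref(ref: str) -> DataAssetRef:
    """Split 'azureml:<name>:<version>' into the asset name and version."""
    prefix, sep, rest = ref.partition(":")
    if prefix != "azureml" or not sep:
        raise ValueError(f"Not a data asset reference: {ref}")
    name, sep, version = rest.rpartition(":")
    if not sep or not name or not version:
        raise ValueError(f"Expected 'azureml:<name>:<version>', got: {ref}")
    return DataAssetRef(name, version)


ref = parse_data_asset_ref("azureml:my_train_data:1")
print(ref.name, ref.version)
```

Pinning the version in the reference is what lets a later run reproduce the same training data, even after newer versions of the asset have been registered.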

    from azure.ai.ml import Input
    from azure.ai.ml.constants import AssetTypes, InputOutputModes

    # Create training data using a data asset
    training_data = Input(
        type=AssetTypes.URI_FILE,
        path="azureml:my_train_data:1",
        mode=InputOutputModes.RO_MOUNT,
    )

AWS SageMaker

AWS SageMaker is tightly integrated with Amazon S3 (Simple Storage Service) for ML workflows, so that SageMaker training jobs, inference endpoints, and pipelines can process input data from S3 buckets and write output data back to them. You may notice that creating a SageMaker managed job environment (which will be discussed in Part 2) takes an S3 bucket location as a key parameter; if it is left unspecified, a default bucket is created.

Unlike Azure ML’s Workspace-centric datastore approach, AWS S3 is a standalone data storage service that provides scalable, durable, and secure cloud storage that can be shared across other AWS services and accounts. This offers more flexibility for permission management at the individual folder level, but at the same time requires explicitly granting the SageMaker execution role access to the S3 bucket.

In this code snippet, we use estimator.fit(train_data_uri) to fit the model on the training data by passing its S3 URI directly; the job then generates the output model and stores it at the specified S3 bucket location. More scenarios can be found in the documentation: “Amazon S3 examples using SDK for Python (Boto3)”.

    import sagemaker
    from sagemaker.estimator import Estimator

    # Define S3 paths
    train_data_uri = "s3://demo-bucket/train/data.csv"
    output_folder_uri = "s3://demo-bucket/model/"

    # Use in training job (image_uri and role are defined as in the earlier snippet)
    estimator = Estimator(
        image_uri=image_uri,
        role=role,
        instance_type="ml.m5.xlarge",
        instance_count=1,
        output_path=output_folder_uri,
    )

    estimator.fit(train_data_uri)

What does this mean in practice?

- Azure ML: use datastores to manage data connections; they handle the credential/identity information behind the scenes. This approach decouples data location and access permissions from the code, allowing the code to remain unchanged when the underlying connection changes.

- AWS SageMaker: use S3 buckets as the primary data storage service for managing the input and output data of SageMaker jobs through their URI paths. This approach requires explicit permission management to grant the SageMaker execution role access to the required S3 bucket.


Take-Home Message

This article compares Azure ML and AWS SageMaker for scalable model training, focusing on project setup, permission management, and data storage patterns, so that teams can better align platform decisions with their existing cloud ecosystem and preferred MLOps workflows.

In Part 1, we compared the high-level project setup and permission management, as well as storing and accessing the data required for model training. Part 2 will discuss the various compute options on both cloud platforms, and the creation and management of runtime environments for training jobs.
