knows the wait. You type an install command and watch the cursor blink. The package manager churns through its index. Seconds stretch. You wonder if something broke.

This delay has a specific cause: metadata bloat. Many package managers maintain a monolithic index of every available package, version, and dependency. As ecosystems grow, these indexes grow with them. Conda-forge surpasses 31,000 packages across multiple platforms and architectures. Other ecosystems face similar scale challenges with hundreds of thousands of packages.

When package managers use monolithic indexes, your client downloads and parses the entire thing for every operation. You fetch metadata for packages you’ll never use. The problem compounds: more packages mean larger indexes, slower downloads, higher memory consumption, and unpredictable build times.

This isn’t unique to any single package manager. It’s a scaling problem that affects any package ecosystem serving thousands of packages to millions of users.

The Architecture of Package Indexes

Conda-forge, like some other package managers, distributes its index as a single file. This design has advantages: the solver gets all the information it needs upfront in a single request, enabling efficient dependency resolution without round-trip delays. When ecosystems were small, a 5 MB index downloaded in seconds and parsed with minimal memory.

At scale, the design breaks down.

Consider conda-forge, one of the largest community-driven package channels for scientific Python. Its repodata.json file, which contains metadata for all available packages, exceeds 47 MB compressed (363 MB uncompressed). Every environment operation requires parsing this file. When any package in the channel changes, which happens frequently as new builds land, the entire file must be re-downloaded. A single new package version invalidates your whole cache, so users re-download 47+ MB to pick up one update.

The consequences are measurable: multi-second fetch times on fast connections, minutes on slower networks, memory spikes while parsing the 363 MB JSON file, and CI pipelines that spend more time on dependency resolution than on actual builds.

Sharding: A Different Approach

The solution borrows from database architecture. Instead of one monolithic index, you split metadata into many small pieces. Each package gets its own “shard” containing only its metadata. Clients fetch the shards they need and ignore the rest.
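As a minimal sketch of the splitting step, the Python below groups a toy monolithic index into one shard per package name. The record layout is a heavily simplified, hypothetical stand-in for repodata.json entries, and `shard_by_name` is an illustrative helper, not part of any conda tool.

```python
from collections import defaultdict

# Toy monolithic index (hypothetical, simplified): filename -> package record.
MONOLITHIC = {
    "packages": {
        "numpy-1.26.4-py312_0.tar.bz2": {"name": "numpy", "version": "1.26.4", "depends": ["python >=3.12"]},
        "numpy-2.0.0-py312_0.tar.bz2": {"name": "numpy", "version": "2.0.0", "depends": ["python >=3.12"]},
        "python-3.12.3-h0_0.tar.bz2": {"name": "python", "version": "3.12.3", "depends": []},
    }
}


def shard_by_name(index: dict) -> dict[str, dict]:
    """Group records by package name: each shard holds every version of one name."""
    shards: dict[str, dict] = defaultdict(dict)
    for filename, record in index["packages"].items():
        shards[record["name"]][filename] = record
    return dict(shards)


shards = shard_by_name(MONOLITHIC)
print(sorted(shards))        # ['numpy', 'python']
print(len(shards["numpy"]))  # 2
```

A client that only needs `python` can now read one small shard instead of parsing every record in the index.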

This pattern appears throughout distributed systems. Database sharding partitions data across servers. Content delivery networks cache assets by region. Search engines distribute indexes across clusters. The principle is consistent: when a single data structure becomes too large, divide it.

Applied to package management, sharding transforms metadata fetching from “download everything, use little” to “download what you need, use all of it.”

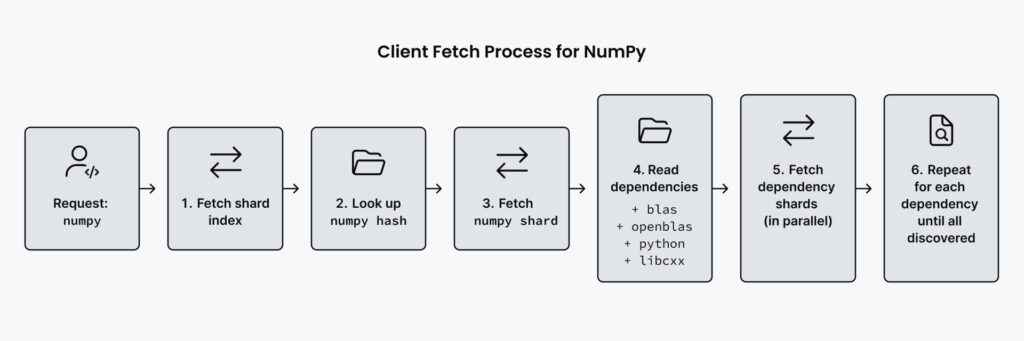

The implementation works through a two-part system outlined in the diagram below. First, a lightweight manifest file, called the shard index, lists all available packages and maps each package name to a hash. Think of a hash as a unique fingerprint generated from a file’s content: if you change even one byte of the file, you get a completely different hash.
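To see the fingerprint property concretely, this sketch hashes two byte strings that differ in a single byte. It uses SHA-256 from Python’s standard `hashlib`; treat the choice of hash function as an assumption of the example rather than a statement about any particular tool.

```python
import hashlib

original = b'{"name": "numpy", "version": "2.0.0"}'
tweaked = b'{"name": "numpy", "version": "2.0.1"}'  # differs in exactly one byte

h_original = hashlib.sha256(original).hexdigest()
h_tweaked = hashlib.sha256(tweaked).hexdigest()

# Same bytes always produce the same hash...
print(h_original == hashlib.sha256(original).hexdigest())  # True
# ...while a one-byte change produces an unrelated one.
print(h_original == h_tweaked)  # False
print(h_original[:16], h_tweaked[:16])
```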

This hash is computed from the compressed shard file content, so each shard file is uniquely identified by its hash. The manifest is small, around 500 KB for conda-forge’s linux-64 subdirectory, which contains over 12,000 package names. Because it records one hash per name, it changes whenever a shard’s content changes or packages are added or removed, but at this size re-downloading it is cheap. Second, individual shard files contain the actual package metadata. Each shard holds all versions of a single package name, stored as a separate compressed file.
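The two parts can be sketched as follows, with stated simplifications: the real CEP-16 files use their own serialization and compression, whereas this example substitutes deterministic JSON plus `zlib` from the standard library. `build_shard_index` and the toy shard contents are hypothetical. The detail it preserves is that each hash is taken over the compressed shard bytes, so the hash also serves as the shard’s file name.

```python
import hashlib
import json
import zlib


def encode_shard(records: dict) -> bytes:
    """Serialize deterministically (sorted keys), then compress.
    JSON + zlib are stand-ins for whatever format a real channel uses."""
    payload = json.dumps(records, sort_keys=True, separators=(",", ":")).encode()
    return zlib.compress(payload, 9)


def build_shard_index(shards: dict[str, dict]) -> tuple[dict[str, str], dict[str, bytes]]:
    """Return (manifest: name -> hash, files: hash -> compressed shard bytes)."""
    manifest: dict[str, str] = {}
    files: dict[str, bytes] = {}
    for name, records in shards.items():
        blob = encode_shard(records)
        digest = hashlib.sha256(blob).hexdigest()  # hash of the *compressed* bytes
        manifest[name] = digest
        files[digest] = blob  # a server would publish this under a name derived from digest
    return manifest, files


shards = {
    "numpy": {"numpy-2.0.0-0.tar.bz2": {"version": "2.0.0", "depends": ["python"]}},
    "python": {"python-3.12.3-0.tar.bz2": {"version": "3.12.3", "depends": []}},
}
manifest, files = build_shard_index(shards)
print(manifest["numpy"] in files)  # True
```

Deterministic serialization matters here: if the same records could encode to different bytes, an unchanged package would get a new hash and defeat caching.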

The key insight is content-addressed storage. Each shard file is named after the hash of its compressed content. If a package hasn’t changed, its shard content stays the same, so the hash stays the same. This means clients can cache shards indefinitely without checking for updates; no round-trip to the server is required.
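A minimal sketch of such a cache, assuming a hypothetical `fetch` callable in place of a real HTTP download: entries are keyed by hash, so a hit never needs revalidation. As a side benefit, content addressing lets the client verify each download against the hash it asked for.

```python
import hashlib


class ShardCache:
    """Hypothetical content-addressed cache: an entry keyed by its hash can never go stale."""

    def __init__(self, fetch):
        self._fetch = fetch  # callable: hash -> bytes (stand-in for an HTTP GET)
        self._store: dict[str, bytes] = {}
        self.network_fetches = 0

    def get(self, digest: str) -> bytes:
        if digest in self._store:  # cache hit: no server round-trip at all
            return self._store[digest]
        blob = self._fetch(digest)
        self.network_fetches += 1
        if hashlib.sha256(blob).hexdigest() != digest:  # integrity check for free
            raise ValueError("shard content does not match its hash")
        self._store[digest] = blob
        return blob


# Fake "server" holding one shard, addressed by its own hash.
blob = b"numpy shard v1"
key = hashlib.sha256(blob).hexdigest()
server = {key: blob}
cache = ShardCache(server.__getitem__)

for _ in range(3):
    cache.get(key)
print(cache.network_fetches)  # 1
```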

When you request a package, the client performs a dependency traversal mirroring the diagram below. It fetches the shard index to look up the package name and find its corresponding hash, then uses that hash to fetch the actual shard file. The shard contains dependency information, which the client then uses to fetch the next batch of shards in parallel.
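The traversal can be sketched as a breadth-first walk that fetches each “wave” of newly discovered names concurrently. Everything here is an assumption for illustration: `CHANNEL` is a hypothetical in-memory channel, `fetch_shard` stands in for the index lookup plus HTTP download, and dependency specs are reduced to a name by taking the first whitespace-separated token.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical toy channel: package name -> shard (only dependency specs kept).
CHANNEL = {
    "pandas": {"depends": ["numpy >=1.26", "python >=3.10", "python-dateutil"]},
    "numpy": {"depends": ["python >=3.10", "libblas"]},
    "python-dateutil": {"depends": ["python", "six >=1.5"]},
    "six": {"depends": ["python"]},
    "python": {"depends": ["openssl >=3"]},
    "libblas": {"depends": []},
    "openssl": {"depends": []},
    "scipy": {"depends": ["numpy"]},  # in the channel, but not needed for pandas
}


def fetch_shard(name: str) -> dict:
    """Stand-in for: look up name in the shard index -> hash -> download shard."""
    return CHANNEL[name]


def collect_shards(roots: list[str], workers: int = 8) -> dict[str, dict]:
    fetched: dict[str, dict] = {}
    frontier = set(roots)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        while frontier:
            batch = sorted(frontier)
            # Fetch every shard in the current batch concurrently.
            for name, shard in zip(batch, pool.map(fetch_shard, batch)):
                fetched[name] = shard
            # Names referenced by this batch that are not fetched yet form the
            # next batch ("numpy >=1.26" -> "numpy").
            frontier = {
                spec.split()[0]
                for name in batch
                for spec in fetched[name]["depends"]
            } - set(fetched)
    return fetched


needed = collect_shards(["pandas"])
print(sorted(needed))
# ['libblas', 'numpy', 'openssl', 'pandas', 'python', 'python-dateutil', 'six']
```

Note that `scipy` is never downloaded: only shards reachable from the requested package are fetched.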

This process discovers only the packages that could possibly be needed, typically 35 to 678 packages for common installs, rather than downloading metadata for every package across all platforms in the channel. Your conda client downloads only the metadata it needs to update your environment.

Measuring the Impact

The conda ecosystem recently implemented sharded repodata through CEP-16, a community specification developed collaboratively by engineers at prefix.dev, Anaconda, and Quansight. Conda-forge is a volunteer-maintained channel that hosts over 31,000 community-built packages independently of any single company. This makes it an ideal proving ground for infrastructure changes that benefit the broader ecosystem.

The benchmarks tell a clear story.

For metadata fetching and parsing, sharded repodata delivers a 10x speed improvement. Cold-cache operations that previously took 18 seconds complete in under 2 seconds. Network transfer drops by a factor of 35. Installing Python previously required downloading 47+ MB of metadata; with sharding, you download roughly 2 MB. Peak memory usage decreases by 15 to 17x, from over 1.4 GB to under 100 MB.

Cache behavior also changes. With monolithic indexes, any channel update invalidates your entire cache. With sharding, only the affected package’s shard needs refreshing. This means more cache hits and fewer redundant downloads over time.
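The difference can be sketched in a few lines, assuming a client that remembers which shard hashes it already holds. The hashes below are made-up placeholders and `shards_to_download` is a hypothetical helper: after a new numpy build, only the numpy entry in the shard index changes, so only that shard is fetched again, whereas a monolithic index has a single cache entry that the same change invalidates entirely.

```python
def shards_to_download(shard_index: dict[str, str], needed: set[str],
                       cached_hashes: set[str]) -> set[str]:
    """Only shards whose current hash is not already cached must be fetched."""
    return {name for name in needed if shard_index[name] not in cached_hashes}


# Toy shard indexes before and after a new numpy build (placeholder hashes).
old_index = {"numpy": "a1f3", "python": "b7c2", "six": "c9d4"}
new_index = {**old_index, "numpy": "e5b8"}  # only numpy's shard changed

cached = set(old_index.values())  # everything fetched on the previous run
needed = {"numpy", "python", "six"}

print(shards_to_download(new_index, needed, cached))  # {'numpy'}
```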

Design Tradeoffs

Sharding introduces complexity. Clients need logic to determine which shards to fetch. Servers need infrastructure to generate and serve thousands of small files instead of one large file. Cache invalidation becomes more granular but also more intricate.

The CEP-16 specification addresses these tradeoffs with a two-tier approach. A lightweight manifest file lists all available shards and their checksums. Clients download this manifest first, then fetch only the shards for the packages they need to resolve. HTTP caching handles the rest: unchanged shards return 304 responses, and changed shards are downloaded fresh.
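The 304 path relies on standard HTTP conditional requests: the client remembers the `ETag` a server sent and echoes it back in an `If-None-Match` header, and the server replies `304 Not Modified` with no body if nothing changed. The sketch below simulates both sides in memory so it runs offline; `serve`, `ConditionalCache`, and the URL are hypothetical, and deriving the ETag from a SHA-256 prefix is just one possible server-side choice.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class Response:
    status: int
    headers: dict[str, str]
    body: bytes = b""


def serve(resource: bytes, request_headers: dict[str, str]) -> Response:
    """Toy server: 304 with an empty body if the client's ETag still matches."""
    etag = '"' + hashlib.sha256(resource).hexdigest()[:16] + '"'
    if request_headers.get("If-None-Match") == etag:
        return Response(304, {"ETag": etag})
    return Response(200, {"ETag": etag}, resource)


@dataclass
class ConditionalCache:
    entries: dict[str, tuple[str, bytes]] = field(default_factory=dict)  # url -> (etag, body)

    def get(self, url: str, server) -> tuple[int, bytes]:
        headers: dict[str, str] = {}
        if url in self.entries:
            headers["If-None-Match"] = self.entries[url][0]  # ask "has it changed?"
        resp = server(headers)
        if resp.status == 304:
            return 304, self.entries[url][1]  # reuse the cached body
        self.entries[url] = (resp.headers["ETag"], resp.body)
        return resp.status, resp.body


cache = ConditionalCache()
url = "https://example.invalid/shard-index"  # hypothetical URL
index_v1, index_v2 = b"shard index v1", b"shard index v2"

s1, _ = cache.get(url, lambda h: serve(index_v1, h))     # first fetch -> 200
s2, _ = cache.get(url, lambda h: serve(index_v1, h))     # unchanged -> 304
s3, body = cache.get(url, lambda h: serve(index_v2, h))  # changed -> 200
print(s1, s2, s3)  # 200 304 200
```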

This design keeps client logic simple while shifting complexity to the server, where it can be optimized once and benefit all users. For conda-forge, Anaconda’s infrastructure team handled this server-side work, meaning the maintainers of its 31,000+ packages and millions of users benefit without changing their workflows.

Broader Applications

The pattern extends beyond conda-forge. Any package manager using monolithic indexes faces similar scaling challenges. The key insight is separating the discovery layer (which packages exist) from the resolution layer (which metadata you need for your specific dependencies).

Different ecosystems have taken different approaches to this problem. Some use per-package APIs, where each package’s metadata is fetched individually; this avoids downloading everything but can result in many sequential HTTP requests during dependency resolution. Sharded repodata offers a middle ground: you fetch only the packages you need, but can batch-fetch related dependencies in parallel, reducing both bandwidth and request overhead.
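A rough way to see the request-overhead difference is to count network round trips on a toy dependency graph. The sketch assumes, purely for illustration, that a strictly sequential client waits for each response before sending the next request, while a batching client sends every request in a dependency “wave” at once, so each wave costs about one round trip. Real clients may pipeline or parallelize differently, and `GRAPH` is hypothetical.

```python
from collections import deque

# Hypothetical toy dependency graph (package -> direct dependencies).
GRAPH = {
    "pandas": ["numpy", "python", "python-dateutil"],
    "numpy": ["python", "libblas"],
    "python-dateutil": ["python", "six"],
    "six": ["python"],
    "python": ["openssl"],
    "libblas": [],
    "openssl": [],
}


def sequential_round_trips(root: str) -> int:
    """One blocking request per package: round trips == packages discovered."""
    seen, queue, trips = {root}, deque([root]), 0
    while queue:
        name = queue.popleft()
        trips += 1  # wait for this response before issuing the next request
        for dep in GRAPH[name]:
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return trips


def batched_round_trips(root: str) -> int:
    """All packages discovered in the same wave are fetched concurrently."""
    seen, frontier, trips = {root}, {root}, 0
    while frontier:
        trips += 1  # one wave costs about one round trip
        frontier = {dep for name in frontier for dep in GRAPH[name]} - seen
        seen |= frontier
    return trips


print(sequential_round_trips("pandas"), batched_round_trips("pandas"))  # 7 3
```

Under these assumptions the sequential count grows with the number of packages, while the batched count tracks only the depth of the dependency graph.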

For teams building internal package repositories, the lesson is architectural: design your metadata layer to scale independently of your package count. Whether you choose per-package APIs, sharded indexes, or another approach, the alternative is watching your build times grow with every package you add.

Trying It Yourself

Pixi already supports sharded repodata for the conda-forge channel, which is included by default. Just use pixi normally and you’re already benefiting from it.

If you use conda with conda-forge, you can enable sharded repodata support:

conda install --name base 'conda-libmamba-solver>=25.11.0'

conda config --set plugins.use_sharded_repodata true

The feature is in beta for conda, and the conda maintainers are collecting feedback before general availability. If you encounter issues, the conda-libmamba-solver repository on GitHub is the place to report them.

For everyone else, the takeaway is simpler: when your tooling feels slow, look at the metadata layer. The packages themselves may not be the bottleneck. The index often is.

The owner of Towards Data Science, Insight Partners, also invests in Anaconda. As a result, Anaconda receives preference as a contributor.