of my Machine Learning Advent Calendar.

Before closing this series, I would like to sincerely thank everyone who followed it, shared feedback, and supported it, especially the Towards Data Science team.

Ending this calendar with Transformers is not a coincidence. The Transformer is not just a fancy name. It is the backbone of modern Large Language Models.

There is a lot to say about RNNs, LSTMs, and GRUs. They played a key historical role in sequence modeling. But today, modern LLMs are overwhelmingly based on Transformers.

The name Transformer itself marks a rupture. From a naming perspective, the authors could have chosen something like Attention Neural Networks, in line with Recurrent Neural Networks or Convolutional Neural Networks. As a Cartesian mind, I would have appreciated a more consistent naming structure. But naming aside, the conceptual shift introduced by Transformers fully justifies the distinction.

Transformers can be used in different ways. Encoder architectures are commonly used for classification. Decoder architectures are used for next-token prediction, and therefore for text generation.

In this article, we will focus on one core idea only: how the attention matrix transforms input embeddings into something more meaningful.

In the previous article, we introduced 1D Convolutional Neural Networks for text. We saw that a CNN scans a sentence using small windows and reacts when it recognizes local patterns. This approach is already very powerful, but it has a clear limitation: a CNN only looks locally.

Today, we move one step further.

The Transformer answers a fundamentally different question.

What if every word could look at all the other words at once?

1. The same word in two different contexts

To understand why attention is needed, we will start with a simple idea.

We will use two different input sentences, both containing the word mouse, but used in different contexts.

In the first input, mouse appears in a sentence with cat. In the second input, mouse appears in a sentence with keyboard.

At the input level, we deliberately use the same embedding for the word “mouse” in both cases. This is important. At this stage, the model does not know which meaning is intended.

The embedding for mouse contains both:

- a strong animal component

- a strong tech component

This ambiguity is intentional. Without context, mouse could refer to an animal or to a computer device.

All other words provide clearer signals. Cat is strongly animal. Keyboard is strongly tech. Words like “and” or “are” mainly carry grammatical information. Words like friends and useful are weakly informative on their own.

At this point, nothing in the input embeddings allows the model to decide which meaning of mouse is correct.
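To make the setup concrete, here is a minimal sketch of such an embedding table in NumPy. The three columns (animal, tech, grammar), the numeric values, and the two sentences "cat and mouse are friends" and "keyboard and mouse are useful" are assumptions chosen for illustration; they are not the values used in the figures of this article.

```python
import numpy as np

# Toy embedding table. Columns: [animal, tech, grammar].
# All numbers are illustrative assumptions.
EMBEDDINGS = {
    "cat":      [1.0, 0.0, 0.0],   # strongly animal
    "keyboard": [0.0, 1.0, 0.0],   # strongly tech
    "mouse":    [1.0, 1.0, 0.0],   # ambiguous: animal and tech
    "and":      [0.0, 0.0, 1.0],   # mainly grammatical
    "are":      [0.0, 0.0, 1.0],   # mainly grammatical
    "friends":  [0.2, 0.0, 0.1],   # weakly informative
    "useful":   [0.0, 0.2, 0.1],   # weakly informative
}

def embed(sentence):
    """Look up one embedding row per word of a whitespace-separated sentence."""
    return np.array([EMBEDDINGS[word] for word in sentence.split()])

X_animal = embed("cat and mouse are friends")
X_tech = embed("keyboard and mouse are useful")

# mouse sits at index 2 in both sentences and has the same input vector
print(X_animal[2], X_tech[2])
```

Before any attention is applied, the two rows for mouse are identical, which is exactly the ambiguity described above.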

In the next chapter, we will see how the attention matrix performs this transformation, step by step.

2. Self-attention: how context is injected into embeddings

2.1 Self-attention, not just attention

We first clarify what kind of attention we are using here. This chapter focuses on self-attention.

Self-attention means that each word looks at the other words of the same input sequence.

In this simplified example, we make an additional pedagogical choice. We assume that Queries and Keys are directly equal to the input embeddings. In other words, there are no learned weight matrices for Q and K in this chapter.

This is a deliberate simplification. It allows us to focus entirely on the attention mechanism, without introducing extra parameters. Similarity between words is computed directly from their embeddings.

Conceptually, this means:

Q = Input

K = Input

Only the Value vectors are used later to propagate information to the output.

In real Transformer models, Q, K, and V are all obtained through learned linear projections. These projections add flexibility, but they do not change the logic of attention itself. The simplified version shown here captures the core idea.

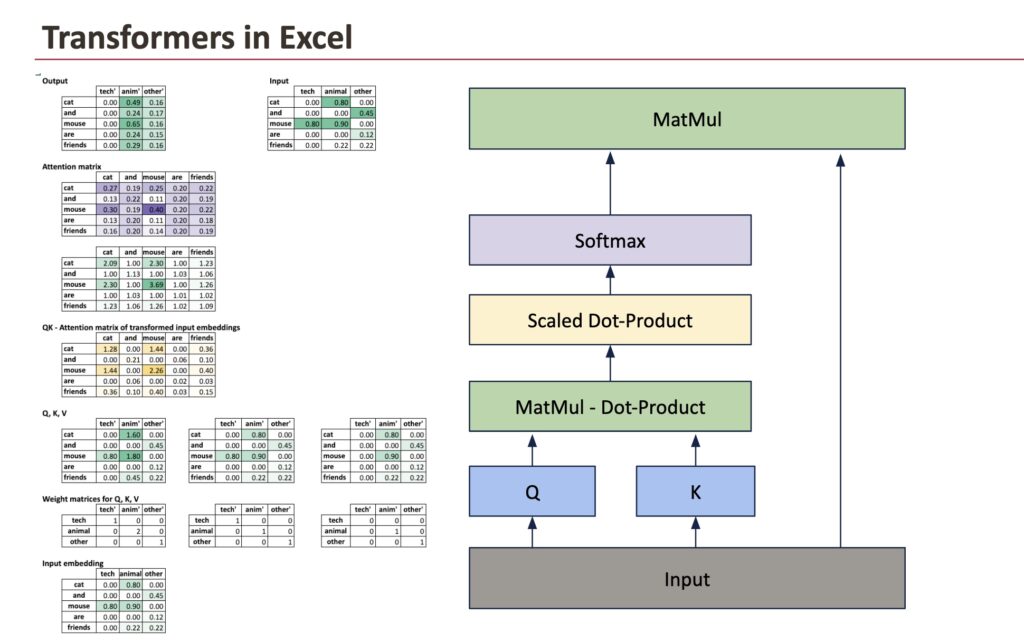

Here is the complete picture that we will decompose.

2.2 From input embeddings to raw attention scores

We start from the input embedding matrix, where each row corresponds to a word and each column corresponds to a semantic dimension.

The first operation is to compare every word with every other word. This is done by computing dot products between Queries and Keys.

Because Queries and Keys are equal to the input embeddings in this example, this step reduces to computing dot products between input vectors.

All dot products are computed at once using a matrix multiplication:

Scores = Input × Inputᵀ

Each cell of this matrix answers a simple question: how similar are these two words, given their embeddings?

At this stage, the values are raw scores. They are not probabilities, and they do not yet have a direct interpretation as weights.
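The score computation can be sketched in a few lines of NumPy. The embedding values and the sentence "cat and mouse are friends" are illustrative assumptions, not the numbers from the figure.

```python
import numpy as np

# Rows: cat, and, mouse, are, friends. Columns: [animal, tech, grammar].
# Illustrative values only (assumed).
words = ["cat", "and", "mouse", "are", "friends"]
X = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.2, 0.0, 0.1],
])

# Q = K = Input in the simplified setting, so Scores = Input × Inputᵀ
scores = X @ X.T

# Row 2 holds the raw similarity of mouse with every word in the sentence
for word, score in zip(words, scores[2]):
    print(f"mouse · {word}: {score:.2f}")
```

Because Q and K are the same matrix here, the score matrix is symmetric. That symmetry generally disappears once separate learned projections are used for Q and K.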

2.3 Scaling and normalization

Raw dot products can grow large as the embedding dimension increases. To keep values in a stable range, the scores are scaled by the square root of the embedding dimension.

ScaledScores = Scores / √d

This scaling step is not conceptually deep, but it is important in practice. It prevents the next step, the softmax, from becoming too sharp.

Once scaled, a softmax is applied row by row. This converts raw scores into positive values that sum to 1.

The result is the attention matrix.

Each row of this matrix describes how much attention a given word pays to every other word in the sentence.
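A minimal sketch of scaling followed by a row-wise softmax, again with assumed toy embeddings. The helper name `softmax_rows` is hypothetical, and the unscaled version is computed only to show the effect of dividing by √d.

```python
import numpy as np

def softmax_rows(z):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    z = z - z.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

# Rows: cat, and, mouse, are, friends. Columns: [animal, tech, grammar].
# Illustrative values only (assumed).
X = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.2, 0.0, 0.1],
])

d = X.shape[1]                       # embedding dimension
scores = X @ X.T                     # raw scores
attention = softmax_rows(scores / np.sqrt(d))
unscaled = softmax_rows(scores)      # for comparison only

print(attention.round(2))
print("row sums:", attention.sum(axis=1))
print("largest weight in the mouse row, scaled vs unscaled:",
      attention[2].max().round(3), unscaled[2].max().round(3))
```

With only three dimensions the effect is modest, but the scaled row is already flatter than the unscaled one, which is the behavior the scaling is meant to preserve as d grows.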

2.4 Interpreting the attention matrix

The attention matrix is the central object of self-attention.

For a given word, its row in the attention matrix answers the question: when updating this word, which other words matter, and how much?

For example, the row corresponding to mouse assigns higher weights to words that are semantically related in the current context. In the sentence with cat and friends, mouse attends more to animal-related words. In the sentence with keyboard and useful, it attends more to technical words.

The mechanism is identical in both cases. Only the surrounding words change the outcome.
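One way to read the attention matrix is to print the mouse row next to the words it refers to. The sketch below does this for two assumed sentences with assumed embedding values; `attention_matrix` is a hypothetical helper, not a library function.

```python
import numpy as np

# Columns: [animal, tech, grammar]. Illustrative values only (assumed).
EMB = {
    "cat": [1.0, 0.0, 0.0], "keyboard": [0.0, 1.0, 0.0],
    "mouse": [1.0, 1.0, 0.0],
    "and": [0.0, 0.0, 1.0], "are": [0.0, 0.0, 1.0],
    "friends": [0.2, 0.0, 0.1], "useful": [0.0, 0.2, 0.1],
}

def attention_matrix(sentence):
    """Simplified attention weights with Q = K = input embeddings."""
    X = np.array([EMB[w] for w in sentence.split()])
    scores = X @ X.T / np.sqrt(X.shape[1])
    e = np.exp(scores - scores.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

A_animal = attention_matrix("cat and mouse are friends")
A_tech = attention_matrix("keyboard and mouse are useful")

for sentence, A in (("cat and mouse are friends", A_animal),
                    ("keyboard and mouse are useful", A_tech)):
    words = sentence.split()
    row = A[words.index("mouse")]
    print({w: round(float(a), 2) for w, a in zip(words, row)})
```

In this toy setup, mouse gives more weight to cat (or keyboard) than to the grammatical words, because the dot product with a content word that shares a dimension is larger.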

2.5 From attention weights to output embeddings

The attention matrix itself is not the final result. It is a set of weights.

To produce the output embeddings, we combine these weights with the Value vectors.

Output = Attention × V

In this simplified example, the Value vectors are taken directly from the input embeddings. Each output word vector is therefore a weighted average of the input vectors, with weights given by the corresponding row of the attention matrix.

For a word like mouse, this means that its final representation becomes a mix of:

- its own embedding

- the embeddings of the words it attends to most

This is the precise moment where context is injected into the representation.
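The weighted-average step can be sketched as a single matrix product. As before, the embedding values are assumptions chosen for illustration, and V is set equal to the input, as in the simplified setting of this chapter.

```python
import numpy as np

# Rows: cat, and, mouse, are, friends. Columns: [animal, tech, grammar].
# Illustrative values only (assumed).
X = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.2, 0.0, 0.1],
])

# Attention weights with Q = K = X
scores = X @ X.T / np.sqrt(X.shape[1])
e = np.exp(scores - scores.max(axis=1, keepdims=True))
attention = e / e.sum(axis=1, keepdims=True)

# Output = Attention × V, with V = X in the simplified setting
V = X
output = attention @ V

print("mouse before:", X[2])
print("mouse after: ", output[2].round(3))
```

Since every row of the attention matrix is positive and sums to 1, each output vector is a convex combination of the input rows and stays within the range spanned by the inputs on every dimension.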

At the end of self-attention, the embeddings are no longer ambiguous.

The word mouse no longer has the same representation in both sentences. Its output vector reflects its context. In one case, it behaves like an animal. In the other, it behaves like a technical object.

Nothing in the embedding table changed. What changed is how information was combined across words.

This is the core idea of self-attention, and the foundation on which Transformer models are built.

If we now compare the two examples, cat and mouse on the left and keyboard and mouse on the right, the effect of self-attention becomes explicit.

In both cases, the input embedding of mouse is identical. Yet the final representation differs. In the sentence with cat, the output embedding of mouse is dominated by the animal dimension. In the sentence with keyboard, the technical dimension becomes more prominent. The difference comes entirely from how attention redistributed weights across words before mixing the values.

This comparison highlights the role of self-attention: it does not change words in isolation, but reshapes their representations by taking the full context into account.
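The comparison can be reproduced end to end with a small function. This is a sketch under the same assumptions as before: the embedding values and the two sentences are illustrative, and `self_attention` is a hypothetical helper implementing the simplified case Q = K = V = input.

```python
import numpy as np

# Columns: [animal, tech, grammar]. Illustrative values only (assumed).
EMB = {
    "cat": [1.0, 0.0, 0.0], "keyboard": [0.0, 1.0, 0.0],
    "mouse": [1.0, 1.0, 0.0],
    "and": [0.0, 0.0, 1.0], "are": [0.0, 0.0, 1.0],
    "friends": [0.2, 0.0, 0.1], "useful": [0.0, 0.2, 0.1],
}

def self_attention(X):
    """Simplified self-attention with Q = K = V = X (no learned weights)."""
    scores = X @ X.T / np.sqrt(X.shape[1])
    e = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights = e / e.sum(axis=1, keepdims=True)
    return weights @ X

def mouse_output(sentence):
    """Return the output vector of the word mouse in the given sentence."""
    words = sentence.split()
    X = np.array([EMB[w] for w in words])
    return self_attention(X)[words.index("mouse")]

mouse_animal = mouse_output("cat and mouse are friends")
mouse_tech = mouse_output("keyboard and mouse are useful")

print("input mouse:          ", EMB["mouse"])
print("mouse with cat:       ", mouse_animal.round(3))
print("mouse with keyboard:  ", mouse_tech.round(3))
```

The input vector for mouse has equal animal and tech components. After attention, the animal component is larger in the first sentence and the tech component is larger in the second.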

3. Learning how to mix information

3.1 Introducing learned weights for Q, K, and V

Until now, we have focused on the mechanics of self-attention itself. We now introduce an important element: learned weights.

In a real Transformer, Queries, Keys, and Values are not taken directly from the input embeddings. Instead, they are produced by learned linear transformations.

For each word embedding, the model computes:

Q = Input × W_Q

K = Input × W_K

V = Input × W_V

These weight matrices are learned during training.

At this stage, we usually keep the same dimensionality. The input embeddings, Q, K, V, and the output embeddings all have the same number of dimensions. This makes the role of attention easier to understand: it modifies representations without changing the space they live in.

Conceptually, these weights allow the model to decide:

- which aspects of a word matter for comparison (Q and K)

- which aspects of a word should be transmitted to others (V)
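The projected version can be sketched as follows. The weight matrices here are random stand-ins, since no training is performed; in a real model they would be learned. The embedding values and the function name `attention` are also assumptions made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax_rows(z):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def attention(X, W_Q, W_K, W_V):
    """Self-attention with separate projections for Q, K, and V."""
    Q, K, V = X @ W_Q, X @ W_K, X @ W_V
    weights = softmax_rows(Q @ K.T / np.sqrt(K.shape[1]))
    return weights @ V, weights

# Rows: cat, and, mouse, are, friends. Columns: [animal, tech, grammar].
# Illustrative values only (assumed).
X = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.2, 0.0, 0.1],
])

d = X.shape[1]
# Random square matrices stand in for weights that training would learn
W_Q, W_K, W_V = (rng.normal(size=(d, d)) for _ in range(3))

output, weights = attention(X, W_Q, W_K, W_V)
print(X.shape, output.shape)
```

With square projection matrices, the output keeps the dimensionality of the input, matching the setting described above. Setting all three matrices to the identity recovers the simplified version from the previous chapter.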

3.2 What the model actually learns

The attention mechanism itself is fixed. Dot products, scaling, softmax, and matrix multiplications always work the same way. What the model actually learns are the projections.

By adjusting the Q and K weights, the model learns how to measure relationships between words for a given task. By adjusting the V weights, it learns what information should be propagated when attention is high. The structure defines how information flows, while the weights define what information flows.

Because the attention matrix depends on Q and K, it is partially interpretable. We can inspect which words attend to which others and observe patterns that often align with syntax or semantics.

This becomes clear when comparing the same word in two different contexts. In both examples, the word mouse starts with exactly the same input embedding, containing both an animal and a tech component. On its own, it is ambiguous.

What changes is not the word, but the attention it receives. In the sentence with cat and friends, attention emphasizes animal-related words. In the sentence with keyboard and useful, attention shifts toward technical words. The mechanism and the weights are identical in both cases, yet the output embeddings differ. The difference comes entirely from how the learned projections interact with the surrounding context.

This is precisely why the attention matrix is interpretable: it reveals which relationships the model has learned to consider meaningful for the task.

3.3 Changing the dimensionality on purpose

Nothing, however, forces Q, K, and V to have the same dimensionality as the input.

The Value projection, in particular, can map embeddings into a space of a different dimension. When this happens, the output embeddings inherit the dimensionality of the Value vectors.

This is not a theoretical curiosity. It is exactly what happens in real models, especially in multi-head attention. Each head operates in its own subspace, often with a smaller dimension, and the results are later concatenated into a larger representation.
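The change of dimensionality is easy to see from array shapes. In this sketch, the head size of 2 and the choice of two heads are arbitrary assumptions, the projections are random rather than learned, and the final output projection that multi-head attention layers usually apply after concatenation is left out for brevity.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax_rows(z):
    """Row-wise softmax; subtracting the row max avoids overflow in exp."""
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def head(X, d_head):
    """One attention head with random (untrained) projections into d_head dims."""
    d_in = X.shape[1]
    W_Q, W_K, W_V = (rng.normal(size=(d_in, d_head)) for _ in range(3))
    Q, K, V = X @ W_Q, X @ W_K, X @ W_V
    weights = softmax_rows(Q @ K.T / np.sqrt(d_head))
    return weights @ V               # shape: (n_words, d_head)

# Five words with three input dimensions. Illustrative values only (assumed).
X = np.array([
    [1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0],
    [1.0, 1.0, 0.0],
    [0.0, 0.0, 1.0],
    [0.2, 0.0, 0.1],
])

single = head(X, d_head=2)
# Two independent heads, concatenated along the feature axis
multi = np.concatenate([head(X, d_head=2) for _ in range(2)], axis=1)

print(X.shape, single.shape, multi.shape)
```

The number of words never changes. Only the feature dimension does: it follows the Value projection for a single head, and the sum of the head sizes after concatenation.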

So attention can do two things:

- mix information across words

- reshape the space in which this information lives

This explains why Transformers scale so well.

They do not rely on fixed features. They learn:

- how to compare words

- how to route information

- how to project meaning into different spaces

The attention matrix controls where information flows.

The learned projections control what information flows and how it is represented.

Together, they form the core mechanism behind modern language models.

Conclusion

This Advent Calendar was built around a simple idea: understanding machine learning models by looking at how they actually transform data.

Transformers are a fitting way to close this journey. They do not rely on fixed rules or local patterns, but on learned relationships between all elements of a sequence. Through attention, they turn static embeddings into contextual representations, which is the foundation of modern language models.

Thanks again to everyone who followed this series, shared feedback, and supported it, especially the Towards Data Science team.

Merry Christmas 🎄