There is a lot of hype right now around LLMs. Engineers often compare and praise recent breakthrough models like ChatGPT, Llama, Gemini, and Mistral, which certainly deserve attention for their powerful capabilities. At the same time, developers tend to overlook many other impactful models that have also brought a lot of success to the machine learning industry.

In this article, I would like to talk about one of the most iconic models developed by OpenAI: CLIP. Released in 2021, CLIP can be used in a variety of NLP and computer vision projects, and at the time of its release it produced state-of-the-art results on a range of tasks. While many engineers think of CLIP as just an embedding model, which is true, its range of applications is extremely wide.

We will cover the CLIP model in detail, including its architecture and training process, performance, and applications.

Contrastive learning

Before discussing the CLIP architecture, let us understand the meaning of contrastive learning, which plays an integral role in the CLIP design.

Contrastive learning is a self-supervised learning method whose objective is to teach an embedding model to produce embeddings such that similar samples are brought closer together in the embedding space and dissimilar ones are pushed further apart.

Simply put, in contrastive learning the model works with pairs of objects. During training, it does not know in advance whether they are actually similar or not. After it predicts their distance (similarity) from the computed embeddings, the loss function is computed. Basically, there are two cases:

- The original objects were similar. The loss value leads to a weight update that adjusts the embeddings so that their similarity is higher next time.

- The original objects were dissimilar. In this case, the model updates its weights so that the similarity between this pair of embeddings will be lower next time.
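
The two cases can be illustrated with a small sketch. It assumes one common pairwise formulation based on cosine similarity (similar pairs are penalized by `1 - similarity`, dissimilar pairs by how far their similarity exceeds a margin). The function names and the `margin` parameter are illustrative, and this is not the exact loss CLIP uses.

```python
import numpy as np

def cosine_similarity(a, b):
    """Cosine similarity between two 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

def pair_loss(emb_a, emb_b, is_similar, margin=0.0):
    """Pairwise contrastive loss on cosine similarity (illustrative formulation)."""
    sim = cosine_similarity(emb_a, emb_b)
    if is_similar:
        # Similar pair: the loss shrinks as the similarity approaches 1.
        return 1.0 - sim
    # Dissimilar pair: only penalize similarity above the margin.
    return max(0.0, sim - margin)

a = np.array([1.0, 0.0])
b = np.array([0.0, 1.0])
print(pair_loss(a, a, is_similar=True))   # identical embeddings, labeled similar
print(pair_loss(a, b, is_similar=True))   # orthogonal embeddings, labeled similar
print(pair_loss(a, a, is_similar=False))  # identical embeddings, labeled dissimilar
```

Minimizing this loss with gradient descent pulls the embeddings of similar pairs together and pushes those of dissimilar pairs apart, which is the behavior described in the two cases above.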

Architecture & Training

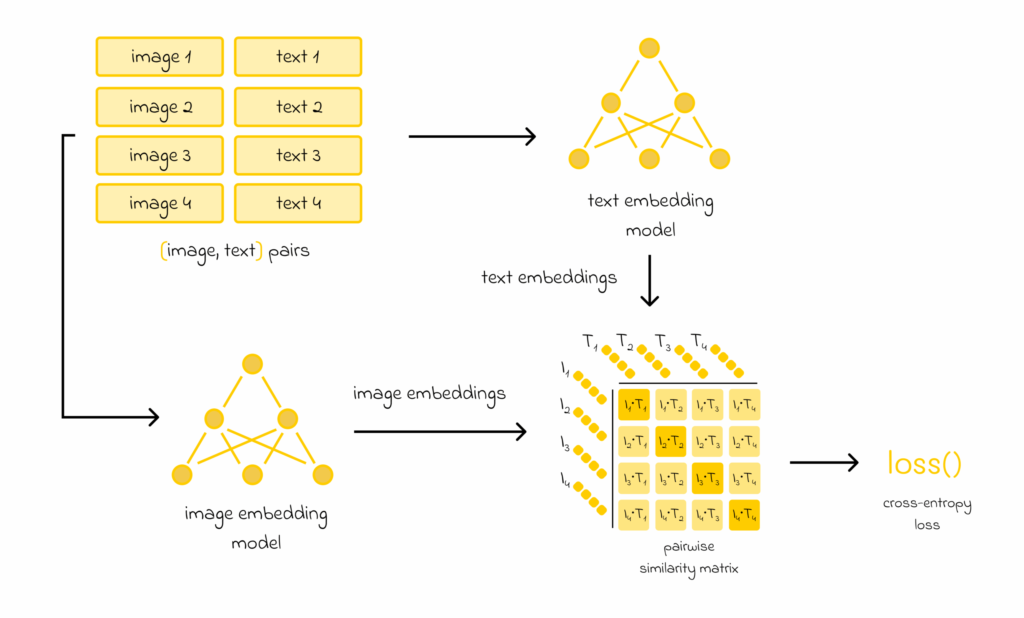

The CLIP developers collected a huge dataset of 400M (image, text) pairs. Every image was accompanied by a textual description.

The goal was to construct meaningful embedding representations such that the similarity between them measures how well a given text description matches an image. For that, the authors took two already existing model architectures:

- Text embedding model

- Image embedding model

The 400M (image, text) pairs were split into batches. Every image and text in each batch was passed through the image or text embedding model, respectively, to produce embeddings. As a result, if there were n (image, text) pairs in the batch, then n image embeddings and n text embeddings would be produced.

After that, an n × n matrix of pairwise cosine similarities is constructed between the image and text embeddings.

Every element on the main diagonal of the pairwise matrix represents the similarity between an image and the text that were originally paired together in the batch. Since the text description corresponds well to the image, the similarities on the main diagonal should be maximized.

On the other hand, elements off the diagonal were not paired together and come from different pairs. Therefore, their similarities should be minimized.

The calculated similarities are then passed to the cross-entropy loss function, which is used to perform a weight update for both embedding models.
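
The batch-level procedure can be sketched in NumPy as follows. This is a minimal sketch operating on precomputed embeddings, not the authors' training code. Averaging the image-to-text and text-to-image cross-entropy terms follows the pseudocode in the CLIP paper. The fixed `temperature` value is an assumption made for simplicity (in CLIP the temperature is a learned parameter), and the function names are illustrative.

```python
import numpy as np

def log_softmax(logits, axis):
    """Numerically stable log-softmax along the given axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def clip_style_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric cross-entropy over the n x n cosine similarity matrix.

    image_emb, text_emb: arrays of shape (n, d); row i of each forms a pair.
    temperature: fixed here as an assumption; CLIP learns it during training.
    """
    # L2-normalize so that dot products are cosine similarities.
    image_emb = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    text_emb = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)

    # logits[i, j] = scaled similarity between image i and text j.
    logits = image_emb @ text_emb.T / temperature
    idx = np.arange(logits.shape[0])

    # The correct "class" for image i is text i (the main diagonal), and vice versa.
    loss_image_to_text = -log_softmax(logits, axis=1)[idx, idx].mean()
    loss_text_to_image = -log_softmax(logits, axis=0)[idx, idx].mean()
    return float((loss_image_to_text + loss_text_to_image) / 2)

# Toy batch: three perfectly aligned pairs versus the same texts shuffled.
images = np.eye(3)
print(clip_style_loss(images, np.eye(3)))             # aligned pairs: low loss
print(clip_style_loss(images, np.eye(3)[[1, 2, 0]]))  # mismatched pairs: high loss
```

Treating each row (and each column) of the matrix as a classification problem whose correct answer lies on the diagonal is what simultaneously maximizes the diagonal similarities and minimizes the off-diagonal ones.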

Details

The main components of CLIP are the embedding models used to encode texts and images:

- Text is encoded with a Transformer-based model. The CLIP paper describes it as a GPT-2-style Transformer with masked self-attention, rather than a BERT-style bidirectional encoder.

- For images, the encoding can be done either by traditional convolutional networks (ResNet) or by a Vision Transformer model (ViT).

Both models were trained from scratch. The embedding size depends on the model variant; the commonly used ViT-B/32 variant, for example, generates embeddings of size 512. Given that the dataset (400M pairs) is large, ViT is usually preferred over ResNet.

Advantages

There are several strengths of CLIP worth noting:

- CLIP can be used for various tasks, not just for embedding generation (examples are in the following section).

- Zero-shot CLIP performance is comparable to that of a simple supervised baseline: a linear classifier on top of ResNet features.

- Computational efficiency: many computations can be run in parallel.

Applications

Embeddings

The most obvious CLIP application is computing text and image embeddings. The embeddings can be used separately for text or image tasks, for example, in similarity search pipelines or RAG systems.

Moreover, texts and images can be used together when there is a need to associate an image with its corresponding text description.
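
As an illustration of the similarity search use case, here is a minimal sketch of top-k retrieval over precomputed embeddings. The small vectors are toy stand-ins for real CLIP embeddings, and `top_k_similar` is a hypothetical helper, not part of any CLIP library.

```python
import numpy as np

def top_k_similar(query_emb, corpus_emb, k=3):
    """Return (index, cosine similarity) for the k corpus items closest to the query.

    query_emb: shape (d,), e.g. the embedding of a text query.
    corpus_emb: shape (n, d), e.g. the embeddings of n indexed images.
    """
    query = query_emb / np.linalg.norm(query_emb)
    corpus = corpus_emb / np.linalg.norm(corpus_emb, axis=1, keepdims=True)
    scores = corpus @ query              # cosine similarity to every corpus item
    order = np.argsort(-scores)[:k]      # indices sorted by descending similarity
    return [(int(i), float(scores[i])) for i in order]

# Toy stand-ins for embeddings (real CLIP embeddings have hundreds of dimensions).
corpus = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
query = np.array([1.0, 0.1])
print(top_k_similar(query, corpus, k=2))
```

Because CLIP maps texts and images into the same space, the query can be a text embedding and the corpus a set of image embeddings, or the other way around.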

Image classification

Apart from generating image and text embeddings, one of the biggest strengths of CLIP is its ability to solve other tasks in a zero-shot manner.

For example, let's take an image classification task. If we are given an animal image and need to identify its class from a list of animals, we can embed every animal name. Then, by finding the text embedding most similar to the given image embedding, we can directly identify the animal class.

Regarding this recognition method, studies have shown that it is better to embed every text (class name) using the following prompt structure: “a photo of <animal class>”. For other task types, the best prompt might differ.
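
The zero-shot procedure can be sketched as follows. To stay self-contained, the sketch uses toy vectors in place of real CLIP embeddings; in practice, the prompts would be passed through the CLIP text encoder and the image through the image encoder. The helper names, the exact template string, and the `temperature` value are assumptions made for illustration.

```python
import numpy as np

def build_prompts(class_names, template="a photo of a {}"):
    """Wrap each class name in a prompt before it is embedded."""
    return [template.format(name) for name in class_names]

def zero_shot_classify(image_emb, prompt_embs, class_names, temperature=0.01):
    """Pick the class whose prompt embedding is most similar to the image embedding.

    image_emb: shape (d,); prompt_embs: shape (num_classes, d).
    temperature: assumed value used only to turn similarities into probabilities.
    """
    image = image_emb / np.linalg.norm(image_emb)
    prompts = prompt_embs / np.linalg.norm(prompt_embs, axis=1, keepdims=True)
    logits = (prompts @ image) / temperature
    probs = np.exp(logits - logits.max())   # stable softmax over the classes
    probs /= probs.sum()
    return class_names[int(np.argmax(probs))], probs

class_names = ["cat", "dog", "horse"]
print(build_prompts(class_names))  # these strings would go to the text encoder

# Toy stand-ins: one embedding per prompt and one image embedding.
prompt_embs = np.eye(3)
image_emb = np.array([0.1, 0.9, 0.2])
label, probs = zero_shot_classify(image_emb, prompt_embs, class_names)
print(label)
```

No classifier is trained at any point: adding a new class only requires embedding one more prompt.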

OCR

OCR stands for optical character recognition and simply means recognizing text in images. OCR tasks are usually solved by specially trained supervised models. Nevertheless, CLIP's impressive capabilities also allow it to identify text in images in a zero-shot manner.

If there is a list of all possible texts that can appear in the image, then, in a similar manner to the previous case, we can encode all possible options and choose the most similar pair. However, in this case, the number of all possible words or texts is usually much larger than the typical number of labels in an image classification task. Encoding all of them would be slow and inefficient. That is why CLIP is rarely used for OCR tasks with long text sequences.

In terms of OCR, CLIP works much better for short words, and even better for symbol recognition. For example, it is easy to set up a digit recognition task with CLIP since there are only 10 classes (each class represents a digit between 0 and 9).

One interesting observation is that zero-shot CLIP achieves only 88% accuracy on the well-known MNIST handwritten digit recognition task, while other simple models easily reach 99% accuracy. What is essential to keep in mind is that, even though CLIP has impressive zero-shot capabilities, there can still exist very specific image types on which CLIP has not been trained.

Here are some important notes:

- CLIP is not good at some abstract tasks, like counting objects in a photo or estimating how close two objects are to each other in the image.

- CLIP's zero-shot performance on standard computer vision tasks is comparable to that of older models trained on ImageNet. Nevertheless, to beat state-of-the-art models, the authors estimate that zero-shot CLIP would need roughly a 1000x increase in compute, which is infeasible to train with current hardware.

Conclusion

In this article, we have studied the architectural principles of CLIP. Trained on 400M (image, text) pairs, CLIP reached state-of-the-art performance on many tasks. While CLIP often fails on some abstract downstream tasks, it still has good capabilities to perform other standard computer vision tasks using zero-shot techniques.

Resources

All images unless otherwise noted are by the author.