“Slow thinking (system 2) is effortful, infrequent, logical, calculating, conscious.”

Daniel Kahneman, “Thinking, Fast and Slow”

Large Language Models (LLMs) such as ChatGPT often behave like what Daniel Kahneman, winner of the 2002 Nobel Memorial Prize in Economic Sciences, calls System 1: fast, confident and almost effortless. As you might guess, there is also a System 2: a slower, more effortful mode of thinking.

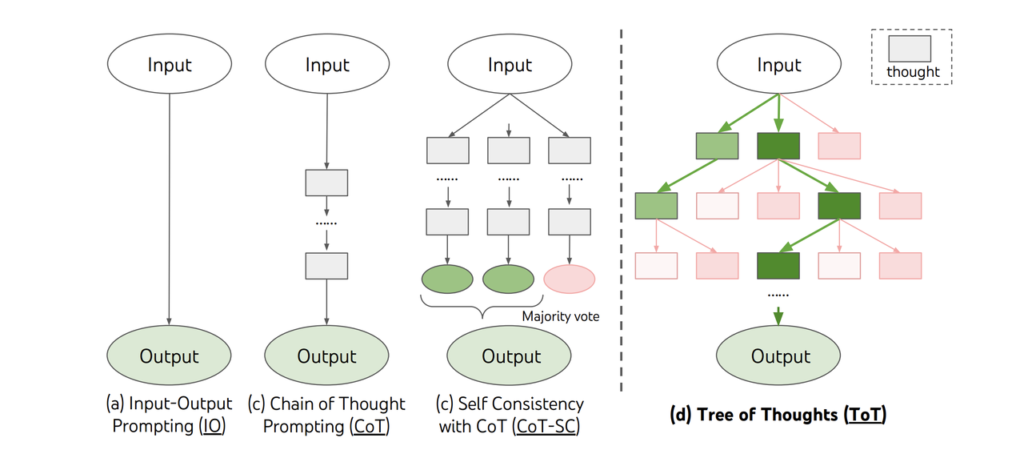

In recent years, researchers have been developing methods to bring System 2-style thinking into LLMs through better prompting techniques. Tree-of-Thought (ToT) prompting is one of the most prominent of these techniques: it lets the model explore multiple reasoning paths, potentially leading to better decisions.

In this blog post, I’ll walk through a case study in which a ToT-powered LLM agent plays the classic game Minesweeper: not by guessing, but by reasoning. Just like you would.

CoT and ToT

We’ll start our story with Chain-of-Thought (CoT) prompting, a technique that guides LLMs to reason step by step. Look at the following example:

Q: I bought 10 apples at the supermarket. Then I gave 2 apples to the neighbor and another 2 to my pigs. Not enough apples for me now!! I then bought 5 more apples from a grocery store, but 1 was rotten. How many apples now?

A: You started with 10 apples. You gave 2 apples to the neighbor and 2 to the pigs, so you had 6 apples. Then you bought 5 more apples, so you had 11 apples. Finally, 1 apple was rotten, so you have 10 good apples in the end.

As you can see, when guided to think step by step, the LLM produces a more careful chain of reasoning.
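A common way to elicit this behavior is few-shot CoT prompting: show the model one worked, step-by-step answer before the new question. Below is a minimal sketch; `build_cot_prompt` and the pen question are illustrative names of our own, and the plain `Q:`/`A:` text format is an assumption rather than a requirement of any particular API.

```python
# One worked exemplar: the question plus a step-by-step answer (from the example above)
EXEMPLAR_Q = (
    "I bought 10 apples at the supermarket. Then I gave 2 apples to the neighbor "
    "and another 2 to my pigs. I then bought 5 more apples, but 1 was rotten. "
    "How many apples now?"
)
EXEMPLAR_A = (
    "You had 10 apples. You gave away 2 + 2 = 4, so you had 6 apples. "
    "You bought 5 more, so you had 11 apples. 1 was rotten, so you have 10 apples."
)


def build_cot_prompt(question: str) -> str:
    """Few-shot CoT: show one step-by-step answer, then pose the new question."""
    return f"Q: {EXEMPLAR_Q}\nA: {EXEMPLAR_A}\n\nQ: {question}\nA:"


prompt = build_cot_prompt("I had 3 pens, lost 1 and bought 4 more. How many pens now?")
print(prompt)
```

The trailing `A:` leaves the answer open, so the model tends to continue in the same step-by-step style as the exemplar.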

Tree-of-Thought (ToT) prompting expands on CoT. As the name suggests, it organizes reasoning like a tree, where each node is a possible “thought” and the branches are possible paths. Unlike CoT, it does not follow a single linear chain.

In practice, ToT builds a tree in which each branch is a sequence of intermediate steps leading to a final solution. The model evaluates each step, classifying each thought as “sure”, “maybe” or “impossible”. ToT then explores the problem space with a search algorithm such as Breadth-First Search (BFS) or Depth-First Search (DFS) to choose the best paths.
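The BFS variant can be sketched in a few lines: expand every kept thought, label each child, prune the “impossible” ones and keep only the best few per level. This is a minimal sketch under stated assumptions: `propose` and `evaluate` would normally be LLM calls, but here they are deterministic stand-ins for a toy puzzle (pick 3 numbers from 1 to 4 that sum to 6), and the 1.0/0.5/0.0 mapping of the labels is our own choice.

```python
from typing import Callable, List, Tuple, TypeVar

State = TypeVar("State")

# Numeric value for each verbal evaluation (this mapping is an assumption)
LABEL_VALUE = {"sure": 1.0, "maybe": 0.5, "impossible": 0.0}


def tot_bfs(root: State,
            propose: Callable[[State], List[State]],
            evaluate: Callable[[State], str],
            depth: int,
            breadth: int) -> List[State]:
    """Breadth-first ToT: expand all kept thoughts, score children, keep the best `breadth`."""
    frontier = [root]
    for _ in range(depth):
        scored: List[Tuple[float, State]] = []
        for state in frontier:
            for child in propose(state):
                label = evaluate(child)
                if label != "impossible":  # prune dead branches
                    scored.append((LABEL_VALUE[label], child))
        # Python's sort is stable, so ties keep their generation order
        scored.sort(key=lambda pair: pair[0], reverse=True)
        frontier = [child for _, child in scored[:breadth]]
        if not frontier:
            break
    return frontier


# Toy stand-ins for the LLM: pick 3 numbers from 1..4 that sum to 6
TARGET, STEPS = 6, 3


def propose(state):
    return [state + (n,) for n in range(1, 5)]


def evaluate(state):
    remaining, left = TARGET - sum(state), STEPS - len(state)
    if not (left * 1 <= remaining <= left * 4):
        return "impossible"  # the target can no longer be reached
    return "sure" if left == 0 else "maybe"


solutions = tot_bfs((), propose, evaluate, depth=STEPS, breadth=3)
print(solutions)  # → [(1, 1, 4), (1, 2, 3), (1, 3, 2)]
```

Swapping the frontier list for a recursive call per child would give the DFS variant; BFS is shown because its pruning step is easier to see.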

Here is an example from an office building: I have an office with a maximum capacity of 20 people, but 28 people are coming this week. I have the following three branches as possible solutions:

- Branch 1: Move 8 people
  - Is there another room nearby? → Yes
  - Does it have space for 8 more people? → Yes
  - Can we move people without any administrative process? → Maybe
  - Evaluation: Promising!
- Branch 2: Expand the room
  - Can we make the room larger? → Maybe
  - Is this allowed under safety regulations? → No
  - Can we request an exception from the building? → No
  - Evaluation: It won’t work!
- Branch 3: Split the group into two
  - Can we divide these people into two groups? → Yes
  - Can we let them come on different days? → Maybe
  - Evaluation: Good potential!

As you can see, this process mimics how we solve hard problems: we don’t think in a straight line. Instead, we explore, evaluate, and choose.
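The evaluate-and-choose step for the office example can be made concrete with a toy scoring rule. This is a sketch under an assumed scheme that is not part of the example itself: any “No” makes a branch infeasible (pruned), and the remaining branches are ranked by the mean of Yes = 1, Maybe = 0.5.

```python
# Answer values: Yes = 1, Maybe = 0.5, No = 0 (an assumed scoring scheme)
ANSWER_VALUE = {"Yes": 1.0, "Maybe": 0.5, "No": 0.0}

# Each branch lists the answers to its sub-step questions, as in the example above
branches = {
    "Move 8 people": ["Yes", "Yes", "Maybe"],
    "Expand the room": ["Maybe", "No", "No"],
    "Split the group into two": ["Yes", "Maybe"],
}


def evaluate_branch(answers):
    """Return None for an infeasible branch (any hard 'No'), else the mean answer value."""
    if "No" in answers:
        return None
    return sum(ANSWER_VALUE[a] for a in answers) / len(answers)


scores = {}
for name, answers in branches.items():
    score = evaluate_branch(answers)
    if score is not None:  # prune branches that "won't work"
        scores[name] = score

best = max(scores, key=scores.get)
print(scores)
print("Best branch:", best)  # → Best branch: Move 8 people
```

Under this particular rule, Branch 1 (about 0.83) edges out Branch 3 (0.75); a different weighting could rank them differently.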

Case study

Minesweeper

In the unlikely case that you don’t know it, Minesweeper is a simple video game.

The board is divided into cells, with mines randomly distributed among them. A number on a revealed cell shows how many mines are in its (up to eight) adjacent cells. You win when you open every cell that does not contain a mine. However, if you hit a mine before that, the game is over and you lose.

We apply ToT to Minesweeper, a game that requires applying logical rules and reasoning under constraints.
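The two basic rules a player uses can be written down deterministically. This is a minimal sketch, not part of the article’s agent: it assumes the player’s view is a grid where revealed cells hold their number and hidden cells hold `None`, and `neighbors`/`deduce` are illustrative names. It generalizes the “1”-based rules that the agent’s prompt spells out to any number.

```python
from itertools import product


def neighbors(r, c, n):
    """Yield the in-bounds neighbors of (r, c) on an n x n grid."""
    for dr, dc in product((-1, 0, 1), repeat=2):
        if (dr, dc) != (0, 0) and 0 <= r + dr < n and 0 <= c + dc < n:
            yield r + dr, c + dc


def deduce(view, flagged):
    """Apply the two basic Minesweeper rules to every revealed number.

    view[r][c] holds the revealed number, or None if the cell is still hidden.
    Returns (cells proven safe, cells proven to be mines).
    """
    n = len(view)
    safe, mines = set(), set()
    for r, c in product(range(n), repeat=2):
        number = view[r][c]
        if number is None:
            continue
        around = list(neighbors(r, c, n))
        flags = sum(1 for p in around if p in flagged)
        hidden = [p for p in around if view[p[0]][p[1]] is None and p not in flagged]
        if not hidden:
            continue
        if number == flags:
            # Every mine around this number is already flagged: the rest are safe
            safe.update(hidden)
        elif number - flags == len(hidden):
            # As many unknown cells as missing mines: all of them are mines
            mines.update(hidden)
    return safe, mines


# A '1' touching exactly one hidden cell: that cell must be a mine
print(deduce([[1, None], [1, 1]], flagged=set()))  # → (set(), {(0, 1)})

# A '1' touching one flagged mine: its other hidden neighbors are safe
view = [[None, 1, None], [None, None, None], [None, None, None]]
print(deduce(view, flagged={(0, 0)}))
```

Rules like these only cover the “sure” cases; positions where they prove nothing are where weighing several candidate moves starts to matter.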

We simulate the game with the following code:

```python
import random

import numpy as np

# --- Game Setup ---
BOARD_SIZE = 8  # 8x8 board, as in the games played below
NUM_MINES = 10  # number of hidden mines


def generate_board():
    board = np.zeros((BOARD_SIZE, BOARD_SIZE), dtype=int)
    mines = set()
    # Place NUM_MINES mines at distinct random positions
    while len(mines) < NUM_MINES:
        r, c = random.randint(0, BOARD_SIZE - 1), random.randint(0, BOARD_SIZE - 1)
        if (r, c) not in mines:
            mines.add((r, c))
            board[r][c] = -1  # -1 represents a mine

    # Fill in adjacent mine counts
    for r in range(BOARD_SIZE):
        for c in range(BOARD_SIZE):
            if board[r][c] == -1:
                continue
            count = 0
            for dr in [-1, 0, 1]:
                for dc in [-1, 0, 1]:
                    if 0 <= r + dr < BOARD_SIZE and 0 <= c + dc < BOARD_SIZE:
                        if board[r + dr][c + dc] == -1:
                            count += 1
            board[r][c] = count

    return board, mines
```

You can see that we generate a BOARD_SIZE × BOARD_SIZE board with NUM_MINES mines, where -1 marks a mine and every other cell stores its number of adjacent mines.
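Playing the game also needs two mechanics that the board code above does not show: opening a cell and detecting a win. This is a minimal sketch, assuming the same encoding as `generate_board` (-1 for a mine) and a NumPy boolean array for `revealed`. `reveal` and `is_won` are hypothetical helpers, and the flood fill that opens the neighbors of a 0 follows classic Minesweeper behavior rather than anything stated here.

```python
from collections import deque

import numpy as np


def reveal(board, revealed, r, c):
    """Open (r, c); return False if it is a mine. A 0 cell also opens its neighbors."""
    if board[r][c] == -1:
        return False
    n = board.shape[0]
    queue = deque([(r, c)])
    while queue:
        cr, cc = queue.popleft()
        if revealed[cr][cc]:
            continue
        revealed[cr][cc] = True
        if board[cr][cc] == 0:  # no adjacent mines, so every neighbor is safe to open
            for dr in (-1, 0, 1):
                for dc in (-1, 0, 1):
                    nr, nc = cr + dr, cc + dc
                    if 0 <= nr < n and 0 <= nc < n and not revealed[nr][nc]:
                        queue.append((nr, nc))
    return True


def is_won(board, revealed):
    """The game is won once every non-mine cell is revealed."""
    return bool(np.all(revealed | (board == -1)))


# 3x3 example with a single mine in the top-left corner
board = np.array([[-1, 1, 0],
                  [1, 1, 0],
                  [0, 0, 0]])
revealed = np.zeros_like(board, dtype=bool)
ok = reveal(board, revealed, 2, 2)  # opening a 0 cascades across the safe cells
print(ok, is_won(board, revealed))  # → True True
```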

ToT LLM Agent

We are now ready to build our ToT LLM agent to solve the Minesweeper puzzle. First, we need to define a function that asks an LLM such as GPT-4o for thoughts about the current board.

```python
import json

from openai import OpenAI

client = OpenAI()  # reads the OPENAI_API_KEY environment variable


def llm_generate_thoughts(board, revealed, flagged_mines, known_safe, k=3):
    # board_to_text: helper that renders the board as text (not shown in this excerpt)
    board_text = board_to_text(board, revealed)
    valid_moves = [[r, c] for r in range(BOARD_SIZE) for c in range(BOARD_SIZE)
                   if not revealed[r][c] and [r, c] not in flagged_mines]

    prompt = f"""
You are playing an 8x8 Minesweeper game.
- A number (0–8) shows how many adjacent mines a revealed cell has.
- A '?' means the cell is hidden.
- You have flagged these mines: {flagged_mines}
- You know these cells are safe: {known_safe}
- Your task is to choose ONE hidden cell that is least likely to contain a mine.
- Use the following logic:
  - If a cell shows '1' and touches exactly one '?', that cell must be a mine.
  - If a cell shows '1' and touches one already flagged mine, its other neighbors are safe.
  - Cells next to '0's are always safe.

You have the following board:
{board_text}

Here are all valid hidden cells you can choose from:
{valid_moves}

Step-by-step:
1. List {k} possible cells to click next.
2. For each, explain why it might be safe (based on adjacent numbers and known info).
3. Rate each move from 0.0 to 1.0 as a safety score (1 = definitely safe).

Return your answer in this exact JSON format:
[
  {{ "cell": [row, col], "reason": "...", "score": 0.95 }},
  ...
]
"""
    try:
        response = client.chat.completions.create(
            model="gpt-4o",
            messages=[{"role": "user", "content": prompt}],
            temperature=0.3,
        )
        content = response.choices[0].message.content.strip()
        print("\n[THOUGHTS GENERATED]\n", content)
        # The model may wrap the JSON in a markdown code fence; strip it (Python 3.9+)
        content = content.strip("`").removeprefix("json").strip()
        return json.loads(content)
    except Exception as e:
        print("[Error in LLM Generation]", e)
        return []
```

This may look a bit long, but the key part of the function is the prompt. It not only explains the rules of the game to the LLM (how to read the board, which moves are valid, etc.) but also asks for the reasoning behind each candidate move and tells the model how to score it. Each scored candidate is a branch of thought, and together they form our thought tree. In particular, the prompt contains this step-by-step guide:

1. List {k} possible cells to click next.
2. For each, explain why it might be safe (based on adjacent numbers and known info).
3. Rate each move from 0.0 to 1.0 as a safety score (1 = definitely safe).

These lines guide the LLM to propose several moves and to justify each one based on the current board. It then has to evaluate each candidate with a safety score from 0 to 1. The agent uses these scores to pick the best option.
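The prompt depends on `board_to_text`, whose code is not shown here. Below is a hypothetical version, a sketch that assumes the same encoding as `generate_board` and prints column and row indices so that the model can refer to cells as `[row, col]`. The notebook’s actual helper may format the board differently.

```python
import numpy as np


def board_to_text(board, revealed):
    """Render the player's view: revealed cells show their number, hidden cells show '?'."""
    size = len(board)
    lines = ["   " + " ".join(str(c) for c in range(size))]  # column indices
    for r in range(size):
        cells = [str(board[r][c]) if revealed[r][c] else "?" for c in range(size)]
        lines.append(f"{r}: " + " ".join(cells))  # row index, then the row
    return "\n".join(lines)


board = np.array([[-1, 1], [1, 1]])
revealed = np.array([[False, True], [True, True]])
text = board_to_text(board, revealed)
print(text)
```

Hidden cells are always printed as `?`, so mine positions never leak into the prompt.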

We now build an LLM agent that uses these generated thoughts to make a “real” move. Consider the following code:

```python
def tot_llm_agent(board, revealed, flagged_mines, known_safe):
    thoughts = llm_generate_thoughts(board, revealed, flagged_mines, known_safe, k=5)
    if not thoughts:
        print("[ToT] Falling back to baseline agent due to no thoughts.")
        # baseline_agent: the random-move agent defined earlier (not shown in this excerpt)
        return baseline_agent(board, revealed)

    # Basic filter: keep only thoughts whose cell lies on the board
    thoughts = [t for t in thoughts
                if 0 <= t["cell"][0] < BOARD_SIZE and 0 <= t["cell"][1] < BOARD_SIZE]
    # Highest safety score first
    thoughts.sort(key=lambda x: x["score"], reverse=True)

    for t in thoughts:
        if t["score"] >= 0.9:
            move = tuple(t["cell"])
            print(f"[ToT] Confidently choosing {move} with score {t['score']}")
            return move

    print("[ToT] No high-confidence move found, using baseline.")
    return baseline_agent(board, revealed)
```

The agent first calls the LLM to suggest several possible next moves, each with a confidence score. If the LLM fails to return any thoughts, the agent falls back to a baseline agent, defined earlier, that can only make random moves. If the LLM does propose moves, the agent applies a basic filter that excludes invalid ones, such as cells outside the board. It then sorts the remaining thoughts by confidence score in descending order and returns the best move if its score is at least 0.9. If none of the suggestions reaches this threshold, it falls back to the baseline agent.
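The filter-sort-threshold step can also be pulled out into a pure function, which is easy to test without calling the API. This is a minimal sketch: `select_move` is a hypothetical helper that returns `None` to mean “use the baseline”. Unlike the agent above, it also drops malformed entries and cells that are already revealed, which is an addition and not part of the original agent.

```python
BOARD_SIZE = 8   # as in the 8x8 games played here
THRESHOLD = 0.9  # same confidence cut-off as tot_llm_agent


def select_move(thoughts, revealed, threshold=THRESHOLD):
    """Return the best (row, col) from LLM thoughts, or None to signal 'use the baseline'."""
    valid = []
    for t in thoughts:
        cell = t.get("cell")
        # Drop malformed entries, off-board cells and cells that are already open
        if not (isinstance(cell, (list, tuple)) and len(cell) == 2
                and all(isinstance(x, int) for x in cell)):
            continue
        r, c = cell
        if 0 <= r < BOARD_SIZE and 0 <= c < BOARD_SIZE and not revealed[r][c]:
            valid.append((float(t.get("score", 0.0)), (r, c)))
    if not valid:
        return None
    score, move = max(valid, key=lambda pair: pair[0])  # first highest-scoring move
    return move if score >= threshold else None


revealed = [[False] * BOARD_SIZE for _ in range(BOARD_SIZE)]
revealed[0][0] = True
thoughts = [
    {"cell": [0, 0], "reason": "already open", "score": 0.99},
    {"cell": [9, 9], "reason": "off the board", "score": 0.98},
    {"cell": [2, 3], "reason": "next to a 0", "score": 0.95},
    {"cell": [5, 5], "reason": "a guess", "score": 0.40},
]
print(select_move(thoughts, revealed))      # → (2, 3)
print(select_move(thoughts[3:], revealed))  # → None (0.40 is below the threshold)
```

With this split, the agent only needs to call the LLM, pass the result to `select_move`, and fall back to the baseline when it gets `None`.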

Play

We now play a standard 8×8 Minesweeper board with 10 hidden mines. We played 10 games and reached an accuracy of 100%! Please check the notebook for the full code.

Conclusion

ToT prompting gives LLMs such as GPT-4o more reasoning ability, going beyond fast, intuitive thinking. We applied ToT to Minesweeper and got good results: 100% accuracy over 10 games. This example suggests that ToT can turn LLMs from chat assistants into problem solvers capable of real logical reasoning.